Trump Voice AI in 2026: Navigating the Risks and Rewards

Explore the world of Trump voice AI technology. Learn how these vocal clones are created, their ethical implications, and their potential uses.

Introduction: From Meme to Reality

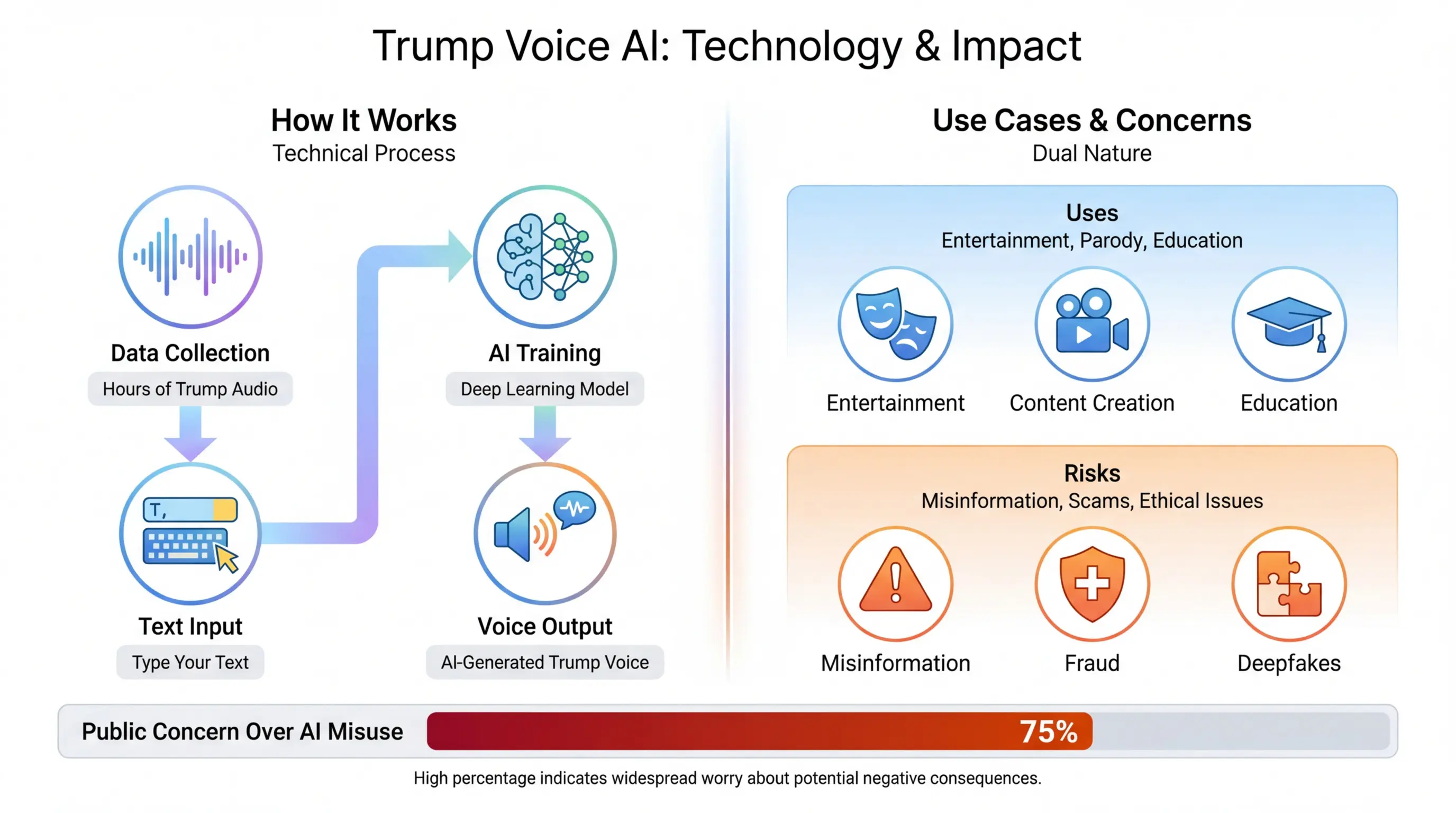

People have long loved exaggerating politicians in comic sketches, but now the joke has become reality. Trump voice AI turns simple typed words into unmistakable presidential tones, blurring the line between satire and deep-fake menace. A clip that plays on your feed can feel authentic: “Congratulations, we will make America great again,” spoken with an unmistakable cadence. Yet behind the staggering realism lies a complex web of data, algorithms, and legal gray areas. Understanding how this technology works and why it matters is essential for creators, regulators, and voters alike.

When a novice types a short script and hears the trademark pauses and inflections, their first thought is, “That’s almost too good.” This reaction is no accident. The technology that powers these voices is trained on hours of audio over millions of optimization steps, and it outputs a waveform that mimics the speaker’s pitch, speed, and subtle emotional inflections. Today, we break down each part of the journey, from data collection to public policy, and look at implications that reach far beyond the Twitter feed.

Consider a small content creator who wants to add humor to a TikTok. They compose a quick script, paste it into a voice generator, and within seconds a clip surfaces that might go viral. The same tool that fuels jokes can drive misinformation. A voter might encounter a fabricated speech that sounds like a candidate’s voice, causing confusion and eroding trust in the political ecosystem. The stakes are high, and the tools are improving at lightning speed.

- What will you learn today? A panoramic view of Trump voice AI from technical foundations to societal impact.

- Who will benefit? Creators, marketers, educators, regulators, and anyone who wants to stay ahead of AI ethics.

- Why should you care? The same algorithms that make memes funny can spin deception at scale. Understanding them is a prerequisite for responsible use.

What is Trump Voice AI?

Trump voice AI refers to software that replicates Donald Trump’s voice through artificial intelligence. This involves two main steps: gathering audio samples and training a neural network until it can render any text as if spoken by Trump. The distinction between mere dubbing and deep-learning-based cloning is crucial. Dubbing reuses an existing recording, while cloning can produce brand-new speech from a new script. The voice model learns stylistic markers such as his New York accent, the way he elongates certain words, and the particular rhythm that makes his speeches unmistakable.

Understanding what makes a voice sound like Trump requires attention to three cues: pitch range, characteristic pauses, and emphatic bursts. A successful model must capture these subtleties to pass a listening test. Just as a person recognizes a friend by their distinctive laugh, the algorithm identifies the spectral fingerprints embedded in human speech, turning data into an audible signature.

Because the voice is generated, each clip that users share can sound as if it was recorded by the real Donald Trump. That’s why the technology is both celebrated and feared: celebrated for its ability to create witty content, feared for its potential to poison public discourse. The boundary between “fun” and “dangerous” is thin and often depends on intent and transparency.

The rise of capable voice generation has also spurred new terminology. “Deepfake” has become shorthand for fake audio that sounds real. However, Joel Kyes, the voice-clone engineer behind several open-source models, argues that calling everything a deepfake is misleading, because the creation process varies significantly across platforms.

- Data capture: hours of speeches, interviews, debates as training fodder.

- Model architecture: state‑of‑the‑art transformer or recurrent neural networks.

- Generated output: full‑sentence audio that can be rendered live or saved.

How Does Trump Voice AI Work?

At its core, the system is built to fool the ear. It learns from a limited set of recordings and generalizes to any text. The process starts with collecting raw audio, then moves into deep-learning training, and ends with the delivery of a final synthetic waveform. Each stage has its challenges: unbalanced datasets, over-fitting, and compliance with ethical guidelines.

- Audio ingestion: Thousands of clips of Trump’s speeches, from televised debates to rallies, form the raw data. The dataset is curated for quality, and background noise that could mislead the model is removed.

- Feature extraction: The model converts raw audio into frequency-domain features, capturing spectral envelopes, pitch, and energy. Further processing marks the subtle variations in speed and pausing that make a voice distinctive.

- Neural network training: Several hundred epochs of training adjust internal weights so that the network learns to “speak in Trump’s style.” The learning objective is to minimize an error measure, such as the RMSE, between generated audio and target samples. In practice, the model learns a mapping from text to an audio waveform.

- Synthesis: The trained model accepts plain text as input, tokenizes it, and runs it through the decoder, which produces a waveform that is then post‑processed with denoising and speed‑normalization to match Trump’s natural cadence.
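The feature-extraction and training-objective steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not any platform's actual pipeline: the frame length, hop size, log compression, and the helper names `frame_signal`, `log_spectrogram`, and `rmse` are all assumptions chosen for the example, and the inputs are synthetic tones rather than speech.

```python
import numpy as np

def frame_signal(signal, frame_len=400, hop=160):
    """Slice a 1-D signal into overlapping frames, one frame per row."""
    n_frames = 1 + (len(signal) - frame_len) // hop
    idx = np.arange(frame_len)[None, :] + hop * np.arange(n_frames)[:, None]
    return signal[idx]

def log_spectrogram(signal, frame_len=400, hop=160):
    """Frequency-domain features: log-compressed FFT magnitude per frame."""
    frames = frame_signal(signal, frame_len, hop) * np.hanning(frame_len)
    magnitude = np.abs(np.fft.rfft(frames, axis=1))
    return np.log1p(magnitude)

def rmse(predicted, target):
    """Root-mean-square error between two equally shaped feature arrays."""
    return float(np.sqrt(np.mean((predicted - target) ** 2)))

# Toy stand-ins for recordings: pure tones, NOT speech (assumption).
sr = 16000
t = np.arange(sr) / sr
target = np.sin(2 * np.pi * 220.0 * t)
close_guess = np.sin(2 * np.pi * 222.0 * t)
far_guess = np.sin(2 * np.pi * 440.0 * t)

target_feats = log_spectrogram(target)
print("feature matrix shape:", target_feats.shape)
print("RMSE, close guess:", rmse(log_spectrogram(close_guess), target_feats))
print("RMSE, far guess:  ", rmse(log_spectrogram(far_guess), target_feats))
```

A training loop would repeatedly adjust model weights to push an error like this down; the sketch only shows how the error is measured, and the guess that is closer in frequency scores the lower RMSE.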

When executed perfectly, the final product is indistinguishable from a human recording. But the system is only as good as its training data. Inadequate samples or uneven speech coverage can produce artifacts. Users who adjust intensity or add background music must do so carefully to avoid misleading their audience or violating copyright law.

Why this matters: a technically polished synthetic voice could bypass standard fact-checking protocols. Because it mimics a trusted source, false statements or partisan rumors can spread faster than an obvious joke would. Without appropriate labeling and context, the output misrepresents its own authenticity.

Key Technical Terms Explained

Terms such as random forests, Markov chains, and BERT come from the wider machine-learning vocabulary, and they can make voice cloning sound more mysterious than it is. For a non-technical reader, think of a voice clone as an advanced voice recorder that can turn what you type into a nearly perfect imitation of a target voice. Subtle acoustic details, such as the resonance of the speaker’s vocal tract, are what separate a true clone from a simple replay of an old clip.

| Feature | Description | Why It Matters |
|---|---|---|
| Pitch Variation | Tracks which frequencies dominate the speech over time. | Lets the voice sound natural instead of robotic. |
| Timing & Pauses | Captures the rhythm of human speech. | Keeps the speaker recognizable. |
| Prosody Modeling | Captures how words rise or fall in tone. | Helps inject emotional nuance. |
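To make the pitch and timing rows of the table concrete, here is a small sketch of how those two quantities can be measured from a waveform. It is an illustrative assumption, not a description of any specific product: pitch is estimated from the autocorrelation peak of a single frame, pauses are counted as low-energy frames, and the function names, thresholds, and synthetic test clip are all made up for the example.

```python
import numpy as np

def estimate_pitch_hz(frame, sr, fmin=60.0, fmax=400.0):
    """Estimate the pitch of one frame from the peak of its autocorrelation."""
    frame = frame - np.mean(frame)
    corr = np.correlate(frame, frame, mode="full")[len(frame) - 1:]
    lag_min = int(sr / fmax)   # shortest period we accept
    lag_max = int(sr / fmin)   # longest period we accept
    best_lag = lag_min + int(np.argmax(corr[lag_min:lag_max + 1]))
    return sr / best_lag

def pause_fraction(signal, frame_len=400, threshold=0.01):
    """Fraction of non-overlapping frames whose RMS energy is below threshold."""
    n_frames = len(signal) // frame_len
    frames = signal[: n_frames * frame_len].reshape(n_frames, frame_len)
    rms = np.sqrt(np.mean(frames ** 2, axis=1))
    return float(np.mean(rms < threshold))

# Synthetic clip (assumption): 1 s of a 125 Hz tone followed by 0.5 s of silence.
sr = 16000
t = np.arange(sr) / sr
tone = 0.5 * np.sin(2 * np.pi * 125.0 * t)
clip = np.concatenate([tone, np.zeros(sr // 2)])

pitch = estimate_pitch_hz(clip[:1024], sr)
pauses = pause_fraction(clip)
print(f"estimated pitch: {pitch:.1f} Hz, silent fraction: {pauses:.2f}")
```

Real speech is far messier than a pure tone, so production systems typically use more robust pitch trackers, but the idea of turning rhythm and pitch into numbers a model can learn from is the same.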

Popular Uses of Trump Voice AI

Because it can generate a full speech instantly, users repurpose Trump voice AI across several domains. Below are field‑established and emerging use cases that highlight opportunities and pitfalls.

- Entertainment & Meme Culture: Creators on TikTok use it to make jokes, answer absurd questions, or “sing” with Trump’s vibe. The humor comes from juxtaposing the voice with ordinary topics.

- Marketing & Advertisement: Brands launch parody ads that mimic a political figure’s tone for a comedic edge. This practice gains fast attention but often triggers scrutiny.

- Education & Demonstration: Teachers illustrate the science of speech synthesis or debate ethics by showing how AI can replicate a public figure. Such demonstrations become hands-on labs for students.

- Journalistic Investigation: Fact-checking teams use the tool to learn how to spot deepfakes, generating known synthetic samples to stress-test their detection models.

- Cybersecurity & Voicemail Scams: Malicious actors produce automated robocalls that impersonate Trump, trying to manipulate voters or extort money.

- Political Persuasion: Activist groups create rally speeches or press releases in real time to stimulate apathy or bias.

While most use cases are harmless parody, the danger lies in how hard it can be for authorities to separate real from synthetic. Even a well-timed meme can influence opinions if it appears to come from a credible source. The technology’s impact is therefore layered across the whole ecosystem.

Examples illustrate the range: In 2024, a campaign group produced a three-minute audio of an “unscheduled” Trump announcement about taxes, which went viral on Twitter. Though the clip was labeled, many users still believed it to be genuine and spread it further, undermining trust in official channels.

Its Rise in the Meme Economy

The fact that a single sentence can go viral if it’s delivered in a recognizable voice shows how human attention is manipulated. People react strongly to the familiar cadence. In the digital economy, voice memes become content currency. The cost of creating a meme via AI is a few clicks, not months of production.

Popular Platforms and Tools

When choosing a platform to create Trump voice AI, you’ll find a mix of free tools, open‑source APIs, and premium commercial services. Each offers a different balance of fidelity, control, and licensing.

- ElevenLabs Voice Studio: The industry standard, with an easy UI, adjustable pitch, and voice masking options. Offers both a free tier (short clips) and paid plans with higher quality.

- FineVoice Platform: Thousands of voices, but their Trump clone requires a subscription. Good for batch processing.

- TruVoice API: A flexible developer interface for embedding cloning in a video app. Requires API key and credit usage.

- HuggingFace Mirror: Free open‑source models. Great for educational labs, but requires local computing power and some hacking.

- Cartesia Reactor: Combines voice cloning with live video avatars. Offers real‑time Twitch streaming features in addition to offline output.

Because each platform handles licensing differently, creatives must read the fine print. The legal risk is higher when you do not hold the rights to use someone’s voice. Even parody can run afoul of publicity or intellectual property laws if the output is distributed commercially.

- Key considerations for brand use: Availability of commercial licenses, customizable vocal parameters, and any fixed time limit on clip output.

- For hobbyists and educators: Look for platforms that allow free access to more than 30 seconds of audio.

- Compliance: Confirm if the tool includes an authenticity watermark or a “generated by” statement to avoid false‑impression claims.

Benefits of Trump Voice AI

While the possible harm often overshadows the positive aspects, the tools also open creative pathways that were previously expensive or impossible. The benefits spill over into several sectors, each with its own use case.

- Creative Storytelling: Writers can layer Trump’s voice into narratives to add satire, humor, or political intrigue. The ease of production means more stories can be tested before publishing.

- Marketing Innovation: Ads run faster when a brand can prototype a voice line in minutes rather than weeks of recording sessions.

- Accessibility: Spoken words help students with dyslexia. By customizing voice parameters, learners can choose a narrator tone that appeals to them.

- Educational Experimentation: Instructors can demo machine learning concepts in a hands‑on way, engaging students with tangible outputs.

- Social Media Performance: Viral videos often rely on authenticity; a well‑timed voice can boost a post’s shareability.

Consider an educator who wants to present a historical speech. By generating that speech in Donald Trump’s voice using a high-fidelity model, the professor can illustrate how the same text might have sounded if he had delivered it. The creative richness outweighs the modest risk if proper licensing is followed.

Common Misconceptions

One persistent myth is that high-quality cloning can be done freely without consent. In reality, many tools impose usage restrictions, especially for political figures. Another misconception is that synthetic speech can only imitate informal talk. In truth, advanced models handle formal speeches and casual chatter with equal fidelity.

Risks and Ethical Concerns

Despite its playful side, voice cloning can sow fear and mistrust. The risks affect several groups: voters, journalists, and the wider online community. The main dangers are outlined below.

- Political Manipulation: The ease of delivering a political message in Trump’s voice means that any actor, partisan or not, could impersonate a candidate and skew public opinion. During the 2024 primaries, bot accounts flooded forums with synthetic audio of endorsements that were never actually given.

- Misinformation & False Narratives: Bad actors can spin harmful narratives wrapped in convincing audio. For instance, a fabricated revelation about tax policy can spread faster when paired with a Trump voice, because the voice lends it apparent credibility.

- Reputational Damage: Individuals with abusive or extremist agendas can damage reputations by circulating fabricated audio in which Trump appears to endorse or attack someone.

- Legal and Privacy Issues: The technology can run afoul of personal rights. In many jurisdictions, right-of-publicity and related laws protect a voice as a recognizable trait, so commercial exploitation typically requires a license.

- Social Damage: When bad actors hijack real accounts and post fabricated audio, the public loses confidence in the authenticity of online media.

Every risk is amplified as the technology standardizes and spreads. Think about a single clip created by a small startup becoming a flashpoint in a major news event. The ripple effect can last months and, if not addressed early, may damage public trust permanently.

Case Study: 2025 Fake Robocalls

In early 2025, a thread on a political forum surfaced a set of robocalls that sounded exactly like Trump, promising early voting instructions. The calls triggered panic and resulted in a spike in uncertain ballots. Police later traced the calls to a small Canadian company that had no intention of political messaging. The incident highlighted the urgent need for distributed identity verification mechanisms.

Regulations, Laws, and Safeguards

Policy makers, tech giants, and civil society groups are all racing to establish safeguards. The legal environment remains patchy, but a clear trajectory is emerging.

- Act on Voice Cloning (2024 Signature Act): Created by the U.S. Senate, it criminalizes the creation of realistic deepfakes used for fraud without the consent of the individuals depicted. It refers directly to Trump voice AI to set a precedent for political figures.

- EU Digital Services Act: Requires platforms that host voice content to add notices about synthetic media and encourage user verification. Members must provide an AI content filter or watermark.

- State-level Laws: Tennessee enacted the Ensuring Likeness, Voice, and Image Security (ELVIS) Act in 2024 to protect artists from unauthorized voice cloning and to set rules for licensed use. The law has been referenced by Texas, Colorado, and Michigan.

Where regulators have jurisdiction online, they aim to limit the spread of illicit voice content through mandatory watermarking and click-through verification. If developers add a watermark such as “Generated by ElevenLabs” to both audio and video, it signals to the audience that the content is synthetic. Enforcement remains a challenge because watermarks can be stripped. Still, the combined legal and technical barriers raise the cost of deep-fake creation, which should reduce its prevalence.
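As a rough illustration of what an inaudible audio watermark involves, the sketch below adds a low-amplitude pseudo-random pattern derived from a secret key and later detects it by correlation. This is a textbook spread-spectrum toy under stated assumptions, not the scheme used by ElevenLabs or any other vendor: the function names, the key, the `strength` value, and the synthetic host signal are all hypothetical.

```python
import numpy as np

def watermark_pattern(key, n):
    """Pseudo-random +/-1 sequence derived from a secret integer key."""
    rng = np.random.default_rng(key)
    return rng.choice([-1.0, 1.0], size=n)

def embed_watermark(audio, key, strength=0.02):
    """Add the keyed pattern to the audio at low amplitude."""
    return audio + strength * watermark_pattern(key, len(audio))

def detect_watermark(audio, key, strength=0.02):
    """Correlate with the keyed pattern; a high score means the mark is present."""
    score = float(np.mean(audio * watermark_pattern(key, len(audio))))
    return score > strength / 2, score

# Synthetic host signal (assumption): 3 s of a 180 Hz tone standing in for audio.
sr = 16000
t = np.arange(3 * sr) / sr
host = 0.3 * np.sin(2 * np.pi * 180.0 * t)

SECRET_KEY = 2026  # hypothetical key held by the generating platform
marked = embed_watermark(host, SECRET_KEY)

found_marked, score_marked = detect_watermark(marked, SECRET_KEY)
found_clean, score_clean = detect_watermark(host, SECRET_KEY)
found_wrong_key, _ = detect_watermark(marked, SECRET_KEY + 1)
print("marked clip flagged:  ", found_marked)
print("clean clip flagged:   ", found_clean)
print("wrong key finds mark: ", found_wrong_key)
```

A mark this simple would not survive re-encoding, resampling, or deliberate removal, which is exactly the enforcement gap described above; production watermarks are designed to be considerably more robust.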

Proactive Measures for Developers

- Transparent Disclosure: Creators should embed a disclosure in the file’s metadata (for example, an MP3 tag) or add an on-screen disclaimer whenever a clip is AI-produced.

- Fair Use Thresholds: Developers should offer licensing tiers for commercial usage and restrict unrestricted usage at the free level.

- Rights Recognition: Every use of a cloned voice requires the creator to respect the political figure’s rights. The platform can automate license purchases through a built-in booking system.

These steps reduce legal uncertainty and educate users about responsible consumption. Over time, effective policy, combined with well-designed user expectations, can transform the technology from a threat to an asset.
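One lightweight way to approach the disclosure measure above is a machine-readable record that travels with the clip. The sketch below builds such a record with only the Python standard library and ties it to the exact audio bytes through a SHA-256 hash. The field names, the tool name, and the license identifier are hypothetical; this is not a standard format, and real deployments might instead write ID3 tags or use a provenance standard.

```python
import hashlib
import json
from datetime import datetime, timezone

def build_disclosure(audio_bytes, tool_name, license_id):
    """Create a disclosure record bound to the exact audio content."""
    return {
        "ai_generated": True,
        "generator": tool_name,        # hypothetical tool name
        "license_id": license_id,      # hypothetical license reference
        "sha256": hashlib.sha256(audio_bytes).hexdigest(),
        "created_utc": datetime.now(timezone.utc).isoformat(),
    }

def matches_disclosure(audio_bytes, disclosure):
    """Check that a clip is the one the disclosure record describes."""
    return hashlib.sha256(audio_bytes).hexdigest() == disclosure["sha256"]

# Placeholder bytes standing in for the contents of an MP3 file (assumption).
audio = b"\x00\x01placeholder-audio-bytes"
record = build_disclosure(audio, "example-voice-tool", "LIC-0001")

print(json.dumps(record, indent=2))
print("original clip matches:", matches_disclosure(audio, record))
print("edited clip matches:  ", matches_disclosure(audio + b"edit", record))
```

Because the hash changes whenever the audio is edited, a record like this can show that a clip was altered after disclosure, although it cannot stop someone from simply discarding the record.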

The Future Landscape of Trump Voice AI

Predicting the next decade is always speculative, but a few patterns suggest a direction for voice cloning.

- Hyper-Realism: Systems that require only a short audio sample (three minutes) will be able to produce near-perfect fidelity. These models will incorporate prosody, breathing, and emotional states with low error.

- Real-time Conversion: Voice assistants will integrate deep learning to produce cloned voices on the fly during a conversation, opening new possibilities for live translation.

- Cross‑modal Integration: Combining voice cloning with computer vision to produce consistent facial animation in avatars. This synergy will push satire beyond what text alone allowed.

- Decentralized Governance: Cloud‑based collaborative learning networks will incorporate auditing protocols, allowing public oversight and preventing rogue generation.

- Ethical Self-Regulation: Major AI vendors are expected to adopt independent ethics boards that audit each model’s compliance with the Act and the EU Digital Services Act. Trust should grow as these audits build client confidence.

There is also potential for parody to evolve into tailored, personalized advertising campaigns for political events. Marketers might use a local speaker’s voice to generate dynamic content for each user segment. This combination of personalization and voice realism could boost campaign outreach, creating crisp, low-cost creatives with unprecedented reach.

Real‑World Impact: A Case Analysis

To bring abstract points to life, consider a scenario from a small-town election in 2025. A Democratic candidate needed to clarify a healthcare policy. A local radio host created a short clip explaining the policy with Trump voice AI, but the host misread the candidate’s “no” as “yes.” The clip was mistakenly shared in a local Facebook group with more than 7,000 members. The false statement spread within hours, leading to a mini-scandal that took the candidate’s office a week to debunk. The incident showed how a single synthetic clip can undermine political credibility.

From that event, we distill lessons:

- Always label synthetic content: The simplest step to prevent harm. A single line of disclosure would have prevented much of the damage.

- Check the source before spreading: Even trusted internal teams misidentified the audio as authentic.

- Use verification tools: Deploy open‑source detection models to confirm authenticity before publication.

As marketing teams begin to adopt new voice generation models, training on verification processes becomes essential. These steps minimize miscommunication and support ethical marketing.

What to Do If You Want to Use Trump Voice AI

For creators who want to adopt voice cloning responsibly, the process is surprisingly straightforward.

- Choose your platform wisely: Pick a provider that offers automatic watermarking and a clear licensing mechanism.

- Verify your usage rights: Before you spend cash or time, confirm that the voice is free from IP restrictions for your intended use. Documentation matters.

- Label the content transparently: Place an audio watermark or an on‑screen “AI‑generated” banner for video content.

- Train your audience: If you’re distributing content externally, add an introductory sentence clarifying that the voice is synthetic.

- Invest in moderation tools: Use the platform’s built‑in methods to check that your content does not cross into harmful territory.

Think of the final clip as a tool – strong and versatile, but with the potential to mislead if used inappropriately. By following these five steps, you can integrate Trump voice AI into your workflow while maintaining trust and legality.
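The five steps above can also be turned into a simple pre-publication gate. The sketch below is one hypothetical way a team might encode the checklist so that a clip cannot be released while any step is outstanding; the class and field names are invented for the example and do not correspond to any platform's API.

```python
from dataclasses import dataclass, asdict

@dataclass
class ClipRelease:
    """One flag per step of the checklist, in the same order."""
    platform_has_watermarking: bool
    usage_rights_verified: bool
    labeled_ai_generated: bool
    audience_notice_included: bool
    moderation_check_passed: bool

def missing_steps(release):
    """Return the names of the checklist steps that are still outstanding."""
    return [name for name, done in asdict(release).items() if not done]

def ready_to_publish(release):
    """A clip is ready only when every step has been completed."""
    return not missing_steps(release)

draft = ClipRelease(
    platform_has_watermarking=True,
    usage_rights_verified=True,
    labeled_ai_generated=False,
    audience_notice_included=False,
    moderation_check_passed=True,
)
final = ClipRelease(True, True, True, True, True)

print("draft missing:", missing_steps(draft))
print("draft ready:", ready_to_publish(draft))
print("final ready:", ready_to_publish(final))
```

Encoding the checklist this way keeps the decision explicit and auditable, though the flags are only as trustworthy as the people or tools that set them.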

Conclusion: Balancing Fun and Responsibility

At this juncture, the debate is far from settled. While this new audio technology offers tremendous potential for creativity, it also provides a weapon capable of eroding public trust. Reaping the rewards means walking a tightrope between innovation and responsible use. You, as a content creator or even a consumer, must navigate it, knowing both the shine and the shadows that come with Trump voice AI.

For creators, use it sparingly, and never for the purpose of deception. For regulators, make watermarking mandatory and enforce licensing checks. For the wider public, recognize the signs of fabricated audio and verify before believing or sharing. The intersection of AI voice technology and political discourse is a fertile ground where several stakeholders must cooperate to raise the bar for ethics and accuracy.

When you get ready to experiment, remember that your voice can preserve or destroy trust. A well‑crafted clip might earn laughs, but an unchecked one might trigger a political firestorm. Choose wisely, test responsibly, and ask for permission when in doubt.

Frequently Asked Questions (FAQs)

What exactly is Trump voice AI?

Trump voice AI is a type of deep-learning voice clone that can convert typed or spoken text into audio that sounds like Donald Trump. It relies on large models trained on hours of public speeches, debates, and interviews to learn Trump’s cadence, pitch, and speaking style. The end result is a synthetic voice that listeners find almost indistinguishable from the real one. The benefit is instant content creation across social media, marketing, or education, but with an increased risk of misinformation if used irresponsibly.

Can I develop this technology myself?

Building a credible voice clone requires high-quality data and a sophisticated model architecture. You would need a clean, continuous audio dataset of Trump’s speech, substantial GPU resources for training a transformer or RNN model, and considerable expertise in signal processing. The architecture must be tuned for prosody, breathing patterns, and pauses. While you could technically build a prototype, practical implementation involves significant costs and the risk of infringing on intellectual property. For most creators, the best path is to choose a commercial tool that offers licensing and watermarking.

How are regulatory frameworks shaping the use of Trump voice AI?

In the United States, the 2024 Senate Voice Cloning Act regulates automated phone calls, targets deepfakes used for fraud, and restricts unlicensed usage. The EU’s Digital Services Act asks platforms to flag synthetic content and let users verify authenticity, while several U.S. states have added their own licensing rules for personalized voice clones. Enforcement remains uneven, especially for short-form or offline usage, but taken together the requirements are tightening, demanding transparency and written consent from voice owners.

Is there a safe way to release a Trump‑style voice clip?

Yes. Providers that comply with policy typically embed an audible marker (e.g., “generated by ElevenLabs”) into the audio or show an on‑screen watermark in video format. Toggling a visible label, adding a short textual statement, and ensuring the clip is categorized as parody or satire all reduce the chance of the clip being taken at face value. Always cross‑check the license agreement: if you intend commercial distribution, you must secure the license and pay any required royalties.

What are the consequences of distributing a fake Trump audio without proper labeling?

From a legal perspective, distribution can be illegal if you impersonate the speaker without consent or if the content is meant to mislead people into believing it is genuine. States can impose civil liability or criminal penalties. Publicly, you risk brand damage, reputational harm, and loss of trust. In political contexts, fake audio can influence opinions and voting behavior, an outcome lawmakers monitor and may answer with stricter restrictions or penalties. Ethically, respecting audience trust is crucial, because listeners’ existing biases make convincing fake audio easy to believe.