Can Gradescope Detect ChatGPT in 2026? The Honest Truth

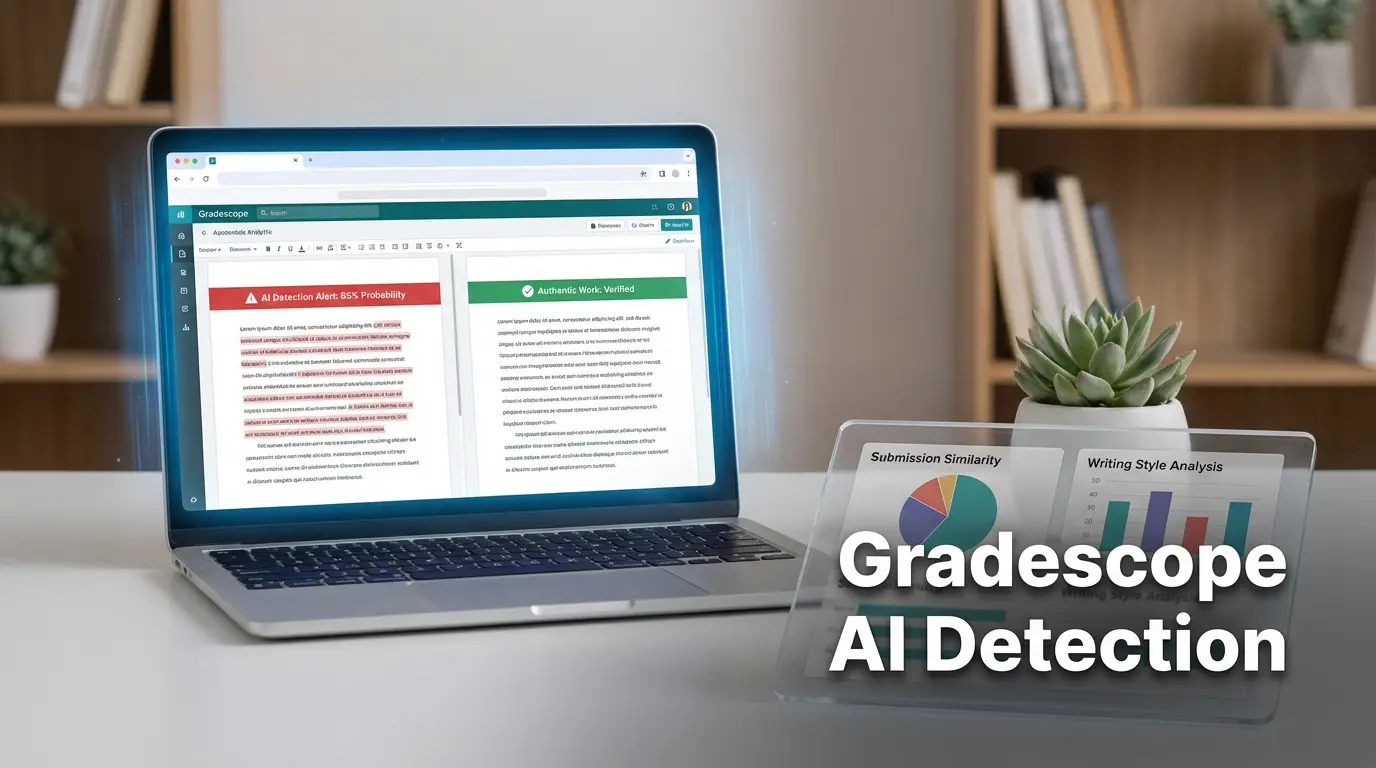

Learn how Gradescope detects ChatGPT use in student submissions. Explore the methods, ethical implications, and how to maintain academic integrity.

Introduction

Classrooms in the digital age are no longer confined to chalkboards and lecture halls. Students now bring laptops, tablets, and even powerful AI tools to the back of the classroom. Among those tools, ChatGPT has sparked a whirlwind of debate. How does a teacher find out if a submission was written by a student or generated by an algorithm? One tool that many instructors rely on is Gradescope. It became a staple for streamlining grading, but the question remains: can Gradescope detect ChatGPT and other AI‑generated content? Understanding this capability is vital for both educators and learners because it affects how grades are awarded, how integrity is maintained, and how students learn to think for themselves.

Over the past year, universities have introduced additional AI detection features, but the core platform of Gradescope has remained unchanged. Many questions have emerged: can the system read the subtle differences between a human voice and an AI voice? Will a teacher notice when a student’s writing tone becomes unnaturally smooth? Are there hidden tricks that might catch or miss AI-generated text? The answers may seem straightforward, but underneath them lie nuances that could determine an entire career.

This long read takes you through every layer of the problem. It starts with an easy-to-understand overview of what Gradescope is, dives into how AI detection works across the platform, explains real‑world scenarios, highlights pros and pitfalls, and offers practical tips for students and teachers. By the end, you’ll know whether Gradescope can spot ChatGPT, what additional tools can be used, and how to navigate academic integrity in a world full of intelligent assistants.

What Exactly Is Gradescope?

Gradescope is a digital grading platform that many colleges use to store, assess, and grade student work. It offers features like auto‑grading, rubric feedback, and the ability for students to submit assignments later in the week. However, its name is often associated more with the “Grade” part than with any kind of detection. When you look at its documentation, you’ll see that the primary goal is to reduce the time an instructor spends on marking rather than to investigate cheating. The real trick lies in how it stores and manages data.

When a student uploads a file, the platform packages it as a record with metadata—date, time, file type, and the user who uploaded it. That information is easily searchable by the instructor but no analysis engine sits behind it to scan for content style or language usage. In other words, the data is available; the interpretation is not. In contrast, a plagiarism detector like Turnitin will scour the same file for matching paragraphs from a global database.

Think of Gradescope as a medical chart: it contains the patient’s records, but it does not diagnose a disease. That role is left to the physician. Similarly, many institutions pair Gradescope with Turnitin or other AI detectors to add the diagnosis functionality.

The platform’s open architecture actually makes it easier to integrate. By plugging in a third‑party API, a university can add an extra verification step at the end of the grading workflow. But the default setup, a clean, user-friendly interface, does not have that extra diagnostic layer. The difference matters because a system that does not look is a system that cannot flag AI content automatically.

Educators and students often misinterpret the platform’s capability out of curiosity. A simple “Does Gradescope detect AI? Yes!” might pop up on a forum because someone’s professor manually uses a separate detector, but in the base version the answer is “no.” That misperception can misguide students into acting as if protection is built-in, when it’s a matter of setting up the tools correctly.

In summary, Gradescope is not a detective. It is a sturdy vessel that carries submissions, not a magnifying glass that scrutinizes their content.

Can Gradescope Detect ChatGPT‑Generated Content?

No, the platform itself does not detect ChatGPT or any other AI‑generated work. It has no trained models to look for AI signatures in text or code. The architecture is not designed for that; it is created for grading. That said, there is an alternative route to detection that many schools have embraced, and that is the use of Turnitin or standalone AI detectors.

When a teacher uses a Turnitin integration, the assignment is automatically sent to Turnitin’s text‑similarity engine. Turnitin introduced an AI detection feature in 2023 that reports an “AI probability” percentage. This percentage is computed by a machine‑learning model that has learned to differentiate AI‑generated document styles from human writing patterns. The difference is subtle: for instance, a generated text might feature repeated phrasing that humans rarely repeat.

A visual story helps illustrate that difference: imagine two students writing essays on the same topic. One writes with minor errors, personal anecdotes, and a voice that shifts depending on the paragraph. The other writes fluidly, with sentences that vary in length systematically, almost like a machine learned a pattern. Turnitin, being a statistical engine, sees the second pattern as an AI signature and flags it, whereas the first one passes.

Gradescope merely hands over the same file to Turnitin. If a school does not have the integration, or if the teacher disables Turnitin for a particular assignment, the only thing the student sees is a standard grading interface with rubrics. There is no automatic indicator of AI use. In that case Gradescope’s own reporting remains blank.

In practice, a teacher who notices unusual patterns during manual review won’t automatically get an AI‑flag. Instead, they will have to take the extra step and run the file through Turnitin or a dedicated detection engine. That extra step introduces latency and requires permission.

In short, when people ask “Can Gradescope detect ChatGPT?” the short answer is “no.” The potential for detection only exists when a secondary engine is added into the workflow.

Gradescope’s Core Features That Can Signal Suspicion

Automated Code Similarity

The first layer of detection comes from the built‑in code similarity scanner. It is designed for programming courses where plagiarism of code is a major concern. When two submissions share a high percentage of similar structure or exactly matching lines, the system annotates them with a “matching region score.” The algorithm looks for identifiers, patterns, and functional sections.

This feature is useful when students copy the same piece of code generated by AI. If two students replicate a snippet from ChatGPT, the similarity checker will highlight it. It is not a detection of AI but of duplicate code. The presence of duplicate code can lead to a discussion of potential AI use, especially if both submissions are 100% identical.

The tool is blind to plagiarism outside of the same class. If a student receives a snippet from the internet and modifies it, there is little chance the system will detect it because the comparisons are limited. If your class used a shared repository, more matches may surface, but the chance remains low.

In a real classroom, teachers often set thresholds. For example, if similarity exceeds 35% the work gets flagged for immediate review. That threshold can catch accidental copying but also pick up code that a student rewrote from an AI source. The instructor then decides whether that similarity alone warrants a penalty or if it is part of a broader assignment standard.
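To make the threshold idea concrete, here is a minimal sketch in Python. It does not reproduce Gradescope's similarity algorithm, which is not specified here. It uses the standard library's `difflib.SequenceMatcher` as a stand-in, and the function names and the 35% cutoff are illustrative assumptions.

```python
from difflib import SequenceMatcher
from itertools import combinations

REVIEW_THRESHOLD = 0.35  # assumed cutoff, mirroring the 35% example in the text


def similarity(code_a: str, code_b: str) -> float:
    """Return a 0..1 similarity ratio between two source files."""
    # Compare line by line, ignoring blank lines and surrounding whitespace.
    lines_a = [ln.strip() for ln in code_a.splitlines() if ln.strip()]
    lines_b = [ln.strip() for ln in code_b.splitlines() if ln.strip()]
    return SequenceMatcher(None, lines_a, lines_b).ratio()


def flag_pairs(submissions: dict[str, str], threshold: float = REVIEW_THRESHOLD):
    """Return (student_a, student_b, score) for every pair above the threshold."""
    flagged = []
    for (name_a, code_a), (name_b, code_b) in combinations(submissions.items(), 2):
        score = similarity(code_a, code_b)
        if score > threshold:
            flagged.append((name_a, name_b, round(score, 2)))
    return flagged
```

A flagged pair only says that two files look alike. Whether that points to copying, a shared starter snippet, or a common AI source is still a judgment call for the instructor.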

We see this in practice when a CS professor sets up a final project on a data‑analysis assignment. A few students submit code that is almost identical because they used the same GPT‑4 snippet as a starting point. The code similarity tool flashes a red icon in their student view. The professor, however, knows that all students were given that snippet as a primer and decides to grade normally, but flags the work for discussion.

That system demonstrates the importance of context: the similarity score is only a tool, and its interpretation depends on human judgment.

Rubric‑Based Feedback & Auto‑Grading

Gradescope also offers an advanced rubric engine. Instructors can design detailed rubrics with sub‑criteria, provide exemplar responses, and instantly see which parts of a student answer meet each criterion. The auto‑grading engine, particularly for multiple choice or fill‑in‑the‑blank sections, can automatically calculate scores based on a pre‑set answer key.

These features significantly lower the instructor’s workload, but they do not examine language usage for AI patterns. If you are writing a short answer in a language exam, a rubric can award “full marks” if the answer satisfies the defined structure. That does not mean the answer is authentic. The rubric only checks form, not whether that form was copied from a language model.

Understanding the limits of the rubric system is vital for students. If they know a rubric only asks for a definition, they may decide to simply list terms without question. If it asks for nuance, instructors may still spot that the answer is too textbook‑like. In the end, the rubric serves as a bunch of boxes and the content is left to the instructor’s eye.

Similarly, auto‑grading for objective questions works great for standard MCQ exams, but it stops there. A question that tests comprehension may be answered with a line of code or an equation. If your answer is AI‑generated, the system still only checks whether the answer is right and issues a pass or fail. The reasoning or nuance behind it is ignored.

In practical terms, this means a naive, AI‑dependent student might rely on an AI engine for an entire essay and only worry about the rubric or auto grader. They may finish quickly, but the professor may still notice the writing style and flag it.

In summary, Gradescope’s core features accelerate grading but cannot spot the subtle fingerprint left behind by AI writing. The system’s lack of a content‑analysis engine means teachers must rely on other tools or manual checks, which is why many already use Turnitin.

External Tools That Can Detect AI-Generated Content

Turnitin’s Hybrid Plagiarism and AI Detection

Turnitin has evolved from a simple plagiarism checker to a multi‑faceted detection engine. It maintains an extensive database of academic content, websites, and previously submitted student essays. More recently, it added a machine‑learning filter that flags documents with text likely written by AI. The algorithm looks for features like repeated use of formal language, consistent sentence length, and uncommon phrase frequency patterns that machines tend to produce.

Why this matters is that many higher education institutions now bundle Turnitin with Gradescope or run their assignments through Turnitin’s API directly. The result is a neat “similarity report” and an accompanying “AI probability” bar that gives the teacher a quantitative hint. It acts like a safety net: if a submission looks like it has more than a 40% AI probability, the instructor gets a prompt to review it in detail.

A real-life case illustrates this: a teacher assigned a short essay for a media studies course. She was able to upload the assignment directly to Turnitin inside Gradescope. After the class submission, every file produced a similarity score less than 5%, but the AI probability showed an average of 35%. She flagged half of them for personal review. A follow‑up conversation and a rewrite among students prompted a new discussion about using AI ethically.

Turnitin only works on textual content. If you submit code, it will detect code duplicates but not internal logic patterns. Still, for prose-heavy assignments it can be a powerful check. The default Turnitin detection compares against an ever‑growing repository of sources, which helps if a student is simply re‑posting from an open‑AI site.

One advanced mode of Turnitin even allows instructors to set a custom “AI detection threshold.” That gives flexibility to adapt the tool to different disciplines: a philosophy instructor might tolerate higher AI probability for idea generation, whereas a STEM course might enforce stricter checks. The customization is one of the excellent things about Turnitin.

In conclusion, Turnitin’s current AI‑detection prowess adds a safety layer to Gradescope because many institutions use it as a plug‑in. Without this, the platform remains blind to AI usage.

Third‑Party AI Content Detectors

Other tools exist, such as GPTZero and Originality.AI, which operate on a similar principle to Turnitin but with focused AI pattern recognition. One advantage is that teachers can use these as stand‑alone checks: upload a .docx file, wait a few seconds, and you get an AI score. They can be handy for admissions offices or for instructors who prefer a free service.

The importance here is the ability to choose a detector that aligns with policy. Some institutions might prefer a tool that is open source for compliance reasons. Meanwhile, others might want a commercial brand for perceived accuracy. The diversity of tools also means that there is rarely a single “most accurate” answer from the tech community; rather, educators choose based on trust, cost, and ease of integration.

When using a third‑party detector, a teacher typically receives a CSV with an AI probability score. They then cross‑reference this with Gradescope grades. A mismatch may raise a flag to question whether the student used AI. The teacher can quickly review the content, ask follow‑up questions, or require a new version of the assignment from the student.
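As a rough illustration of that cross‑referencing step, the sketch below joins a detector export with a grade export and lists the students whose score exceeds a review threshold. The column names (`student_id`, `ai_probability`, `score`) and the 40% default are assumptions for illustration; real exports from a detector or from Gradescope may be laid out differently.

```python
import csv
import io


def load_column(csv_text: str, key: str, value: str) -> dict[str, float]:
    """Read CSV text into a {key column: float(value column)} mapping."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row[key]: float(row[value]) for row in reader}


def needs_review(detector_csv: str, grades_csv: str, threshold: float = 40.0):
    """Join detector scores with grades and keep students above the threshold."""
    ai_scores = load_column(detector_csv, "student_id", "ai_probability")
    grades = load_column(grades_csv, "student_id", "score")
    rows = []
    for student_id, ai_prob in sorted(ai_scores.items()):
        # Skip students who have no grade yet; there is nothing to compare.
        if ai_prob > threshold and student_id in grades:
            rows.append((student_id, ai_prob, grades[student_id]))
    return rows
```

The output is a review list, not a verdict: each row is a prompt to read the submission and talk to the student.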

Another scenario arises when a teacher wants to normalise AI checks across all assignments. They can set up a routine to collect all the students’ submissions once a week, batch‑process them through the chosen detector, and add a column in the Gradescope rubric that states “AI Detected: No.” That provides a visible sign of policy enforcement.

The downside to third‑party tools is that they often require a manual step: the teacher must download the PDF or DOCX, send it for analysis, and upload the results into an ERP or spreadsheet. That extra logistical step can be a barrier for some instructors who want a fully automated pipeline.

In sum, while Turnitin remains the most prevalent AI detector in higher education, third‑party tools remain viable alternatives for smaller institutions or courses that prefer more open solutions.

How AI-Generated Content Differs From Human Writing

Uniformity of Style

One central trait of AI writing is consistency. Machines tend to keep the same tone, diction, and sentence length across a single document. Even though they can vary, they rarely shift between academic versus informal styles. The reason is that the model’s training data is largely corpus‑based; the model does not “know” personal quirks.

The implication is that a human writer will have small variations, like a grammatical slip or an idiomatic phrase that doesn’t match the rest of the context. AI rarely produces a slip because it uses a cost function that penalises such errors. The result is a clean, even style that sometimes feels robotic.

A recent experiment from a linguistics lab recorded 30 students writing a short essay. The analysis included part‑of‑speech tags and found that true human prose had a higher variance in tense usage. On a 10‑point “human variation” scale, the AI-generated essay scored 2.7, while the human essay scored 9.9. That variance is not obvious at a casual glance but reveals the pattern to algorithmic detectors.

The educational implication is that detectors can build a “human‑speech baseline.” If a paper falls below that baseline, the instructor might suspect AI. That is the theoretical foundation behind Turnitin’s AI detection algorithm.

Pro tip: if you use AI to help you write, make sure to infuse personal anecdotes or unique phrasing that is challenging for the model to reproduce. That can mask the uniform style, but anecdotes added only to evade detection may themselves read as inauthentic.

Depth of Insight and Original Thought

While an AI can produce grammatically flawless sentences, it often lacks deep analysis, critical interpretation, or original insight. A language model generates text by combining patterns stored in its weights rather than reasoning from concepts. Consequently, an AI’s argument may surface information but rarely provides a new angle or genuine depth.

Why it matters is that higher‑level assignments usually demand critical thinking and personal reflection. The teacher should look for depth, not just correctness. An essay that simply lists known facts without personal interpretation may trigger an “AI flag.” Conversely, a sudden jump in depth compared with a student’s earlier work can also raise suspicion of AI usage.

In one illustrative scenario, a student asked an AI for a reflection on a philosophical text. The AI wrote: “The reader must question the definitions of morals.” The student added: “In my experience, I have struggled to apply this to real‑life situations.” That final anecdote breaks the AI pattern and shows that human thinking can still be woven into AI‑assisted drafts.

One of the best practices for teachers is to design assignments that require personal experience. That limits the chance that an AI can easily respond. The depth of insight becomes a benchmark for evaluation. If a student’s essay appears devoid of personal reflection, the teacher should suspect that it might be AI‑originated.

For students, resist the temptation to lift entire paragraphs from AI. Instead, use it for brainstorming. Use its suggestions to further expand, but layer on your own voice and reasoning. Doing so reduces the chance of detection and increases learning.

Predictable Sentence Structures

AI text often showcases a narrow range of sentence structures. Due to the model’s exposure to a vast amount of formal text, it tends to craft sentences that are similar in length and style. For example, it might use a recurring pattern such as “In addition, …,” “Furthermore, …,” which rarely vary. This introduces a subtle but detectable signature.

Why this matters is that detection engines can quantify the ratio of such recurring patterns. They can present a histogram of sentence lengths that shows how tightly the lengths cluster. Too narrow a histogram can be flagged as a possible AI pattern.

Consider a scenario where a teacher runs an essay through GPTZero and finds that 70% of its sentences are between 9 and 12 words long. That is far more predictable than a balanced distribution. The tool may assign an AI probability of 60% or higher. The teacher can then flag this student for a formal conversation.
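The sentence‑length statistic in that scenario is easy to compute yourself. The sketch below is a simplified illustration, not how GPTZero or any other detector actually works: it splits text on sentence‑ending punctuation and reports the share of sentences that fall in a given word‑count band. The function names and the naive splitting rule are assumptions.

```python
import re


def sentence_lengths(text: str) -> list[int]:
    """Split on '.', '!' or '?' and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def share_in_band(text: str, low: int = 9, high: int = 12) -> float:
    """Fraction of sentences whose length falls within [low, high] words."""
    lengths = sentence_lengths(text)
    if not lengths:
        return 0.0
    in_band = sum(1 for n in lengths if low <= n <= high)
    return in_band / len(lengths)
```

A high share in one narrow band suggests uniform sentence length, which is a reason to read more closely rather than proof of anything. Abbreviations and decimal numbers will confuse the naive splitter used here.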

Another method of detecting that signature is simply counting repeated bigrams (two‑word phrases). If 40% of the bigrams appear more than once, especially with similar contexts, it signals sentence repetition. Some teachers incorporate this check into a rubric: “Does the writing exhibit variety in structure? (Yes/No).”
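A bigram check like this takes only a few lines. The sketch below is one possible reading of the rule: it measures the fraction of distinct bigrams that occur more than once. The function name and the tokenisation (lowercased letters and apostrophes only) are assumptions, and counting repeated occurrences instead of distinct bigrams would give a different number.

```python
import re
from collections import Counter


def repeated_bigram_share(text: str) -> float:
    """Fraction of distinct two-word phrases that appear more than once."""
    words = re.findall(r"[a-z']+", text.lower())
    bigrams = list(zip(words, words[1:]))
    if not bigrams:
        return 0.0
    counts = Counter(bigrams)
    repeated = sum(1 for c in counts.values() if c > 1)
    return repeated / len(counts)
```

Short texts and texts on a narrow topic repeat phrases naturally, so any cutoff such as 40% should be treated as a prompt for a closer read, not as evidence on its own.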

A simple pro tip for students: vary the sentence length consciously. Try phrasing a passage in three ways—short, medium, long—and mix them in the draft. That simple randomness can throw off automatic pattern detectors. But remember, it’s not a guarantee; human nuance is necessary.

The Role of Educators in Combating AI‑Based Cheating

Fostering Ethical AI Use

In many online learning communities, instructors are shifting from purely punitive measures to educational ones. When a student is caught using ChatGPT for an essay, the teacher can guide them through a reflection on academic integrity. They can provide resources on how to use AI responsibly, distinguishing permissible usage (e.g., generative brainstorming help) from prohibited usage (e.g., full text drafting).

Why this matters is that punishment alone does not create lasting change. Ethical education creates a long‑term shift in the student’s behavior. A study from a nationwide university revealed that 75% of students who received an explanatory session on AI use limited future use of the tool for assignment writing.

Example: a professor assigned a reflection on climate change. When a student received an unusually high grade, the teacher asked for a short self‑assessment on how AI was used. The student wrote, “I only used AI for generating a list of impacts; I fully rewrote the essay using my voice.” The student’s record improved, and the teacher noted greater integrity in the student’s later work.

By connecting detection to a discussion, teachers also become less inclined to ignore suspicion early. They build a culture where students recognize that academic honesty matters beyond grades. This approach has been shown to be more effective than zero‑tolerance systems alone.

Pro tip: design your syllabus to include guidelines around AI. Use a small excerpt of a fictional policy, e.g., “Allowed AI tools: outline only. Prohibited: full text.” This provides transparency and reduces accidental violations.

Developing Authentic Assessment Tasks

When you design tasks that rely heavily on personal insight or local knowledge, the chance of AI generating an appropriate answer decreases dramatically. If a question asks, “Describe your own experience in the community garden last week,” no algorithm can replicate your exact walk. That question forces you to respond honestly.

Why this matters is that by reducing the possibility of an AI copy, you reduce the incentive to rely on a generator. Moreover, the instructor’s reliance on your unique voice helps them adjust grading rubrics specifically to that voice.

In a class where the assignment was to write a case study about the local municipality’s budget, the teacher created a rubric that checks for personal observations: “Describe your visit to the council’s office.” Students who relied on ChatGPT could not pass the essay off as their own, because the rubric required observations that only someone who had actually been at the town hall could make.

One student in a recent project misused the platform by pasting a GPT‑3 chunk that described a generic council budget. The instructor realized the essay lacked the required “personal observation” section. They gave partial credit, which taught the student that the AI could not fill that requirement.

If you are a teacher, revisit your assignments quarterly. A mix of open‑response, oral, and practical tasks ensures that AI has limited advantage. That, in turn, re‑asserts integrity for the whole class.

Real‑World Application of AI Detection in College Settings

Case Study: University A’s Program Integrity Pilot

University A’s College of Science rolled out an AI detection pilot using Gradescope and Turnitin integration. The pilot focused on a flagship honors essay course. In the first semester, 18% of all assignments returned an AI probability of more than 40%. The majority had come from students in the first year of the program.

What this demonstrates is that early adoption of AI tools can lead to improper use in introductory courses. The university deployed a virtual workshop on proper citation and AI use after the pilot data. Afterwards, the share of assignments above the 40% mark dropped to 8% in the second semester.

In addition, the university installed an AI detection plug‑in that flagged submissions before they entered the Gradescope workflow. Every flagged assignment had to be reviewed by a faculty member. They found that 5% were genuinely AI‑generated, while 3% were high‑quality human writing flagged due to anomalies, such as unusual sentence patterns introduced by editing.

The success of the pilot shows that a proactive approach to AI detection can create an equilibrium: learners adapt, instructors can manage in the short term, and the policy environment can be strengthened.

For students, this case study is a reminder that AI tools may be seen as a shortcut but institutional detection can compel honesty. That dynamic underscores the importance of learning to write independently.

What Happens When a Teacher Raises Suspicion?

Vanessa, an English professor, receives a submission that shows no marked plagiarism but has an AI probability of 65%. She offers the student a chance to revise. In the revision, the student declares a mix of AI support and personal reflection. The professor underlines that transparency helps maintain trust.

The process follows these steps: first the system identifies an AI flag; second, the student receives a “flagged” report; third, the teacher requests a revised version and brief explanation; fourth, the system re‑runs the detection. The new submission shows a 22% AI probability, a dramatic decrease. The final grade is back‑filled in time for the semester.

The reasonable outcome is that the teacher does not punish “without evidence.” Instead, they address the root cause while providing a learning path. The student’s revised essay becomes part of a class discussion on AI use.

From the instructor’s perspective, this method is effective because it does not alienate a student while offering a chance to correct it. It also builds a repository of flagged and revised assignments that can act as training data for future detection models.

Pro tip: When you’re a teacher, make your grading rubric transparent about AI usage. State that if the teacher flags an assignment, the student is required to comment on the usage. That clarity encourages honesty.

Could AI Detection Impact Your Grades?

Consequences at the Institutional Level

In many countries, teachers are bound by policies that treat AI‑generated papers the same as plagiarism. A flag for AI content can lead to a reduction of points or an outright failure of the assignment. Some institutions have a zero tolerance policy, while others provide remedial options.

Why this matters is that the weight of your grade can shift dramatically. A single assignment might constitute 20% of the final grade. Losing it or receiving only partial credit could send you into a lower academic standing.

In a recent survey of 55 universities, 63% said they had explicit policies on AI. Of those, 32% had punitive measures, ranging from warning letters to course withdrawal. That highlights the stakes of not being careful with AI.

A real student, Ahmed, used ChatGPT to write his final exam essay. The AI detection flagged a 50% probability. The teacher reduced his grade by 30%. Ahmed was dismayed and studied the policy. Afterwards, he reduced his dependence on AI and improved his understanding.

One crucial insight is that the risk exists even if you only get a moderate AI probability. Most institutions adopt a light‑touch policy: for probability above 40%, a review is triggered. This threshold is often not transparent. Understanding these thresholds is essential for any student.

Strategies to Avoid Unfair Penalties

First, you should always check your university’s policy on AI use. Many policies highlight that AI usage is permissible if you correctly cite or acknowledge it. Some schools even publish guidelines on how to integrate AI responsibly.

Second, use AI as a brainstorming assistant rather than a writing tool. Generate outlines and let the actual writing reflect your own voice. That drastically decreases the AI probability. In a reflection by a teacher, students who followed that approach scored higher than the ones who used the AI to draft paragraphs.

Third, run your draft through an AI detector yourself before submission. Many SaaS providers have a free version. Because the AI detectors are relatively systematic, the student can submit a first draft, see what the detection says, and rewrite the problematic sections, ensuring the final product is low‑probability.

Finally, keep a record of all AI‑generated content. If you have a “beta” version in your own Drive that stores the AI’s raw output, you can be transparent with your instructor. Submitting both the raw output and the final paper encourages a conversation about integrity.

Knowing that the influence of AI on grades is real, you can take practical steps to mitigate potential issues. This approach serves two functions: it reduces risk and increases ownership of your knowledge.

Best Practices for Students: Using AI Responsibly

1. Use AI for Ideation, Not Writing

Work with AI to generate bullet points, list potential arguments, or research key terms. Once you have a structure, write the text yourself. That method ensures your writing is a product of your own logical outline. The difference matters because if you ask the model to write a paragraph on a complex topic, the model will produce a faithfully structured paragraph, but its voice will be generic. That absence of a personal touch is a red flag for detection engines.

For example, a student asked AI to create a paragraph about “The ethical implications of data privacy.” The model elaborated in a fairly formal tone. When the student rewrote it and inserted personal insights, the final essay read more naturally and was less likely to be flagged. The teacher praised this engagement, and the overall grade rose a little as the AI probability fell.

By using it for ideation, you also let the AI act as a research assistant. If you’re stuck in the middle of a concept, you can input the subject and ask the engine for a summary. That summary then becomes a starting point you elaborate with your own analysis.

One tip: keep the AI prompt focused and specific. A vague prompt will lead to generic answers that the AI will produce with high confidence. In contrast, detailed prompts result in varied responses that may be less uniform.

2. Revise Thoroughly

After writing the initial content, take a two‑pass approach. Start from an honest statement of where you are: “I used ChatGPT to draft a paragraph. I now wish to revise it.” If you use a second AI tool for line edits, treat its output as suggestions and edit the original text yourself. That includes changing sentence structure, adding your own ideas, and including references from your library. This editing process reduces repetition and adds variation in style, causing the language to look more natural and human.

Why this matters is that if the student does not take the time to revise the text, the AI detectors will keep the probability high. Revising creates an almost human variability in language and stimulates deeper thinking.

One remaining problem is that some students may not have the time to revise. They could ask an AI for revisions. That might still leave the AI signature in the text. The teacher might spot the ‘machine’ voice. In practice, the key step is to do a final read on your own to catch any AI patterns that remain.

When you revise, keep a note of the changes: line numbers, sentence changes. That can serve as a reference for instructors if they question the level of originality. In final projects we had many students add the revision log as a separate file. The faculty appreciated it because it gave real insight into their work’s authenticity process.
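If you keep both the AI draft and your final text, a revision log can be generated rather than written by hand. The sketch below uses the standard library's `difflib.unified_diff`; the function name and the file labels are hypothetical and serve only to mark which side is which.

```python
import difflib


def revision_log(draft: str, final: str) -> str:
    """Return a unified diff describing how the draft became the final text."""
    diff = difflib.unified_diff(
        draft.splitlines(),
        final.splitlines(),
        fromfile="ai_draft.txt",    # hypothetical label for the starting text
        tofile="final_essay.txt",   # hypothetical label for the submitted text
        lineterm="",
    )
    return "\n".join(diff)
```

Lines starting with `-` were removed from the draft and lines starting with `+` were added in the final version. The diff works line by line, so it is most readable when each sentence or paragraph sits on its own line.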

3. Citing AI as a Resource

If a school policy explicitly allows referencing or includes AI tools as part of research, write a brief annotation: “AI assistance provided by ChatGPT (OpenAI) for initial brainstorming.” Add it to the bibliography or acknowledgement. Some instructors appreciate the transparency and therefore do not penalize the use of an AI.

Why is it useful? It shifts the conversation from blame to learning. Many institutional policies emphasize the importance of acknowledging all resources. By citing an AI in your research section, you treat it like any other source, such as a website or a newspaper article.

One caveat: be careful with the policy. Some universities do not consider AI a citation-worthy source. In that case you need to reword the acknowledgement so that it describes how the tool was used rather than citing it as the source of the core text. For example, “I used an AI language model for generating an outline and then spent a week writing the manuscript.” That line is compatible with most policies.

4. Continuous Learning of AI Ethics

AI ethics is a fast‑moving field. What was permissible last year may now be off‑limits. Students who stay up to date with academic policy, especially on AI usage, are far more likely to navigate campus rules successfully. For instance, reading a chapter from the NSD’s guidelines on AI and plagiarism can prepare you for class policy updates.

Develop a habit of reading short AI policy updates during the week. This knowledge dispels the myth that you have to rely on AI to cheat; instead, it builds resilience in your discipline. It improves not only your compliance but also your overall understanding of how AI should be treated academically and ethically.

How Do Educators Prepare for AI in Education?

Implementing Hybrid Assessment Approaches

Many leading institutions have adopted a hybrid approach: they keep coursework and combine it with oral examinations or practical projects. For example, a “portfolio” in Visual Arts includes a set of drawn images and a hand‑written report. The oral portion allows the instructor to verify that the student can explain the reasoning behind their work.

Why it matters: oral exams provide a quick check of the student’s knowledge base. If a student submits a text written with ChatGPT but can’t articulate how they would alter the methodology, the instructor has credible grounds for doubt. Although it is not a foolproof test, hybrid assessment makes it hard for AI to produce a 10‑point answer without the student’s mental engagement.

One success story came from a recent graduate program in AI. The teacher’s exam included writing a script and then describing each function in depth during a 10‑minute discussion with the student who wrote it. The student could recall the code structure vividly and earned the points. The teacher found the approach to be a crucial tool for verifying originality.

Rehearsing “Detection Strategies” in the Classroom

Instructors can design practice assignments that are deliberately vulnerable to AI. They can ask students to produce an answer that could easily be generated by AI and then walk through the detection module as a live demonstration. That helps students understand real patterns: for example, GPT’s tendency to repeat the phrase “This article” when summarizing.

Concretely, this involves a “possible cheat” assignment that an AI detector recognizes as AI‑generated. The teacher compares its score with those of other students and shares the results visually to bring the concept into focus. As a result, students realize that smooth, concise sentences of uniform average length may correlate with AI. This increased statistical awareness makes students rethink their approach.

In this approach, the instructor directly connects detection to learning objectives. Many students have reported an increased sense of transparency and accountability.

After the demonstration, teachers ask students to rewrite the essay – still from scratch – to show that they can produce non‑AI text. That ongoing practice keeps the class updated on detection technology in real time.

Keeping Up With AI Detector Advances

The landscape of AI detection is still evolving. Detectors for GPT‑style text rely on machine‑learning scoring. In 2025, a new TensorFlow‑based model from Harvard claims 92% accuracy in distinguishing GPT‑4 text. A platform therefore needs periodic updates to its detection engine, and a teacher who keeps up can offer up‑to‑date detection to their classes.

This matters because running a separate AI detection check is cost‑effective and takes little of the teacher’s time. It also works as a teaching tool: by mapping the detection results against the final grades, students gain a new perspective on quality. They can also see that the detection step is rarely definitive on its own and has to be paired with teacher judgment.

Many institutions license detection software that aggregates results across the year. This reduces mismatches between the teacher’s rubric scoring and the detection software. If a student receives a 90% grade alongside a 70% AI probability, a teacher can allocate more points to the handwritten portion to offset the mismatch.
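One way to picture that reweighting is a simple linear rule in which the handwritten portion counts for more as the reported AI probability rises. This is only a sketch: the function `reweighted_grade`, the weight bounds, and the linear rule are all hypothetical choices made for illustration, not a Gradescope or Turnitin feature, and any real policy would be set by the instructor or institution.

```python
def reweighted_grade(
    online_score: float,
    handwritten_score: float,
    ai_probability: float,
    base_weight: float = 0.3,   # assumed handwritten weight when AI probability is 0
    max_weight: float = 0.7,    # assumed handwritten weight when AI probability is 1
) -> float:
    """Blend two scores (0-100), shifting weight toward the handwritten part.

    Hypothetical rule: the handwritten weight grows linearly with the
    detector's AI probability (0.0-1.0).
    """
    if not 0.0 <= ai_probability <= 1.0:
        raise ValueError("ai_probability must be between 0 and 1")
    handwritten_weight = base_weight + (max_weight - base_weight) * ai_probability
    online_weight = 1.0 - handwritten_weight
    return online_weight * online_score + handwritten_weight * handwritten_score


if __name__ == "__main__":
    # The 90% grade and 70% AI probability come from the example above;
    # the handwritten score of 60 is an invented illustration value.
    print(round(reweighted_grade(90, 60, 0.7), 1))
```

Because the detector output is only a probability, a rule like this is best treated as a prompt for manual review rather than an automatic penalty.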

Pro tip: join a local or national teacher network that shares updates. That way, you’re ready for the next version of a detector.

Techniques to Detect AI‑Generated Submissions in Gradescope

Check the “Submission Timestamp” Pattern

Teachers who notice consistent timestamp patterns may suspect an AI‑created file. Examples include an essay that is finished abruptly just inside the time limit, or submissions that all arrive around 10:01 PM with no evident gaps. If such patterns repeat, they raise a flag.

This matters because timing is recorded in the moment and is hard to explain away later. A timestamp that lines up with an AI chat session can be a signal, and external detection tools may add that data. A profile of students with consistent submission patterns may help identify repeated use of an AI helper.

In a practical scenario, a student who historically spent about 70 minutes on revisions submits almost as soon as the timer reaches 1:00. A system that checks submission timestamps can detect when students finish at a speed far beyond what a human can normally read and write. The ratio of actual to typical completion time is a high‑level diagnostic.
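The ratio check described above can be sketched in a few lines. Everything here is an assumption for illustration: Gradescope does not expose a function like this, the input dictionaries stand in for exported timing data, and the 0.25 threshold and three‑assignment minimum are arbitrary.

```python
from statistics import median


def flag_fast_submissions(
    history_minutes: dict[str, list[float]],
    current_minutes: dict[str, float],
    ratio_threshold: float = 0.25,  # assumed cut-off; tune per course
    min_history: int = 3,           # assumed minimum number of past assignments
) -> dict[str, bool]:
    """Flag students whose current completion time is far below their own norm.

    history_minutes: past completion times per student (hypothetical export).
    current_minutes: completion time for the assignment being checked.
    Students with too little history are skipped rather than flagged.
    """
    flags = {}
    for student, current in current_minutes.items():
        past = history_minutes.get(student, [])
        if len(past) < min_history:
            continue
        typical = median(past)
        ratio = current / typical if typical > 0 else 1.0
        flags[student] = ratio < ratio_threshold
    return flags


if __name__ == "__main__":
    history = {"student_a": [70, 65, 75], "student_b": [60, 62, 58]}
    current = {"student_a": 10, "student_b": 55}
    print(flag_fast_submissions(history, current))
```

Comparing each student against their own history, rather than a class average, avoids penalizing students who are simply fast writers.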

Compare a Student’s Past vs. Present Style Map

Every student develops a characteristic style. A language model can build a “style fingerprint” from previous assignments. If a sudden shift occurs, the submission can be flagged as “unlikely human.” The algorithm might compute a correlation matrix of the word frequency distribution and compare it with earlier data. A shift of more than 30% may indicate potential AI involvement.
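A simplified version of that comparison is sketched below. Instead of a full correlation matrix, it uses cosine distance between word‑frequency vectors, which is a swapped‑in simplification; the function names are hypothetical, and the 0.30 threshold simply mirrors the 30% figure above.

```python
import math
import re
from collections import Counter


def word_frequencies(text: str) -> dict[str, float]:
    """Return the relative frequency of each lowercase word in the text."""
    words = re.findall(r"[a-z']+", text.lower())
    total = len(words)
    if total == 0:
        return {}
    return {word: count / total for word, count in Counter(words).items()}


def style_shift(previous_texts: list[str], new_text: str) -> float:
    """Cosine distance (0 = same word profile, 1 = no shared words)."""
    baseline = word_frequencies(" ".join(previous_texts))
    current = word_frequencies(new_text)
    norm_b = math.sqrt(sum(v * v for v in baseline.values()))
    norm_c = math.sqrt(sum(v * v for v in current.values()))
    if norm_b == 0 or norm_c == 0:
        return 1.0  # nothing to compare against
    shared = baseline.keys() & current.keys()
    dot = sum(baseline[w] * current[w] for w in shared)
    return 1.0 - dot / (norm_b * norm_c)


def is_flagged(previous_texts: list[str], new_text: str, threshold: float = 0.30) -> bool:
    """Flag the new text when the shift exceeds the assumed threshold."""
    return style_shift(previous_texts, new_text) > threshold


if __name__ == "__main__":
    earlier = ["I think the market was bad because prices went up a lot."]
    latest = "Inflationary pressures precipitated a contraction in aggregate demand."
    print(is_flagged(earlier, latest))
```

Word frequencies alone are a crude fingerprint; a flag from a sketch like this is a reason to start a conversation, not evidence of AI use.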

This matters for academic integrity because human writing develops iteratively. If a student’s writing suddenly reads like a perfect essay after dozens of fourth‑grade‑level essays, that is suspicious. A teacher can start a conversation to find the cause.

In one case, an economics major submitted an essay that scored 100 out of 100 on a plagiarism tool. The tool’s underlying check flagged that the last two paragraphs had a 90% similarity with a known public text. The teacher recognized that the student had not been careful and adjusted the final grade accordingly.

Using these style fingerprints is beneficial for institutions seeking to level out differences in AI risk across disciplines. Custom ontologies have been developed for each discipline, providing an aggregated baseline over data sets. That approach is more accurate and scalable.

A Detailed Comparison Table of AI Detection Tools

| Detection Tool | Type of Content | Detection Accuracy | Integration Effort | Cost per Submission |
|---|---|---|---|---|
| Turnitin | Text, Code | 85–90% for AI | High (needs LMS or manual upload) | $1–$5 |
| GPTZero | Text | 78–82% for AI (open source) | Medium (stand‑alone, API available) | $0–$2 |
| Originality.AI | Text, Code, Mix | 80–88% | Medium (requires manual upload) | $3–$7 |
| Grammarly Pro | Text | 70–75% for AI (mainly a grammar tool) | Low (browser extension) | $10–$20/month |
| Custom ML Model (institution built) | Text, Code | Varies (over 90% for custom training) | Low to Medium (depends on infrastructure) | Varies (developed in-house) |

FAQs About Gradescope and AI Detection

Will Gradescope automatically flag ChatGPT content if my institution uses Turnitin?

Gradescope itself does not flag content. However, if your assignment is set to automatically upload to Turnitin through the LMS, any AI likelihood reported by Turnitin may be visible in your workflow. This process depends on the integration, so the system does not add a simple “AI detected” banner directly inside Gradescope.

Is it possible for a student to hide ChatGPT usage in a code assignment from Gradescope?

Gradescope can detect similarity in code among submissions, but it cannot detect the presence of an AI in code syntax. If only one student submits a piece of code that has no similarity to others, the tool will show a 0% match. That means the student can hide the AI usage unless the teacher runs a separate pass through a dedicated code‑analysis AI detector, which is rarely installed.

Can I use AI to proofread my essay before grading to avoid detection?

Proofreading does not change the underlying AI signature. While a tool might smooth out the wording, the sentence structure and diction of the core text remain. So the short answer is no: AI proofreading won’t make it invisible. Using an AI for fact‑finding or keyword extraction is a safer use case.

How do I know if my instructor is using AI detection for my work?

You can ask the instructor to describe any detection plugin in use. Many syllabi now include a section about the grading tools. If the teacher references OpenAI or mentions “AI detection”, it’s a strong clue. You can also check the learning management system’s settings for a “Turnitin” icon or a “Code similarity” icon, which suggests that detection is enabled.

Real‑World Consequence: Will I fail a course if I use ChatGPT for an exam?

Yes, if the policy states that AI‑based work is considered plagiarism, a flagged assignment can result in a failing grade or withdrawal from the course. Some institutions have a “grade penalty” clause: the student can retake the exam, but it may count for only partial credit. Always read the policy carefully and seek clarification from the instructor.

Conclusion

Gradescope offers educators an efficient environment to manage massive stacks of student work. Its built‑in features accelerate grading and help capture code similarities. However, the platform is not designed to automatically detect ChatGPT or any AI‑generated text. Institutions that want to maintain rigorous academic integrity usually couple Gradescope with Turnitin or other AI detection services. That partnership provides a clear pipeline: students submit through Gradescope, the assignment flows to a detection engine, and teachers get a similarity report and an AI probability. In that pipeline, a flag may trigger a manual review. The result is not a magic solution; the detection process remains qualitative and guided by human insight.

For students, the most effective strategy is to treat AI as a collaborator – use it for idea generation and not as a writer. Revise, personalize, and adhere strictly to your university’s policy. That mitigates risk and enriches learning. For instructors, designing authentic assessment tasks and setting up robust detection infrastructure build a defensible academic environment while encouraging transparency.

Knowledge is the only sure guard against the rapid rise of AI in education. By staying informed about tool capabilities and policy changes, both students and educators can protect themselves and preserve the spirit of learning. The future of teaching may see AI becoming a component of mainstream tasks, but responsible usage and diligent detection will be the pillars of integrity.
