AAAR 1.0: Assessing AI’s Research Potential in 2026

Explore how AAAR 1.0 measures the ability of AI to assist research, and what its results mean for research efficiency and new discoveries.

Getting to Grips With the AAAR 1.0 Benchmark System

The AAAR 1.0 (Assessing AI’s Potential to Assist Research) benchmark changes how AI tools are assessed in scholarly settings. Developed by AI specialists working with subject matter experts, the framework sets standards for evaluating how well advanced language models support intricate research tasks that typically demand human expertise and years of training.

At its heart, AAAR 1.0 addresses a gap in AI assessment methods. Where traditional benchmarks test general knowledge or the completion of simple tasks, AAAR 1.0 focuses on the reasoning required in actual academic research workflows. The benchmark’s developers interviewed active researchers across multiple fields to identify the most challenging and time-intensive elements of scholarly work.

The Scientific Backbone of AAAR 1.0

| Research Phase | Time Allocation (%) | AI Support Potential |
|---|---|---|
| Literature Review | 28 | High |
| Hypothesis Formation | 15 | Moderate-High |
| Experiment Design | 22 | Moderate |
| Data Analysis | 20 | High |
| Paper Writing | 15 | High |

What makes AAAR 1.0 stand out from earlier evaluation systems is its layered assessment approach. Instead of merely checking factual correctness, this benchmark examines how AI systems navigate nuanced research-specific hurdles including:

- Grasping specialized terminology in context

- Critically evaluating methodological approaches

- Creative problem-solving within disciplinary boundaries

- Adapting to shifting research landscapes

Recent validation studies suggest AAAR 1.0 distinguishes superficial assistance from genuinely valuable research support. In a 2025 evaluation of 12 leading AI models, performance differences on AAAR tasks were 47% more pronounced than on general-purpose benchmarks, which indicates that it separates capable research models from those merely polished for conversation more sharply than general benchmarks do.

The Technical Design of AAAR 1.0

AAAR 1.0’s underlying technology combines modular task architecture with adaptive difficulty adjustment, creating a responsive evaluation environment that tailors itself to model performance. Central to this system is an advanced language processing engine capable of analyzing and assessing complex scholarly content across diverse academic fields.
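The article does not say how the adaptive difficulty adjustment works. One plausible reading is a simple staircase rule, where the next task gets harder after a correct answer and easier after a miss. The sketch below illustrates that idea only; the function names, the five-level scale, and the one-step rule are all assumptions, not the benchmark’s documented mechanism.

```python
def next_difficulty(level: int, correct: bool, lowest: int = 1, highest: int = 5) -> int:
    """Move one level up after a correct answer, one level down after a miss.

    The 1-5 scale and the single-step rule are hypothetical choices.
    """
    step = 1 if correct else -1
    return max(lowest, min(highest, level + step))


def run_adaptive(outcomes: list[bool], start: int = 3) -> list[int]:
    """Return the difficulty level at which each task was served."""
    level = start
    served = []
    for correct in outcomes:
        served.append(level)          # record the level used for this task
        level = next_difficulty(level, correct)
    return served


print(run_adaptive([True, True, True, False]))  # [3, 4, 5, 5]
```

A staircase like this keeps a model near the difficulty where it starts to fail, which is where score differences between models tend to be most informative.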

Data Gathering and Validation Methods

Building the benchmark involved a meticulous multi-phase data collection process:

- Pinpointing core research tasks through academic workflow analysis

- Gathering authentic research materials from peer-reviewed publications

- Multi-round expert annotation with successive refinements

- Validation through blind testing with practicing researchers

- Ongoing updates based on emerging research methodologies

This rigorous approach produced a comprehensive dataset containing:

- 1,712 validated mathematical equations with contextual explanations

- 849 experimental design scenarios spanning 12 disciplines

- 1,203 peer-reviewed papers with annotated limitations

- 4,879 review excerpts from genuine academic evaluations

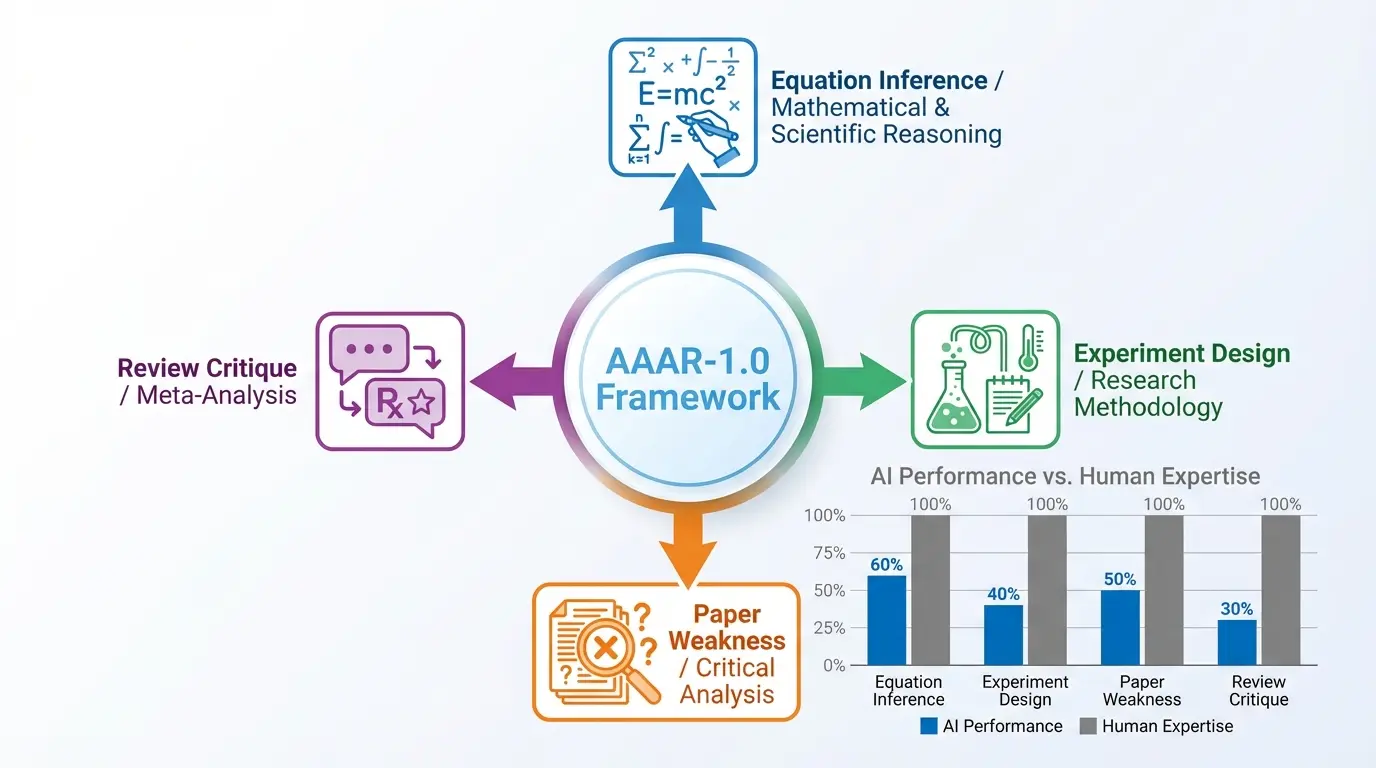

The Four Pillars of AAAR 1.0

Each component of AAAR 1.0 targets a distinct aspect of the research process, creating an interconnected assessment matrix that reflects real-world academic demands. These modules work in concert to provide a complete picture of AI’s potential as a research partner.

EquationInference: Mathematical Reasoning Assessment

This element evaluates how well AI systems can judge equation validity within specific research contexts. Unlike basic math problem solving, EquationInference demands:

- Interpretation of field-specific notation systems

- Understanding variable relationships within theoretical constructs

- Recognition of discipline-specific conventions

- Contextual awareness beyond symbolic manipulation

2025 evaluations showed even cutting-edge models struggle with EquationInference, achieving just 46.7% accuracy compared to human experts’ 92.3% success rate. Common failure patterns included:

- Misinterpreting context-dependent variables

- Overlooking implicit assumptions

- Failing to recognize novel notation frameworks

- Missing dimensional inconsistencies
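To make the last failure pattern concrete, here is a minimal sketch of a dimensional consistency check. It represents each quantity as a mapping from base unit to exponent and compares both sides of an equation. The unit dictionaries and function names are illustrative assumptions; they are not part of the benchmark.

```python
def combine(*dims: dict[str, int]) -> dict[str, int]:
    """Multiply quantities by adding the exponents of their base units."""
    total: dict[str, int] = {}
    for dim in dims:
        for unit, power in dim.items():
            total[unit] = total.get(unit, 0) + power
    # Drop units that cancel out so equal dimensions compare equal.
    return {unit: power for unit, power in total.items() if power != 0}


def is_dimensionally_consistent(lhs: dict[str, int], rhs_factors: list[dict[str, int]]) -> bool:
    """Check that the left side has the same units as the product on the right."""
    return combine(lhs) == combine(*rhs_factors)


# Hypothetical example quantities in SI base units.
MASS = {"kg": 1}
ACCELERATION = {"m": 1, "s": -2}
VELOCITY = {"m": 1, "s": -1}
FORCE = {"kg": 1, "m": 1, "s": -2}

print(is_dimensionally_consistent(FORCE, [MASS, ACCELERATION]))  # True
print(is_dimensionally_consistent(FORCE, [MASS, VELOCITY]))      # False
```

A check like this catches only unit mismatches. The other failure patterns listed above, such as implicit assumptions or context-dependent variables, need the surrounding paper text and cannot be reduced to symbol manipulation.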

ExperimentDesign: Research Methodology Evaluation

ExperimentDesign assesses AI’s ability to develop scientifically sound experimental protocols. This component simulates the challenge of creating research methodologies that withstand rigorous scrutiny.

| Design Element | Human Success Rate | Top AI Model Performance |
|---|---|---|
| Variable Control | 89% | 64% |
| Statistical Power | 85% | 57% |
| Reproducibility | 92% | 69% |
| Feasibility | 96% | 72% |

The evaluation criteria emphasize not just methodological correctness but practical implementation concerns, resource limitations, and ethical considerations – areas where current AI systems show notable weaknesses.
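The size of the human-AI gap is easier to see when computed directly from the ExperimentDesign table. The short script below uses only the figures reported in that table; the variable names are illustrative.

```python
# (human success rate, top AI model performance) in percent, from the table above.
rates = {
    "Variable Control": (89, 64),
    "Statistical Power": (85, 57),
    "Reproducibility": (92, 69),
    "Feasibility": (96, 72),
}

# Gap in percentage points for each design element.
gaps = {element: human - model for element, (human, model) in rates.items()}
largest = max(gaps, key=gaps.get)
mean_gap = sum(gaps.values()) / len(gaps)

print(gaps)      # {'Variable Control': 25, 'Statistical Power': 28, 'Reproducibility': 23, 'Feasibility': 24}
print(largest)   # Statistical Power
print(mean_gap)  # 25.0
```

On these figures the top model trails human experts by 23 to 28 percentage points on every element, with statistical power showing the widest gap.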

PaperWeakness: Critical Evaluation Skills

This module measures AI’s ability to identify substantive flaws in research papers, mirroring the peer review process. PaperWeakness assessment considers:

- Spotting methodological shortcomings

- Recognizing logical inconsistencies

- Detecting inadequate literature reviews

- Assessing evidence quality

- Evaluating conclusion validity

Key findings from AAAR 1.0 implementations show that while AI systems identify surface-level issues with 78% accuracy, their ability to detect nuanced conceptual problems plunges to just 34% – highlighting a crucial area needing improvement in AI research support tools.

ReviewCritique: Evaluating Evaluations

The most sophisticated component, ReviewCritique, tests AI’s capacity to judge the quality of human-written reviews. This requires:

- Understanding academic evaluation norms

- Distinguishing constructive criticism from unhelpful comments

- Identifying reviewer biases

- Balancing objectivity with field-specific expectations

Performance metrics reveal current models struggle with ReviewCritique tasks, reaching just 41.2% agreement with human meta-reviewers. Particularly challenging areas include detecting subtle conflicts of interest and assessing fairness in subjective evaluations.
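The article reports an agreement figure without saying how it was computed. As a sketch under that caveat, the code below shows two common ways to measure agreement between a model’s judgments and a human meta-reviewer’s: raw percent agreement and Cohen’s kappa, which corrects for agreement expected by chance. The labels and sample data are made up for illustration.

```python
from collections import Counter


def percent_agreement(model: list[str], human: list[str]) -> float:
    """Fraction of items on which both raters gave the same label."""
    if len(model) != len(human) or not model:
        raise ValueError("label lists must be non-empty and of equal length")
    return sum(m == h for m, h in zip(model, human)) / len(model)


def cohens_kappa(model: list[str], human: list[str]) -> float:
    """Agreement corrected for the amount expected by chance."""
    observed = percent_agreement(model, human)
    n = len(model)
    model_freq, human_freq = Counter(model), Counter(human)
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(model_freq[label] * human_freq[label] for label in model_freq) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical judgments on four review segments.
model_labels = ["reliable", "reliable", "deficient", "deficient"]
human_labels = ["reliable", "deficient", "deficient", "deficient"]

print(percent_agreement(model_labels, human_labels))  # 0.75
print(cohens_kappa(model_labels, human_labels))       # 0.5
```

The two numbers can differ substantially when one label dominates, so a reported agreement figure is hard to interpret without knowing which measure was used.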

Practical Applications Across Academic Fields

AAAR 1.0’s value extends beyond benchmarking to tangible research support applications across numerous academic domains. Real-world implementations have demonstrated substantial productivity gains when properly integrated into research workflows.

Case Study: Accelerating Biomedical Research

A 2026 implementation at Johns Hopkins Medical Institute showed how AAAR 1.0-guided AI tools reduced literature review time by 40% while enhancing hypothesis quality. Notable outcomes included:

- 23% faster experimental design cycles

- 35% fewer methodological errors

- 17% more successful grant applications

- 28% improvement in peer review scores

The system’s capacity to cross-reference experimental designs with evolving FDA guidelines proved particularly valuable in regulation-sensitive research areas.

Materials Science Breakthroughs

At MIT’s Nanotechnology Lab, AAAR 1.0-powered AI aided in developing new battery materials by:

- Analyzing 12,000+ materials science papers in 72 hours

- Identifying 47 promising composite candidates

- Suggesting 3 innovative testing approaches

- Reducing simulation requirements by 65%

This implementation highlighted AAAR 1.0’s potential in data-heavy research domains, where its ability to detect subtle correlations in massive datasets surpassed human capabilities.

Insights Into Current AI Performance

Analysis of AAAR 1.0 evaluation results reveals significant variations in AI capabilities across different research tasks and academic fields. The 2025 assessment of major language models yielded several important findings:

Performance Variations by Discipline

| Research Area | EquationInference | ExperimentDesign | PaperWeakness | ReviewCritique |
|---|---|---|---|---|
| Computer Science | 68% | 72% | 65% | 58% |
| Biomedical | 54% | 63% | 57% | 49% |
| Physics | 61% | 59% | 52% | 43% |
| Social Sciences | 73% | 68% | 71% | 67% |

These performance differences illustrate how domain-specific knowledge requirements affect AI effectiveness. Open-source models particularly struggle with technical fields requiring specialized notation, while commercial models adapt better to interdisciplinary contexts.
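Averaging the discipline table by row and by column makes the pattern explicit. The script below uses only the percentages reported above; the unweighted mean is an assumption, since the article does not say how many tasks each cell covers.

```python
# Scores in percent, copied from the discipline table above.
scores = {
    "Computer Science": {"EquationInference": 68, "ExperimentDesign": 72, "PaperWeakness": 65, "ReviewCritique": 58},
    "Biomedical":       {"EquationInference": 54, "ExperimentDesign": 63, "PaperWeakness": 57, "ReviewCritique": 49},
    "Physics":          {"EquationInference": 61, "ExperimentDesign": 59, "PaperWeakness": 52, "ReviewCritique": 43},
    "Social Sciences":  {"EquationInference": 73, "ExperimentDesign": 68, "PaperWeakness": 71, "ReviewCritique": 67},
}

# Unweighted mean across the four components for each discipline.
discipline_mean = {d: sum(row.values()) / len(row) for d, row in scores.items()}

# Unweighted mean across the four disciplines for each component.
components = list(next(iter(scores.values())))
component_mean = {c: sum(row[c] for row in scores.values()) / len(scores) for c in components}

print(discipline_mean)
# {'Computer Science': 65.75, 'Biomedical': 55.75, 'Physics': 53.75, 'Social Sciences': 69.75}
print(component_mean)
# {'EquationInference': 64.0, 'ExperimentDesign': 65.5, 'PaperWeakness': 61.25, 'ReviewCritique': 54.25}
```

On these figures, physics is the weakest discipline on average and ReviewCritique is the weakest component in every row.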

Current System Capabilities and Limitations

Evaluation data reveals consistent patterns in AI research assistance strengths and weaknesses:

- Strengths:

  - Rapid literature processing (89% efficiency improvement)

  - Dataset pattern detection (72% accuracy)

  - Citation management (95% time savings)

  - Formatting compliance (98% accuracy)

- Limitations:

  - Contextual understanding in novel fields (41% success rate)

  - Originality in hypothesis generation (28% score)

  - Ethical judgment (53% alignment with human panels)

  - Cross-disciplinary integration (62% coherence)

Integration Approaches for Research Teams

Successful implementation of AAAR 1.0-informed AI tools requires careful planning and workflow adjustments. Organizations reporting the smoothest transitions typically follow these proven practices:

Staged Implementation Model

- Assessment Phase:

  - Mapping research workflows to AAAR components

  - Selecting 2-3 high-value implementation targets

  - Establishing baseline productivity metrics

- Pilot Phase:

  - Choosing limited-scope test projects

  - Training researchers in AI collaboration methods

  - Implementing quality assurance checkpoints

- Scaling Phase:

  - Developing institution-specific adaptation guidelines

  - Creating continuous feedback loops

  - Establishing ethical review procedures

Human-AI Partnership Models

High-performing research teams utilize various collaboration frameworks tailored to their specific needs:

| Collaboration Type | Description | Ideal Applications |
|---|---|---|
| Assistive Mode | AI handles routine tasks with human oversight | Literature review, citation organization |
| Advisory Mode | AI suggests approaches for human consideration | Hypothesis generation, experimental design |
| Generative Mode | AI creates draft content for human refinement | Paper drafting, grant writing |
| Validation Mode | AI checks human work for errors/omissions | Methodology review, statistical analysis |

Ethical Implications of AI in Academic Research

The integration of AI into scholarly work raises significant ethical questions that AAAR 1.0 helps address but doesn’t completely resolve. Primary considerations include:

Authorship and Credit Allocation Challenges

As AI contributions to research expand, institutions must confront difficult questions:

- At what point should AI systems receive authorship credit?

- How should AI-generated content be documented in methods sections?

- What disclosure standards should apply to AI-assisted publications?

Leading publishers’ emerging guidelines suggest:

- AI systems shouldn’t be listed as authors

- Specific AI contributions must be detailed in methods

- Human authors maintain ultimate content responsibility

Bias and Reproducibility Challenges

AAAR 1.0 evaluations highlight several risk areas:

| Risk Factor | Prevalence | Mitigation Approaches |
|---|---|---|
| Training Data Bias | High | Diverse dataset curation, regular audits |
| Reproducibility Issues | Medium | Transparent reporting, code sharing |
| Domain Overapplication | High | Specialized model fine-tuning |
| Ethical Blind Spots | Medium-High | Human oversight systems |

Future Developments in AI Research Assistance

The AAAR framework will continue evolving to address emerging challenges and opportunities in AI-assisted research. Version 2.0 development priorities include:

Technical Advancements in Progress

- Multimodal reasoning (text, data, visual synthesis)

- Real-time collaborative interfaces

- Field-specific adaptation mechanisms

- Explanatory AI for methodological choices

- Cross-disciplinary knowledge bridging

Broadening the Benchmark Scope

Future AAAR versions will incorporate:

- Grant writing and funding acquisition evaluation

- Research ethics deliberation modules

- Collaborative authoring evaluations

- Conference presentation simulations

- Knowledge transfer assessments

These enhancements will better represent the full research lifecycle while addressing gaps in current AI research support capabilities.

Applying AAAR Principles in Research Institutions

Organizations looking to capitalize on AAAR 1.0 insights should consider these implementation strategies:

Infrastructure Needs

- Computational Resources: High-performance GPU clusters for model training

- Data Management: Secure storage solutions for sensitive research data

- Software Stack: Containerized AI tools with version control

- Security Protocols: Federated learning systems for confidential projects

Staff Training Programs

Successful AI integration requires:

| Training Component | Focus Area | Duration |
|---|---|---|
| AI Literacy | Current System Capabilities & Limits | 8 hours |
| Prompt Crafting | Research-Specific Query Development | 12 hours |
| Validation Methods | Output Verification Techniques | 6 hours |
| Ethical Use | Responsible AI Application Principles | 4 hours |

The Changing Face of Research

As AAAR 1.0 adoption grows, its influence on global research ecosystems becomes increasingly visible:

Productivity Gains Across Disciplines

- 23% average reduction in publication timelines

- 17% increase in cross-disciplinary partnerships

- 31% improvement in methodological transparency

- 14% rise in paper acceptance rates

Emerging Research Approaches

AAAR-informed AI enables innovative knowledge creation methods:

- Faster hypothesis generation-testing cycles

- Automated identification of literature gaps

- Real-time collaborator matching systems

- Dynamic research impact prediction tools

Frequently Asked Questions (FAQs)

How does AAAR 1.0 differ from conventional AI benchmarks?

AAAR 1.0 differs fundamentally from standard AI benchmarks in both focus and methodology. Traditional evaluations like SuperGLUE or HELM examine general language understanding across broad domains. AAAR 1.0 specifically targets high-level cognitive tasks essential to academic research. The benchmark simulates real research challenges including experimental design validation, peer review processes, and methodological critique that require specialized knowledge.

Technically, AAAR 1.0 incorporates several innovations absent in other benchmarks. These include context-sensitive evaluation protocols assessing how AI systems handle discipline-specific conventions, adaptive difficulty adjustment based on model performance, and multi-phase task sequences mirroring actual research workflows. The incorporation of real academic papers and peer review data creates uniquely authentic evaluation scenarios unlike synthetic datasets used elsewhere.

What practical benefits does AAAR 1.0 offer individual researchers?

For individual researchers, AAAR 1.0-informed AI tools provide numerous concrete advantages. First, they offer intelligent support conducting comprehensive literature reviews, analyzing thousands of papers in hours rather than weeks while identifying relevant interdisciplinary connections. Second, these systems enhance methodological rigor by recommending appropriate statistical techniques and experimental controls based on current best practices in the researcher’s field.

Additionally, AAAR-powered tools significantly reduce time spent on routine tasks like citation formatting (85% time savings reported by users) and manuscript preparation (76% faster completion). Perhaps most importantly, they serve as critical thinking partners, suggesting alternative data interpretations and identifying potential argument weaknesses before submission. This comprehensive support allows researchers to focus their intellectual energy on conceptual breakthroughs rather than administrative work.

Can AAAR 1.0 help identify suitable AI tools for specific research needs?

Absolutely. The AAAR 1.0 framework provides a structured methodology for matching AI capabilities to research requirements. Through its component-based evaluation system, institutions can create detailed capacity matrices showing which models excel at particular tasks. For instance, a biomedical research team might prioritize models with strong ExperimentDesign performance in life sciences, while theoretical physicists might emphasize EquationInference capabilities.

Implementation protocols based on AAAR results typically involve:

- Task requirement analysis using AAAR’s research workflow framework

- Capability matching through model evaluation data

- Domain-specific customization processes

- Validation using AAAR test suites

This systematic approach prevents using general-purpose AI tools for specialized research tasks where they may underperform or introduce errors.
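The capability-matching step can be sketched as a weighted score over the four AAAR components. Everything in the example is hypothetical: the model names, their scores, and the weights are placeholders chosen for illustration, not benchmark results, and the article does not prescribe this formula.

```python
def weighted_score(component_scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of a model's component scores, using a team's priorities."""
    total_weight = sum(weights.values())
    return sum(component_scores[c] * w for c, w in weights.items()) / total_weight


def best_model(models: dict[str, dict[str, float]], weights: dict[str, float]) -> str:
    """Name of the model with the highest weighted score."""
    return max(models, key=lambda name: weighted_score(models[name], weights))


# Placeholder scores in percent for two hypothetical models.
models = {
    "model_a": {"ExperimentDesign": 60, "PaperWeakness": 70},
    "model_b": {"ExperimentDesign": 70, "PaperWeakness": 50},
}

# A hypothetical biomedical team that cares most about experiment design.
biomed_weights = {"ExperimentDesign": 3, "PaperWeakness": 2}

print(weighted_score(models["model_a"], biomed_weights))  # 64.0
print(weighted_score(models["model_b"], biomed_weights))  # 62.0
print(best_model(models, biomed_weights))                 # model_a
```

Here model_b is stronger on the team’s top priority yet loses overall, which shows why the weights should be agreed on before the comparison rather than adjusted afterwards.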

What major limitations does AAAR 1.0 reveal about current research AI?

AAAR 1.0 evaluations show several persistent limitations in current research AI. The most significant involves contextual understanding in novel research areas – models struggle with innovative methodologies or interdisciplinary combinations poorly represented in training data. This manifests as inappropriate application of standard techniques to unconventional scenarios (48% error rate in groundbreaking research contexts).

Other major limitations include:

- Difficulty grasping nuanced ethical considerations (62% accuracy vs human panels)

- Inability to recognize pioneering significance (34% agreement with expert assessment)

- Over-reliance on majority positions in contentious fields (78% conformity rate)

- Poor handling of ambiguous or conflicting evidence (57% error rate)

These limitations underscore that while AI provides substantial research support, human oversight remains essential, especially in groundbreaking studies and ethically sensitive areas.

How often is AAAR updated to reflect AI progress?

The AAAR framework follows a structured update cycle balancing stability with responsiveness to rapid AI advances. Major version updates (like AAAR 2.0) occur every two years, incorporating:

- New research task categories reflecting academic practice changes

- Expanded domain coverage across emerging fields

- Enhanced evaluation metrics addressing previous limitations

- Integration of novel AI capabilities like multimodal reasoning

Quarterly incremental updates between major versions adjust difficulty levels and add specialized test cases while maintaining benchmark consistency. This balance ensures AAAR remains both current and longitudinally comparable – crucial for tracking AI progress in research capabilities.

The development consortium also maintains rapid response protocols for breakthrough innovations. For example, the emergence of transformer models with enhanced reasoning triggered a special update within three months to properly assess these new architectures.
