AI Code Verifier Performance Metrics: A Critical 2026 Guide for Developers

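This guide covers the metrics used to evaluate AI code verifiers: accuracy, precision and recall, computational efficiency, scalability, and the requirements specific to regulated industries.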

The Evolution of AI Code Verification: From Theoretical Concept to Industry Standard

AI code verification grew out of decades of academic research in formal methods and static analysis. Work from the late 1960s and 1970s introduced the fundamental concepts. Predicate logic, Hoare logic, and other manual verification techniques were foundational, although they scaled poorly to large programs.

Automated static analysis tools followed in the 1990s, spotting common programming errors through rule-based systems. They paved the way for machine learning approaches in the 2010s, when large open-source repositories such as those hosted on GitHub served as training data and pattern detection moved beyond what hand-written rules could capture.

The Machine Learning Revolution in Code Verification

Advances in deep learning for natural language processing carried over to code verification because source code shares structural similarities with natural language. Transformer architectures improved semantic parsing and led to models like OpenAI’s Codex, which powers GitHub Copilot. Cross-file context comprehension became feasible, making it possible to predict vulnerabilities before runtime.

Modern verification now blends sophisticated techniques:

- Static scanning for dead code detection

- Dynamic behavior monitoring during execution

- Symbolic path exploration to anticipate vulnerabilities

- Neural networks that learn defect patterns from historical data

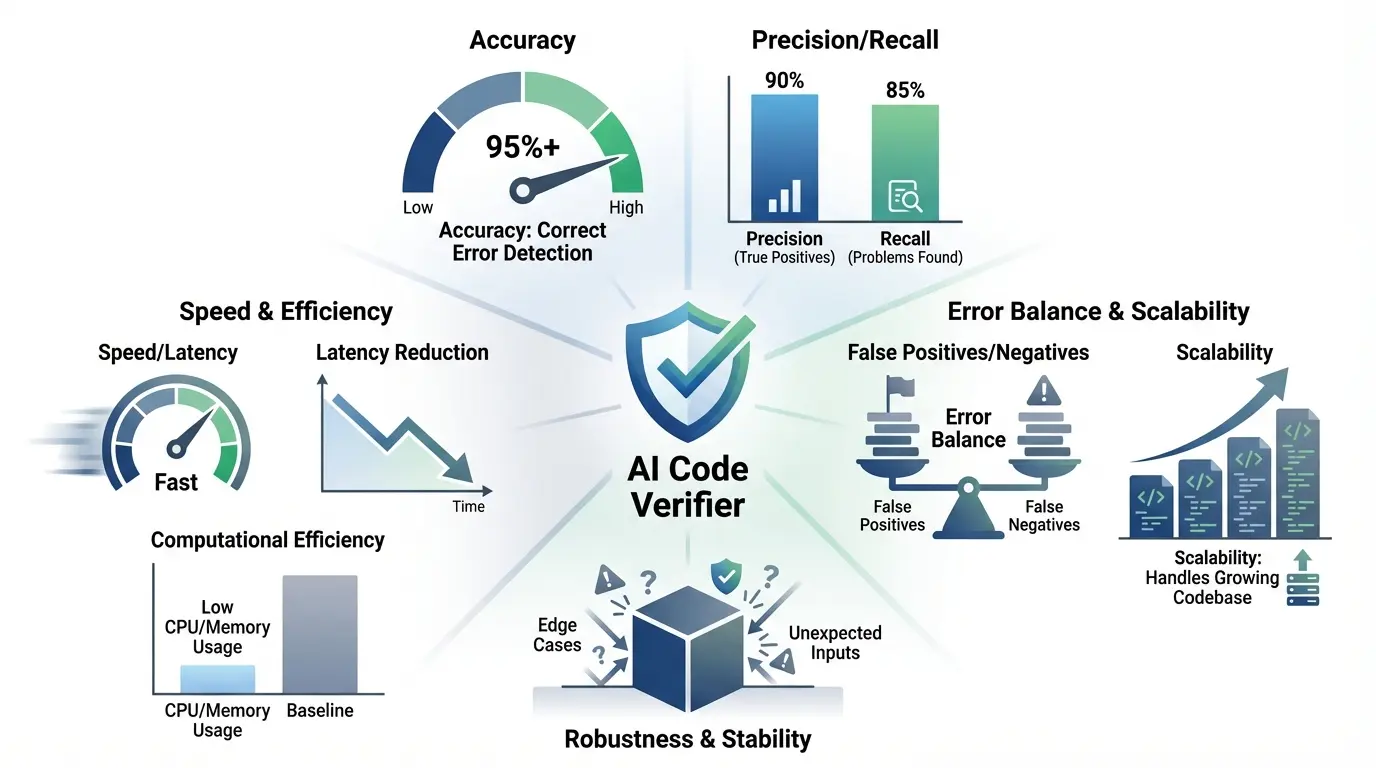

Core Performance Metrics Framework

Evaluating an AI code verifier means measuring it along several dimensions that together capture efficiency and utility. Groups like IEEE and ISO have developed standardized metric systems that teams apply across the phases of the software development life cycle (SDLC).

Accuracy: The Foundation of Trust

Accuracy measures a verifier’s ability to flag real issues without raising false alarms. It encompasses several subdimensions beyond simple binary classification:

| Submetric | Definition | Industry Benchmark |
|---|---|---|
| Syntactic Accuracy | Identifying syntax errors and language violations | 98-99.9% |
| Semantic Accuracy | Detecting logical flaws and runtime risks | 85-93% |
| Contextual Accuracy | Understanding cross-file dependencies and architecture | 78-88% |

A Microsoft Azure case study showed semantic accuracy rising from 82% to 91% with graph neural networks built on control flow analysis, along with a significant drop in production incidents.

Precision and Recall Tradeoffs

A verifier’s practical value depends on how it balances two metrics:

Precision = True Positives / (True Positives + False Positives)

Recall = True Positives / (True Positives + False Negatives)

Security-first domains like banking prioritize recall to minimize undetected risks, tolerating higher false positives. For instance, JPMorgan Chase achieved 94% recall via combined verification techniques.
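The two formulas above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `ConfusionCounts` type and the sample counts are hypothetical. The F-beta score is not part of the original text. It is a standard way to fold precision and recall into one number, and a beta above 1 weights recall more heavily, which matches the recall-first posture described for banking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts from comparing verifier findings against a labeled benchmark (hypothetical type)."""
    true_positives: int
    false_positives: int
    false_negatives: int


def precision(c: ConfusionCounts) -> float:
    """Share of flagged issues that were real."""
    flagged = c.true_positives + c.false_positives
    return c.true_positives / flagged if flagged else 0.0


def recall(c: ConfusionCounts) -> float:
    """Share of real issues that were flagged."""
    actual = c.true_positives + c.false_negatives
    return c.true_positives / actual if actual else 0.0


def f_beta(c: ConfusionCounts, beta: float = 1.0) -> float:
    """Weighted harmonic mean of precision and recall; beta > 1 favors recall."""
    p, r = precision(c), recall(c)
    denom = beta**2 * p + r
    return (1 + beta**2) * p * r / denom if denom else 0.0


# Made-up counts for illustration only.
counts = ConfusionCounts(true_positives=90, false_positives=30, false_negatives=10)
print(f"precision={precision(counts):.2f}")
print(f"recall={recall(counts):.2f}")
print(f"F2={f_beta(counts, beta=2.0):.3f}")
```

With these sample counts the verifier catches 90 of 100 real issues, but one in four of its alerts is a false alarm. That is the kind of trade a recall-first team might accept.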

Advanced Performance Dimensions

Computational Efficiency Metrics

Resource consumption becomes critical as codebases grow. Key measures include:

- Memory Footprint: Average RAM consumed during scans

- CPU Utilization: How processing overhead scales with codebase size

- Energy Impact: Crucial for mobile/embedded environments

- Parallel Scaling: Speedup gained by distributing work across workers

Google’s proprietary system verifies one million lines of C++ in 8.3 minutes using 23 GB of RAM, while open-source alternatives need 47 minutes or more.
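As a rough sketch of how a team might collect these measures for its own tool, the snippet below times one scan and records peak memory using Python's standard `tracemalloc` module. It makes several assumptions. `toy_scan` is a hypothetical stand-in for a real verifier. `tracemalloc` only sees memory allocated by Python itself, so a verifier with native components would need an OS-level measurement instead. The timings passed to `parallel_efficiency` are invented for illustration.

```python
import time
import tracemalloc
from typing import Callable


def profile_scan(scan: Callable[[list[str]], int], lines: list[str]) -> dict[str, float]:
    """Run `scan` once and report wall time, throughput, and peak Python heap use."""
    tracemalloc.start()
    started = time.perf_counter()
    findings = scan(lines)
    elapsed = time.perf_counter() - started
    _, peak_bytes = tracemalloc.get_traced_memory()  # returns (current, peak) in bytes
    tracemalloc.stop()
    return {
        "findings": float(findings),
        "seconds": elapsed,
        "lines_per_second": len(lines) / elapsed if elapsed > 0 else float("inf"),
        "peak_mib": peak_bytes / (1024 * 1024),
    }


def parallel_efficiency(serial_seconds: float, parallel_seconds: float, workers: int) -> float:
    """Speedup divided by worker count; 1.0 would mean perfectly linear scaling."""
    return (serial_seconds / parallel_seconds) / workers


def toy_scan(lines: list[str]) -> int:
    """Hypothetical stand-in for a verifier: counts lines that call eval()."""
    return sum(1 for line in lines if "eval(" in line)


sample = ["x = 1", "y = eval(user_input)", "print(x)"] * 10_000
report = profile_scan(toy_scan, sample)
print(report)

# Invented timings: 40 s on one worker, 6.25 s on eight workers.
print(parallel_efficiency(serial_seconds=40.0, parallel_seconds=6.25, workers=8))
```

Tracking throughput and peak memory per release, rather than once, makes regressions visible before they reach large repositories.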

Scalability and Adaptive Performance

Enterprise-grade systems must scale across three key axes:

- Horizontal Scaling: Distributed compute for larger codebases

- Vertical Scaling: Managing complex, interconnected code

- Cross-Language Uniformity: Multi-language proficiency

Amazon CodeGuru exemplifies linear scalability, handling 500 million lines of Java while retaining 92% accuracy through partitioned analysis.

Industry-Specific Performance Requirements

Aerospace and Medical Device Standards

Highly regulated industries demand verifiers that comply with standards such as DO-178C (airborne software) and IEC 62304 (medical device software). Their top priorities include:

- Traceability matrix completion

- Formal proof requirements

- Tool qualification paperwork

NASA JPL achieves 100% MC/DC (modified condition/decision coverage) using custom theorem provers integrated into its AI verification pipeline for space systems.

Fintech and Blockchain Verification

Smart contracts introduce unique verification challenges:

| Metric | Ethereum Standard | Solana Benchmark |
|---|---|---|
| Re-entrancy Detection | 99.6% | 98.2% |
| Gas Optimization | 94% | 89% |
| Front-Running Prevention | 88% | 91% |

ConsenSys Diligence reduced smart contract vulnerabilities by 72% across more than 400 Ethereum projects using ML models trained on adversarial patterns.

Tool Comparison and Selection Framework

Picking the right verifier means weighing a dozen or more dimensions together. Three of the most important are:

- Coverage Depth: Compare vulnerability coverage against the MITRE CWE Top 25 and the OWASP Top 10. Enterprise tools like Checkmarx reach 98% CWE coverage; open-source ones typically reach 60-75%.

- Integration Complexity: Evaluate setup time, IDE support, and API robustness. GitHub Advanced Security integrates with Jenkins in 4 minutes on average, versus 25 minutes or more for rivals.

- Customization Capabilities: How easily can teams create context-aware rules? Synopsys Coverity allows natural language rule creation via NLP integration.

| Feature | Enterprise Solution | Open Source Alternative |
|---|---|---|
| False Positive Filtering | ML-based adaptive filtering | Manual whitelisting |
| Compliance Reporting | Automated audit trails | Custom scripting required |
| Real-time Latency | 50ms average response | 300-500ms delays |
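The coverage depth comparison described above reduces to a set calculation: which weakness classes in a reference list does a tool have rules for? The sketch below assumes a tool's rule catalog can be exported as a set of CWE identifiers. The five-entry reference set is an illustrative subset chosen for brevity, not the actual CWE Top 25, and `tool_rules` is hypothetical.

```python
def coverage(detected: set[str], reference: set[str]) -> float:
    """Fraction of the reference weakness classes that the tool can detect."""
    return len(detected & reference) / len(reference) if reference else 0.0


def missing(detected: set[str], reference: set[str]) -> list[str]:
    """Reference weakness classes with no matching rule, sorted for stable reports."""
    return sorted(reference - detected)


# Illustrative subset only; substitute the current published list in practice.
reference_subset = {"CWE-79", "CWE-89", "CWE-125", "CWE-416", "CWE-787"}

# Hypothetical rule catalog exported from a tool under evaluation.
tool_rules = {"CWE-22", "CWE-79", "CWE-89", "CWE-416"}

print(f"coverage: {coverage(tool_rules, reference_subset):.0%}")
print("gaps:", missing(tool_rules, reference_subset))
```

The list of gaps is often more useful than the headline percentage, because it shows whether the uncovered classes matter for the languages a team actually ships.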

Optimization Strategies for Peak Performance

Data-Centric Improvement Framework

Model quality depends on the quality of the training data. Robust pipelines incorporate:

- Diverse Sourcing: 60% production code, 25% open-source, 15% synthetic

- Dynamic Balancing: Automatic rebalancing as the threat landscape shifts

- Adversarial Inputs: Injected examples of sophisticated attack patterns

IBM’s CodeNet offers 14M cross-language samples with 250K validated vulnerabilities – an essential training asset.
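To make the 60/25/15 sourcing mix concrete, here is a small sketch that turns the percentages into per-source sample counts for a training set of a given size. The function name and source labels are hypothetical. It uses integer arithmetic with largest-remainder rounding so the quotas always add up to the requested total.

```python
def source_quotas(total: int, mix_percent: dict[str, int]) -> dict[str, int]:
    """Split `total` samples across sources according to integer percentages."""
    if sum(mix_percent.values()) != 100:
        raise ValueError("mix percentages must sum to 100")
    # Floor each share first, then hand leftover samples to the largest remainders.
    quotas = {name: total * pct // 100 for name, pct in mix_percent.items()}
    leftover = total - sum(quotas.values())
    by_remainder = sorted(
        mix_percent,
        key=lambda name: (total * mix_percent[name]) % 100,
        reverse=True,
    )
    for name in by_remainder[:leftover]:
        quotas[name] += 1
    return quotas


# Percentages taken from the list above; the labels are illustrative.
mix = {"production": 60, "open_source": 25, "synthetic": 15}
print(source_quotas(1000, mix))
print(source_quotas(10, mix))
```

Dynamic balancing, in this framing, would amount to recomputing `mix` on a schedule and resampling. How the new percentages are chosen is outside the scope of this sketch.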

Architecture Optimization Techniques

Hybrid approaches tend to yield the best results:

- Multi-Model Ensembles: Fusing static/dynamic analysis with ML

- Incremental Verification: Only rescanning modified code sections

- Edge Caching: Caching verification results for recurring patterns locally

Uber cut verification time by 76% by implementing function-level caching across its microservices.
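One way to picture incremental verification with function-level caching is a content-addressed cache: hash each function's source text, and rerun the verifier only when the hash has not been seen before. The sketch below illustrates that idea under simplifying assumptions and does not describe any particular company's system. `FunctionResultCache` and `toy_verify` are hypothetical names, and a real pipeline would also need to invalidate entries when a function's dependencies or the verifier's own rules change.

```python
import hashlib
from typing import Callable


class FunctionResultCache:
    """Caches verifier findings keyed by a hash of the function's source text."""

    def __init__(self, verify: Callable[[str], list[str]]) -> None:
        self._verify = verify
        self._results: dict[str, list[str]] = {}
        self.hits = 0
        self.misses = 0

    def check(self, source: str) -> list[str]:
        key = hashlib.sha256(source.encode("utf-8")).hexdigest()
        if key in self._results:
            self.hits += 1  # unchanged code: reuse the earlier findings
        else:
            self.misses += 1  # new or modified code: run the verifier
            self._results[key] = self._verify(source)
        return self._results[key]


def toy_verify(source: str) -> list[str]:
    """Hypothetical stand-in for an expensive verification pass."""
    return ["use of eval()"] if "eval(" in source else []


cache = FunctionResultCache(toy_verify)
functions = [
    "def a(x):\n    return eval(x)\n",
    "def b(x):\n    return x + 1\n",
    "def a(x):\n    return eval(x)\n",  # identical to the first, so it is served from cache
]
results = [cache.check(src) for src in functions]
print(results)
print(f"hits={cache.hits} misses={cache.misses}")
```

The hit rate is itself a useful metric: it estimates how much verification time caching can save on a typical commit.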

The Future Landscape of Verification Metrics

Emerging Metric Categories

New measurement paradigms are gaining traction:

- Explainability Scores: Clarity of recommendations to developers

- Remediation Impact: Code quality improvement resulting from suggested fixes

- Architecture Risk Prediction: Ability to foresee the impact of a change

MIT CSAIL’s evaluation framework ranked Snyk’s explainability 82/100 versus SonarQube’s 64/100 on feedback clarity.

Quantum Computing Implications

Quantum languages like Q# demand new metrics:

- Superposition state validation

- Entanglement pathway analysis

- Decoherence risk quantification

Rigetti’s quantum toolkit introduced metrics like Qubit Fidelity Score (QFS), an early step toward quality assurance for quantum software.

Case Study Compendium

Enterprise Implementation: Boeing’s Aviation Safety System

Boeing’s Dreamliner verification pipeline integrates:

- Polyspace static analysis

- Simulink timing validation

- Custom aerospace ML detectors

This multi-layer approach achieved 99.999% reliability certification while making verification 40% faster than on legacy systems.

Startup Success Story: Fintech Security Validation

Financial platform Plaid verifies 100K+ daily commits using:

- Real-time SAST scanning

- Behavioral anomaly detection

- Automated compliance mappings

They maintained zero critical vulnerabilities for 34 months while reducing PCI compliance costs by 65% through metric-driven approaches.

Frequently Asked Questions (FAQs)

How do AI code verifiers handle novel attack vectors unseen in training data?

Modern defenses include:

- Transfer Learning: Applying patterns learned in one context to new ones

- Meta-Learning: Adapting quickly from only a few examples

- Human Escalation: Expert analysis for critical anomalies

What are the tradeoffs between verification accuracy and developer productivity?

| Accuracy Level | Productivity Impact | Mitigation Strategies |
|---|---|---|
| 95%+ | High false positives interrupt workflow | Contextual filtering, ML triaging |
| 85-94% | Moderate review overhead | Automated fix suggestions |
| <85% | Minimal disruption | High-risk scanning only |

Google research indicates that 91-93% accuracy, combined with adaptive noise reduction, gives the best balance between productivity and security.

How frequently should verification models be retrained?

Cycles depend on:

- Code change velocity (daily 1-5% changes suggest weekly updates)

- Threat intelligence feeds

- Metric drift alerts

Netflix’s continuous models refresh every six hours based on:

- New commit patterns

- Security bulletins

- Postmortem learnings
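A metric drift alert can be as simple as comparing recent measurements against the value recorded when the model was last trained. The sketch below is one minimal way to express that rule. The function name, the 0.03 tolerance, and the sample recall values are all assumptions made for illustration, not recommended settings.

```python
from statistics import mean


def drift_alert(baseline: float, recent: list[float], tolerance: float = 0.03) -> bool:
    """Return True when the recent average has dropped below baseline by more than tolerance."""
    if not recent:
        return False  # no new measurements, so nothing to compare
    return baseline - mean(recent) > tolerance


# Hypothetical recall measured on a held-out benchmark after each weekly scan.
baseline_recall = 0.92
stable_weeks = [0.91, 0.92]
degrading_weeks = [0.90, 0.88, 0.86]

print(drift_alert(baseline_recall, stable_weeks))
print(drift_alert(baseline_recall, degrading_weeks))
```

A rule like this catches gradual decay between scheduled retraining cycles. Threat intelligence feeds would still need their own trigger, since a new attack class may not show up in benchmark recall at all.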

Can small development teams achieve enterprise-grade verification metrics?

Yes, through:

- Cloud-based pay-per-use services

- Curated OSS stacks like OSS-Fuzz or Semgrep

- Focusing on high-leverage metrics (precision vs coverage)

What metric framework best balances security and development velocity?

The Secure DevOps Stack recommends:

- Vulnerability Detection Rate (VDR) > 90%

- Mean Time to Remediation < 48 hours

- Verification Latency < 5 minutes

- False Positive Rate < 15%

Azure DevOps teams accelerated deployments by 22% while improving security through automated verification gates.
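The four thresholds listed above can be expressed as a simple automated gate. This is a minimal sketch: the `VerificationMetrics` type and its field names are hypothetical, and the sample values are invented. Only the threshold numbers come from the list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerificationMetrics:
    """Pipeline measurements for one reporting period (hypothetical structure)."""
    detection_rate: float              # fraction of known vulnerabilities detected
    remediation_hours: float           # mean time to remediation
    verification_latency_minutes: float
    false_positive_rate: float         # fraction of alerts that were false alarms


def gate_failures(m: VerificationMetrics) -> list[str]:
    """Return a message for every threshold the metrics fail to meet."""
    failures = []
    if m.detection_rate <= 0.90:
        failures.append("vulnerability detection rate must exceed 90%")
    if m.remediation_hours >= 48:
        failures.append("mean time to remediation must be under 48 hours")
    if m.verification_latency_minutes >= 5:
        failures.append("verification latency must be under 5 minutes")
    if m.false_positive_rate >= 0.15:
        failures.append("false positive rate must be under 15%")
    return failures


# Invented sample values.
healthy = VerificationMetrics(0.94, 30.0, 3.5, 0.10)
struggling = VerificationMetrics(0.88, 30.0, 7.0, 0.10)

print(gate_failures(healthy))
print(gate_failures(struggling))
```

Returning every failed threshold, rather than stopping at the first, gives a team the full picture in one pipeline run.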
