AI Governance Maturity Model Medium: A Practical 2026 Guide

Learn how the Medium level of an AI governance maturity model can guide your organization from basic principles to advanced, ethical AI practices. Start your journey to responsible AI.

Getting to Grips with AI Governance Maturity Frameworks

The Bedrock of Responsible AI Stewardship

Think of AI governance maturity frameworks as your organization’s GPS for navigating AI implementation. These structured guides help companies systematically manage risks, ethical dilemmas, and regulatory requirements as AI adoption expands. The approach borrows from established capability maturity models in software development and extends them to cover AI-specific challenges.

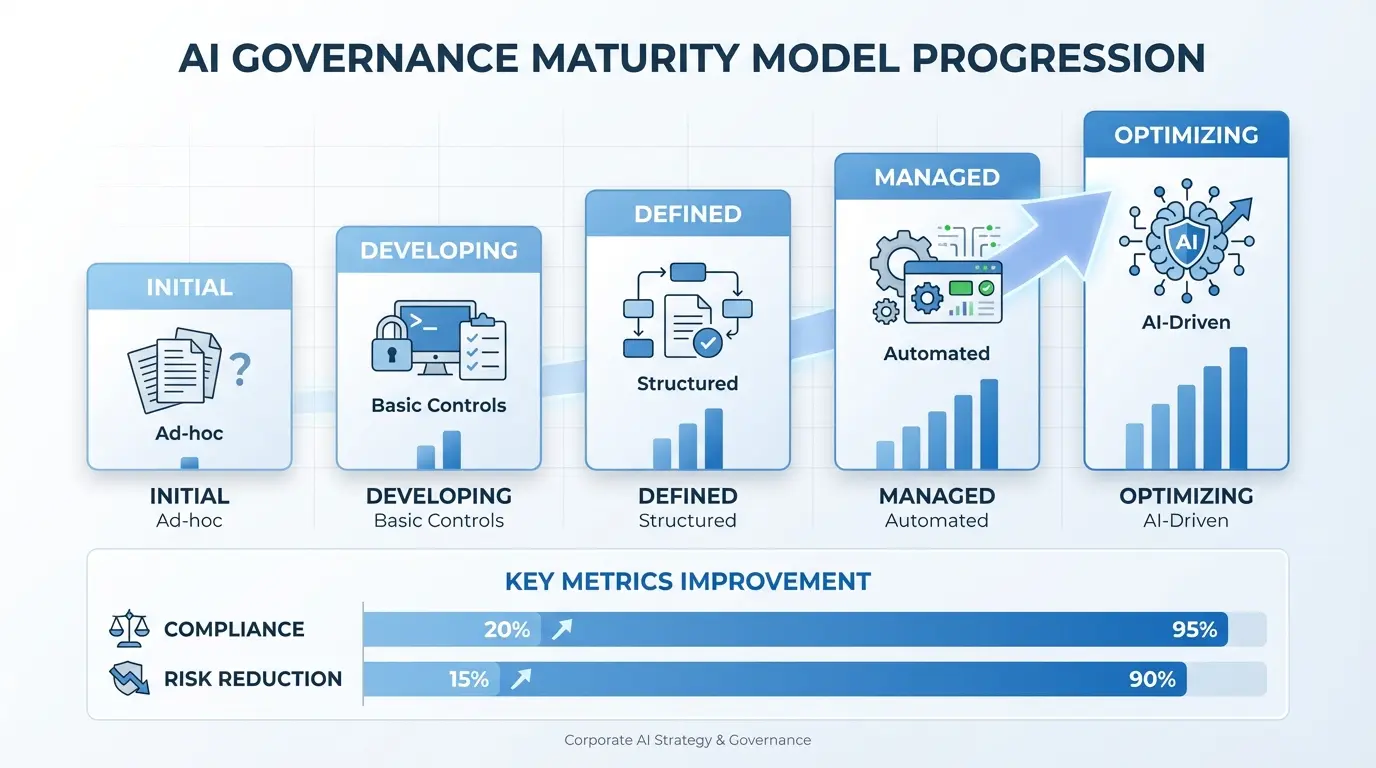

At its heart, an AI governance maturity model creates clear yardsticks across multiple aspects of your AI operations. We’re talking about policy creation, risk handling, operational safeguards, monitoring capabilities, and cultivating the right organizational mindset. The Medium maturity level acts as a crucial bridge where scattered practices evolve into standardized, repeatable processes.

Industry data from respected research firms shows companies reaching Medium maturity cut AI-related mishaps by nearly half compared to organizations still at the Basic stage. At this level, businesses can scale their AI applications while staying compliant with regulations like the EU AI Act and industry-specific rules.

| Maturity Stage | Policy Development | Risk Handling | Tech Implementation |
|---|---|---|---|
| Basic (Ad Hoc) | No formal policies | Firefighting issues | Manual record-keeping |
| Medium | Standard templates | Proactive checks | Semi-automated tools |
| Advanced | Dynamic policy engine | Predictive analytics | Full automation |

The Evolution Journey of Governance Frameworks

AI governance models have matured alongside three distinct waves of artificial intelligence adoption:

- Pioneering Days (2010-2015): Early AI work focused mainly on technological capability rather than governance. Research labs and tech trailblazers set up initial ethical guardrails.

- Corporate Embrace (2016-2020): Widespread business use exposed governance gaps, pushing standards bodies like ISO and IEEE to create initial frameworks.

- Rulemaking Era (2021-Present): Major regulations like the EU AI Act and U.S. executive orders forced organizations to systematize their governance approaches.

Must-Have Pieces of AI Governance Frameworks

Any robust AI governance maturity model needs these seven building blocks:

- Clear Accountability: Defined ownership of AI systems throughout their lifespan

- Risk Grading System: Tiered impact-based approach

- Documentation Standards: Consistent model cards and dataset tracking

- Monitoring Systems: Ongoing performance checks

- Human Oversight: Intervention points for critical decisions

- Compliance Checks: Audit trails matching regulatory needs

- Ethical Safeguards: Bias spotting and correction methods

The Middle Ground: Making AI Governance Operational

Spotting a Medium-Maturity Organization

Companies reaching medium maturity have moved governance from theory to practice. Three key shifts happen here:

1. Governance gets baked into development rather than tacked on later

2. Cross-team committees replace siloed decision-making

3. Numbers-based metrics replace subjective assessments

A recent industry study found that 7 in 10 medium-maturity companies saw ROI on AI investments in just 18 months, compared to fewer than 1 in 4 at lower levels—proving good governance pays dividends.

Team Structures That Work at Medium Maturity

Getting organizational design right is crucial. Three models deliver results:

| Structure Type | Best Fit | Pluses | Minuses |
|---|---|---|---|
| Central Board | Heavily regulated sectors | Uniform standards | Potential delays |
| Distributed Model | Global firms | Local adaptation | Coordination headaches |
| Expert Hub | Tech-first companies | Knowledge sharing | Resource heavy |

Healthcare giant Mayo Clinic offers a real-world success story. Their blended approach—central policy-setting from legal teams plus local clinical review boards—cut approval times for diagnostic AI tools by 40% without compromising safety.

Scaling Processes Effectively

Transitioning to medium maturity means standardizing across five key areas:

- Risk Evaluation: Implementing three-tier impact classification

- Model Building: Required docs including data lineage tracking

- Deployment Approvals: Stage-gated reviews matching risk levels

- Performance Tracking: Automated alerts with human verification

- Issue Response: Clear escalation paths and fix protocols
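To make the risk evaluation and deployment approval items concrete, here is a minimal Python sketch of a three-tier impact classification that feeds stage-gated reviews. The two screening questions, the tier names, and the gate names are illustrative assumptions, not part of any standard; a real scheme would come from your own risk classification system.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical review gates per tier; real gates would be set by the governance charter.
REVIEW_GATES = {
    RiskTier.LOW: ["peer_review"],
    RiskTier.MEDIUM: ["peer_review", "compliance_review"],
    RiskTier.HIGH: ["peer_review", "compliance_review", "governance_committee"],
}


@dataclass
class UseCase:
    name: str
    affects_individuals: bool  # e.g. credit or diagnostic decisions
    fully_automated: bool      # no human checks the output before it takes effect


def classify(use_case: UseCase) -> RiskTier:
    """Assign a tier from two illustrative impact questions."""
    if use_case.affects_individuals and use_case.fully_automated:
        return RiskTier.HIGH
    if use_case.affects_individuals or use_case.fully_automated:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def required_gates(use_case: UseCase) -> list[str]:
    """Return the review stages a use case must pass before deployment."""
    return REVIEW_GATES[classify(use_case)]


if __name__ == "__main__":
    chatbot = UseCase("internal FAQ bot", affects_individuals=False, fully_automated=True)
    print(classify(chatbot).name, required_gates(chatbot))
```

Keeping the tier-to-gate mapping in one table makes it easy to tighten or relax review rigor without touching the classification logic.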

Financial services leaders show how it’s done. One major bank created standard model templates with 32 required fields for customer-facing AI, slashing audit prep time dramatically.
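A documentation template like this can be enforced with a simple completeness check. The sketch below uses five hypothetical field names as stand-ins (the bank's actual 32 fields are not listed in this guide) and reports which fields are missing from a model record.

```python
# Hypothetical required fields; a real template would define its own list.
REQUIRED_FIELDS = (
    "model_name",
    "owner",
    "risk_tier",
    "training_data_source",
    "intended_use",
)


def missing_fields(model_card: dict) -> list[str]:
    """Return required fields that are absent, None, or blank in a model card."""
    missing = []
    for field in REQUIRED_FIELDS:
        value = model_card.get(field)
        if value is None or str(value).strip() == "":
            missing.append(field)
    return missing


def completion_rate(model_cards: list[dict]) -> float:
    """Fraction of model cards with every required field filled in."""
    if not model_cards:
        return 0.0
    complete = sum(1 for card in model_cards if not missing_fields(card))
    return complete / len(model_cards)


if __name__ == "__main__":
    card = {"model_name": "churn-v2", "owner": "", "risk_tier": "medium"}
    print(missing_fields(card))
```

Running a check like this before each review means auditors see a list of gaps instead of discovering them by hand.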

Your Step-by-Step Journey to Medium Maturity

Phase 1: Laying the Groundwork (First 3 Months)

The setup phase focuses on three essentials:

A. Current Situation Analysis: Comprehensive audit of existing AI systems across departments

B. Policy Framework: Core documents including:

- AI Governance Charter

- Risk Classification System

- Model Documentation Standards

- Ethical Usage Principles

C. Governance Structure: Creating a cross-functional committee with reps from:

- Legal/Compliance

- Data Science

- Product Leadership

- Cybersecurity

- Business Operations

Phase 2: Rolling Out Processes (Months 4-9)

With structures in place, bring four key processes to life:

| Process | Owner | Tools Needed | Success Measures |
|---|---|---|---|
| Risk Classification | Compliance Lead | Impact Assessment Framework | 100% New Models Classified |
| Model Documentation | Data Science Lead | Standard Templates | 85% Completion Rate |
| Pre-Launch Review | Governance Committee | Workflow Automation Tools | <60 Day Cycle Time |
| Post-Launch Monitoring | Operations Team | Performance Dashboard | <5% Metric Drift/Month |
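The post-launch monitoring target can be checked with a relative-drift calculation. The sketch below assumes "drift" means the relative change of a tracked metric from its baseline over the month, which is one reasonable reading of the 5% target rather than a fixed definition. Flagged metrics are returned for a person to verify, not acted on automatically.

```python
def relative_drift(baseline: float, current: float) -> float:
    """Relative change of a metric from its baseline, as a fraction."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute relative drift")
    return abs(current - baseline) / abs(baseline)


def drift_alerts(
    baselines: dict[str, float],
    currents: dict[str, float],
    threshold: float = 0.05,  # the <5% per month target from the table
) -> dict[str, float]:
    """Return metrics whose drift is at or above the threshold, with the drift value."""
    alerts = {}
    for name, baseline in baselines.items():
        if name not in currents:
            continue  # nothing measured this month for this metric
        drift = relative_drift(baseline, currents[name])
        if drift >= threshold:
            alerts[name] = drift
    return alerts


if __name__ == "__main__":
    baselines = {"accuracy": 0.90, "approval_rate": 0.40}
    this_month = {"accuracy": 0.89, "approval_rate": 0.46}
    for metric, drift in drift_alerts(baselines, this_month).items():
        print(f"{metric}: {drift:.1%} drift, needs human review")
```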

Phase 3: Optimization Mode (Months 10-18)

Now refine processes through automation and analytics:

- Automate Governance Steps: Bake governance checks into development pipelines

- Add Predictive Power: Use ML to forecast compliance risks and model trouble spots

- Create Feedback Systems: Formal processes for learning from audits and incidents

- Benchmark Progress: Regular comparisons against industry standards
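As one illustration of automating governance steps, a governance gate can run as an ordinary pipeline step and block a release when required records are missing. The manifest keys below (`risk_tier`, `documentation_complete`, `committee_approved`) are hypothetical names chosen for this sketch, and the rules are examples only.

```python
import sys

VALID_TIERS = {"low", "medium", "high"}


def governance_violations(manifest: dict) -> list[str]:
    """Return human-readable reasons a model release should be blocked."""
    violations = []
    tier = manifest.get("risk_tier")
    if tier not in VALID_TIERS:
        violations.append("risk tier not classified")
    if not manifest.get("documentation_complete", False):
        violations.append("model documentation incomplete")
    if tier == "high" and not manifest.get("committee_approved", False):
        violations.append("high-risk model lacks committee approval")
    return violations


def run_gate(manifest: dict) -> int:
    """Print violations to stderr and return a process exit code (0 = pass)."""
    violations = governance_violations(manifest)
    for violation in violations:
        print(f"GOVERNANCE CHECK FAILED: {violation}", file=sys.stderr)
    return 1 if violations else 0
```

A pipeline step could load the manifest from the repository and call `sys.exit(run_gate(manifest))`, so a non-zero exit code stops the deployment the same way a failing unit test would.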

IBM’s experience highlights this well. Through focused 120-day improvement sprints, they achieved medium maturity across all units in under 15 months without slowing AI development.

Tech That Powers Medium Maturity

Essential Tool Stack

Reaching medium maturity requires smart tech investments:

- Model Registries: Central hubs tracking versions, docs, and approvals

- Automated Monitoring: Real-time alerts for data drift and performance dips

- Governance Platforms: Systems managing reviews and audits

- Explainability Tools: Techniques making AI decisions understandable

Picking the Right Vendors

Selecting governance tech requires weighing seven factors:

| Selection Criteria | Importance | Evaluation Method |
|---|---|---|
| Integration Capabilities | 25% | API Documentation Review |
| Compliance Coverage | 20% | Regulatory Mapping Exercise |
| Customization Flexibility | 15% | Hands-on Testing |
| Total Cost | 15% | 5-Year Cost Projection |
| Vendor Health | 10% | Financial Stability Check |
| User Experience | 10% | User Testing with Teams |
| Scalability | 5% | Architecture Review |

Resources like the NIST AI Risk Management Framework (AI RMF) provide valuable starting points before evaluating commercial solutions.
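The importance percentages in the selection table add up to 100%, so they can be used directly as weights in a single vendor score. The sketch below assumes each criterion is rated on a 0 to 10 scale by the evaluation team; the scale, the dictionary keys, and the sample ratings are assumptions for illustration.

```python
# Weights taken from the selection criteria table (they sum to 1.0).
WEIGHTS = {
    "integration": 0.25,
    "compliance": 0.20,
    "customization": 0.15,
    "total_cost": 0.15,
    "vendor_health": 0.10,
    "user_experience": 0.10,
    "scalability": 0.05,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (assumed 0-10) into one weighted score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


if __name__ == "__main__":
    # Made-up ratings for a hypothetical vendor.
    vendor_a = {
        "integration": 8,
        "compliance": 6,
        "customization": 5,
        "total_cost": 5,
        "vendor_health": 5,
        "user_experience": 5,
        "scalability": 5,
    }
    print(round(weighted_score(vendor_a), 2))
```

Because integration and compliance carry 45% of the weight between them, a vendor that is weak on both is hard to rescue with strong scores elsewhere.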

Industry-Specific Governance Challenges

Financial Services Focus

Banks face unique governance hurdles requiring custom approaches:

- Model Validation: Align with regulatory expectations for model risk

- Decision Transparency: Need to explain credit decisions clearly

- Monitoring Frequency: Daily checks for customer-facing systems

Healthcare Governance Hotspots

Medical AI demands stringent controls:

- Clinical Validation: Rigorous testing matching clinical trial standards

- Patient Consent: Clear explanations for AI-assisted diagnostics

- Adverse Reporting: Integration with regulatory monitoring systems

The Mayo Clinic framework stands out, requiring clear documentation linking AI decisions to clinical evidence and training data details.

Tracking Your Progress

Key Metrics That Matter

Measure success through quantitative and qualitative indicators:

| Metric Type | Sample KPIs | Tracking Frequency |
|---|---|---|
| Policy Coverage | % AI systems fully documented | Quarterly |
| Process Efficiency | Average review time | Monthly |
| Risk Management | % models current on risk assessments | Bi-Weekly |
| Compliance Health | Audit findings severity score | After Audits |
| Business Impact | ROI from governed AI projects | Yearly |
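Two of these KPIs reduce to simple arithmetic over registry data. The sketch below assumes each model has a recorded last risk assessment date and that an assessment counts as current for 90 days. That window is an assumption for illustration, not a figure from this guide.

```python
from datetime import date, timedelta
from statistics import mean


def pct_current_on_risk_assessment(
    last_assessed: dict[str, date],
    today: date,
    max_age_days: int = 90,  # assumed freshness window
) -> float:
    """Percentage of models whose last risk assessment falls within the window."""
    if not last_assessed:
        return 0.0
    cutoff = today - timedelta(days=max_age_days)
    current = sum(1 for assessed in last_assessed.values() if assessed >= cutoff)
    return 100.0 * current / len(last_assessed)


def average_review_days(review_durations_days: list[float]) -> float:
    """Mean pre-launch review time in days."""
    return mean(review_durations_days)


if __name__ == "__main__":
    assessments = {
        "churn-v2": date(2026, 1, 10),
        "fraud-v5": date(2025, 6, 1),
    }
    print(pct_current_on_risk_assessment(assessments, today=date(2026, 1, 31)))
    print(average_review_days([30, 45, 60]))
```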

Assessing Your Maturity Level

Conduct thorough evaluations using several methods:

- Doc Review: Audit policies and process artifacts

- Stakeholder Chats: Qualitative input from 10+ roles

- Process Observation: Shadow reviews and monitoring work

- Tool Analysis: Check tech against requirements

- Sector Comparisons: Score against industry benchmarks

Roadblocks You Might Hit

Cultural Pushback

People challenges often prove toughest:

- Innovation Concerns: Address with clear guidelines on acceptable use cases

- Resource Worries: Show ROI through reduced incident costs

- Skill Gaps: Build tiered training programs for different roles

Technical Headaches

Legacy systems complicate governance:

- Data Silos: Create enterprise catalog with governance tags

- Model Creep: Set up registry with retirement rules

- Tool Overload: Establish tech standards before new purchases

Regulatory Whiplash

Shifting rules demand adaptability:

- Regulatory Radar: Dedicate team to track legal changes

- Modular Policies: Build framework for quick updates

- Compliance Buffer: Exceed current rules where possible

Beyond Medium Maturity

Third-Party Risk Management

Extend governance to external providers through:

- Vendor Vetting: 50-point evaluation covering security and ethics

- Contractual Safeguards: Mandatory performance SLAs

- Ongoing Monitoring:

Audit Alignment

Independent verification strengthens governance:

| Audit Focus | Medium Maturity Needs | Evidence Types |
|---|---|---|
| Policy Follow-through | 90% compliance for high-risk systems | Sample testing |
| Doc Completeness | All required fields filled | Template checks |
| Monitoring Effectiveness | <72 hour issue detection | Incident logs |
| Training Completion | 85% role certification | Learning system reports |
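The monitoring effectiveness row can be checked directly against incident logs. A rough sketch, assuming each log entry records when an issue began and when it was detected (the `id`, `occurred_at`, and `detected_at` field names are hypothetical):

```python
from datetime import datetime, timedelta

DETECTION_LIMIT = timedelta(hours=72)  # the <72 hour target from the table


def slow_detections(incidents: list[dict]) -> list[str]:
    """Return IDs of incidents that took 72 hours or longer to detect."""
    return [
        incident["id"]
        for incident in incidents
        if incident["detected_at"] - incident["occurred_at"] >= DETECTION_LIMIT
    ]


if __name__ == "__main__":
    log = [
        {
            "id": "INC-1",
            "occurred_at": datetime(2026, 1, 5, 9, 0),
            "detected_at": datetime(2026, 1, 6, 9, 0),
        },
        {
            "id": "INC-2",
            "occurred_at": datetime(2026, 1, 5, 9, 0),
            "detected_at": datetime(2026, 1, 9, 9, 0),
        },
    ]
    print(slow_detections(log))
```

An empty result is the evidence an auditor would look for; any IDs returned point to the incidents worth a closer review.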

Looking Towards Advanced Governance

Gearing Up for Higher Maturity

Organizations approaching advanced stages should prioritize:

- Predictive Governance: ML models forecasting compliance issues

- Automated Enforcement: Built-in guardrails in dev environments

- Executive Reporting: Dynamic dashboards for leadership visibility

Sustainable Improvement Practices

Long-term success requires embedding enhancement habits:

- Quarterly Check-ins: Measure against internal and external benchmarks

- Post-Mortem Process: Formal analysis of governance slips

- Industry Engagement: Contribute to standards bodies

Answering Your Top Questions About Medium Maturity in AI Governance

How does medium maturity differ from basic AI governance?

Medium maturity marks the shift from reactive to proactive governance. While basic governance sets initial rules, medium maturity ensures consistent application through standardized processes. Companies at this level show measurable risk reduction via structured assessments and controls across AI lifecycles.

Key differences include person-independent processes, risk-tiered governance, and systematic monitoring. Crucially, medium maturity distributes governance responsibility beyond siloed compliance teams to operational roles across departments.

How do regulations impact medium maturity implementation?

Regulatory frameworks provide essential boundaries but need tailoring. The EU AI Act’s risk tiers directly influence governance needs—high-risk applications demand tighter controls. However, true medium maturity exceeds compliance checklists via ethical frameworks.

Smart implementations align governance with multiple regulations. A medium-maturity bank might blend GDPR data rules, EU AI Act transparency needs, and Fed model validation standards into unified workflows.

What pitfalls trip up medium maturity implementations?

Three common missteps emerge:

- Process Overkill: Bureaucracy slowing development

- Tool Obsession: Tech implementations without process redesign

- Stakeholder Sidestepping: Excluding developers from governance design

Winners pilot processes on limited use cases first, gather team feedback, and refine before company-wide rollout. Balancing standardization with flexibility proves critical—especially when matching review rigor to risk levels.

Can smaller firms achieve governance parity with bigger players?

Yes, through smart prioritization. Smaller firms should focus first on high-value/high-risk AI uses rather than enterprise-wide governance. Three strategies help:

- Open-Source Leverage: Use tools like TensorFlow Extended (TFX), whose data validation and metadata tracking components can support governance work

- Strategic Outsourcing: Partner for specialized needs like bias audits

- All-in-One Platforms: Simplify with integrated solutions

What staffing comes with medium maturity adoption?

Effective implementations need these dedicated roles:

| Role | FTE Needs | Key Duties |
|---|---|---|
| Governance Lead | 1.0 | Program oversight and policy creation |
| Compliance Analyst | 0.5 | Regulatory alignment and audit support |
| Technical Architect | 0.5 | Tool implementation and automation |
| Training Specialist | 0.2 | Role-based ethics and process education |

Most organizations share roles initially before dedicating full-time resources as governance scales. Executive sponsorship and cross-functional governance team reps prove critical.
