Pilot Generative AI Limited: Avoid Costly Mistakes in 2026

Learn how Pilot Generative AI Limited is shaping the future of artificial intelligence. Discover its innovative applications and potential impact on your industry.

Executive Summary: Understanding Pilot Generative AI Limited

Pilot Generative AI Limited is a structured way to bring artificial intelligence into a business through carefully controlled testing phases. This approach lets companies validate AI solutions thoroughly before rolling them out across their entire operations. As more industries struggle to implement AI effectively, structured pilot programs offer a safer path to adoption while still capturing its potential benefits.

The core idea behind pilot generative AI programs is to create well-defined testing environments where organizations can evaluate how AI models perform in real-world scenarios. These limited-scope trials typically last 3-9 months and focus on specific business functions. By starting small, companies can properly assess technical requirements, accuracy levels, integration challenges, and potential business value without risking major disruptions to their operations.

Industry research shows companies using structured pilot programs achieve 40-60% better success rates when scaling AI compared to those that implement directly. These controlled environments allow for ongoing refinement of AI models, output quality checks, and assessment of how well new systems integrate with existing processes. For business leaders considering AI adoption, understanding these pilot frameworks has become essential for strategic technology planning.

The Evolution of Generative AI Implementation Strategies

The concept of limited-scope AI pilots has evolved significantly since generative AI first emerged. Early adoption between 2020 and 2023 often involved unstructured experimental projects that led to inconsistent results and scaling challenges. Today's pilot frameworks incorporate lessons from these early attempts, blending project management best practices with specialized risk mitigation approaches.

Modern pilot programs typically include five crucial components:

- Clearly defined scope with measurable success criteria

- Teams combining technical experts with business leaders

- Phased implementation plans with evaluation checkpoints

- Comprehensive risk assessment addressing ethical and operational concerns

- Clear decision frameworks for scaling or discontinuing projects

This evolution reflects the maturing of enterprise AI strategies – shifting from isolated tech experiments to business-focused transformation initiatives. Companies that adopted structured pilot programs during this transition period reported achieving ROI 2.3 times faster than those using ad hoc approaches.

| Implementation Approach | Success Rate | Average Time to ROI | Scaling Efficiency |
|---|---|---|---|
| Ad Hoc Implementation | 32% | 14 months | 41% |
| Structured Pilot Generative AI Limited | 78% | 6 months | 89% |
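The fifth component, a clear decision framework for scaling or discontinuing a pilot, can be written down before the pilot starts as explicit thresholds plus a decision rule. The sketch below is a hypothetical illustration: the `SuccessCriterion` class, the metric names, and the rule that every criterion must pass to scale (and a majority must pass to keep iterating) are assumptions, not an established standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriterion:
    """One measurable success criterion agreed before the pilot (hypothetical)."""
    metric: str
    threshold: float
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        # Compare the observed value against the threshold in the right direction.
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


def pilot_decision(criteria: list[SuccessCriterion], observed: dict[str, float]) -> str:
    """Return 'scale', 'iterate', or 'discontinue' using an assumed decision rule."""
    met = sum(c.is_met(observed[c.metric]) for c in criteria)
    if met == len(criteria):
        return "scale"        # every criterion met: expand beyond the pilot
    if met * 2 > len(criteria):
        return "iterate"      # a majority met: refine and re-evaluate
    return "discontinue"      # most criteria missed: stop the pilot


criteria = [
    SuccessCriterion("accuracy", 0.95),
    SuccessCriterion("p95_latency_ms", 500, higher_is_better=False),
    SuccessCriterion("hours_saved_per_week", 40),
]
observed = {"accuracy": 0.96, "p95_latency_ms": 420, "hours_saved_per_week": 55}
print(pilot_decision(criteria, observed))  # prints: scale
```

Agreeing on the rule up front keeps the go/no-go call from being renegotiated after results arrive.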

Strategic Importance of Pilot Programs in AI Adoption

Implementing pilot programs serves multiple strategic purposes for organizations navigating digital transformation. These structured approaches address fundamental adoption challenges while creating measurable value during testing phases. From risk reduction to organizational upskilling, pilot initiatives lay essential groundwork for sustainable AI integration across business functions.

Risk Management Through Controlled Implementation

Generative AI introduces unique risks that pilot programs specifically address – including inaccurate outputs (hallucinations), data privacy concerns, intellectual property issues, and workforce disruption. Limited implementations allow companies to:

- Test AI outputs safely before customer-facing deployment

- Evaluate security protocols with limited exposure

- Assess compliance with industry regulations

- Monitor workforce adaptation patterns

Financial technology company CrediTech demonstrated this approach effectively when piloting AI for loan processing. By testing on just 5% of applications first, they identified and corrected inaccuracies in 23% of AI-generated summaries before wider implementation – preventing potentially serious errors while improving their models.
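One common way to limit a pilot to a fixed share of traffic, such as the 5% of applications in the CrediTech example, is deterministic hash-based bucketing: each application ID always lands in the same bucket, so the pilot cohort stays stable across retries. The sketch below assumes string application IDs and uses a hypothetical `in_pilot` helper; it is not a description of CrediTech's actual system.

```python
import hashlib


def in_pilot(application_id: str, pilot_share: float = 0.05, salt: str = "loan-summary-pilot") -> bool:
    """Deterministically assign an application to the pilot cohort.

    Hashing (salt + ID) gives a stable, roughly uniform bucket in [0, 10_000),
    so the same application is always routed the same way.
    """
    digest = hashlib.sha256(f"{salt}:{application_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return bucket < pilot_share * 10_000


# Route a batch: pilot applications get AI summaries plus human review,
# everything else stays on the existing manual process.
applications = [f"APP-{n:06d}" for n in range(100_000)]
pilot = [a for a in applications if in_pilot(a)]
print(f"{len(pilot) / len(applications):.1%} of applications routed to the pilot")
```

Changing the `salt` reshuffles the cohort, which is useful when a new pilot should not reuse the previous pilot's sample.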

Building Organizational AI Competency

Pilot programs serve as crucial training grounds for developing in-house AI capabilities. These initiatives help organizations:

- Upskill employees through hands-on AI experience

- Develop collaboration between technical and business teams

- Establish governance frameworks for ethical AI use

- Create internal knowledge bases documenting best practices

Healthcare provider MedCentric used their patient communication pilot to train 200+ clinical staff in AI-assisted documentation – building critical expertise that reduced implementation time by 40% when expanding across their hospital network.

Industry-Specific Applications of Pilot Generative AI Limited

Implementation approaches vary significantly across industries, reflecting different operational needs, regulatory environments, and value opportunities. Understanding these sector-specific applications helps organizations plan more effective AI initiatives.

Financial Services Implementation Models

Financial sector pilots typically focus on compliance-heavy functions where error prevention is critical. Common applications include:

- Automating regulatory report generation

- Detecting money laundering patterns

- Personalized investment analysis

- Improving fraud detection systems

HSBC Continental’s transaction monitoring pilot reduced false alerts by 65% while maintaining 99.8% detection accuracy. Their nine-month pilot included rigorous validations supervised by compliance teams, creating a template for future AI implementations in high-risk financial operations.

Manufacturing Sector Applications

Manufacturers typically use pilot programs for process optimization and predictive maintenance. Common application areas and typical efficiency gains include:

| Application Area | Implementation Focus | Average Efficiency Gain |
|---|---|---|
| Supply Chain Optimization | Demand forecasting models | 18-22% |
| Quality Control | Visual inspection systems | 35-40% |
| Predictive Maintenance | Equipment failure prediction | 25-30% |

Industrial manufacturer Fortis Solutions achieved 19% higher throughput and 12% less material waste through a six-month production line optimization pilot. This success led to enterprise-wide deployment across 14 facilities, generating $38 million in annual savings.

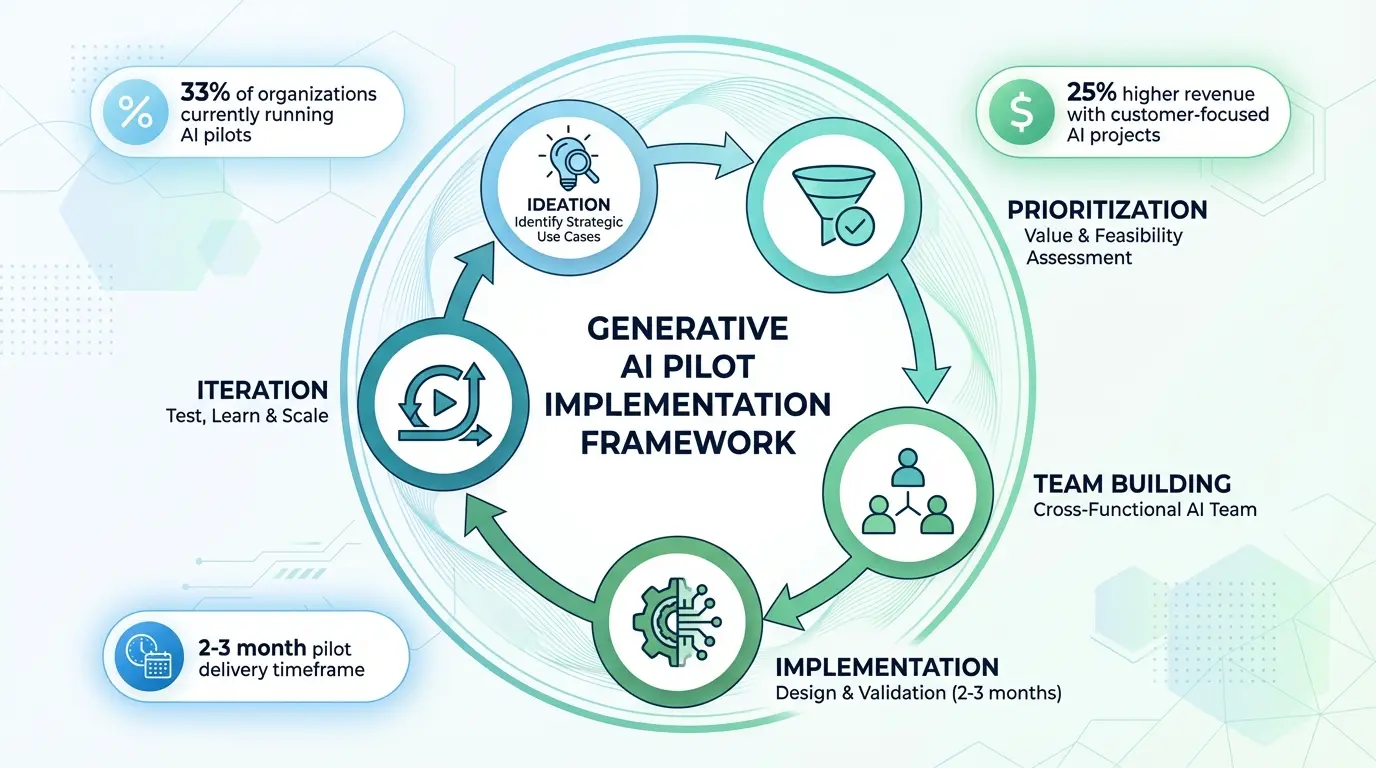

Implementation Framework for Pilot Generative AI Limited Programs

Successful execution requires a structured framework covering five critical phases – each with specific deliverables, decision points, and evaluation metrics to guide organizations toward successful AI adoption.

Phase 1: Strategic Use Case Identification

This foundational phase requires identifying high-value applications aligned with business priorities through:

- Cross-functional workshops mapping AI capabilities to challenges

- Quantitative ROI analysis of potential use cases

- Stakeholder alignment on success metrics

- Regulatory and compliance assessments

Best practices emphasize prioritizing applications with:

- High-quality available data

- Clear performance indicators

- Manageable regulatory constraints

- Significant operational impact potential

Consumer goods company VitaCorp used a scoring matrix to evaluate 23 potential use cases before selecting AI-enhanced demand forecasting. Their criteria weighted technical feasibility (30%), business impact (40%), and implementation complexity (30%) to ensure stakeholder alignment.
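A weighted scoring matrix like VitaCorp's reduces to a weighted sum per candidate. In the sketch below the 30/40/30 weights come from the example, while the 1-5 scale, the candidate names, and the choice to invert complexity (so simpler projects score higher) are assumptions for illustration.

```python
# Weights from the VitaCorp example; scores use an assumed 1-5 scale.
WEIGHTS = {"feasibility": 0.30, "impact": 0.40, "complexity": 0.30}
MAX_SCORE = 5


def weighted_score(scores: dict[str, int]) -> float:
    """Combine criterion scores into a single 1-5 priority score.

    Complexity is a cost, so it is inverted: a complexity of 1 (simple)
    contributes as much as a feasibility or impact score of 5.
    """
    adjusted = dict(scores)
    adjusted["complexity"] = MAX_SCORE + 1 - scores["complexity"]
    return sum(WEIGHTS[name] * adjusted[name] for name in WEIGHTS)


# Hypothetical candidates (a real matrix might hold all 23 use cases).
candidates = {
    "demand forecasting": {"feasibility": 4, "impact": 5, "complexity": 2},
    "marketing copy drafts": {"feasibility": 5, "impact": 2, "complexity": 1},
    "supplier contract review": {"feasibility": 2, "impact": 4, "complexity": 4},
}
ranked = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
for name in ranked:
    print(f"{weighted_score(candidates[name]):.2f}  {name}")
```

With these placeholder scores, demand forecasting ranks first (4.40), ahead of marketing copy (3.80) and contract review (2.80).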

Phase 2: Solution Design and Architecture Planning

This phase translates the selected use cases into technical specifications and implementation plans, considering:

| Design Element | Key Considerations | Common Challenges |
|---|---|---|
| Data Infrastructure | Integration with existing systems, data quality, pipeline design | Legacy system compatibility, data silos |
| Model Selection | Customization needs, open-source vs proprietary solutions, performance requirements | Computational resources, vendor lock-in risks |
| Security Framework | Data encryption, access controls, compliance protocols | Meeting regulatory requirements, integrating with enterprise security |

Phase 3: Cross-Functional Team Assembly

Effective pilots require teams combining diverse expertise:

- Technical Specialists: AI engineers, data scientists, cloud architects

- Business Experts: Process owners, operational managers

- Governance Professionals: Compliance officers, legal advisors

- Change Management: Training specialists, communication experts

Telecom leader TelGlobal used this team structure for their network optimization pilot, achieving 30% faster problem resolution than industry benchmarks through balanced technical and operational perspectives.

Phase 4: Solution Development and Testing

This phase turns architectural plans into functional solutions through iterative development:

- Building and validating data pipelines

- Training and refining AI models

- Integrating with business systems

- Comprehensive testing covering:
  - Functional accuracy
  - Performance under load
  - Security vulnerabilities
  - User acceptance

Robust testing frameworks include:

| Testing Type | Focus Area | Success Metrics |
|---|---|---|
| Accuracy Testing | Output quality, factual correctness | >95% accuracy rate |
| Performance Testing | Response times, scalability | <500ms response time |
| Security Testing | Data protection, access controls | Zero critical vulnerabilities |
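The success metrics in this table can be checked automatically at the end of each test cycle. The sketch below encodes the three rows as release gates. The `QualityReport` fields are hypothetical, and applying the "<500ms" target to the 95th-percentile response time is an assumption, since the table does not say which statistic it refers to.

```python
from dataclasses import dataclass


@dataclass
class QualityReport:
    correct_outputs: int
    total_outputs: int
    p95_response_ms: float
    critical_vulnerabilities: int


def failed_gates(report: QualityReport) -> list[str]:
    """Return the names of any gates the report fails (empty list = pass)."""
    failures = []
    accuracy = report.correct_outputs / report.total_outputs
    if not accuracy > 0.95:
        failures.append(f"accuracy {accuracy:.1%} (target >95%)")
    if not report.p95_response_ms < 500:
        failures.append(f"p95 response {report.p95_response_ms:.0f}ms (target <500ms)")
    if report.critical_vulnerabilities != 0:
        failures.append(f"{report.critical_vulnerabilities} critical vulnerabilities (target 0)")
    return failures


report = QualityReport(correct_outputs=962, total_outputs=1000, p95_response_ms=540, critical_vulnerabilities=0)
print(failed_gates(report) or "all gates passed")
```

Here accuracy (96.2%) passes and the latency gate fails, so the build would go back for performance work rather than into deployment.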

Phase 5: Deployment and Performance Monitoring

The final phase involves controlled rollout with continuous monitoring:

- Phased deployment limiting initial exposure

- Real-time monitoring dashboards

- Feedback mechanisms for improvements

- Automated alert systems for anomalies

Best-in-class programs monitor both:

- Technical Performance: Uptime, response times, error rates

- Business Impact: Process efficiency, cost savings, revenue generation
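An automated anomaly alert can be as simple as a rolling error-rate check. The sketch below shows one assumed design rather than any specific monitoring product: it keeps the most recent request outcomes in a fixed-size window and signals an alert when the error rate exceeds a threshold. The window size and 5% threshold are placeholder values.

```python
from collections import deque


class ErrorRateMonitor:
    """Track the error rate over the most recent `window` requests (hypothetical helper)."""

    def __init__(self, window: int = 200, max_error_rate: float = 0.05):
        self.outcomes = deque(maxlen=window)  # True = request failed
        self.max_error_rate = max_error_rate

    def record(self, failed: bool) -> bool:
        """Record one request; return True if an alert should fire."""
        self.outcomes.append(failed)
        # Wait for a full window so a few early failures don't trigger alerts.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        error_rate = sum(self.outcomes) / len(self.outcomes)
        return error_rate > self.max_error_rate


monitor = ErrorRateMonitor(window=100, max_error_rate=0.05)
# Simulated traffic: 2% errors for 300 requests, then a spike to 10%.
traffic = [i % 50 == 0 for i in range(300)] + [i % 10 == 0 for i in range(100)]
alerts = [i for i, failed in enumerate(traffic) if monitor.record(failed)]
print(f"first alert at request {alerts[0]}" if alerts else "no alerts")
```

A larger window reacts more slowly but produces fewer false alarms; the right trade-off depends on request volume.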

Advanced Implementation Strategies

Organizations with mature AI capabilities use advanced strategies to maximize pilot effectiveness while minimizing risk.

Multi-Parallel Pilot Methodology

Leading enterprises now run multiple pilots across different business units, enabling:

- Faster organizational learning through comparisons

- Distributed risk across initiatives

- Identification of cross-functional synergies

- Broader stakeholder engagement

Electronics manufacturer Sonic Labs ran seven simultaneous pilots spanning R&D to customer service, generating insights 3x faster than sequential approaches while identifying three enterprise-wide AI infrastructure needs.

Hybrid Implementation Models

Forward-thinking companies blend pilot frameworks with other methodologies:

| Hybrid Model | Components | Best Application |
|---|---|---|
| Pilot + Agile | Iterative sprints within pilot framework | Fast-changing business environments |
| Pilot + Lean | Value-focused AI implementation | Operations-heavy processes |
| Pilot + Six Sigma | Quality-focused AI implementation | Highly regulated industries |

Pharmaceutical leader MedPharm Global combined piloting with Six Sigma for drug discovery AI – achieving 40% faster validation cycles while maintaining strict quality standards in their compliance-heavy environment.

Ethical Considerations in Pilot Implementation

Ethical considerations must remain central to pilot design and execution across several dimensions.

Bias Identification and Mitigation

Generative AI can amplify biases from training data. Effective pilots incorporate:

- Comprehensive bias testing protocols

- Diverse data sampling strategies

- Ongoing monitoring for discriminatory patterns

- Independent ethical reviews

Financial firm EquiCredit implemented rigorous bias detection in their loan approval pilot – identifying and correcting gender-based weighting in credit assessments through three-layer validation including algorithmic audits and external ethical review.
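A common first-pass bias check for approval decisions compares approval rates across groups. The sketch below computes the adverse impact ratio (lowest group rate divided by highest) and flags ratios below 0.8, the "four-fifths" rule of thumb from US employment-selection guidance. Applying that threshold to credit decisions and the sample data are assumptions for illustration; a multi-layer audit like EquiCredit's would go well beyond a single ratio.

```python
from collections import defaultdict


def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += approved  # bool counts as 0 or 1
    return {group: approvals[group] / totals[group] for group in totals}


def adverse_impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group approval rate divided by the highest."""
    highest = max(rates.values())
    # If no group is approved at all, there is no disparity in approval rates.
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical pilot outcomes: 60% approval for one group, 42% for another.
decisions = ([("group_a", True)] * 60 + [("group_a", False)] * 40
             + [("group_b", True)] * 42 + [("group_b", False)] * 58)
rates = approval_rates(decisions)
ratio = adverse_impact_ratio(rates)
print(rates, f"ratio={ratio:.2f}", "FLAG for review" if ratio < 0.8 else "within four-fifths rule")
```

A flagged ratio is a prompt for deeper investigation, such as checking which model features drive the gap, not proof of discrimination on its own.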

Transparency and Explainability

Maintaining human oversight and decision clarity remains challenging but critical:

- Implementing explanation interfaces for complex decisions

- Maintaining detailed audit trails

- Establishing human-in-the-loop protocols

- Developing stakeholder communication frameworks

European healthcare provider HealthFirst increased physician adoption by 75% in their diagnostics pilot by showing the AI's clinical reasoning behind its recommendations in real time.
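Audit trails and human-in-the-loop protocols can be combined in a single routing step: every AI recommendation is logged together with its rationale, and anything below a confidence threshold is held for human review. The sketch below assumes the model returns a confidence score; the 0.85 threshold, the status names, and the JSON Lines file used as the audit log are all hypothetical choices, and production systems would typically use tamper-evident storage.

```python
import json
import time
import uuid
from pathlib import Path

AUDIT_LOG = Path("ai_decisions.jsonl")   # hypothetical append-only audit log
REVIEW_THRESHOLD = 0.85                  # assumed confidence cut-off


def route_recommendation(case_id: str, recommendation: str, confidence: float, rationale: str) -> str:
    """Log the recommendation, then decide whether a human must review it."""
    status = "accepted" if confidence >= REVIEW_THRESHOLD else "needs_human_review"
    entry = {
        "event_id": str(uuid.uuid4()),
        "timestamp": time.time(),
        "case_id": case_id,
        "recommendation": recommendation,
        "confidence": confidence,
        "rationale": rationale,  # the explanation shown to the reviewer
        "status": status,
    }
    # Append one JSON object per line so the history is never overwritten.
    with AUDIT_LOG.open("a", encoding="utf-8") as log:
        log.write(json.dumps(entry) + "\n")
    return status


print(route_recommendation("claim-001", "deny claim", 0.72, "invoice total does not match line items"))
```

In high-stakes settings such as clinical diagnostics, the threshold might be set so that every recommendation is reviewed, with the log still serving as the audit trail.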

Performance Measurement Framework

Comprehensive evaluation requires measuring technical, operational, and financial dimensions through these KPIs:

Technical Performance Metrics

| Metric | Measurement Method | Target Threshold |
|---|---|---|
| Model Accuracy | Validation dataset comparison | >92% |
| Response Time | End-to-end processing measurement | <1000ms |
| System Uptime | Availability monitoring | >99.5% |
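Each metric in this table needs a concrete measurement rule. The sketch below is one assumed interpretation: accuracy as the share of validation outputs that exactly match a reference, response time as a nearest-rank 95th percentile (the table does not specify average or percentile), and uptime as the share of successful availability probes. It also converts the 99.5% uptime target into a downtime budget, which for a 30-day month (720 hours) is 3.6 hours. All data values are hypothetical.

```python
import math


def accuracy(predictions: list[str], labels: list[str]) -> float:
    """Share of outputs that exactly match the validation reference."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must be the same length")
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def percentile_nearest_rank(values: list[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def uptime(probe_results: list[bool]) -> float:
    """Share of availability probes that succeeded."""
    return sum(probe_results) / len(probe_results)


def downtime_budget_hours(target: float, period_hours: float = 30 * 24) -> float:
    """Hours of downtime allowed per period at a given availability target."""
    return (1 - target) * period_hours


# Hypothetical pilot data.
labels = ["ok"] * 25
predictions = ["ok"] * 24 + ["wrong"]
latencies_ms = [300 + 20 * i for i in range(19)] + [1500]  # one slow outlier
probes = [True] * 998 + [False] * 2

metrics = {
    "model_accuracy": accuracy(predictions, labels),
    "p95_response_ms": percentile_nearest_rank(latencies_ms, 95),
    "uptime": uptime(probes),
}
targets_met = {
    "model_accuracy": metrics["model_accuracy"] > 0.92,
    "p95_response_ms": metrics["p95_response_ms"] < 1000,
    "uptime": metrics["uptime"] > 0.995,
}
print(metrics)
print(targets_met)
print(f"99.5% uptime allows {downtime_budget_hours(0.995):.1f} hours of downtime per 30 days")
```

Using a percentile rather than an average keeps a single slow outlier from hiding, or dominating, the typical user experience.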

Business Impact Metrics

- Process efficiency improvements (%)

- Operational cost reductions ($)

- Revenue from AI-enabled offerings ($)

- Employee time savings (%)

Adoption Metrics

- User adoption rates across target groups

- Stakeholder satisfaction scores

- Training completion percentages

- System utilization rates

Retailer ShopWorld used this tripartite framework for personalized marketing pilots – establishing clear scaling thresholds that supported expansion to $2B in annual marketing spend.

Scaling Successful Pilots: Transition Strategies

The ultimate goal of pilots is enabling enterprise-wide AI adoption, requiring deliberate scaling strategies addressing technical, organizational, and operational factors.

Technical Scalability Blueprint

Transitioning from pilot to full implementation demands planning for:

- Cloud infrastructure scaling

- Distributed system design

- Automated deployment pipelines

- Enhanced monitoring systems

Airline operator SkyGlobal adopted containerized microservices during their pricing pilot, enabling seamless 10x scaling during global implementation.

Organizational Change Management

Effective scaling requires addressing human factors:

| Change Factor | Management Strategy | Success Metrics |
|---|---|---|
| Workforce Adaptation | Reskilling programs, role redefinition | >80% competency achievement |
| Leadership Alignment | Executive briefings, value demonstrations | Full funding commitment |
| Cultural Integration | AI literacy programs, success storytelling | 40%+ employee acceptance |

Future Trends in Pilot Generative AI Limited Implementation

Pilot methodologies continue evolving with technological advancements and industry experience. Emerging trends include:

Automated Pilot Orchestration Platforms

Next-gen tools are automating pilot configuration and management through:

- Pre-built templates for common use cases

- Automated performance benchmarking

- Integrated risk assessment modules

- Real-time ROI projection engines

Industry analysts predict 60% of pilots will use automated platforms by 2027 – reducing setup time by 70% while improving consistency across multiple initiatives.

Regulatory Sandbox Integration

Increasing regulation is driving collaboration between pilot programs and government sandboxes to enable:

- Compliance testing during experimentation

- Early identification of regulatory concerns

- Collaborative policy development

- Streamlined certification processes

The UK Financial Conduct Authority’s AI sandbox has partnered with 12 institutions on pilots – reducing time-to-compliance by 45% on average for participants.

Frequently Asked Questions (FAQs)

What distinguishes pilot generative AI limited from standard AI implementation approaches?

Pilot methodologies differ through their structured risk management and validation processes. Unlike standard implementations, these programs include predefined evaluation frameworks, controlled scope limitations, and dedicated governance structures. The limited scope allows organizations to validate technical performance and business impact before significant resource commitment. Crucially, pilot approaches prioritize organizational learning with built-in knowledge transfer preparing companies for broader AI adoption.

Key differentiators include phased deployment strategies, cross-functional governance teams, and comprehensive measurement systems tracking technical and business metrics. These elements create more predictable implementation pathways, which are particularly valuable for companies new to enterprise AI or operating in heavily regulated industries where errors carry serious consequences.

How long should a typical pilot generative AI limited program last?

Ideal duration varies by complexity and industry but generally spans 3-9 months. Simple implementations in data-rich environments may validate in 90 days, while complex applications in regulated sectors often need 6-9 months. Three factors significantly influence timelines:

First, data acquisition and preparation: projects needing new data collection or major cleansing take longer. Second, regulatory approval processes in healthcare and finance add mandatory review periods. Third, the number of iteration cycles required to hit performance targets determines the overall schedule.

Best practice suggests time-boxed iterations with formal evaluations every 4-6 weeks – maintaining momentum while ensuring proper validation. Organizations should align duration with achieving predefined success criteria rather than arbitrary deadlines.
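Time-boxed evaluations can be scheduled up front. The sketch below lays out formal checkpoints every six weeks (the upper end of the 4-6 week range) across an assumed 26-week (roughly six-month) pilot, always ending with a final review on the last day. The start date and durations are placeholders.

```python
from datetime import date, timedelta


def checkpoint_dates(start: date, pilot_weeks: int, interval_weeks: int = 6) -> list[date]:
    """Evaluation dates every `interval_weeks`, plus a final review at pilot end."""
    end = start + timedelta(weeks=pilot_weeks)
    checkpoints = []
    current = start + timedelta(weeks=interval_weeks)
    while current < end:
        checkpoints.append(current)
        current += timedelta(weeks=interval_weeks)
    checkpoints.append(end)  # final go/no-go review
    return checkpoints


for n, day in enumerate(checkpoint_dates(date(2026, 1, 5), pilot_weeks=26), start=1):
    print(f"Checkpoint {n}: {day.isoformat()}")
```

If the pilot length is an exact multiple of the interval, the last regular checkpoint and the final review coincide, so the loop stops before adding a duplicate.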

What budget range should organizations anticipate for pilot programs?

Budgets vary considerably based on scope, industry, and technical complexity – typically $150,000-$500,000 covering:

| Cost Component | Low Complexity | Medium Complexity | High Complexity |
|---|---|---|---|
| Technical Resources | $50,000-$100,000 | $100,000-$250,000 | $250,000-$500,000 |
| Data Preparation | $10,000-$25,000 | $25,000-$75,000 | $75,000-$150,000 |
| Infrastructure | $15,000-$40,000 | $40,000-$100,000 | $100,000-$300,000 |

Organizations should reserve an additional 15-25% for unexpected needs, especially in sectors with evolving regulations. While these costs are substantial, successful pilots typically deliver 3-5x ROI when solutions scale enterprise-wide, justifying them as strategic investments.
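Summing each tier's component ranges and applying the 15-25% contingency gives an all-in range per tier. The sketch below only reuses the table's figures; pairing the 15% reserve with the low end and the 25% reserve with the high end is a choice made for illustration. Note that the three listed components alone sum to $165,000-$425,000 at medium complexity.

```python
# Component ranges from the table above, in USD: (low, high).
COSTS = {
    "low": {"technical": (50_000, 100_000), "data": (10_000, 25_000), "infrastructure": (15_000, 40_000)},
    "medium": {"technical": (100_000, 250_000), "data": (25_000, 75_000), "infrastructure": (40_000, 100_000)},
    "high": {"technical": (250_000, 500_000), "data": (75_000, 150_000), "infrastructure": (100_000, 300_000)},
}
CONTINGENCY = (0.15, 0.25)  # 15-25% reserve for unexpected needs


def budget_range(tier: str) -> tuple[float, float]:
    """Total budget for a tier: sum of component ranges plus contingency."""
    low = sum(lo for lo, _ in COSTS[tier].values())
    high = sum(hi for _, hi in COSTS[tier].values())
    # Pair the smallest reserve with the low end and the largest with the high end.
    return low * (1 + CONTINGENCY[0]), high * (1 + CONTINGENCY[1])


for tier in COSTS:
    low, high = budget_range(tier)
    print(f"{tier:>6}: ${low:,.0f} - ${high:,.0f}")
```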

How do regulatory requirements impact pilot design?

Regulatory considerations fundamentally shape pilot architecture in regulated industries through their impact on:

- Data Handling Protocols: Stricter requirements for anonymization, storage, and access

- Validation Frameworks: Mandated testing methodologies and documentation standards

- Governance Structures: Required oversight committees and reporting mechanisms

- Deployment Limitations: Constraints on live environment testing scope

In the US, healthcare pilots must comply with HIPAA, which typically means isolated data environments and detailed audit trails. US financial sector pilots face SEC and FINRA requirements affecting everything from model interpretability to decision documentation. Proactive regulatory engagement during design prevents costly redesigns and ensures compliance alignment from inception.
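For data-handling requirements, pilots often replace direct identifiers with keyed pseudonyms before records reach the model. The sketch below uses HMAC-SHA256 from Python's standard library; the field names, the environment variable, and the key handling are hypothetical. Pseudonymized data generally still counts as regulated data (GDPR, for example, treats it as personal data), so this is one control among several, not a substitute for a formal de-identification review.

```python
import hashlib
import hmac
import os

# Assumption: the key comes from a secrets manager; an env var stands in here.
PSEUDONYM_KEY = os.environ.get("PILOT_PSEUDONYM_KEY", "dev-only-key").encode("utf-8")
DIRECT_IDENTIFIERS = {"patient_name", "mrn", "email"}  # hypothetical field names


def pseudonymize(value: str) -> str:
    """Stable keyed pseudonym: the same input always yields the same token,
    and tokens cannot be recomputed without the key. Truncated to 64 bits."""
    return hmac.new(PSEUDONYM_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def prepare_record(record: dict[str, str]) -> dict[str, str]:
    """Replace direct identifiers before the record enters the pilot environment."""
    return {
        field: pseudonymize(value) if field in DIRECT_IDENTIFIERS else value
        for field, value in record.items()
    }


print(prepare_record({"mrn": "00123456", "patient_name": "Jane Doe", "note": "follow-up in 2 weeks"}))
```

Because pseudonyms are stable, records for the same person can still be joined across pilot datasets without exposing the underlying identifier. Free-text fields can also contain identifiers and need separate handling.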

What are common failure patterns in generative AI pilots and mitigation strategies?

Analysis shows five recurrent failure patterns in unsuccessful pilots:

- Undefined Success Criteria: 42% of failed pilots lacked clear KPIs
  - Mitigation: Establish quantitative metrics during planning with stakeholder buy-in

- Data Quality Issues: 37% encountered insurmountable data challenges
  - Mitigation: Conduct a comprehensive data audit before development

- Stakeholder Disengagement: 29% suffered from leadership inattention
  - Mitigation: Implement structured governance with executive sponsorship

- Technical-Domain Misalignment: 24% built solutions lacking business relevance
  - Mitigation: Maintain continuous business stakeholder involvement

- Overambitious Scope: 19% attempted overly complex implementations prematurely
  - Mitigation: Prioritize achievable quick wins before complex use cases

Proactively addressing these risks increases success probability by 68% according to MIT research. Organizations should develop risk registers during planning – identifying potential failure points and corresponding prevention measures for each project element.
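A risk register can start as a small structured list. The sketch below scores each entry as likelihood times impact on assumed 1-5 scales and sorts the highest exposures first. The entries mirror the failure patterns above, but the scores are illustrative placeholders, not derived from the cited percentages.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    failure_point: str
    prevention: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    impact: int      # 1 (minor) to 5 (pilot-ending), assumed scale

    @property
    def exposure(self) -> int:
        return self.likelihood * self.impact


register = [
    Risk("Undefined success criteria", "Agree quantitative KPIs with stakeholders", 3, 5),
    Risk("Data quality issues", "Run a data audit before development", 4, 4),
    Risk("Stakeholder disengagement", "Executive sponsor and governance cadence", 2, 4),
    Risk("Technical-domain misalignment", "Keep business owners in every review", 3, 3),
    Risk("Overambitious scope", "Start with a quick-win use case", 2, 3),
]

# Highest exposure first, so mitigation effort goes where it matters most.
for risk in sorted(register, key=lambda r: r.exposure, reverse=True):
    print(f"{risk.exposure:>2}  {risk.failure_point}: {risk.prevention}")
```

Re-scoring the register at each evaluation checkpoint shows whether mitigations are actually reducing exposure.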
