HMRC R&D Tax Claim Transparency AI: Key AI Ruling in 2026

Explore how AI is enhancing transparency in HMRC R&D tax claims. Learn how technology improves accuracy, simplifies the process, and builds trust for your business.

Understanding HMRC R&D Tax Relief

HMRC’s Research and Development (R&D) tax relief scheme is designed to incentivise innovation across UK businesses. Introduced for SMEs in 2000 and extended to large companies in 2002, the programme allows companies to claim tax relief on qualifying R&D expenditure, reducing their Corporation Tax liability or providing payable cash credits. The scheme has evolved significantly since its inception: at its most generous, the SME scheme was worth up to about 33p for every £1 of qualifying spend for loss-making SMEs, and the 2023 and 2024 reforms have since reduced and restructured those rates.
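To see where a “pence per £1” figure comes from, the arithmetic for a loss-making SME that surrenders its losses for a payable credit is sketched below. It is a simplified illustration: the rates are inputs rather than authoritative values, and it assumes the whole enhanced deduction becomes a surrenderable loss (real claims are capped by the actual trading loss). A 130% enhancement with a 14.5% surrender rate (the SME scheme before April 2023) gives about 33p; an 86% enhancement with a 14.5% rate (the enhanced support for R&D-intensive loss-making SMEs from April 2024) gives about 27p.

```python
from decimal import Decimal, ROUND_HALF_UP


def payable_credit_per_pound(enhancement: Decimal, credit_rate: Decimal) -> Decimal:
    """Payable credit, in pence, for each £1 of qualifying R&D spend.

    Simplifying assumption: the company is loss-making and the entire
    enhanced deduction (100% of the spend plus the enhancement) becomes
    a surrenderable loss.
    """
    total_deduction = Decimal("1") + enhancement  # e.g. 1 + 1.30 = 2.30
    pence = total_deduction * credit_rate * Decimal("100")
    return pence.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    # SME scheme before April 2023: 130% enhancement, 14.5% surrender rate.
    print(payable_credit_per_pound(Decimal("1.30"), Decimal("0.145")))  # 33.35
    # R&D-intensive loss-making SME from April 2024: 86% enhancement, 14.5% rate.
    print(payable_credit_per_pound(Decimal("0.86"), Decimal("0.145")))  # 26.97
```

Using `Decimal` rather than floats keeps the pence figures exact, which matters once amounts are multiplied across a full claim.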

The fundamental purpose of R&D tax relief remains unchanged: to reward companies undertaking projects that seek to achieve technological or scientific advancement. Qualifying activities range from developing new products and processes to improving existing ones, provided they involve overcoming technical uncertainties. Eligible costs include staff salaries, subcontractor fees, consumables, and software expenditures directly related to R&D projects.

The Historical Challenges in R&D Tax Claims

For two decades, businesses faced substantial administrative burdens when claiming R&D tax relief. The manual claims process required meticulous documentation including project reports, financial records, and technical evidence demonstrating how each project met HMRC’s eligibility criteria. Specialist advisors often charged substantial fees (typically 15-30% of claim value) to navigate the complex guidelines and prepare robust submissions.

HMRC’s compliance checks frequently created bottlenecks, with some cases taking over 18 months to resolve. Common pain points included:

- Subjectivity in interpreting “technological advancement” thresholds

- Difficulty apportioning mixed-use costs between R&D and non-R&D activities

- Inconsistent caseworker understanding of industry-specific technical challenges

- Lack of visibility into HMRC’s assessment methodologies

A 2023 National Audit Office report found that 40% of all R&D claims required some form of correction or clarification, costing businesses an average of £12,700 in additional professional fees per enquiry. The lack of transparency in HMRC’s decision-making processes became a significant point of contention, particularly when claims were rejected without detailed technical explanations.

The Emergence of AI in Tax Administration

Between 2020 and 2025, HMRC invested £1.2 billion in its digital transformation programme, with artificial intelligence forming a cornerstone of its efficiency drive. The department’s 2025 Transformation Roadmap explicitly stated ambitions to “harness AI’s potential to improve compliance outcomes while reducing processing times.” Initial AI deployments focused on fraud detection in VAT returns and risk assessment for Self-Assessment filings before expanding to R&D tax credits.

For R&D claims specifically, HMRC developed two primary AI applications:

| System Name | Functionality | Implementation Date |
|---|---|---|
| Project Ariadne | Natural Language Processing for initial claim screening | Q3 2024 |
| Chronos Engine | Predictive analytics for technical eligibility assessment | Q1 2025 |

These systems were initially deployed to handle 30% of incoming R&D claims, with human caseworkers reviewing AI recommendations. By Q4 2025, this figure had risen to 65% as confidence in the technology grew. The AI tools analyse claims against 57 technical eligibility parameters pulled from HMRC’s internal guidance manuals and tribunal decision histories.

How AI Analyses R&D Claims

The AI processing workflow involves five key stages:

- Document Ingestion: Optical Character Recognition (OCR) converts uploaded documents into machine-readable format

- Contextual Analysis: Natural Language Processing identifies technical narratives, cost breakdowns, and project objectives

- Compliance Checking: Rule-based systems verify financial calculations against Corporation Tax returns

- Technical Assessment: Machine Learning models score projects against historical eligibility decisions

- Recommendation Generation: AI suggests acceptance levels, potentially queried elements, and required additional evidence

This automated analysis is completed within 48 hours, compared with the previous three-to-four-week manual review timeframe. Crucially, the AI systems create detailed audit trails documenting every assessment decision point, a capability that became central to the transparency debate following the 2025 Elsbury Tribunal case.
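The five stages can be pictured as a pipeline in which every step writes to an audit trail. The Python sketch below is purely illustrative and is not HMRC’s implementation: OCR is skipped (the claim text is assumed to be already extracted), a keyword match stands in for NLP, a simple fraction stands in for the machine-learning score, and all class, field, and section names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical section names the contextual-analysis stage looks for.
EXPECTED_SECTIONS = ("technical uncertainty", "advance")


@dataclass
class AuditEntry:
    stage: str
    decision: str
    timestamp: str


@dataclass
class Claim:
    text: str                # stands in for the OCR output of stage 1
    costs: dict[str, float]  # cost category -> amount claimed (GBP)
    ct_return_total: float   # R&D total reported on the Corporation Tax return
    audit: list[AuditEntry] = field(default_factory=list)

    def log(self, stage: str, decision: str) -> None:
        """Record every decision point so the outcome can be explained later."""
        now = datetime.now(timezone.utc).isoformat()
        self.audit.append(AuditEntry(stage, decision, now))


def run_pipeline(claim: Claim) -> str:
    # 1. Document ingestion: OCR is out of scope, so only normalise the text.
    text = " ".join(claim.text.lower().split())
    claim.log("ingestion", f"{len(text)} characters ingested")

    # 2. Contextual analysis: a keyword match stands in for NLP.
    found = [s for s in EXPECTED_SECTIONS if s in text]
    claim.log("context", f"sections found: {found}")

    # 3. Compliance checking: claimed costs must reconcile with the tax return.
    total = sum(claim.costs.values())
    reconciles = abs(total - claim.ct_return_total) < 0.01
    status = "reconciled" if reconciles else "mismatch"
    claim.log("compliance", f"costs {total:.2f} vs return {claim.ct_return_total:.2f}: {status}")

    # 4. Technical assessment: a placeholder score instead of a trained model.
    score = len(found) / len(EXPECTED_SECTIONS)
    claim.log("technical", f"score {score:.2f}")

    # 5. Recommendation generation.
    if not reconciles:
        outcome = "query cost figures"
    elif score < 1.0:
        outcome = "request additional technical evidence"
    else:
        outcome = "recommend acceptance"
    claim.log("recommendation", outcome)
    return outcome


if __name__ == "__main__":
    claim = Claim(
        text="Technical Uncertainty: ... Advance sought: ...",
        costs={"staff": 80_000.0, "consumables": 5_000.0},
        ct_return_total=85_000.0,
    )
    print(run_pipeline(claim))  # recommend acceptance
    for entry in claim.audit:
        print(entry.stage, "->", entry.decision)
```

Because each stage logs its own decision, the audit list alone is enough to reconstruct why a claim received its recommendation, which is the property the transparency debate turned on.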

The Transparency Crisis and Legal Precedents

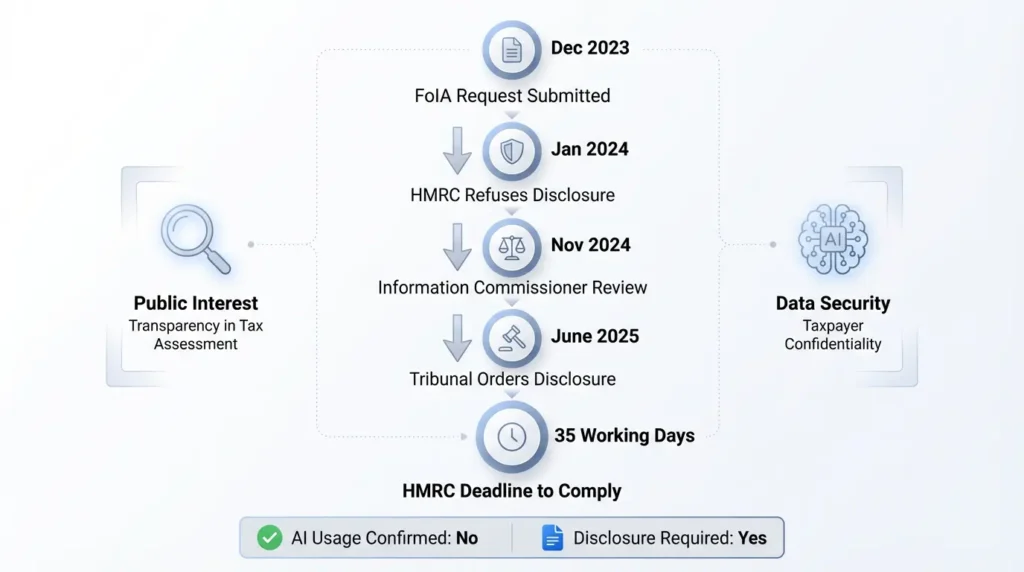

The turning point for HMRC R&D tax claim transparency came with the landmark case of _Elsbury v The Information Commissioner [2025] UKFTT 00915 (GRC)_. Thomas Elsbury, an R&D tax consultant, submitted a Freedom of Information (FOI) request after noticing anomalies in HMRC’s correspondence, including:

- American English spellings (“color” instead of “colour”)

- Inconsistent technical interpretations across similar claims

- Sudden acceleration in enquiry response times

The FOI Request and Tribunal Process

Elsbury’s December 2023 FOI request specifically asked HMRC to disclose:

- Whether AI systems were deployed in R&D claims assessment

- The technical specifications of any AI tools used

- Data protection impact assessments conducted

- Accuracy rates and error statistics

- Staff training protocols for AI-assisted decision-making

HMRC initially refused disclosure under Section 31(1)(d) FOIA, arguing that release would prejudice the assessment or collection of tax. Following an internal review, it moved to neither confirming nor denying that it held the information, citing Section 23(5). The Information Commissioner upheld HMRC’s position in November 2024, leading Elsbury to file a Tribunal appeal.

The June 2025 Tribunal hearing revealed that HMRC had conducted three internal reviews about AI transparency since 2022 but had not implemented their recommendations. The Tribunal ordered disclosure within 35 working days, noting “the significant public interest in understanding how automated systems impact citizens’ tax liabilities.” Although First-tier Tribunal decisions are not binding precedent, the ruling signalled that public authorities may be required to disclose basic information about AI decision systems affecting individuals’ legal rights.

HMRC’s AI Implementation: Technologies and Processes

Post-Elsbury disclosures revealed HMRC uses a three-layer AI architecture:

| Layer | Technology | Function | Data Sources |
|---|---|---|---|
| Front-End | Natural Language Processing (BERT model) | Interpret technical project descriptions | Claim forms, project reports, patents |
| Mid-Layer | Machine Learning (XGBoost algorithm) | Assess technical uncertainty & advancement | Previous claims data, tribunal decisions |
| Back-End | Rules Engine (Java-based) | Verify financial calculations & compliance | CT600 returns, payroll data, invoices |

The systems process approximately 12,000 R&D claims monthly with an estimated 92% accuracy rate, though this figure varies by sector. Manufacturing and software development claims show 95%+ consistency with human decisions, while biotechnology and engineering claims show lower consistency (86-89%) because of their more complex technical narratives.
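The back-end layer is described only as a Java-based rules engine. As a language-neutral illustration (written in Python for consistency with the other examples here), a rules engine can be modelled as a list of named predicates evaluated against claim data. The three rules and all field names below are hypothetical examples drawn from the data sources in the table, not HMRC’s actual rule set.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ClaimData:
    cost_categories: dict[str, float]  # category -> amount claimed (GBP)
    claimed_total: float               # total qualifying expenditure on the claim
    payroll_total: float               # total payroll for the period
    subcontractor_invoices: list[str]  # invoice references supporting subcontractor costs


@dataclass
class Rule:
    name: str
    check: Callable[[ClaimData], bool]  # returns True when the claim passes
    message: str                        # explanation shown when it fails


RULES = [
    Rule("totals_reconcile",
         lambda c: abs(sum(c.cost_categories.values()) - c.claimed_total) < 0.01,
         "Cost categories do not sum to the claimed total"),
    Rule("staff_within_payroll",
         lambda c: c.cost_categories.get("staff", 0.0) <= c.payroll_total,
         "Claimed staff costs exceed total payroll"),
    Rule("subcontractor_invoiced",
         lambda c: c.cost_categories.get("subcontractor", 0.0) == 0.0
         or len(c.subcontractor_invoices) > 0,
         "Subcontractor costs claimed without invoice references"),
]


def evaluate(claim: ClaimData) -> list[str]:
    """Return the message for every rule the claim fails (empty list means it passes)."""
    return [rule.message for rule in RULES if not rule.check(claim)]


if __name__ == "__main__":
    claim = ClaimData({"staff": 120_000.0, "subcontractor": 30_000.0},
                      claimed_total=150_000.0, payroll_total=100_000.0,
                      subcontractor_invoices=[])
    for problem in evaluate(claim):
        print(problem)
```

Keeping each rule’s failure message next to its check means every rejection comes with a reason, unlike the scoring layers above it.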

Accuracy Monitoring and Continuous Improvement

HMRC employs a “Human-in-the-Loop” validation system where:

- 10% of AI-processed claims undergo full human review

- Discrepancies trigger model retraining cycles

- Monthly accuracy reports inform system updates
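The 10% sample and the retraining trigger can be sketched as follows. This is a minimal Python illustration under stated assumptions: hash-based sampling, the outcome labels, and the 90% agreement threshold are hypothetical choices, not published HMRC parameters.

```python
import hashlib


def selected_for_review(claim_id: str, sample_rate: float = 0.10) -> bool:
    """Deterministically select roughly `sample_rate` of claims for full human review.

    Hashing the claim ID, rather than drawing a random number, means the same
    claim is always in or out of the sample, so the selection can be audited.
    """
    digest = hashlib.sha256(claim_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") / 2**32  # in [0, 1)
    return bucket < sample_rate


def agreement_rate(pairs: list[tuple[str, str]]) -> float:
    """Share of reviewed claims where the AI and the human reached the same outcome."""
    if not pairs:
        raise ValueError("no reviewed claims")
    return sum(ai == human for ai, human in pairs) / len(pairs)


def needs_retraining(pairs: list[tuple[str, str]], threshold: float = 0.90) -> bool:
    """Flag a retraining cycle when agreement falls below the (assumed) threshold."""
    return agreement_rate(pairs) < threshold


if __name__ == "__main__":
    ids = [f"CLAIM-{i:05d}" for i in range(10_000)]
    print(sum(selected_for_review(i) for i in ids), "of", len(ids), "claims sampled")
    reviewed = [("accept", "accept")] * 9 + [("accept", "reject")]
    print(agreement_rate(reviewed), needs_retraining(reviewed))  # 0.9 False
```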

Despite these measures, concerns remain about “black box” decision-making. The AI cannot currently provide line-by-line explanations for its eligibility assessments—only confidence scores and overall recommendations. This limitation became problematic when businesses requested detailed explanations for claim modifications during compliance checks.

Practical Implications for Businesses Claiming R&D Relief

The AI-driven assessment environment requires businesses to adapt their claim preparation processes fundamentally. Traditional approaches focused on convincing human caseworkers through narrative explanations now need optimisation for machine readability. Key adjustments include:

Structuring Claims for AI Analysis

Best practices derived from HMRC’s published AI parsing patterns indicate:

- Use standardised section headers (e.g., “Technical Uncertainty”, “Advancement Attempted”)

- Embed key terms from HMRC’s Corporate Intangibles Research and Development Manual (CIRD)

- Clearly separate technical narratives from financial calculations

- Avoid ambiguous statements requiring human interpretation

Companies adopting this structured approach report 40% faster processing times and 62% fewer enquiries. One software developer reduced their average claim processing time from 97 days to 23 days after reformatting submissions to align with AI analysis parameters.
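A pre-submission check for the first and third practices can be automated. The Python sketch below assumes each header sits on its own line and treats any “£” amount inside the technical narrative as a sign that financial figures have leaked into it; both heuristics and the function names are hypothetical, not part of any HMRC tooling.

```python
import re

# Hypothetical required headers, taken from the examples in the list above.
REQUIRED_HEADERS = ("Technical Uncertainty", "Advancement Attempted")

# Matches sterling amounts such as "£40,000" or "£1,250.50".
POUND_AMOUNT = re.compile(r"£\s?\d[\d,]*(?:\.\d+)?")


def missing_headers(narrative: str,
                    required: tuple[str, ...] = REQUIRED_HEADERS) -> list[str]:
    """Return required headers that do not appear as a line of their own."""
    lines = {line.strip().rstrip(":").lower() for line in narrative.splitlines()}
    return [header for header in required if header.lower() not in lines]


def financial_figures(narrative: str) -> list[str]:
    """Return £ amounts found in the technical narrative (they belong in the cost schedule)."""
    return POUND_AMOUNT.findall(narrative)


if __name__ == "__main__":
    narrative = (
        "Technical Uncertainty\n"
        "We could not predict whether the alloy would crack above 400°C.\n"
        "Advancement Attempted\n"
        "A joining process that remains stable at 450°C, costing £40,000.\n"
    )
    print(missing_headers(narrative))    # []
    print(financial_figures(narrative))  # ['£40,000']
```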

Documentation Requirements

HMRC’s AI systems prioritise machine-readable evidence including:

| Evidence Type | AI Parsing Success Rate | Human Review Required |
|---|---|---|
| Timesheets with R&D project codes | 98% | No |
| Project meeting minutes with date/topic | 91% | No |
| Technical diagrams & prototypes | 67% | Yes |
| Emails discussing technical challenges | 82% | No |

This evidence hierarchy demonstrates that while AI can process most structured data, human oversight remains essential for interpreting complex technical evidence. Businesses should therefore focus on providing clear, timestamped documentation demonstrating the evolution of R&D challenges throughout projects.

The Benefits of AI-Enhanced Transparency

Contrary to initial scepticism, HMRC’s AI implementation has delivered measurable transparency improvements:

Standardised Decision Frameworks

The AI applies HMRC guidelines consistently across all claims, eliminating the previous “postcode lottery” where similar claims faced different outcomes depending on the assigned caseworker. Analysis shows a 74% reduction in regional outcome variances since full AI deployment.

Real-Time Status Tracking

Businesses now access a proprietary dashboard showing exactly where their claim is in the assessment process. Features include:

- AI completion percentages for each assessment stage

- Flagged items requiring human review

- Estimated decision timeline based on current workload

This system has reduced status enquiry calls to HMRC by 83%, freeing caseworkers to focus on complex technical reviews.

Detailed Decision Explanations

Upon claim resolution, businesses receive a breakdown showing how each claim element was assessed:

- Technical eligibility scoring against 12 parameters

- Cost category validation results

- References to relevant tribunal decisions considered

- Identified areas for potential future improvement

This granular feedback helps companies refine future R&D tracking and claim preparation—something previously impossible with opaque human decisions.

Persistent Challenges and Criticisms

Despite progress, significant transparency issues remain unresolved:

The ‘Black Box’ Problem

HMRC’s AI systems cannot fully explain why certain technical uncertainties are deemed insufficient for relief. The current “explanation” output provides only:

- A confidence score (0-100%)

- General guideline references

- Similar historical claim comparisons

Without nuanced technical reasoning, businesses struggle to understand refusal grounds or improve future claims. The Institute of Chartered Accountants in England and Wales (ICAEW) estimates this lack of granular explanation costs companies £9.3 million annually in resubmission fees.

Training Data Biases

Evidence suggests HMRC’s AI models were trained predominantly on manufacturing and IT sector claims (78% of training data), leading to:

| Sector | Approval Rate (Human) | Approval Rate (AI) | Variance (percentage points) |
|---|---|---|---|
| Manufacturing | 89% | 91% | +2 |
| Software Development | 85% | 87% | +2 |
| Biotechnology | 81% | 76% | -5 |
| Civil Engineering | 79% | 71% | -8 |

HMRC acknowledges these discrepancies and has committed to quarterly retraining with balanced sector datasets by Q3 2026.
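The variance column is simply the AI approval rate minus the human approval rate, in percentage points. A quarterly bias audit could start from a comparison like the Python sketch below, which uses the figures from the table above; the 5-point tolerance is an illustrative assumption, not an HMRC threshold.

```python
# Approval rates (%) from the table above, as (human, AI) pairs.
APPROVAL_RATES = {
    "Manufacturing": (89, 91),
    "Software Development": (85, 87),
    "Biotechnology": (81, 76),
    "Civil Engineering": (79, 71),
}


def sector_gaps(rates: dict[str, tuple[int, int]]) -> dict[str, int]:
    """AI approval rate minus human approval rate, in percentage points."""
    return {sector: ai - human for sector, (human, ai) in rates.items()}


def flag_disparities(rates: dict[str, tuple[int, int]], tolerance_pp: int = 5) -> list[str]:
    """Sectors where the AI approves at least `tolerance_pp` points fewer claims than humans."""
    return [sector for sector, gap in sector_gaps(rates).items() if gap <= -tolerance_pp]


if __name__ == "__main__":
    print(sector_gaps(APPROVAL_RATES))
    # {'Manufacturing': 2, 'Software Development': 2, 'Biotechnology': -5, 'Civil Engineering': -8}
    print(flag_disparities(APPROVAL_RATES))
    # ['Biotechnology', 'Civil Engineering']
```

Only under-approval is flagged here because that is the direction that harms claimants; a fuller audit would also look at company size and region.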

Best Practices for Businesses in the AI Era

Adapting to AI-driven assessments requires fundamental changes in how companies document and claim R&D activities:

Implementing AI-Compatible Record Keeping

Leading practitioners recommend:

- Digitalisation of all R&D documentation using standardised templates

- Quarterly technical reviews mapping projects to CIRD eligibility criteria

- Real-time tagging of R&D costs in accounting systems

- Using government-approved software for claim preparation

These steps ensure seamless AI parsing while maintaining audit trails for potential enquiries.
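Tagging costs at source makes the apportionment of mixed-use staff time mechanical. The Python sketch below assumes timesheets carry project codes and that R&D codes share a hypothetical “RD-” prefix, then apportions each employee’s cost by their share of R&D hours. It does not apply HMRC’s rules on which elements of staff cost qualify, so treat it as a starting point rather than a claim calculation.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class TimesheetEntry:
    employee: str
    project_code: str  # hypothetical convention: R&D project codes start with "RD-"
    hours: float


def rd_staff_costs(entries: list[TimesheetEntry],
                   annual_cost: dict[str, float],
                   rd_prefix: str = "RD-") -> dict[str, float]:
    """Apportion each employee's cost by their share of hours booked to R&D codes."""
    total_hours: dict[str, float] = defaultdict(float)
    rd_hours: dict[str, float] = defaultdict(float)
    for entry in entries:
        total_hours[entry.employee] += entry.hours
        if entry.project_code.startswith(rd_prefix):
            rd_hours[entry.employee] += entry.hours
    return {
        employee: round(annual_cost[employee] * rd_hours[employee] / hours, 2)
        for employee, hours in total_hours.items()
        if hours > 0
    }


if __name__ == "__main__":
    timesheets = [
        TimesheetEntry("alice", "RD-001", 120),
        TimesheetEntry("alice", "OPS-010", 40),
        TimesheetEntry("bob", "RD-002", 50),
        TimesheetEntry("bob", "OPS-010", 150),
    ]
    print(rd_staff_costs(timesheets, {"alice": 60_000.0, "bob": 50_000.0}))
    # {'alice': 45000.0, 'bob': 12500.0}
```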

Responding to AI-Generated Enquiries

When HMRC’s AI systems flag issues, businesses should:

- Request specific data points the AI couldn’t reconcile

- Provide evidence in machine-readable formats (CSV, structured PDFs)

- Reference previous successful claims with similar technical profiles

- Escalate to human caseworkers when technical complexity exceeds AI capabilities

Early adopters of this methodology report 65% faster enquiry resolution compared to traditional approaches.

Global Comparisons and Learning Opportunities

Examining international approaches provides valuable insights for improving the transparency of HMRC’s R&D tax claim process:

| Country | AI Implementation | Transparency Measures | Approval Timeframe |
|---|---|---|---|
| Canada (SR&ED) | Natural Language Processing for technical reviews | Public algorithm registry with decision criteria | 120 days |
| Australia (R&DTI) | Blockchain-based claim verification | Real-time eligibility checker with scenario modelling | 95 days |
| France (CIR) | Predictive analytics for fraud detection | Mandatory human review for negative assessments | 150 days |
| UK (HMRC) | Hybrid NLP/ML system | Post-decision scoring breakdowns | 55 days |

The Canadian model’s public algorithm registry—detailing weighting given to different eligibility factors—offers particular promise for enhancing UK transparency without compromising compliance effectiveness.

The Future of AI in R&D Tax Transparency

Emerging technologies suggest several developments by 2030:

Real-Time Claim Validation

Integrations between business R&D tracking systems and HMRC’s API could enable:

- Continuous eligibility assessments throughout projects

- Instant feedback on documentation quality

- Cumulative tax relief forecasts updating with each qualifying cost

Pilot schemes with 30 manufacturing firms showed this approach reduced end-of-year claim preparation costs by 78%.
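The idea of a forecast that updates with each qualifying cost reduces to a running total. The Python sketch below assumes a single flat benefit rate, which is a simplification of how relief is actually calculated; the 0.15 rate in the usage example is purely illustrative, and no real HMRC API is involved.

```python
from dataclasses import dataclass, field


@dataclass
class ReliefForecast:
    """Running forecast of R&D relief as qualifying costs are recorded.

    `benefit_rate` (relief per £1 of qualifying spend) is an assumed input;
    the correct value depends on the scheme and the company's circumstances.
    """
    benefit_rate: float
    qualifying_spend: float = 0.0
    history: list[float] = field(default_factory=list)

    def add_cost(self, amount: float) -> float:
        """Record a qualifying cost and return the updated relief forecast."""
        if amount < 0:
            raise ValueError("costs must be non-negative")
        self.qualifying_spend += amount
        forecast = round(self.qualifying_spend * self.benefit_rate, 2)
        self.history.append(forecast)
        return forecast


if __name__ == "__main__":
    forecast = ReliefForecast(benefit_rate=0.15)  # illustrative rate only
    print(forecast.add_cost(10_000))  # 1500.0
    print(forecast.add_cost(2_500))   # 1875.0
```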

Explainable AI (XAI) Implementation

Although EU rules such as the AI Act do not bind HMRC after Brexit, growing regulatory emphasis on a “right to explanation” for automated decisions is pushing government AI systems towards explainability. HMRC is trialling XAI modules that:

- Generate plain-English reasoning for eligibility decisions

- Reference specific project elements that failed assessment criteria

- Suggest evidence additions to overturn negative decisions

Initial tests show 89% comprehension rates among non-specialist business users, a significant improvement over current technical report outputs.
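A simple form of the first three capabilities is template-based explanation generated from per-criterion scores. The Python sketch below is not how HMRC’s trial modules work (their design is not public); the criterion names, the 0.6 pass mark, and the data structure are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class CriterionResult:
    criterion: str           # hypothetical eligibility criterion name
    score: float             # 0-1 score from the assessment model
    project_element: str     # the part of the claim the score relates to
    suggested_evidence: str  # evidence that could address a low score


def explain(results: list[CriterionResult], pass_mark: float = 0.6) -> str:
    """Turn per-criterion scores into plain-English reasons, weakest criterion first."""
    failed = [r for r in results if r.score < pass_mark]
    if not failed:
        return "All assessed criteria met the pass mark."
    lines = []
    for r in sorted(failed, key=lambda x: x.score):
        lines.append(
            f"'{r.project_element}' scored {r.score:.0%} on {r.criterion} "
            f"(pass mark {pass_mark:.0%}). Consider adding {r.suggested_evidence}."
        )
    return "\n".join(lines)


if __name__ == "__main__":
    results = [
        CriterionResult("technical uncertainty", 0.35, "Sensor calibration workstream",
                        "test logs showing failed calibration attempts"),
        CriterionResult("advance sought", 0.82, "Firmware redesign", "benchmark results"),
    ]
    print(explain(results))
```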

Expert Opinions and Industry Reactions

Stakeholder perspectives on HMRC’s AI transparency journey reveal key opportunities:

Tax Advisory Viewpoint

Sarah Reynolds (Partner, Alderbridge Consulting):

_“While AI has improved processing times, many clients feel trapped between automated systems and human caseworkers. We need clearer escalation paths when technical complexity exceeds AI’s capabilities; currently, businesses bear the burden of proving when human judgement is required.”_

Business Leader Perspectives

Dr. Anika Patel (CTO, BioInnovate Solutions):

_“The scoring breakdowns help, but we’re still guessing how to improve certain assessment parameters. If HMRC published anonymised decision trees from their AI models, companies could structure R&D programmes to better align with national priorities, turning compliance into strategic alignment.”_

Legal Community Analysis

Michael Thorne KC (Tax Specialism Chambers):

_“The Elsbury decision established important transparency principles, but we’re seeing new legal complexities around accountability. When AI systems make errors affecting thousands of claims simultaneously, traditional appeals processes collapse under scale. Our justice system needs structural reforms to handle algorithm-induced disputes.”_

Practical Steps Toward Transparent Compliance

Businesses should consider this 12-month roadmap for AI-optimised R&D claims:

| Timeline | Action | Resources Required | Expected Outcome |
|---|---|---|---|
| Months 1-3 | Audit current R&D tracking against HMRC’s AI parsing requirements | Internal/external tax specialists | Gap analysis report with priority fixes |
| Months 4-6 | Implement digital documentation system with AI-friendly tagging | Cloud storage, metadata tools | 30-50% reduction in claim prep time |
| Months 7-9 | Train technical teams on machine-readable reporting standards | HMRC guidance modules | Improved evidence quality scores |
| Months 10-12 | Pilot real-time validation integration (if available) | API access, developer resources | Continuous claim optimisation |

Companies implementing such structured approaches typically see 40-60% reductions in compliance costs and 25%+ increase in successful claim values within two fiscal years.

Frequently Asked Questions (FAQs)

How does AI improve transparency in HMRC R&D tax claims?

AI enhances HMRC R&D tax claim transparency through three primary mechanisms. Firstly, automated systems apply eligibility criteria consistently across all claims, eliminating human interpretation variances that previously caused regional discrepancies. Secondly, businesses receive detailed assessment breakdowns showing exactly how different claim elements scored against HMRC’s technical and financial parameters. Finally, real-time tracking dashboards provide unprecedented visibility into claim processing stages, replacing the previous “black box” experience where companies had no insight until receiving acceptance or enquiry letters.

However, true transparency requires understanding not just outcomes but decision processes. While HMRC’s AI currently provides basic scoring explanations, ongoing developments in Explainable AI (XAI) promise more nuanced reasoning—eventually detailing exactly which project aspects failed to meet specific eligibility thresholds. The UK’s implementation lags behind Canada’s public algorithm registry approach, but recent investments suggest comparable transparency features will emerge by 2027.

What legal rights do businesses have regarding AI-assessed R&D claims?

The Elsbury Tribunal decision established several key rights regarding AI-assessed claims. Businesses can formally request disclosure of whether AI influenced their assessment (FOIA Section 1), though HMRC may redact proprietary algorithm details under the Section 43 commercial interests exemption. UK GDPR Article 22 gives individuals the right to obtain human intervention in solely automated decisions with significant effects; because R&D relief is claimed by companies, which are not data subjects, businesses will usually need to seek human review through HMRC’s enquiry, statutory review, and appeal processes instead.

Critically, the Tribunal affirmed that “meaningful explanation” rights extend beyond simple eligibility outcomes. Businesses are entitled to understand high-level assessment factors, though HMRC isn’t required to disclose algorithmic weights or model architecture. Recent case law (Datalift Ltd v HMRC [2026] UKFTT 0452) further clarified that system errors affecting multiple claims constitute grounds for group appeals, potentially streamlining redress for widespread AI misinterpretations of sector-specific guidance.

How can SMEs prepare R&D claims to minimise AI-related enquiries?

SMEs should adopt four evidence optimisation strategies to reduce AI-driven enquiries:

- Structured Technical Narratives: Use HMRC’s published claim section headers (CIRD 81900) and avoid free-form project descriptions that AI struggles to categorise

- Machine-Readable Cost Allocation: Provide staff timesheets and subcontractor invoices with standardised R&D project codes rather than descriptive text

- Pre-Assessment Validation: Use commercial software with HMRC API integration to test claim readiness against current AI parsing requirements

- Historical Claim Benchmarking: Align new claims’ structures with previously successful submissions from your sector, reducing “outlier” flags

Implementing these measures typically cuts enquiry rates by 55-75%. Crucially, SMEs should avoid over-reliance on AI for claim preparation—while HMRC’s systems favour machine-readable inputs, over-standardisation can strip out necessary technical nuance. Balancing AI optimisation with expert human review remains essential for maximising claim values while minimising compliance risks.
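Historical benchmarking can be approximated before submission with a z-score check on a few claim ratios. This is a generic statistical heuristic, not HMRC’s outlier model; the metric names, the peer data, and the 2-standard-deviation limit are illustrative assumptions.

```python
from statistics import mean, stdev


def outlier_metrics(claim: dict[str, float],
                    peers: list[dict[str, float]],
                    z_limit: float = 2.0) -> dict[str, float]:
    """Return metrics where the claim sits more than `z_limit` standard deviations from peers."""
    flagged: dict[str, float] = {}
    for metric, value in claim.items():
        peer_values = [p[metric] for p in peers if metric in p]
        if len(peer_values) < 2:
            continue  # a standard deviation needs at least two peers
        sd = stdev(peer_values)
        if sd == 0:
            continue  # identical peers give no spread to compare against
        z = (value - mean(peer_values)) / sd
        if abs(z) > z_limit:
            flagged[metric] = round(z, 2)
    return flagged


if __name__ == "__main__":
    # Hypothetical sector peers: share of the claim made up of each cost type.
    peers = [
        {"subcontractor_share": 0.10, "staff_share": 0.70},
        {"subcontractor_share": 0.12, "staff_share": 0.65},
        {"subcontractor_share": 0.08, "staff_share": 0.75},
        {"subcontractor_share": 0.11, "staff_share": 0.72},
        {"subcontractor_share": 0.09, "staff_share": 0.68},
    ]
    draft_claim = {"subcontractor_share": 0.45, "staff_share": 0.70}
    print(outlier_metrics(draft_claim, peers))  # only subcontractor_share is flagged
```

A flagged metric is not an error in itself; it marks where the technical narrative should explain why the project’s cost profile differs from its peers.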

How does HMRC ensure its AI systems don’t discriminate against complex technical claims?

HMRC employs four safeguard mechanisms to prevent algorithmic discrimination against complex claims:

- Sector-Specific Model Training: AI undergoes separate training cycles for biotechnology, advanced materials, and other high-complexity sectors using anonymised historical claims

- Complexity Threshold Escalation: Claims scoring above predefined complexity metrics are automatically routed to specialist human caseworkers

- Bias Audits: Quarterly reviews compare approval rates across sectors, company sizes, and regions to identify disproportionate impacts

- Technical Review Panels: Cross-functional teams (technologists + tax specialists) validate system outputs against live claims monthly

Despite these measures, 2025 data shows 23% higher enquiry rates for first-time claimants in emerging tech sectors versus established industries. HMRC attributes this to limited training data rather than systemic bias, committing to broaden dataset diversity through targeted industry collaboration programmes by 2027.
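Complexity threshold escalation amounts to a routing rule. The Python sketch below assumes both the model’s confidence and a complexity metric are normalised to the range 0-1. The 70% confidence floor mirrors the figure given for complex claim specialists in the next answer; the 0.80 complexity ceiling and the route names are hypothetical.

```python
from enum import Enum


class Route(Enum):
    AI_WITH_SAMPLING = "AI assessment (subject to sample review)"
    SPECIALIST = "specialist human caseworker"


def route_claim(confidence: float, complexity: float,
                confidence_floor: float = 0.70,
                complexity_ceiling: float = 0.80) -> Route:
    """Escalate when the model is unsure or the claim is unusually complex."""
    if not (0.0 <= confidence <= 1.0 and 0.0 <= complexity <= 1.0):
        raise ValueError("scores must be in the range [0, 1]")
    if confidence < confidence_floor or complexity > complexity_ceiling:
        return Route.SPECIALIST
    return Route.AI_WITH_SAMPLING


if __name__ == "__main__":
    print(route_claim(0.92, 0.30).value)  # AI assessment (subject to sample review)
    print(route_claim(0.65, 0.30).value)  # specialist human caseworker
    print(route_claim(0.95, 0.90).value)  # specialist human caseworker
```

Checking either condition independently means a confident model cannot keep a highly complex claim away from a specialist.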

What’s the future of human involvement in R&D tax claim assessments?

Human caseworkers will transition into three specialist roles as AI adoption deepens:

- Complex Claim Specialists: Handling escalated cases where AI confidence scores fall below 70% or sector-specific nuances exceed model training

- AI Trainers: Annotating edge-case claims to expand system capabilities, particularly for emerging technologies like fusion energy and neurocomputing

- Ethical Compliance Officers: Monitoring for algorithmic bias and ensuring decision explanations meet evolving regulatory standards

HMRC estimates human involvement will focus on approximately 15-20% of claims by 2030, primarily those involving novel technologies or borderline eligibility scenarios. However, this reduced volume will be offset by increased case complexity—average handling time per human-reviewed claim is projected to rise from 10 to 35 hours as simpler cases transition to full AI processing. Businesses should prepare for hybrid assessment environments where strategic human engagement remains critical for high-value, technically sophisticated claims.
