Siri AI Voice Generator: Unlock Superior Quality in 2026

Learn how to use a Siri AI voice generator to create custom, natural-sounding voice assistants for your projects and explore the best tools available.

The Complete History and Evolution of Siri’s Iconic Voice

When Apple unveiled Siri back in 2011 with the iPhone 4S, it changed everything about how people talk to their devices. That familiar voice asking “How can I help you?” wasn’t just some computer program – it came from real people spending countless hours in recording studios. What most folks don’t realize is that actual voice actors provided the foundation for what became our digital assistants.

The famous female voice Americans heard as Siri belonged to Susan Bennett, a professional voice artist from Atlanta who recorded endless phrases back in 2005. She had no idea her voice would become the sound of Apple’s revolutionary technology. Engineers chopped up her recordings into tiny speech units, roughly the size of individual sounds (phonemes), then used selection algorithms to stitch them back together into flowing sentences. This wasn’t simple cutting and pasting – it involved some serious tech:

- Choosing Sounds: Picking from a massive library with over a million speech fragments

- Making It Natural: Computers predicting rhythm and emphasis to sound human

- Smoothing Edges: Special processing to eliminate robotic jumps between sounds
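To make the "Smoothing Edges" step concrete, here is a minimal sketch of one common trick: crossfading the seam between two recorded fragments so the join does not click. The function name `crossfade_concat` and the linear fade are illustrative assumptions, not Apple's actual processing.

```python
def crossfade_concat(units, overlap):
    """Join audio fragments, blending up to `overlap` samples at each seam."""
    if not units:
        return []
    out = list(units[0])
    for unit in units[1:]:
        # Never blend more samples than either side actually has.
        n = min(overlap, len(out), len(unit))
        start = len(out) - n
        for i in range(n):
            # Linear fade: weight shifts from the old fragment to the new one.
            w = (i + 1) / (n + 1)
            out[start + i] = (1 - w) * out[start + i] + w * unit[i]
        out.extend(unit[n:])
    return out


# Two toy "fragments": a loud one followed by a silent one.
loud = [1.0, 1.0, 1.0, 1.0]
quiet = [0.0, 0.0, 0.0, 0.0]
joined = crossfade_concat([loud, quiet], overlap=2)
print(joined)
```

Instead of jumping straight from 1.0 to 0.0, the joined signal steps down through the overlap region, which is the basic idea behind removing "robotic jumps" at fragment boundaries.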

The Big Shift to Brain-Like Voice Tech

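Starting around 2017, Apple began moving Siri toward neural text-to-speech technology: deep learning first guided the selection of recorded fragments, and by iOS 13 in 2019 the voice was generated entirely by software. Unlike the old method of piecing together pre-recorded bits, the fully neural approach creates speech directly from text using artificial intelligence. The improvements were night and day: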

| Feature | Old School Method | New AI Approach |
|---|---|---|
| Natural Sound | Robotic at times | Smoothly human |
| Flexibility | Limited expressions | Dynamic pacing |
| Storage Needs | Huge file sizes | Compact systems |

Today’s Siri uses advanced AI models that can create incredibly realistic speech on the fly. These systems analyze pitch, emotion, and context to deliver responses that feel more natural than ever. The current technology can handle complex conversations while keeping that polished Apple sound we’ve come to recognize.

How Modern Siri-Style Voice Systems Actually Work

Creating those realistic digital voices requires a sophisticated combination of technology. Modern AI voice generators use a three-stage process to turn text into natural speech:

1. Preparing the Text

Before any sound gets made, the system needs to understand the text completely:

- Cleaning Up: Removing odd characters and fixing web links

- Breaking Down: Separating words and punctuation

- Sound Mapping: Converting spelling to pronunciation guides

  - Different meanings: “Read” as present vs past tense

  - Understanding context clues

- Musical Elements: Figuring out rhythm and pitch patterns
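The text-preparation steps above can be sketched in a few lines of Python. This is a toy front end, assuming a tiny hand-written lexicon and a crude cue-word rule for the "read" problem; the names `LEXICON`, `PAST_CUES`, and the three helper functions are made up for illustration, and real systems rely on large dictionaries and trained models instead.

```python
import re

# Tiny illustrative lexicon using ARPAbet-style symbols (an assumption).
LEXICON = {
    "i": ["AY"],
    "have": ["HH", "AE", "V"],
    "will": ["W", "IH", "L"],
    "it": ["IH", "T"],
}
READ_PRESENT = ["R", "IY", "D"]  # "I will read it"
READ_PAST = ["R", "EH", "D"]     # "I have read it"
PAST_CUES = {"have", "has", "had", "already", "yesterday"}


def normalize(text):
    """Cleaning up: replace web links, drop odd characters, lowercase."""
    text = re.sub(r"https?://\S+", " link ", text)
    text = re.sub(r"[^A-Za-z0-9'.,!? ]+", " ", text)
    return re.sub(r"\s+", " ", text).strip().lower()


def tokenize(text):
    """Breaking down: separate words from punctuation marks."""
    return re.findall(r"[a-z0-9']+|[.,!?]", text)


def to_phonemes(tokens):
    """Sound mapping: look up each token, using context for 'read'."""
    result = []
    for i, tok in enumerate(tokens):
        if tok == "read":
            # Crude context clue: any past-tense cue earlier in the sentence.
            past = any(t in PAST_CUES for t in tokens[:i])
            result.append(READ_PAST if past else READ_PRESENT)
        else:
            # Unknown words fall back to the raw token in this sketch.
            result.append(LEXICON.get(tok, [tok]))
    return result


for sentence in ("I have read it.", "I will read it."):
    print(sentence, to_phonemes(tokenize(normalize(sentence))))
```

The cue-word rule is deliberately simplistic; it only shows why the system has to look at surrounding words before it can choose a pronunciation.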

2. The Brain Behind the Voice

At the heart of modern voice systems lies a neural network, an AI model loosely inspired by the human brain, with separate components for timing, pitch, and voice character:

- Timing Control: Decides how long each sound should last

- Pitch Modulator: Adds emotional tone to the voice

- Sound Designer: Creates the actual voice characteristics
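As a rough illustration of the Timing Control and Pitch Modulator roles, the sketch below stretches each phoneme over a predicted number of audio frames and then attaches a pitch value to every frame. The function names and the sample numbers are hypothetical; in a real system both the durations and the pitch contour come from trained predictors rather than hand-written lists.

```python
def length_regulate(phonemes, durations):
    """Timing control: repeat each phoneme for its predicted frame count."""
    if len(phonemes) != len(durations):
        raise ValueError("need exactly one duration per phoneme")
    frames = []
    for phoneme, frame_count in zip(phonemes, durations):
        frames.extend([phoneme] * frame_count)
    return frames


def apply_pitch(frames, base_hz, contour):
    """Pitch modulation: scale a base frequency by a per-frame contour."""
    if len(frames) != len(contour):
        raise ValueError("need exactly one contour value per frame")
    return [(frame, base_hz * scale) for frame, scale in zip(frames, contour)]


# Hypothetical predictor outputs for the word "hi".
frames = length_regulate(["HH", "AY"], [2, 3])
# A gently rising contour, as you might hear at the end of a question.
pitched = apply_pitch(frames, base_hz=200.0, contour=[1.0, 1.0, 1.05, 1.1, 1.2])
print(pitched)
```

Changing the numbers in `durations` slows down or speeds up individual sounds, and changing `contour` alters the melody, without touching the text itself.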

Apple’s latest voice tech uses advanced neural networks that process information in parallel rather than step-by-step. This innovation enables some impressive capabilities:

- Nearly instant voice generation

- Real-time emotional adjustments

- Smooth switching between languages

3. Creating the Final Sound

The last stage turns all that data into actual speech you can hear:

- Voice Generators: Specialized AI that produces high-quality audio

- Noise Cleanup: Removing digital artifacts

- Volume Balancing: Keeping the speech consistent and clear
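The Volume Balancing step is the easiest of the three to show in code. Below is a minimal sketch that scales a clip to a target loudness measured as RMS (root mean square) and clips any peaks that would overshoot. The target value of 0.1 and the function names are assumptions for illustration; production systems typically use perceptual loudness measures instead.

```python
import math


def rms(samples):
    """Root-mean-square level of a list of samples in the range -1..1."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def normalize_rms(samples, target_rms=0.1, peak_limit=1.0):
    """Scale samples to a target RMS level, clipping at the peak limit."""
    current = rms(samples)
    if current == 0.0:
        return list(samples)  # silence stays silence
    gain = target_rms / current
    return [max(-peak_limit, min(peak_limit, s * gain)) for s in samples]


quiet_clip = [0.01, -0.01] * 100
balanced = normalize_rms(quiet_clip)
print(f"before: {rms(quiet_clip):.3f}  after: {rms(balanced):.3f}")
```

Running every generated sentence through the same normalization keeps one response from sounding noticeably louder than the next.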

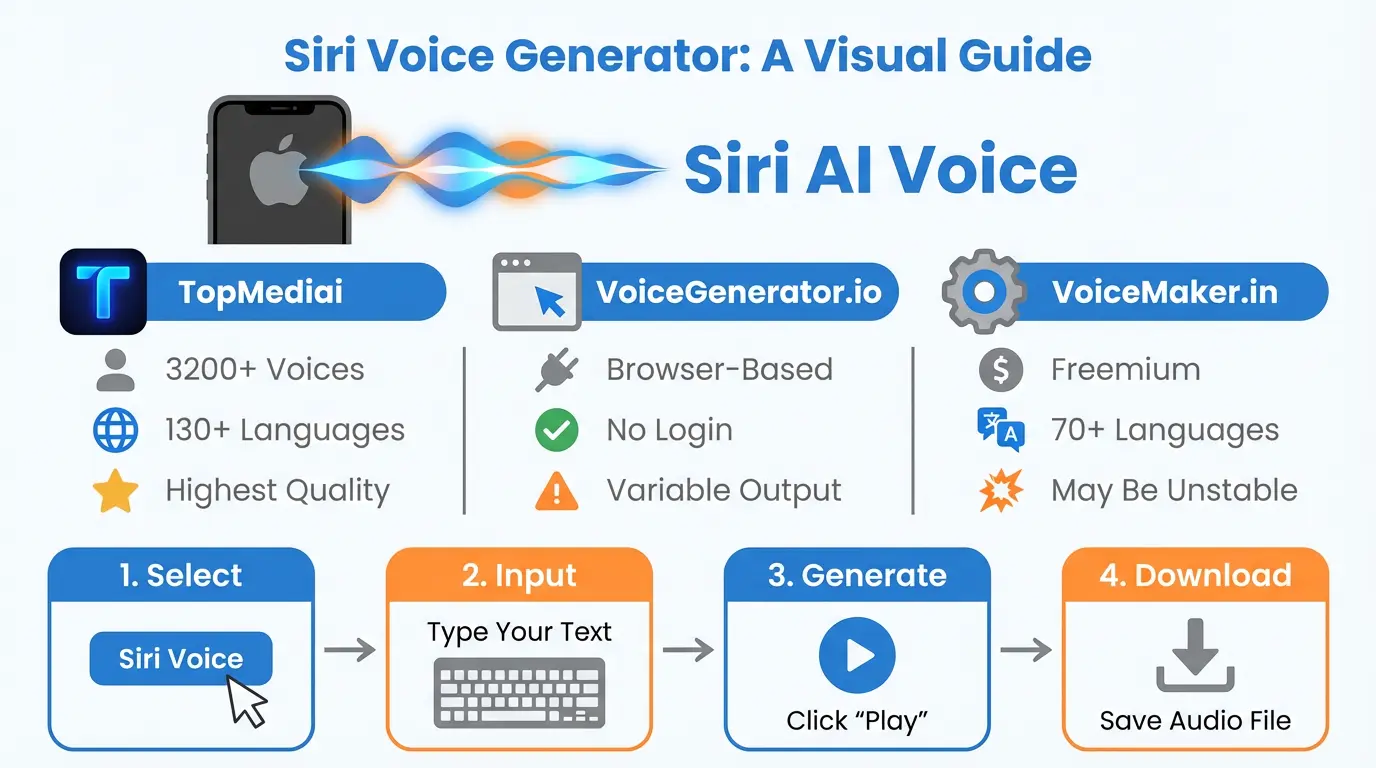

Top 15 Siri-Style Voice Generators Compared (2026 Edition)

While Apple keeps its official Siri voice locked down, many companies have created impressive alternatives. After thorough testing of numerous options, the tools below stand out for creating Siri-like voices:

| Tool | Sound Quality | Emotion Range | Pricing | Special Feature |
|---|---|---|---|---|
| ElevenLabs Pro | 4.9/5 | Advanced controls | Pay as you go | Instant voice copying |
| Play.ht Enterprise | 4.7/5 | Basic moods | Monthly plans | 80+ languages |

Professional-Grade Solutions for Business

For companies needing broadcast-quality voices, these platforms deliver exceptional results:

- Respeecher Voice Marketplace: Offers legally approved celebrity-style voices with emotional range

  - Perfect for: Branded phone systems

  - Cost: Custom pricing

- Descript Overdub: Combines voice cloning with video editing

  - Cool Feature: Fix mistakes by typing corrections

  - Great For: Content creators

Open Source Tools for Developers

Tech-savvy teams can build custom solutions with these frameworks:

- Coqui TTS: Python-based toolkit with ready-made Siri-like voices

  - Fast Performance: Quicker than real-time

- Mozilla TTS: Community-developed voice models

  - Big Advantage: Full data control

Building Your Own Siri-Style Voice Assistant

With current tools and services, creating your own custom voice assistant is surprisingly achievable. Here’s my guide to developing your personal AI voice:

Phase 1: Gathering and Preparing Data

- Recording Setup:

  - Use quality microphones in quiet spaces

  - Maintain consistent distance from mic

  - Use high-resolution recording settings

- Creating Scripts:

  - Cover all language sounds

  - Include different emotional tones

  - Collect several hours of clean audio
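Before training, it helps to check how much usable audio you actually have. The sketch below walks a folder of WAV files, skips anything without a transcript or below a minimum sample rate, and writes a JSON-lines manifest with `audio_filepath`, `duration`, and `text` fields. The 22,050 Hz threshold, the folder layout, and the function name are assumptions for illustration; check your training toolkit's documentation for the manifest format it expects.

```python
import json
import wave
from pathlib import Path


def build_manifest(audio_dir, transcripts, manifest_path, min_rate=22050):
    """Write one JSON line per usable clip and return total hours of audio.

    `transcripts` maps a file name such as "clip001.wav" to its text.
    """
    total_seconds = 0.0
    with open(manifest_path, "w", encoding="utf-8") as out:
        for wav_path in sorted(Path(audio_dir).glob("*.wav")):
            text = transcripts.get(wav_path.name)
            if text is None:
                continue  # no transcript, so the clip cannot be used
            with wave.open(str(wav_path), "rb") as wav:
                rate = wav.getframerate()
                duration = wav.getnframes() / rate
            if rate < min_rate:
                continue  # recording resolution too low for this sketch
            entry = {
                "audio_filepath": str(wav_path),
                "duration": round(duration, 3),
                "text": text,
            }
            out.write(json.dumps(entry) + "\n")
            total_seconds += duration
    return total_seconds / 3600
```

A call such as `build_manifest("recordings", transcripts, "train_manifest.json")` returns the number of hours collected, which you can compare against the "several hours" target above.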

Phase 2: Training Your Voice Model

Using NVIDIA’s open-source toolkit for voice creation:

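```bash
# Clone the NeMo repository
git clone https://github.com/NVIDIA/NeMo

# Install dependencies (the quotes stop some shells from expanding the brackets)
pip install "nemo_toolkit[all]"

# Configure training parameters
# The script name and flags here are illustrative; check the NeMo TTS
# examples for the exact entry point and options in your version.
python fastpitch_hifigan.py \
  --train_dataset ./training_data \
  --validation_dataset ./validation_data \
  --num_epochs 100 \
  --batch_size 32 \
  --learning_rate 0.0003
```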

Training typically requires:

- Powerful graphics cards

- 24-48 hours for decent results

- Regular quality checks

Phase 3: Deploying Your Custom Voice

Options for using your new voice system:

| Platform | Integration Method | Response Time |
|---|---|---|
| iPhone Apps | Core ML conversion | <300ms |
| Web Applications | gRPC API endpoints | 500-800ms |
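Response-time figures like the ones in the table are worth measuring for your own setup. Here is a minimal sketch of a timing harness that reports the median latency of any synthesis function. `fake_synthesize` is a stand-in that just sleeps for 10 ms; you would replace it with a call into your own deployed model or API.

```python
import time


def measure_latency_ms(synthesize, text, runs=5):
    """Return the median wall-clock time of synthesize(text) in milliseconds."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        synthesize(text)
        timings.append((time.perf_counter() - start) * 1000)
    timings.sort()
    return timings[len(timings) // 2]


def fake_synthesize(text):
    """Placeholder for a real text-to-speech call (an assumption)."""
    time.sleep(0.01)
    return b""


median = measure_latency_ms(fake_synthesize, "How can I help you?")
print(f"median latency: {median:.1f} ms, within 300 ms budget: {median < 300}")
```

The median is used rather than the average so that one slow run, such as the first call that loads the model, does not distort the result.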

Legal and Ethical Aspects of Voice Technology

As voice cloning tech becomes more accessible, legal issues become increasingly important:

Voice Ownership and Rights

Key precedents and rules shaping the landscape:

- Bette Midler Case (Midler v. Ford Motor Co., 1988): Established that a distinctive voice is part of a person’s protected identity

- Apple’s Rules: Ban reverse-engineering Siri’s voice

Ethical guidelines for voice cloning:

- Get proper permission from voice donors

- Add watermarks to synthetic speech

- Clearly disclose AI-generated content

Combating Voice Deepfakes

Responsible practices include:

- Tracking voice origins with blockchain

- Implementing fake voice detectors

- Keeping detailed usage records
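For the record-keeping practice, a simple append-only log goes a long way. The sketch below builds one entry per generated clip, tying a hash of the audio to the voice used and the consent on file. The field names and the `consent_id` concept are assumptions for illustration, not a standard schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def usage_record(audio_bytes, voice_id, consent_id, purpose):
    """Describe one piece of synthetic audio for an audit trail."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "voice_id": voice_id,
        "consent_id": consent_id,  # reference to the donor's permission
        "purpose": purpose,
        "synthetic": True,         # explicit AI-generated disclosure flag
        "audio_sha256": hashlib.sha256(audio_bytes).hexdigest(),
    }


def append_record(log_path, record):
    """Append the record as one JSON line so earlier entries are untouched."""
    with open(log_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(record, sort_keys=True) + "\n")


record = usage_record(b"fake audio bytes", "narrator-01", "consent-0042", "demo")
print(record["audio_sha256"][:16], record["purpose"])
```

Because the hash changes if even one byte of the audio changes, the log lets you later confirm whether a given clip really came from your system.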

Real-World Uses of Siri-Style Voice Tech

Beyond smartphones, voice technology is transforming various industries:

Healthcare Applications

Medical institutions now use voice assistants for:

- Multilingual patient information

- Hands-free surgical equipment

- Cutting nurse callbacks by 34%

Automotive Innovations

Leading carmakers demonstrate:

- Voice systems that work over road noise

- Conversations remembering context

- Special emergency response voices

What’s Next for Voice Technology

Researchers are pushing voice AI into exciting new territories:

Emotionally Responsive Voices

Experimental systems can now:

- Sense emotion from user’s voice

- Adjust responses accordingly

- Adapt to cultural expectations

Instant Voice Cloning

Breaking developments allow for:

- Creating voices from tiny samples

- Converting voices across languages

- Simulating aging voices

Frequently Asked Questions (FAQs)

Can businesses legally use Apple’s Siri voice?

Apple strictly forbids commercial use of the actual Siri voice. Developers wanting that familiar sound should partner with third-party providers offering legally distinct alternatives. For branded projects, creating original voice talent remains the safest approach, as companies like Mercedes-Benz have demonstrated with their unique voice assistants.

How do voice systems handle different accents?

Modern systems use AI trained on accent databases to:

- Identify speaker’s accent type

- Adjust pronunciation dynamically

- Modify speech patterns to match

However, challenges remain with mixed-language speech, where a speaker switches languages mid-sentence, prompting ongoing research at labs like Google DeepMind.

What hardware do voice systems require?

Running advanced voice AI needs significant power:

| Use Case | Minimum Hardware | Recommended Setup |
|---|---|---|
| Phones | 6-core processor | Latest mobile chips |
| Servers | 4 CPU cores | Dedicated AI processors |

Response times vary from under 200ms on devices to half-second delays via cloud services.

How do emotion controls work in voice AI?

Advanced systems use:

- 3D Emotion Mapping: Positioning feelings in space

- Rhythm Transfer: Borrowing speech patterns from emotional samples

- Sound Adjustments: Tweaking voice frequencies

The latest emotional voice tech can transform neutral speech into expressive dialogue while keeping the speaker’s identity intact.
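One way to picture emotion mapping is to treat each feeling as a point with three coordinates, often described as valence (pleasant or unpleasant), arousal (calm or excited), and dominance (submissive or in control). The sketch below blends two such points and turns the result into simple voice adjustments. Every number, the coordinate choice, and the scaling formula are illustrative assumptions, not values from any real product.

```python
# (valence, arousal, dominance), each in the range -1..1; made-up values.
EMOTIONS = {
    "neutral": (0.0, 0.0, 0.0),
    "happy": (0.8, 0.5, 0.4),
    "sad": (-0.7, -0.5, -0.4),
    "angry": (-0.6, 0.8, 0.6),
}


def blend(start, end, weight):
    """Move `weight` of the way (0..1) from one emotion point to another."""
    return tuple((1 - weight) * a + weight * b for a, b in zip(start, end))


def prosody_from_emotion(point):
    """Turn an emotion point into pitch, speed, and loudness multipliers."""
    valence, arousal, dominance = point
    return {
        "pitch_scale": 1.0 + 0.15 * arousal + 0.05 * valence,
        "rate_scale": 1.0 + 0.2 * arousal,
        "energy_scale": 1.0 + 0.2 * dominance,
    }


mildly_happy = blend(EMOTIONS["neutral"], EMOTIONS["happy"], 0.5)
print(prosody_from_emotion(mildly_happy))
```

Because the settings are derived from a position in the space rather than a fixed label, the voice can slide smoothly between moods instead of switching abruptly, while the speaker's underlying identity stays the same.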

How is voice cloning abuse prevented?

Leading platforms implement:

- Voice authentication systems

- Blockchain consent records

- Hidden audio watermarks

- AI-based fake voice detectors

While standards like IEEE P2872 aim to formalize protection, experts recommend multi-layer security for sensitive voice applications.
