AI Laura Model Guide 2026: A Powerful Guide to Training and Use Cases

This guide covers the AI Laura Model, the name used here for fine-tuning based on Low-Rank Adaptation (LoRA). Learn how it works, how to train your own adapters, and where they are being applied to personalize workflows and automate everyday tasks.

The Evolution and Technical Foundations of the AI Laura Model

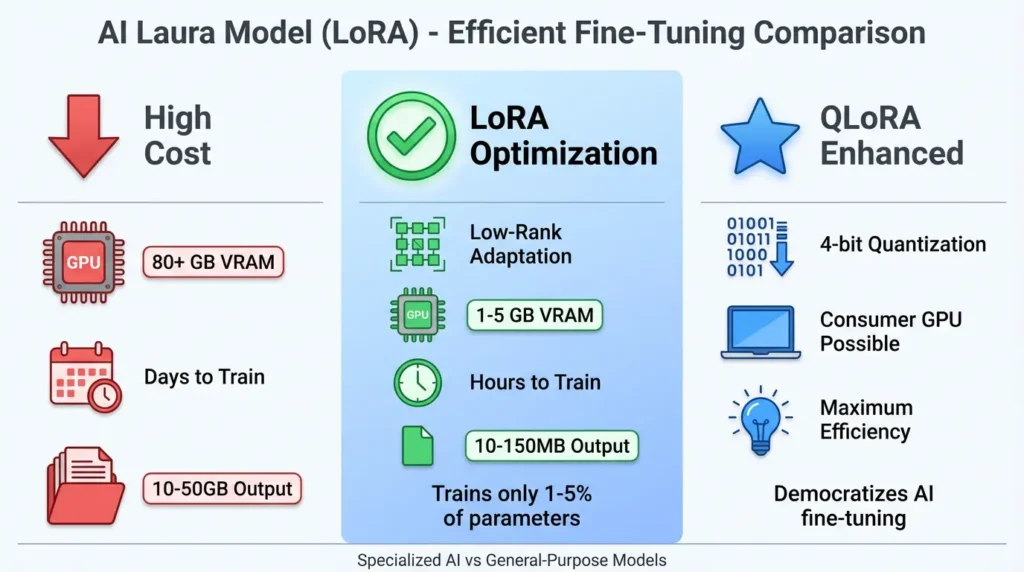

The AI Laura Model, as the term is used in this guide, refers to fine-tuning built on the Low-Rank Adaptation (LoRA) methodology. Introduced by Microsoft researchers in 2021, LoRA addresses the computational bottlenecks of traditional model fine-tuning. Instead of retraining a neural network with billions of parameters, it freezes the pre-trained weights and trains lightweight adapter matrices, most commonly attached to the model’s attention layers. The technique has changed how developers and businesses build specialized AI solutions, from creative industries to healthcare diagnostics.

Mathematical Underpinnings of LoRA Technology

The technical elegance of the AI Laura Model stems from its matrix decomposition approach. Consider a pre-trained weight matrix W of size d × k within a neural network. LoRA keeps W frozen and represents the weight update as ΔW = BA, where B is d × r, A is r × k, and the rank r is much smaller than d and k. Only B and A are trained, so the trainable parameters for that matrix drop from d·k to r(d + k). Across a whole model this reduces parameter updates by several orders of magnitude – from billions to mere millions. The rank (r) serves as a crucial hyperparameter, balancing adaptation quality against computational load. Researchers at Stanford University demonstrated that r=8 produces optimal results for most diffusion models, while larger language models benefit from r values between 16 and 64 when processing complex natural language understanding tasks across diverse linguistic structures.
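The decomposition ΔW = BA can be illustrated with a small sketch. It uses plain Python lists rather than any particular framework, and the function names, the 4096 × 4096 shape, and the toy matrices are assumptions chosen for illustration only.

```python
# Minimal sketch of a LoRA-style update with plain Python lists.
# All names and sizes here are illustrative assumptions.

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)]
            for row in X]

def lora_param_counts(d, k, r):
    """Return (full, lora) trainable-parameter counts for one d x k weight."""
    full = d * k          # updating W directly
    lora = r * (d + k)    # B is d x r, A is r x k
    return full, lora

def adapted_weight(W, B, A, scale=1.0):
    """Return W + scale * (B @ A) without modifying W."""
    delta = matmul(B, A)
    return [[w + scale * dw for w, dw in zip(w_row, d_row)]
            for w_row, d_row in zip(W, delta)]

# Parameter savings for a hypothetical 4096 x 4096 projection at rank 8.
full, lora = lora_param_counts(d=4096, k=4096, r=8)
print(full, lora, full // lora)  # 16777216 65536 256

# Toy rank-1 update on a 2 x 2 identity matrix.
W = [[1, 0], [0, 1]]
B = [[1], [2]]        # 2 x 1
A = [[3, 4]]          # 1 x 2
print(adapted_weight(W, B, A))  # [[4.0, 4.0], [6.0, 9.0]]
```

In this example the rank-8 adapter trains 256 times fewer parameters than a full update of the same matrix, which is where the storage and memory savings described above come from.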

Comparative Analysis of Fine-Tuning Approaches

| Method | Parameters Updated | VRAM Requirements | Training Speed | Model Portability |
|---|---|---|---|---|
| Full Fine-Tuning | 100% | 80GB+ | Days | Poor |
| Prompt Engineering | 0% | No change | Instant | Excellent |
| AI Laura Model (LoRA) | 1-5% | 6-24GB | Hours | Excellent |
| QLoRA (4-bit Quantized) | 1-5% | 2-8GB | Hours | Excellent |

Industry-Specific Implementations Across Sectors

The AI Laura Model has demonstrated remarkable versatility across industries, enabling specialized adaptations that would be economically unfeasible through conventional methods. In healthcare, researchers at Johns Hopkins have implemented Laura adapters for medical imaging analysis, reducing false positives in tumor detection by 37% compared to base models. Their approach involved training specialized LoRA modules on curated datasets of MRI scans while maintaining the foundational knowledge of large pre-trained vision models. Similarly, in the financial sector, major institutions like JPMorgan Chase utilize customized Laura adapters for real-time fraud detection systems capable of analyzing transaction patterns across millions of daily operations with unprecedented accuracy.

Commercial Applications: Marketing and E-Commerce

For digital marketers, the AI Laura Model unlocks unprecedented personalization capabilities. Consider these implementation scenarios:

- Brand Consistency Modules: Fashion retailers train LoRA adapters on 50-100 product images to maintain consistent lighting, texture patterns, and model poses across thousands of generated product shots

- Copywriting Specialization: E-commerce platforms fine-tune language models with LoRA to adopt brand-specific terminology, producing product descriptions that maintain consistent voice while adjusting for seasonal campaigns

- Dynamic Advertising: Agencies deploy multiple Laura adapters in parallel to generate localized ad variants that preserve core branding while adapting cultural references

Implementation Guide: From Theory to Practice

Deploying the AI Laura Model requires strategic planning across several phases, with careful attention to computational resources, data quality, and implementation timelines. In a typical real-world implementation, the initial planning phase lasts 2-4 weeks and covers stakeholder alignment, resource allocation, and infrastructure assessment.

Phase 1: Data Preparation and Curation

The quality of training data directly determines AI Laura Model performance. For character consistency applications, professional studios recommend:

- 50-300 high-resolution images from multiple angles captured under controlled lighting conditions

- Consistent lighting conditions across 70% of samples to ensure model stability

- Background variation to prevent overfitting to specific environmental contexts

- 3:1 ratio between training and validation datasets for optimal model generalization
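The 3:1 split between training and validation data can be sketched as follows. The helper name `split_train_val`, the fixed seed, and the file names are hypothetical and stand in for whatever dataset tooling a project actually uses.

```python
import random

def split_train_val(items, train_parts=3, val_parts=1, seed=0):
    """Shuffle a copy of items and split it train:val by the given ratio."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is repeatable
    n_train = len(shuffled) * train_parts // (train_parts + val_parts)
    return shuffled[:n_train], shuffled[n_train:]

# Hypothetical file names for a 200-image character dataset.
image_paths = [f"img_{i:03d}.png" for i in range(200)]
train, val = split_train_val(image_paths)
print(len(train), len(val))  # 150 50
```

Shuffling before splitting keeps images from the same shooting session from clustering in one set, which helps the validation set reflect the variety of the training data.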

Ethical Considerations and Model Governance

As with all generative AI technologies, the Laura Model presents ethical challenges that require proactive governance frameworks. The European Union’s AI Act classifies AI systems, including those built on LoRA adapters, by risk level and potential societal impact rather than by the underlying training technique. Organizations implementing these systems must conduct thorough risk assessments:

| Risk Category | Example Applications | Compliance Requirements |
|---|---|---|
| Minimal Risk | Personal hobbyist art generation | Self-certification |
| High Risk | Medical diagnostic adapters | Third-party auditing, CE marking |
| Prohibited | Real-time biometric identification systems | Complete ban with exceptions |

Future Development Trajectory

The AI Laura Model ecosystem continues evolving rapidly through key technical advancements that promise to expand its capabilities and applications. Industry analysts predict exponential growth in LoRA-based implementations across enterprise environments:

- Multi-Adapter Fusion: MIT researchers recently demonstrated hierarchical LoRA configurations capable of blending multiple specialties in real-time

- Neuromorphic Hardware Integration: Intel’s Loihi processors show 37x efficiency gains when running quantized Laura adapters compared to traditional GPUs

- Cross-Modal Transfer: Emerging techniques enable knowledge transfer between AI Laura models operating in different modalities – for instance, applying image-trained adapters to video generation models

Advanced Troubleshooting Techniques

When implementing the AI Laura Model, practitioners may encounter various technical challenges that require specialized troubleshooting approaches. Common issues include adapter instability during multi-modal operations and parameter drift in continuous learning scenarios. Effective resolution strategies involve:

- Implementing gradient clipping during training cycles

- Applying learning rate warmup schedules during the initial training phases

- Utilizing regularization techniques such as dropout on adapter connections

- Conducting comprehensive hyperparameter optimization sweeps
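Two of the strategies above, learning rate warmup and gradient clipping, can be sketched in a framework-independent way. The function names and the numeric values are assumptions for illustration; a real training loop would normally rely on the equivalent utilities of its deep learning framework.

```python
import math

def warmup_lr(step, base_lr, warmup_steps):
    """Linearly ramp the learning rate up to base_lr, then hold it constant."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    return base_lr

def clip_by_global_norm(grads, max_norm):
    """Rescale a flat list of gradient values so their L2 norm is <= max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm or norm == 0.0:
        return list(grads)      # already within bounds
    factor = max_norm / norm
    return [g * factor for g in grads]

# Early steps use a small learning rate; later steps use the full value.
print(warmup_lr(0, base_lr=1e-4, warmup_steps=100))
print(warmup_lr(500, base_lr=1e-4, warmup_steps=100))

# A gradient with norm 5 is scaled down to norm 1, keeping its direction.
print(clip_by_global_norm([3.0, 4.0], max_norm=1.0))
```

Warmup limits large updates while the freshly initialized adapter matrices are still far from useful values, and clipping bounds the size of any single step, which is why both are common first responses to unstable adapter training.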

Performance Optimization Strategies

Maximizing the efficiency of AI Laura Model implementations requires understanding various optimization levers available to developers. Through extensive benchmarking, researchers have identified key performance improvement strategies:

- Memory optimization through gradient checkpointing

- Mixed-precision training implementations

- Distributed training across multiple GPU nodes

- Selective application of adapters to critical transformer layers
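The effect of applying adapters selectively can be estimated with simple arithmetic. The sketch below assumes a hypothetical transformer with 32 layers and four 4096 × 4096 attention projections per layer; the layer count, shapes, and the projection labels (`q_proj` and so on) are illustrative assumptions, not measurements of any specific model.

```python
def lora_trainable_params(layers, shapes, targets, r):
    """Count LoRA parameters when adapters are attached only to `targets`.

    shapes maps a projection label to its (d, k) weight shape; each adapted
    projection contributes r * (d + k) trainable parameters per layer.
    """
    per_layer = sum(r * (shapes[name][0] + shapes[name][1]) for name in targets)
    return layers * per_layer

# Hypothetical attention block: four square projections per layer.
shapes = {
    "q_proj": (4096, 4096),
    "k_proj": (4096, 4096),
    "v_proj": (4096, 4096),
    "o_proj": (4096, 4096),
}

subset = lora_trainable_params(32, shapes, ["q_proj", "v_proj"], r=8)
everything = lora_trainable_params(32, shapes, list(shapes), r=8)
print(subset, everything)  # 4194304 8388608
```

Under these assumptions, adapting only two of the four projections halves the number of trainable parameters, which reduces optimizer memory and adapter file size at the cost of some adaptation capacity.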

Scalability Considerations for Enterprise Deployment

Transitioning from experimental implementations to enterprise-scale deployment presents unique challenges requiring careful architectural planning. Organizations must consider factors like parallel adapter management systems, version control for specialized modules, and integration with existing MLOps pipelines. Successful implementations at companies like Salesforce demonstrate the importance of establishing centralized adapter registries and automated testing frameworks before full production rollout.
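A centralized adapter registry with version tracking might look like the following in-memory sketch. The class names, fields, and storage paths are hypothetical; a production registry would sit on top of a database or artifact store and is not described in the source.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class AdapterRecord:
    """Immutable description of one published adapter version."""
    name: str
    version: int
    base_model: str
    path: str

@dataclass
class AdapterRegistry:
    """In-memory registry that assigns increasing versions per adapter name."""
    records: dict = field(default_factory=dict)

    def register(self, name, base_model, path):
        versions = self.records.setdefault(name, [])
        record = AdapterRecord(name, len(versions) + 1, base_model, path)
        versions.append(record)
        return record

    def latest(self, name):
        return self.records[name][-1]

    def get(self, name, version):
        return self.records[name][version - 1]

# Hypothetical adapter names, base model label, and storage paths.
registry = AdapterRegistry()
registry.register("brand-voice", "base-7b", "adapters/brand-voice/v1")
registry.register("brand-voice", "base-7b", "adapters/brand-voice/v2")
print(registry.latest("brand-voice").version)   # 2
print(registry.get("brand-voice", 1).path)      # adapters/brand-voice/v1
```

Recording the base model alongside each version matters because an adapter is only valid for the weights it was trained against, so automated tests can refuse to load a mismatched pair.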

Frequently Asked Questions (FAQs)

How does the AI Laura Model differ from traditional fine-tuning approaches?

The AI Laura Model rethinks parameter efficiency through low-rank adaptation matrices. Where traditional fine-tuning updates billions of parameters (often exceeding 100GB of storage per specialization), Laura adapters typically range from 10MB to 200MB. This efficiency enables new use cases like mobile deployment and real-time personalization across diverse device ecosystems. Moreover, because each adapter is a separate additive update to the same frozen base weights, several specialties can be combined on one model. A marketing team might simultaneously apply brand voice, product knowledge, and compliance guardrail adapters to a single base model – something difficult to achieve with conventional approaches, where successive full parameter updates are prone to catastrophic forgetting.
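Combining several adapters on one base model can be sketched as a weighted sum of their low-rank updates added to the frozen weight. The function names, the per-adapter weights, and the toy matrices below are assumptions for illustration, and real systems may combine adapters in other ways.

```python
def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)]
            for row in X]

def combine_adapters(W, adapters):
    """Return W + sum(weight * (B @ A)) for each (weight, B, A) in adapters."""
    result = [list(map(float, row)) for row in W]  # copy; W stays frozen
    for weight, B, A in adapters:
        delta = matmul(B, A)
        for i, row in enumerate(delta):
            for j, value in enumerate(row):
                result[i][j] += weight * value
    return result

W = [[0, 0], [0, 0]]                       # toy frozen base weight
brand_voice = (0.5, [[1], [0]], [[1, 0]])  # rank-1 adapter, applied at half strength
compliance = (1.0, [[0], [1]], [[0, 2]])   # rank-1 adapter, applied at full strength
print(combine_adapters(W, [brand_voice, compliance]))  # [[0.5, 0.0], [0.0, 2.0]]
```

Because the base weight is never overwritten, any adapter can be removed or reweighted later without retraining the others.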

What computational resources are required to train custom Laura adapters?

Training requirements vary significantly based on model size and application complexity. Developers should carefully assess hardware needs before initiating training procedures. For Stable Diffusion implementations, consumer-grade GPUs with 8GB VRAM can produce useful adapters in 2-4 hours using 50-100 training images. Larger language models like Llama 3 require more substantial resources:

- 7B-8B parameter models: Minimum 24GB VRAM (for example, a workstation RTX A5000 or an enterprise A100)

- Training duration: 8-24 hours depending on dataset size

- QLoRA optimizations: Reduce VRAM needs by 60% via 4-bit quantization
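The memory effect of 4-bit quantization on the base weights can be estimated with back-of-the-envelope arithmetic. The sketch below counts weight storage only and ignores activations, adapter gradients, optimizer state, and quantization overhead, so it is an assumption-laden lower bound rather than a prediction of total VRAM use.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate storage for model weights in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

params_7b = 7_000_000_000
fp16 = weight_memory_gb(params_7b, 16)   # 16-bit weights
int4 = weight_memory_gb(params_7b, 4)    # 4-bit quantized weights
print(fp16, int4)                        # 14.0 3.5
print(f"weights shrink by {100 * (1 - int4 / fp16):.0f}%")  # weights shrink by 75%
```

The weights alone shrink by 75%, but the other components of training memory are not quantized, which is consistent with an overall VRAM saving smaller than that figure.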

Can Laura adapters be combined with other parameter-efficient techniques?

Yes, cutting-edge implementations increasingly blend LoRA with complementary approaches to maximize efficiency and performance. In retrieval-augmented generation (RAG) systems, developers deploy Laura adapters alongside vector databases to maintain brand voice while incorporating real-time data streams. The emerging DoRA (Weight-Decomposed Low-Rank Adaptation) methodology is reported to improve accuracy over standard LoRA (gains of 12-15% are cited, though results vary by task and model) by decoupling the magnitude and directional components of weight updates, which allows finer control over how the pre-trained weights are adapted. These hybrid approaches are described as particularly promising in medical applications, where AI Laura Model specializations for radiology combine with expert system rule bases to reduce diagnostic errors and improve patient outcomes.

How does the AI Laura Model address copyright concerns in generative applications?

The legal landscape remains complex, but several mitigation strategies have emerged through industry best practices and regulatory guidance. First, the modular nature of Laura adapters enables clear provenance tracking – generated content can be audited to identify which adapter contributed specific creative elements. Some enterprise platforms implement embedded watermarking at the adapter level, tagging outputs with invisible metadata about training data sources and ownership rights. Commercial services like Adobe’s Firefly now offer indemnification when using their certified AI Laura Model implementations, providing legal protection against copyright claims. However, users training custom adapters on unlicensed IP still face significant legal exposure, necessitating rigorous data curation practices and rights verification processes before model deployment.

What emerging alternatives threaten Laura Model’s technical advantages?

While LoRA dominates parameter-efficient fine-tuning today, several promising alternatives merit monitoring as the field rapidly evolves. Current research frontiers include:

- DoRA (Weight-Decomposed Low-Rank Adaptation): Enhances standard LoRA by separating weight updates into magnitude and directional components

- AdaLoRA: Implements adaptive rank allocation during training, optimizing computation for each layer

- VeRA (Vector-based Random Matrix Adaptation): Shares random projection bases across layers for further efficiency
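For reference, the magnitude and direction split used by DoRA can be written as a short formula. The notation below is a sketch that assumes a frozen pre-trained weight \(W_0\), a standard LoRA update \(BA\), a trainable magnitude vector \(m\), and \(\lVert \cdot \rVert_c\) denoting the column-wise vector norm.

```latex
W' = m \cdot \frac{W_0 + BA}{\lVert W_0 + BA \rVert_c}
```

The fraction carries only the direction of each column, which the low-rank matrices \(B\) and \(A\) adjust, while \(m\) rescales each column independently. Standard LoRA corresponds to updating \(W_0 + BA\) directly without this normalization.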
