AI SaaS Product Classification Criteria: A Framework for 2026 Success

Learn the key AI SaaS product classification criteria to effectively categorize and evaluate solutions. Discover the essential factors like pricing models, technical architecture, and use cases.

The Critical Need for AI SaaS Product Classification

The rapid expansion of artificial intelligence in the Software-as-a-Service sector presents both exciting possibilities and complex hurdles. Businesses everywhere are scrambling to implement AI-driven tools, resulting in a crowded marketplace filled with applications claiming groundbreaking features – yet many companies lack a structured approach to assess these options properly. Establishing clear criteria for classifying AI SaaS products has become crucial, forming the basis for smart purchasing decisions, risk reduction, and strategic tech investments.

More than 60% of technology executives admit struggling to compare AI SaaS offerings because vendors use inconsistent labeling and exaggerate performance claims (2025 SaaS Industry Report). This confusion leads to real problems – wasted budgets, unsuccessful deployments, and regulatory penalties. Developing a multifaceted classification system that considers technical specifications, operational factors, and strategic fit provides the solution.

Transformative Business Impact of Proper Classification

When implemented correctly, AI SaaS classification delivers significant advantages across departments:

| Business Function | Impact of Proper Classification | Quantifiable Benefit |
|---|---|---|
| Procurement | 70% reduction in vendor evaluation time | $183,000 average annual savings (Enterprise IT Survey 2025) |
| Compliance | 92% improvement in regulatory alignment | 46% reduction in compliance-related fines |
| Implementation | 2.3x faster deployment cycles | $2.4M risk-adjusted value per implementation |
| ROI Realization | 68% higher ROI from AI investments | 17.4% average EBITDA improvement |

These improvements stem from classification standards that go beyond surface-level feature checks to assess architectural choices, data handling methods, and alignment with both current needs and future plans.

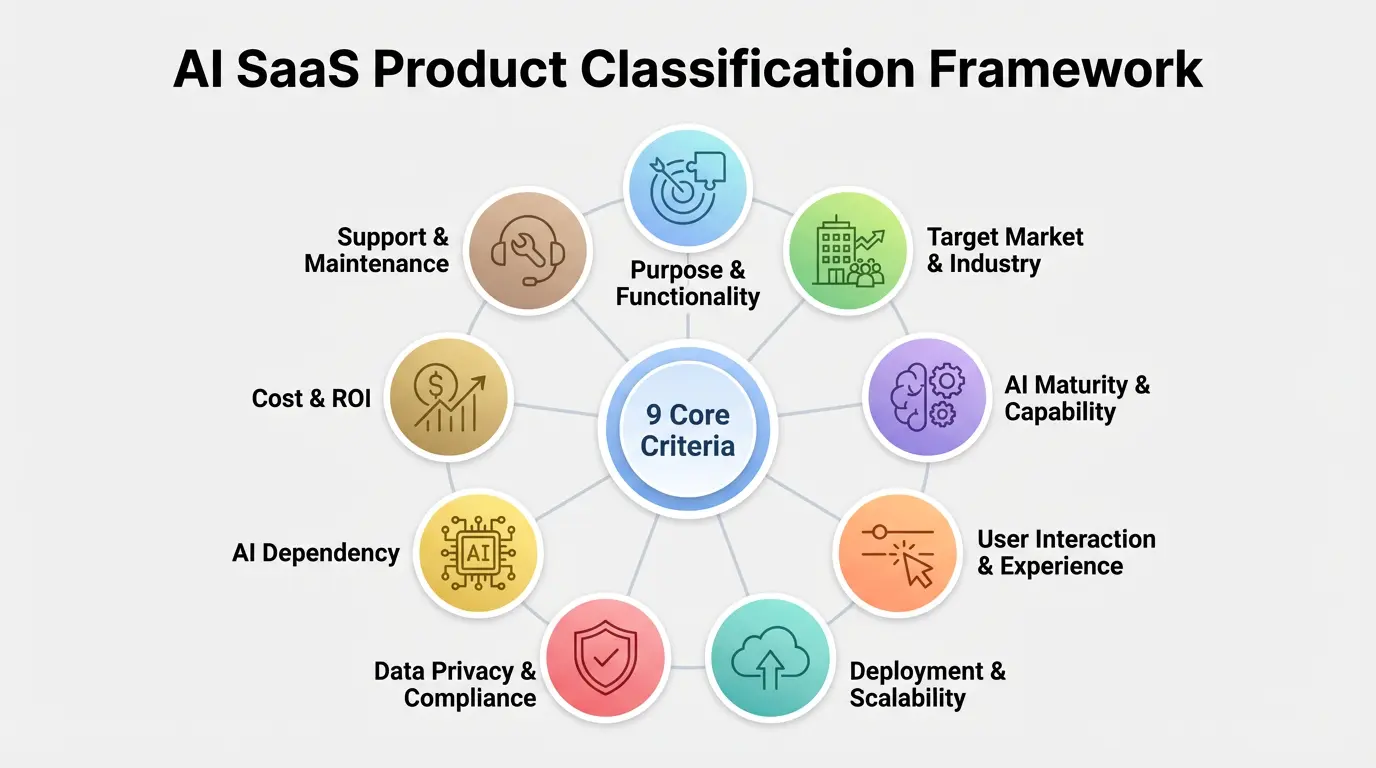

Comprehensive Framework for AI SaaS Classification

Successful classification involves examining products through seven connected lenses, each containing specific evaluation metrics. This approach improves on basic vendor assessments by including technical architecture reviews, operational impact analysis, and strategic fit examinations.

1. Core Functional Purpose and Capability Spectrum

The fundamental classification layer assesses the solution’s primary functions and technological approach. This evaluation must separate marketing claims from actual AI implementation depth.

Capability Analysis Methodology:

- Determine primary AI function (automation, prediction, content creation, etc.)

- Identify secondary supporting features

- Evaluate how deeply AI is embedded (surface-level enhancement vs core component)

- Assess machine learning model specialization (broad-use vs industry-specific)

Consider two Zendesk AI tools: one uses natural language processing for ticket routing (simple automation), while another employs deep learning for sales pattern recognition (advanced prediction). This functional difference directly affects setup complexity, integration needs, and projected outcomes.
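
The capability analysis above can be recorded in a small structure so that every product is described by the same fields. The following is a minimal Python sketch; the class name `CapabilityProfile`, the two-level enums, and the sample products are hypothetical illustrations, not a standard schema.

```python
from dataclasses import dataclass, field
from enum import Enum


class EmbeddingDepth(Enum):
    """How deeply AI is embedded in the product (assumed two-level scale)."""
    SURFACE_ENHANCEMENT = "surface-level enhancement"
    CORE_COMPONENT = "core component"


class Specialization(Enum):
    """Model specialization (assumed two-level scale)."""
    BROAD_USE = "broad-use"
    INDUSTRY_SPECIFIC = "industry-specific"


@dataclass
class CapabilityProfile:
    product: str
    primary_function: str  # e.g. "automation", "prediction", "content creation"
    embedding_depth: EmbeddingDepth
    specialization: Specialization
    secondary_features: list[str] = field(default_factory=list)

    def is_ai_core(self) -> bool:
        """True when AI is a core component rather than an add-on."""
        return self.embedding_depth is EmbeddingDepth.CORE_COMPONENT


# Hypothetical products, for illustration only.
router = CapabilityProfile(
    product="Ticket Router",
    primary_function="automation",
    embedding_depth=EmbeddingDepth.SURFACE_ENHANCEMENT,
    specialization=Specialization.BROAD_USE,
    secondary_features=["language detection"],
)
forecaster = CapabilityProfile(
    product="Sales Forecaster",
    primary_function="prediction",
    embedding_depth=EmbeddingDepth.CORE_COMPONENT,
    specialization=Specialization.INDUSTRY_SPECIFIC,
)

for profile in (router, forecaster):
    print(profile.product, profile.primary_function, profile.is_ai_core())
```

Filling in the same fields for every vendor makes it harder for marketing language to stand in for a missing answer.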

2. Technical Architecture and Deployment Models

This infrastructure-focused classification layer examines deployment options and their operational consequences:

| Deployment Model | Best-fit Use Cases | Technical Requirements | Compliance Implications |
|---|---|---|---|
| Public Cloud | Startups, non-regulated industries | Low IT overhead | Limited control over data jurisdiction |
| Private Cloud | Mid-market enterprises | Moderate customization capabilities | Industry-specific certifications possible |
| Hybrid Cloud | Complex compliance environments | API gateway management | Mixed compliance jurisdiction management |
| Edge Computing | Real-time manufacturing/IoT | 5G infrastructure | Data sovereignty assurance |

Platforms like Salesforce Einstein now offer hybrid configurations that keep core AI processing in central clouds while enabling real-time decisions at network edges – creating classification challenges that demand sophisticated evaluation frameworks.
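
For teams that keep their evaluation criteria in code, the deployment table can be stored as a lookup. This is a rough sketch: the dictionary copies the table's wording, while the key names and the helper `models_for_use_case` are assumptions made for illustration.

```python
# Deployment attributes transcribed from the table above.
DEPLOYMENT_MODELS = {
    "Public Cloud": {
        "use_cases": "Startups, non-regulated industries",
        "technical": "Low IT overhead",
        "compliance": "Limited control over data jurisdiction",
    },
    "Private Cloud": {
        "use_cases": "Mid-market enterprises",
        "technical": "Moderate customization capabilities",
        "compliance": "Industry-specific certifications possible",
    },
    "Hybrid Cloud": {
        "use_cases": "Complex compliance environments",
        "technical": "API gateway management",
        "compliance": "Mixed compliance jurisdiction management",
    },
    "Edge Computing": {
        "use_cases": "Real-time manufacturing/IoT",
        "technical": "5G infrastructure",
        "compliance": "Data sovereignty assurance",
    },
}


def models_for_use_case(keyword: str) -> list[str]:
    """Return models whose best-fit use cases mention the keyword (case-insensitive)."""
    needle = keyword.lower()
    return [
        name
        for name, attrs in DEPLOYMENT_MODELS.items()
        if needle in attrs["use_cases"].lower()
    ]


print(models_for_use_case("IoT"))
```

A keyword match is a crude first pass. A product offered in several configurations would need one entry per deployment option.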

3. AI Maturity and Technological Sophistication

Classifying AI maturity involves assessing four crucial dimensions:

- Data Processing Depth: Basic analytics vs real-time stream processing

- Model Complexity: Regression models vs transformer architectures

- Learning Capacity: Static models vs continuous learning systems

- Adaptation Scope: Narrow task optimization vs broad contextual understanding

The market breaks into three clear tiers based on these measurements:

| Maturity Tier | Technical Indicators | Example Products | Average Annual Contract Value |
|---|---|---|---|
| Basic Automation | Rule-based systems, simple ML | Zapier, basic chatbots | $5,000-$20,000 |
| Advanced Prediction | Deep learning, RNNs | Gong, HubSpot AI | $50,000-$200,000 |
| Autonomous Operation | Reinforcement learning, agentic AI | Moveworks, C3.ai | $250,000-$2M+ |

Correct classification here directly affects implementation timelines, with basic tools averaging 6-week setups versus 9+ months for autonomous systems needing custom configuration.
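
One way to apply the tiers is to map each technical indicator observed in a product to a tier and take the highest one. The sketch below assumes that "highest indicator wins" rule, which the framework does not prescribe. The indicator strings are shortened from the table, and the function name `classify_maturity` is hypothetical.

```python
from enum import IntEnum


class MaturityTier(IntEnum):
    BASIC_AUTOMATION = 1
    ADVANCED_PREDICTION = 2
    AUTONOMOUS_OPERATION = 3


# Technical indicators per tier, shortened from the maturity table.
INDICATOR_TIERS = {
    "rule-based": MaturityTier.BASIC_AUTOMATION,
    "simple ml": MaturityTier.BASIC_AUTOMATION,
    "deep learning": MaturityTier.ADVANCED_PREDICTION,
    "rnn": MaturityTier.ADVANCED_PREDICTION,
    "reinforcement learning": MaturityTier.AUTONOMOUS_OPERATION,
    "agentic ai": MaturityTier.AUTONOMOUS_OPERATION,
}


def classify_maturity(indicators: list[str]) -> MaturityTier:
    """Return the highest tier among recognized indicators (assumed rule)."""
    tiers = [
        INDICATOR_TIERS[name.lower()]
        for name in indicators
        if name.lower() in INDICATOR_TIERS
    ]
    if not tiers:
        raise ValueError("no recognized technical indicators")
    return max(tiers)


print(classify_maturity(["Rule-based", "Deep learning"]).name)
```

Unrecognized indicators are ignored rather than guessed at, and a product with no recognized indicators raises an error instead of defaulting to the lowest tier.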

4. Industry Specialization and Compliance Alignment

Sector-specific classification has grown more critical as regulations diverge across industries. Key differentiators include:

- Healthcare: HIPAA compliance, patient data handling certification, diagnostic validation

- Financial Services: Regulatory compliance, transparency thresholds, audit requirements

- Manufacturing: Industrial IoT integration, real-time processing speeds

- Retail: Personalization accuracy, demand forecasting models

The 2025 Omnibus AI Regulation Act introduced mandatory classification standards for medical AI tools, requiring specific validation processes for diagnostic versus administrative solutions. Similar legislation is developing in finance, making industry-specific classification knowledge essential for compliance.

5. Economic Model and Value Realization Framework

Modern classification systems must analyze pricing structures alongside value delivery methods:

| Pricing Model | Value Alignment | Risk Profile | Best-fit Organization Size |
|---|---|---|---|
| Per-user subscription | Low alignment | Buyer risk | Under 100 employees |
| Consumption-based | Medium alignment | Shared risk | 100-1,000 employees |
| Value-based pricing | High alignment | Vendor risk | 1,000+ employees |
| ROE-sharing model | Complete alignment | Partnership risk | Strategic enterprise partners |

Vendors like ServiceNow now include performance-based pricing adjustments in contracts – creating classification challenges at the intersection of technical capability and commercial innovation.
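
The size bands in the pricing table translate into a simple lookup. In this sketch the function name `best_fit_pricing` is hypothetical. Because the table lists 1,000 employees in two bands, the code assumes an organization of exactly 1,000 falls in the consumption-based band. The sharing model in the table's last row is left out because it is tied to partnership status, not headcount.

```python
def best_fit_pricing(employees: int) -> str:
    """Map organization size to the best-fit pricing model from the table.

    Assumption: exactly 1,000 employees is treated as consumption-based,
    since the table's bands overlap at that value.
    """
    if employees < 0:
        raise ValueError("employee count cannot be negative")
    if employees < 100:
        return "Per-user subscription"
    if employees <= 1000:
        return "Consumption-based"
    return "Value-based pricing"


for size in (40, 350, 12_000):
    print(size, best_fit_pricing(size))
```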

6. Implementation Complexity and Integration Ecosystem

This classification aspect evaluates:

- API maturity and documentation quality

- Pre-built connector availability

- Customization depth requirements

- Data pipeline complexity

Recent studies show advanced AI systems require nearly 12 times more integration resources than basic automation tools. Proper classification prevents deployment failures by matching products with organizational IT capabilities.

7. Ethical Framework and Governance Model

With most companies expressing AI ethics concerns, classification must assess:

- Bias Mitigation: Training data diversity reports

- Explainability: Model decision transparency

- Governance: Audit trail completeness

- Compliance: Regulatory certification status

Tools like IBM’s AI FactSheets offer standardized documentation for comparing vendor ethics approaches, enabling smarter classification decisions.

Advanced Classification Methodologies

Progressive organizations extend the basic criteria with predictive models that anticipate how a product's capabilities will evolve:

Continuous Learning Capacity Assessment

Sophisticated classification evaluates learning architectures:

- Static Models: Fixed-capacity systems

- Periodic Retraining: Scheduled updates

- Online Learning: Continuous improvements

- Reinforcement Learning: Automated optimization

Platforms like Moveworks showcase cutting-edge continuous learning where conversational AI boosts resolution rates by 3-5% monthly without human input.

Multimodal Processing Capability Mapping

Advanced frameworks assess support for:

- Unstructured text analysis

- Image/video interpretation

- Sensor data processing

- Cross-modal insights

This spectrum separates single-purpose tools from solutions such as Salesforce Einstein that support multiple data types.

Composable Architecture Scoring

Leading businesses now evaluate:

- Microservices decomposition level

- API-first design maturity

- Component interoperability

- Low-code customization potential

ServiceNow’s latest offerings exemplify composable architecture, letting customers build custom solutions from modular AI parts.

Implementation Framework for Enterprise Adoption

Moving from classification theory to practice requires a structured methodology:

Step 1: Requirement Gathering and Capability Mapping

- Document 3-year business goals

- Connect operational processes to AI capabilities

- Define technical constraints

- Establish compliance thresholds

Financial institutions like JPMorgan use AI maturity models to systematically identify gaps between operations and objectives.

Step 2: Market Scanning and Preliminary Classification

Apply multidimensional filters:

- Functional capability matrix

- Technical compatibility scoring

- Compliance alignment verification

- Economic model assessment
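
The four filters above can be applied as a chain of pass/fail checks over a candidate list. This is an illustrative sketch only: the `Candidate` fields, the 0-1 scoring scales, the thresholds, and the vendor names are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Candidate:
    name: str
    functional_fit: float   # 0-1: share of required capabilities covered
    technical_score: float  # 0-1: compatibility with the existing stack
    compliant: bool         # compliance alignment verified
    pricing_model: str


Filter = Callable[[Candidate], bool]

# One predicate per filter in the list above; thresholds are hypothetical.
FILTERS: list[Filter] = [
    lambda c: c.functional_fit >= 0.7,
    lambda c: c.technical_score >= 0.6,
    lambda c: c.compliant,
    lambda c: c.pricing_model in {"Consumption-based", "Value-based pricing"},
]


def shortlist(candidates: list[Candidate], filters: list[Filter]) -> list[Candidate]:
    """Keep only the candidates that pass every filter."""
    return [c for c in candidates if all(f(c) for f in filters)]


candidates = [
    Candidate("Vendor A", 0.90, 0.80, True, "Consumption-based"),
    Candidate("Vendor B", 0.95, 0.90, False, "Value-based pricing"),
    Candidate("Vendor C", 0.60, 0.90, True, "Per-user subscription"),
]
selected = shortlist(candidates, FILTERS)
print([c.name for c in selected])
```

Hard filters suit preliminary classification because they shrink the field cheaply. Weighted trade-offs between the survivors are better left to the proof-of-value stage.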

Step 3: Proof-of-Value Implementation

Structured validation:

- Define success metrics

- Establish a controlled testing environment

- Compare vendors concurrently

- Validate ROI projections

Microsoft’s validation framework provides templates for testing multiple vendors simultaneously.

Step 4: Full Implementation Roadmapping

Final planning:

- Phase deployment by complexity

- Create cross-functional teams

- Implement optimization processes

- Develop enhancement roadmap

Future Evolution of Classification Frameworks

Emerging technologies will reshape AI SaaS classification:

| Technology Trend | Classification Impact | Timeline |
|---|---|---|
| Quantum Machine Learning | New performance benchmarks | 2027-2029 |
| Neuro-symbolic AI | Reasoning capability metrics | 2026-2028 |
| Edge AI Processors | Latency classification tiers | 2025-2027 |
| Federated Learning | Distributed intelligence scoring | 2026-2028 |

The EU AI Act, adopted in 2024 with obligations phasing in from 2025 to 2027, will mandate new classifications for high-risk applications, including:

- Third-party auditing certification

- Real-time bias monitoring

- Adversarial testing documentation

Case Studies: Classification Framework Applications

Healthcare: PathAI Diagnostic Platform Selection

Challenge: Hospital network evaluating 14 AI pathology solutions

Approach: Custom classification matrix with weighted criteria:

- Diagnostic accuracy (40% weight)

- HIPAA compliance (25%)

- EHR integration (15%)

- Continuous learning (10%)

- Cost structure (10%)

Outcome: The evaluation finished 68% faster and identified a solution with 12% higher accuracy.
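
A weighted matrix like this one reduces to a weighted sum per vendor. The sketch below uses the weights listed in the case study. The 0-10 rating scale, the function name `weighted_score`, and both vendors' ratings are made-up assumptions for illustration, not data from the case study.

```python
# Criterion weights from the case study's classification matrix.
WEIGHTS = {
    "diagnostic_accuracy": 0.40,
    "hipaa_compliance": 0.25,
    "ehr_integration": 0.15,
    "continuous_learning": 0.10,
    "cost_structure": 0.10,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (assumed 0-10 scale) into one score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    for criterion in WEIGHTS:
        if not 0 <= ratings[criterion] <= 10:
            raise ValueError(f"rating out of range for {criterion}")
    return sum(WEIGHTS[c] * ratings[c] for c in WEIGHTS)


# Hypothetical ratings for two candidate solutions.
vendor_a = {
    "diagnostic_accuracy": 9,
    "hipaa_compliance": 8,
    "ehr_integration": 6,
    "continuous_learning": 5,
    "cost_structure": 7,
}
vendor_b = {
    "diagnostic_accuracy": 7,
    "hipaa_compliance": 10,
    "ehr_integration": 8,
    "continuous_learning": 6,
    "cost_structure": 9,
}

for name, ratings in (("Vendor A", vendor_a), ("Vendor B", vendor_b)):
    print(name, round(weighted_score(ratings), 2))
```

With these made-up ratings the second vendor scores slightly higher overall despite weaker accuracy. That is the kind of trade-off the weights should be checked against before the evaluation starts.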

Financial Services: Global Bank AI Compliance Suite

Challenge: Balance regulatory requirements with fraud detection across 38 jurisdictions

Classification Framework:

- Regulatory coverage mapping

- Explainability thresholds

- Real-time metrics

- Audit capabilities

Result: 41% fewer false positives and $17M in annual compliance savings.

Retail: Omnichannel Personalization at Scale

Challenge: Select an AI solution that balances real-time personalization with privacy requirements

Key Criteria:

- Sub-100ms latency

- CCPA/GDPR compliance

- Unified customer profiles

- Predictive accuracy

Outcome: 9.3% higher conversions with zero privacy violations.

Frequently Asked Questions (FAQs)

What distinguishes AI-native SaaS products from AI-augmented solutions?

AI-native SaaS products fundamentally rely on artificial intelligence as their core technological foundation and value proposition. The AI capabilities aren’t merely features but rather the central architecture enabling the product’s primary functionality. Examples include OpenAI’s ChatGPT and C3.ai’s enterprise AI platform, where machine learning models form the product’s essential infrastructure rather than supplemental features.

In contrast, AI-augmented solutions represent traditional software products that have incorporated select AI capabilities to enhance existing functionality. Salesforce CRM illustrates this approach – while it leverages Einstein AI for predictive analytics and automation, the core CRM functionality could operate without these AI components. The distinction proves crucial for implementation complexity, with AI-native products typically requiring specialized data science resources versus AI-augmented tools maintaining more conventional implementation pathways.

How do classification requirements differ between B2B and B2C AI SaaS products?

B2B AI SaaS classification prioritizes integration capabilities, enterprise security protocols, and compliance documentation. Products serving business customers must demonstrate SOC 2 Type II compliance, detailed API documentation, and enterprise-grade SLA commitments. The classification process emphasizes scalability, administrative controls, and audit trail completeness to meet corporate procurement standards.

B2C solutions focus classification criteria on user experience metrics, accessibility compliance, and ethical AI considerations. Evaluation emphasizes algorithmic bias testing, intuitive user interfaces, and subscription flexibility. Unlike B2B’s emphasis on technical integration, consumer product classification heavily weights engagement metrics and personalization effectiveness.

What methodology proves most effective for evaluating AI compliance across jurisdictions?

The Global AI Compliance Matrix (GACM) has emerged as the industry-standard methodology, evaluating solutions against 187 regulatory requirements across 43 jurisdictions. This weighted scoring system assesses:

- Data sovereignty compliance (30%)

- Algorithmic transparency (25%)

- Bias mitigation documentation (20%)

- Audit trail completeness (15%)

- Adversarial testing protocols (10%)

Leading enterprises supplement GACM with dynamic monitoring systems like TrustArc’s AI Governance Platform, which provides real-time updates on regulatory changes affecting product classification. This combination creates a living compliance assessment that adapts as global AI regulations evolve.

How should organizations approach ROI projection for different AI SaaS categories?

ROI analysis requires distinct methodologies per product category:

| Product Category | Primary ROI Drivers | Measurement Timeline | Benchmark Metrics |
|---|---|---|---|
| Automation Tools | FTE reduction, error reduction | 3-6 months | Process cycle time |
| Predictive Analytics | Improved decision quality | 6-12 months | Forecast accuracy |
| Generative AI | Content production speed | 1-3 months | Output quality scores |
| Autonomous Systems | Continuous optimization | 12-18 months | Self-improvement rates |

Leading organizations employ value realization frameworks that combine quantitative metrics with qualitative capability assessments, recognizing that AI’s transformative potential often exceeds traditional ROI calculations.
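
For the quantitative part, a first-pass projection can apply the standard ROI formula, (benefit - cost) / cost, over the measurement window for each category. The sketch below takes the windows from the table and, as an assumption, evaluates at the upper bound of each window. The function names and the cost figures in the example are hypothetical.

```python
# Measurement windows in months (lower, upper), taken from the ROI table.
MEASUREMENT_WINDOW_MONTHS = {
    "Automation Tools": (3, 6),
    "Predictive Analytics": (6, 12),
    "Generative AI": (1, 3),
    "Autonomous Systems": (12, 18),
}


def simple_roi(total_benefit: float, total_cost: float) -> float:
    """Standard ROI: net gain divided by cost."""
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    return (total_benefit - total_cost) / total_cost


def projected_roi(
    category: str,
    monthly_benefit: float,
    monthly_cost: float,
    upfront_cost: float = 0.0,
) -> float:
    """Project ROI at the end of the category's measurement window.

    Assumption: benefits and costs accrue evenly per month and are
    evaluated at the upper bound of the window.
    """
    _, months = MEASUREMENT_WINDOW_MONTHS[category]
    benefit = monthly_benefit * months
    cost = upfront_cost + monthly_cost * months
    return simple_roi(benefit, cost)


# Hypothetical figures: $10k monthly benefit, $2k monthly fee, $18k setup.
roi = projected_roi("Automation Tools", 10_000, 2_000, upfront_cost=18_000)
print(f"{roi:.0%}")
```

A linear projection like this ignores ramp-up time and the qualitative benefits mentioned above, so it works better as a sanity check than as a forecast.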

What emerging standards will impact AI SaaS classification in 2025-2030?

Three key standardization initiatives will reshape classification practices:

- ISO/IEC 42001 Certification: Global AI management system standard requiring documentation of ethical AI development practices, risk management protocols, and governance structures for certification (published in December 2023)

- EU AI Act Classification Mandates: Four-tiered risk classification system (minimal, limited, high, unacceptable) with corresponding technical documentation and compliance requirements for market access in European Union countries (phased implementation 2025-2027)

- NIST AI Risk Management Framework: U.S. government guidelines for trustworthy AI systems, including assessment guidance for accuracy, security, and explainability (a voluntary framework; version 1.0 was released in January 2023)

Additionally, IEEE’s P3119 working group is developing a standard for the procurement of AI and automated decision systems, which is intended to make vendor comparisons more consistent.
