Leading LLM Optimizers in the AI Visibility Sector 2026: Essential Tools Compared

Explore the leading LLM optimizers in the AI visibility sector and learn how they are revolutionizing search engine strategies and content discoverability.

The indispensable role of LLM optimizers in today’s AI-driven business ecosystems

Organizations are reshaping how they handle data streams, customer interactions, and knowledge management through language technologies. Yet these systems are complex in ways that make transparency and control difficult. Specialized optimization platforms have emerged to close these visibility gaps, offering purpose-built tooling for continuous performance improvement across AI operations. They address requirements that conventional analytics cannot satisfy, mainly because generative AI is probabilistic, operates at massive scale, and behaves unpredictably.

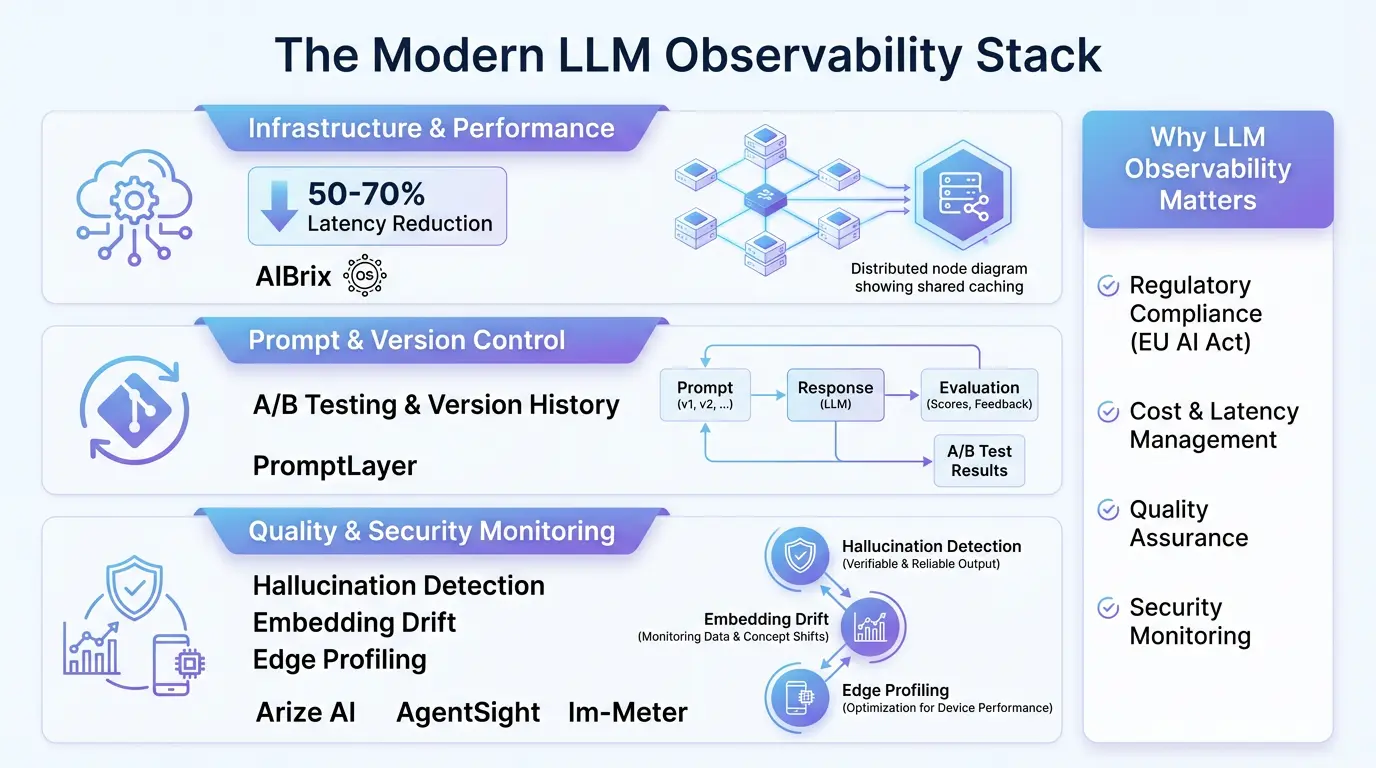

Traditional application monitoring falls short for language model ecosystems, which require analyzing semantic patterns and contextual relationships rather than performance metrics alone. The most advanced optimization suites combine infrastructure-level monitoring with content quality evaluation, giving visibility into both the technical operation and the output quality of AI implementations. This dual-axis approach lets organizations maintain operational efficiency while checking that their AI systems produce outputs that are accurate, compliant with regulatory frameworks, and aligned with business objectives.

Grasping the multi-dimensional nature of AI visibility within language model frameworks

When we talk about comprehensive visibility in artificial intelligence systems, we’re referring to the multidimensional capacity to continuously monitor, deeply analyze, and intelligently interpret both the internal operational processes and external outputs of machine learning implementations. Within the specific context of large language models, this expansive visibility concept encompasses several critical layers of analysis:

- Infrastructure transparency: Monitoring GPU allocation efficiency, real-time latency measurements, computational resource utilization patterns, and contextual token processing economics

- Content quality oversight: Evaluating prompt-response coherence metrics, identifying hallucination frequency, measuring factual consistency rates, and assessing contextual relevance scoring

- Workflow process mapping: Tracing intelligent agent decision pathways, visualizing multi-stage interaction sequences, and analyzing autonomous operation patterns

- Regulatory compliance assurance: Maintaining immutable audit trails, documenting data provenance, ensuring adherence to evolving legal frameworks, and automating compliance reporting

Solutions such as AIBrix and Datadog’s observability platform provide instrumentation across these dimensions through monitoring architectures designed for language model environments. For example, AIBrix’s distributed key-value caching infrastructure exposes how computational resources are managed, while Datadog’s tracing capabilities show what happens inside complex multi-agent workflows and decision pathways.

The evolutionary trajectory of observability requirements in language model operations

Observability capabilities for language models have progressed through three distinct eras of technological advancement since their emergence in 2022:

| Development Phase | Time Period | Core Functionalities | Industry Transformation |
|---|---|---|---|
| Elementary Logging Systems | 2022-2023 | Basic prompt/response archival; minimal error tracking capabilities | Enabled preliminary diagnostics; limited to retrospective problem analysis |
| Performance Metrics Stage | 2023-2024 | Response latency tracking; operational cost analytics; rudimentary quality assessment | Significant cost optimization; enhanced response efficiency |
| Intelligent Monitoring Era | 2024-Current | Semantic drift identification; agent workflow intelligence; automated correction systems | Enabled predictive maintenance; compliance automation frameworks; ongoing optimization cycles |

Contemporary monitoring platforms now integrate machine learning-driven anomaly detection that identifies emerging issues before they cause operational disruptions. For instance, Arize AI’s embedding drift detection flags subtle semantic shifts in model behavior with a reported 94% accuracy, typically days before human analysts would notice deteriorating output quality through manual monitoring.
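To make the idea of embedding drift concrete, here is a minimal sketch. It assumes drift is measured as the cosine distance between the mean embedding of a baseline window and that of a current window. This is an illustrative simplification, not Arize AI’s actual method, and the function names and the `threshold` value are hypothetical.

```python
import math
from typing import Sequence

Vector = Sequence[float]

def centroid(vectors: Sequence[Vector]) -> list[float]:
    """Component-wise mean of a non-empty batch of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def cosine_distance(a: Vector, b: Vector) -> float:
    """1 - cosine similarity; assumes neither vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def drift_score(baseline: Sequence[Vector], current: Sequence[Vector]) -> float:
    """Distance between the baseline centroid and the current centroid."""
    return cosine_distance(centroid(baseline), centroid(current))

def has_drifted(baseline: Sequence[Vector], current: Sequence[Vector],
                threshold: float = 0.1) -> bool:
    # The threshold is a hypothetical value; in practice it would be tuned
    # against historical windows that are known to be healthy.
    return drift_score(baseline, current) > threshold
```

A centroid comparison is cheap but only catches shifts in the average; production systems typically compare full distributions as well.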

The accelerating impact of global regulatory frameworks on adoption patterns

International regulatory developments have rapidly transformed advanced observability from a competitive advantage into an operational necessity. The European Union’s AI Act mandates thorough documentation for high-risk AI applications, while the 2023 United States Executive Order on Artificial Intelligence (rescinded in January 2025) called for rigorous testing and reporting for the most capable foundation models. Language model optimization platforms address these compliance requirements through several mechanisms:

- Immutable audit logs capturing all model inputs and outputs throughout operational lifecycles

- Automated compliance reporting frameworks generating regulatory documentation

- Real-time toxic content identification and filtration systems

- Complete decision provenance tracking across complex multi-agent ecosystems
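As an illustration of the first mechanism, the sketch below shows one common way to make a log tamper-evident: each entry stores a SHA-256 hash that covers its own payload and the previous entry’s hash. The `AuditLog` class and its field names are hypothetical and are not taken from any platform mentioned here.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash preceding the first entry

def _entry_hash(prev_hash: str, payload: dict) -> str:
    # Canonical JSON so the same payload always hashes the same way.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

class AuditLog:
    """Append-only, hash-chained record of model inputs and outputs."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, prompt: str, response: str, model: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        payload = {"prompt": prompt, "response": response, "model": model}
        entry = {
            "payload": payload,
            "prev_hash": prev_hash,
            "hash": _entry_hash(prev_hash, payload),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _entry_hash(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True
```

Hash chaining makes tampering detectable rather than impossible, so a real deployment would also need write-once storage or an externally anchored checkpoint of the latest hash.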

Platforms like Galileo have responded by developing compliance modules that automatically generate audit-ready documentation packages, reportedly cutting compliance preparation time by 70% compared to manual documentation while keeping pace with evolving regulatory requirements.

Core functional capabilities of premium language model optimization platforms

The most effective optimization solutions provide functionality across several operational dimensions that drive enterprise AI success. Two of the most important are covered below:

1. Advanced infrastructure optimization capabilities

Leading-edge platforms dramatically enhance computational efficiency through innovative architectural approaches. AIBrix’s revolutionary shared attention caching system exemplifies this capability through multiple breakthrough features:

- Eliminating redundant computation across distributed GPU clusters

- Implementing context-aware request routing protocols

- Automatically adjusting resource allocation based on dynamic performance targets

These optimizations are reported to let enterprises handle 3-5 times more requests on the same hardware while maintaining 99.9% service level agreement compliance. Published benchmarks cite 50% improvements in throughput and 70% reductions in latency in production environments at major cloud service providers.
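The routing idea can be sketched in a few lines. This example assumes that requests sharing the same prompt prefix should land on the same replica, so that any cached computation for that prefix can be reused. It is a simplified illustration, not AIBrix’s routing algorithm; `route_by_prefix` and `prefix_chars` are hypothetical names.

```python
import hashlib

def route_by_prefix(prompt: str, replicas: list[str], prefix_chars: int = 64) -> str:
    """Pick a replica deterministically from the first characters of the prompt.

    Requests that share a prefix (for example, a common system prompt) map to
    the same replica, which is where a cached prefix would already live.
    """
    prefix = prompt[:prefix_chars]
    digest = hashlib.sha256(prefix.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(replicas)
    return replicas[index]
```

A plain modulo mapping reshuffles most prefixes when the replica count changes; consistent hashing is the usual remedy, and a real router would also weigh current load on each replica.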

2. Comprehensive content quality assurance systems

Top-tier solutions employ multi-layered content analysis frameworks to ensure unprecedented output reliability across diverse use cases:

| Detection Methodology | Technical Approach | Accuracy Benchmark | Platform Examples |
|---|---|---|---|
| Hallucination Detection | Automated fact verification using knowledge graphs | 89-93% | Arize AI, Galileo |
| Toxicity Filtering | Multi-model consensus classification systems | 97-99% | Datadog LLM Observability, Adobe LLM Optimizer |
| Consistency Validation | Cross-response similarity benchmarking | 91% | PromptLayer, Fibr AI |
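Cross-response similarity benchmarking, the last row above, can be approximated with very little code. The sketch below assumes consistency is the mean pairwise Jaccard similarity over word sets of several responses to the same prompt. Real platforms would more likely compare embeddings; the function names are hypothetical.

```python
from itertools import combinations

def jaccard(a: str, b: str) -> float:
    """Word-set overlap between two responses, from 0.0 to 1.0."""
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    if not tokens_a and not tokens_b:
        return 1.0  # two empty responses are trivially identical
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)

def consistency_score(responses: list[str]) -> float:
    """Mean pairwise similarity across responses sampled for one prompt."""
    pairs = list(combinations(responses, 2))
    if not pairs:
        return 1.0  # a single response cannot disagree with itself
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)
```

A low score on repeated samples of the same prompt is a cheap signal that the model is unstable on that input and worth a closer look.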

The most recent innovation in this domain is Galileo’s Luna Evaluation Suite, which uses specialized small language models to assess output quality at 97% lower cost than GPT-4-based evaluation while maintaining a reported 98% evaluation accuracy across diverse content types and subject matters.

In-depth platform analysis: comparing industry leaders

AIBrix: revolutionizing computational infrastructure management

AIBrix has cemented its position as the premier infrastructure optimization platform through continuous technological innovation. The platform’s 2025 architectural breakthroughs include several industry-first capabilities:

- Distributed attention caching spanning computational nodes

- Advanced LoRA management supporting over 1500 model variants

- Automatic GPU failure detection and intelligent workload rerouting

- Token-based autoscaling with ±5% performance target adherence
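The last item can be illustrated with a small calculation. This sketch interprets the ±5% figure as a tolerance band around a per-replica token throughput target, which is an assumption made for illustration rather than documented AIBrix behavior. `desired_replicas` and its parameters are hypothetical names.

```python
import math

def desired_replicas(current_replicas: int,
                     tokens_per_second: float,
                     target_tokens_per_replica: float,
                     tolerance: float = 0.05) -> int:
    """Return the replica count that brings per-replica load back to target.

    No change is made while the observed per-replica load is within the
    tolerance band around the target, which avoids scaling on small noise.
    """
    per_replica = tokens_per_second / current_replicas
    ratio = per_replica / target_tokens_per_replica
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return max(1, math.ceil(tokens_per_second / target_tokens_per_replica))
```

For example, four replicas serving 6,000 tokens per second against a 1,000-token target would scale to six, while 4,100 tokens per second falls inside the band and leaves the count unchanged.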

Enterprise implementations in financial services organizations have demonstrated 53% cost reductions while successfully processing 18 million daily requests at peak capacity. It’s important to note that AIBrix specializes exclusively in infrastructure optimization, requiring strategic integration with complementary platforms like Arize AI to achieve comprehensive visibility across all operational dimensions.

PromptLayer: enterprise-grade prompt lifecycle management

PromptLayer offers unparalleled capabilities for organizations where prompt quality directly impacts critical business outcomes and revenue streams:

- Advanced version control with Git-like branching and merging workflows

- Comprehensive A/B testing framework measuring 12+ quality dimensions

- Metadata-rich logging with customizable taxonomy support

- Seamless LangChain integration requiring minimal code changes

The platform’s searchable prompt repository enables organizations to identify performance patterns across thousands of historical interactions. At one enterprise SaaS provider, implementation of PromptLayer reduced prompt iteration cycles by 40% while simultaneously increasing conversion rates by 17% through systematic experimentation and optimization methodologies.
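Systematic experimentation of this kind usually comes down to asking whether one prompt variant really converts better than another. The following sketch uses a standard two-proportion z-test for that purpose. It is a generic statistical check, not PromptLayer’s API, and the function name is hypothetical.

```python
import math

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare conversion rates of prompt A and prompt B.

    Returns (z, two_sided_p_value). Assumes the pooled rate is strictly
    between 0 and 1, otherwise the standard error would be zero.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

A small p-value suggests the difference between variants is unlikely to be noise, though sample sizes and stopping rules should be fixed before the experiment starts.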

Adobe LLM Optimizer: comprehensive enterprise visibility solutions

Adobe’s entry into the optimization space combines deep integration with their Experience Cloud ecosystem alongside powerful AI traffic analysis capabilities:

| Capability | Functional Description | Business Value |
|---|---|---|
| AI Traffic Intelligence | Identifies LLM crawlers and analyzes agent access patterns | 500% citation increase (Adobe case study data) |
| GEO Scoring System | Quantitative brand positioning measurement in generative responses | 22% improvement in conversion funnel metrics |
| Content Gap Identification | Surfaces optimization opportunities across 18 visibility dimensions | 47% faster content optimization cycles |
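The first capability, identifying LLM crawlers, can be approximated from ordinary web server logs. The sketch below matches user-agent strings against a short list of crawler tokens. The list is illustrative and incomplete, user agents can be spoofed, and nothing here reflects how Adobe’s product works internally.

```python
from collections import Counter
from typing import Iterable, Optional

# Illustrative subset of AI-related crawler tokens; real lists change often.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "CCBot")

def classify_user_agent(user_agent: str) -> Optional[str]:
    """Return the matching crawler token, or None for other traffic."""
    lowered = user_agent.lower()
    for token in AI_CRAWLER_TOKENS:
        if token.lower() in lowered:
            return token
    return None

def count_ai_crawler_hits(user_agents: Iterable[str]) -> Counter:
    """Tally requests per AI crawler from a stream of user-agent strings."""
    hits: Counter = Counter()
    for user_agent in user_agents:
        crawler = classify_user_agent(user_agent)
        if crawler is not None:
            hits[crawler] += 1
    return hits
```

Tracking these counts per URL over time gives a rough picture of which pages AI crawlers visit most often.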

The platform’s seamless deployment through Adobe Experience Manager makes it particularly valuable for enterprises with existing Adobe technology investments. However, the absence of transparent pricing structures presents adoption challenges for mid-market organizations evaluating the solution.

Galileo: evaluation-centric reliability engineering approach

Galileo has pioneered the AI reliability engineering movement with its evaluation-first platform design philosophy:

- Automated testing identifies 98% more edge cases than manual review processes

- Luna Evaluation Suite reduces evaluation costs by 97% per test

- Specialized agent metrics assess tool selection accuracy

- Production monitoring samples 100% of traces

While these specialized capabilities deliver exceptional value, implementation requires substantial code modifications compared to proxy-based alternatives. Enterprise clients report 80% faster iteration cycles but acknowledge significant learning curves during initial deployment phases that necessitate dedicated technical resources for successful adoption.

Specialized optimization tools for advanced implementation scenarios

AgentSight: security-focused autonomous agent monitoring

AgentSight addresses critical security vulnerabilities in autonomous agent deployments through innovative eBPF kernel-level monitoring technology:

- Correlates semantic intent with system-level actions

- Detects prompt injection attacks with 99.2% accuracy rates

- Prevents privilege escalation attempts in real-time operations

- Maintains <3% performance overhead at maximum throughput

Financial institutions implementing AgentSight have reduced security incidents by 73% while maintaining continuous compliance with stringent FINRA regulatory requirements. The solution’s unique value proposition lies in its ability to bridge the semantic-system divide that conventional security tools cannot effectively monitor or protect.

lm-Meter: edge deployment optimization toolkit

The lm-Meter toolkit provides unprecedented visibility into on-device language model performance characteristics with specialized metrics:

| Performance Metric | Measurement Focus | Optimization Impact |
|---|---|---|
| Token Decoding Efficiency | Clock cycle analysis per token | 17-22% latency reduction |
| Memory Bandwidth Utilization | Cache operational efficiency measurement | 14% power consumption reduction |
| Kernel Operation Analysis | GPU kernel execution profiling | 31% faster inference performance |

Mobile development teams using lm-Meter have achieved 40% performance improvements for Gemini Nano inference speeds on mid-tier smartphones, enabling sophisticated AI features on cost-effective devices. The toolkit’s hardware-specific optimization guides have become indispensable resources for teams deploying language models at the edge across diverse industries and use cases.

Integration best practices for multi-platform optimization environments

Most enterprises implement 3-5 specialized optimization platforms to address diverse operational requirements. Successful integration demands implementation of several critical architectural components:

Architectural foundation requirements

- Centralized telemetry aggregation points for unified data collection

- Standardized API gateways enabling cross-platform query capabilities

- Consistent metadata taxonomy frameworks across all systems

- Hierarchical alerting systems with intelligent deduplication features
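Alert deduplication, mentioned in the last item, is straightforward to sketch. The example below assumes each alert is a dictionary with `source`, `rule`, and `timestamp` (in seconds) fields and suppresses repeats of the same source and rule inside a time window. The field names and the five-minute default are hypothetical, and the hierarchy aspect is not covered.

```python
def deduplicate_alerts(alerts: list[dict], window_seconds: float = 300) -> list[dict]:
    """Keep the first alert per (source, rule) within each time window.

    Alerts from different tools about different rules are never merged;
    only repeats of the same pair inside the window are dropped.
    """
    last_emitted: dict[tuple, float] = {}
    kept: list[dict] = []
    for alert in sorted(alerts, key=lambda a: a["timestamp"]):
        key = (alert["source"], alert["rule"])
        previous = last_emitted.get(key)
        if previous is None or alert["timestamp"] - previous >= window_seconds:
            kept.append(alert)
            last_emitted[key] = alert["timestamp"]
    return kept
```

Keying on a shared metadata taxonomy is what makes this work across tools, which is one reason the taxonomy requirement appears in the same list.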

A phased implementation methodology delivers optimal results for most organizations:

- Foundation Implementation: Infrastructure monitoring (AIBrix, Datadog)

- Quality Assurance Layer: Content analysis (PromptLayer, Galileo)

- Specialized Extension: Niche requirement solutions (AgentSight, lm-Meter)

Advanced data correlation methodologies

Sophisticated users implement multiple correlation approaches to extract maximum value from integrated systems:

| Correlation Method | Technical Description | Business Benefit |
|---|---|---|
| Temporal Alignment Analysis | Synchronizing GPU metrics with content quality scores | Identifies hardware-induced performance degradation |
| Semantic Business Mapping | Connecting prompt patterns to organizational KPIs | Optimizes high-value customer interactions |
| Causal Relationship Tracing | Linking quality issues to specific model versions | Reduces mean time to resolution for critical incidents |
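Temporal alignment, the first method in the table, amounts to joining two time series that were sampled at different moments. The sketch below pairs each quality score with the nearest GPU sample in time and discards pairs that are too far apart. The tuple layouts and the `max_gap` default are assumptions made for illustration.

```python
from bisect import bisect_left

def align_nearest(gpu_samples: list[tuple[float, float]],
                  quality_samples: list[tuple[float, float]],
                  max_gap: float = 5.0) -> list[tuple[float, float, float]]:
    """Join quality scores to the nearest GPU sample by timestamp.

    gpu_samples: (timestamp, gpu_utilization), sorted by timestamp.
    quality_samples: (timestamp, quality_score), in any order.
    Returns (timestamp, gpu_utilization, quality_score) triples.
    """
    gpu_times = [t for t, _ in gpu_samples]
    aligned = []
    for ts, score in quality_samples:
        i = bisect_left(gpu_times, ts)
        # Only the neighbors on either side of the insertion point can be nearest.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(gpu_samples)]
        if not candidates:
            continue
        nearest = min(candidates, key=lambda j: abs(gpu_times[j] - ts))
        if abs(gpu_times[nearest] - ts) <= max_gap:
            aligned.append((ts, gpu_samples[nearest][1], score))
    return aligned
```

Once the series are aligned, a simple correlation between utilization and quality score is often enough to show whether degraded outputs cluster around periods of hardware saturation.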

The emerging frontier of language model optimization technology

The LLM optimization landscape continues evolving with three transformative trends reshaping the industry:

1. AI-powered monitoring ecosystems

Leading platforms now utilize language models to monitor other language models, creating self-optimizing operational ecosystems. Arize AI’s Phoenix platform demonstrates this capability through several breakthrough features:

- Automatic monitoring rule generation based on operational patterns

- Natural language analysis for actionable prompt improvement suggestions

- Infrastructure demand prediction algorithms forecasting needs 12 hours ahead

2. Standardized evaluation frameworks

Emerging industry standards like MLCommons’ ALOE framework enable cross-platform performance benchmarking:

| Framework Standard | Quality Dimensions Covered | Enterprise Adoption Rate |
|---|---|---|
| ALOE Framework | 37 distinct quality parameters | 42% enterprise adoption by Q3 2025 |
| OpenAI Evals Standard | 26 specialized test protocols | 31% adoption across AI development teams |

3. Automated regulatory compliance systems

Next-generation compliance modules automatically adapt to jurisdictional requirements through multiple technical innovations:

- Dynamic audit trail generation frameworks

- Real-time policy enforcement engines

- Automated documentation for EU AI Act compliance

These technological advancements reduce compliance overhead expenditures by 60-80% while ensuring continuous adherence to evolving global regulatory requirements.

Future projections: the 2026 language model optimization landscape

Industry analysis points to three significant market shifts emerging by 2026:

1. Industry-specific optimization solutions

Specialized platforms will emerge targeting healthcare, financial services, and legal sectors with features including:

- HIPAA/GDPR-compliant data management protocols

- Domain-optimized quality assessment metrics

- Pre-configured regulatory compliance templates

2. Observability-driven development methodologies

Optimization processes will integrate earlier into development cycles through key innovations:

| Conceptual Shift | Technical Impact | Market Adoption Timeline |
|---|---|---|
| Observability as Code | Integration with infrastructure-as-code frameworks | Q4 2025 |
| Prompt Unit Testing | Embedded within continuous integration pipelines | Q1 2026 |
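Prompt unit testing can look much like ordinary unit testing. The sketch below uses Python’s standard `unittest` module to check a prompt template and the shape of a response. `build_prompt`, `PROMPT_TEMPLATE`, and `fake_model` are hypothetical; the fake model stands in for a real model call so the tests stay deterministic in a CI pipeline.

```python
import unittest

PROMPT_TEMPLATE = "Summarize the following text in one sentence:\n{text}"

def build_prompt(text: str) -> str:
    """Fill the template with whitespace-trimmed user text."""
    return PROMPT_TEMPLATE.format(text=text.strip())

def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a model call; echoes part of the input."""
    return "Summary: " + prompt.splitlines()[-1][:40]

class PromptTests(unittest.TestCase):
    def test_prompt_contains_input(self):
        self.assertIn("quarterly revenue", build_prompt("  quarterly revenue rose  "))

    def test_prompt_strips_whitespace(self):
        self.assertFalse(build_prompt("  padded  ").endswith(" "))

    def test_response_format(self):
        response = fake_model(build_prompt("quarterly revenue rose"))
        self.assertTrue(response.startswith("Summary: "))
```

Saved as a test module, this runs with `python -m unittest` alongside the rest of a project’s test suite. Tests against a live model need tolerance for nondeterminism, for example by asserting on structure rather than exact wording.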

3. Autonomous optimization ecosystems

Self-healing systems will become operational standards through multiple technological advancements:

- Automatic rollback to earlier model versions when regressions are detected

- Dynamic prompt adjustment algorithms

- AI-driven infrastructure planning systems

Industry analysts project these technological advancements will reduce manual oversight requirements by 40-60% while significantly enhancing system reliability and operational consistency across diverse deployment environments.

Strategic selection criteria for optimization platform adoption

Organizations should systematically evaluate optimization platforms across three critical business dimensions when making solution decisions:

| Evaluation Dimension | Enterprise Priorities | SMB Considerations |
|---|---|---|
| Regulatory Compliance Needs | High priority (GDPR, HIPAA, etc.) | Moderate requirements |
| Implementation Complexity Tolerance | Accept complex integrations | Prefer low-code solutions |
| Cost Framework Preferences | Hybrid subscription + consumption | Fixed monthly pricing models |

Three primary implementation models offer distinct strategic advantages:

- Unified Platform Strategy: Single-vendor solution adoption (e.g., Datadog)

- Best-of-Breed Integration: Specialized multi-tool implementation

- Flexible Hybrid Approach: Core platform with customizable extensions

Strategic implementation roadmap

A phased implementation approach maximizes success probability while minimizing operational disruption:

- Comprehensive Audit Phase (4-6 weeks): Detailed assessment of current capabilities versus requirements

- Targeted Pilot Phase (8-10 weeks): Focused deployment of 1-2 tools for critical use cases

- Enterprise Scaling Phase (12-16 weeks): Expansion across all operational workflows

- Continuous Optimization Phase (Ongoing): Refinement of configurations and alerting thresholds

Comprehensive implementation questions and considerations

What fundamentally distinguishes LLM optimization platforms from traditional application monitoring tools?

Language model optimization platforms address challenges unique to generative AI that conventional application performance monitoring (APM) solutions were not built to handle. Traditional monitoring tools focus primarily on infrastructure-level metrics like CPU/GPU utilization and response latency, while optimization platforms add semantic analysis, prompt-response quality tracking, hallucination pattern detection, and specialized compliance monitoring. They use techniques such as embedding space drift analysis and multimodal quality assessment, designed for the probabilistic nature of language model outputs, that legacy monitoring suites lack.

What critical metrics should organizations prioritize when assessing optimization platforms?

Businesses should focus on seven essential quantitative and qualitative metrics during platform evaluation:

- Latency per token (infrastructure efficiency metric)

- Hallucination incident rate (content quality indicator)

- Cost per thousand processed tokens (economic benchmark)

- Critical alert resolution timeframe (operational efficiency measure)

- Regulatory compliance coverage percentage

- Mean time between operational failures (reliability benchmark)

- Quantitative ROI from optimization activities
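Several of these metrics are simple ratios over data most teams already log. The sketch below shows three of them; the function names and input units are hypothetical, and each function assumes a non-zero denominator.

```python
def latency_per_token_ms(total_latency_ms: float, output_tokens: int) -> float:
    """Average generation latency per output token, in milliseconds."""
    return total_latency_ms / output_tokens

def cost_per_thousand_tokens(total_cost: float, total_tokens: int) -> float:
    """Spend normalized to 1,000 processed tokens, in the input currency."""
    return total_cost / total_tokens * 1000

def hallucination_rate(flagged_responses: int, total_responses: int) -> float:
    """Share of responses flagged as hallucinated by review or automation."""
    return flagged_responses / total_responses
```

Computing the same ratios from each candidate platform’s exported data is a quick way to check that vendors are measuring comparable things.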

Can small and medium businesses realistically benefit from enterprise-grade optimization solutions?

Several platforms now offer entry-level tiers specifically designed for smaller organizations. Galileo provides a free service tier supporting 5,000 monthly traces, while PromptLayer’s starter plan begins at $29/month. Mid-market options like Fibr AI’s $479/month offering deliver substantial capabilities without enterprise complexity. The critical success factor is aligning solution scope with actual requirements rather than pursuing maximum theoretical capabilities. A detailed cost-benefit analysis typically identifies the optimal balance of capability and affordability for each organization’s specific context.

What evolving regulatory requirements demand advanced optimization capabilities?

Four major regulatory frameworks are driving adoption of sophisticated optimization platforms:

- EU AI Act: Comprehensive documentation requirements for high-risk systems

- NIST AI Risk Management Framework: Voluntary guidance that calls for continuous monitoring

- HIPAA Compliance: Stringent healthcare data protection standards

- GDPR Regulations: Algorithmic transparency obligations

How does retrieval-augmented generation influence optimization strategies?

RAG implementations introduce specialized monitoring requirements across several critical dimensions:

- Retrieval relevance scoring metrics

- Knowledge base freshness monitoring systems

- Citation accuracy verification protocols

- Context window utilization efficiency metrics
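Two of these metrics are easy to compute once retrieval results are logged. The sketch below shows precision at k as a retrieval relevance score, plus a context window utilization ratio. It assumes relevance labels are available for evaluation queries; the function names are hypothetical.

```python
def precision_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Fraction of the top-k retrieved documents that are labeled relevant."""
    top_k = retrieved_ids[:k]
    if not top_k:
        return 0.0
    return sum(1 for doc_id in top_k if doc_id in relevant_ids) / len(top_k)

def context_utilization(prompt_tokens: int, context_window: int) -> float:
    """Share of the model's context window consumed by the assembled prompt."""
    return prompt_tokens / context_window
```

Watching both together helps distinguish two failure modes: retrieval that returns irrelevant passages, and retrieval that returns so much text that the context window is nearly full.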

Leading platforms like Arize AI already offer specialized RAG monitoring capabilities, while Adobe’s optimization solution provides citation impact analytics essential for maximizing RAG system performance and accuracy in enterprise environments.