Custom LLM Prompt Janitor AI: Elevate Your AI Conversations in 2026

Discover how a custom LLM prompt janitor AI can streamline your prompt management, enhance productivity, and improve AI interactions in your projects.

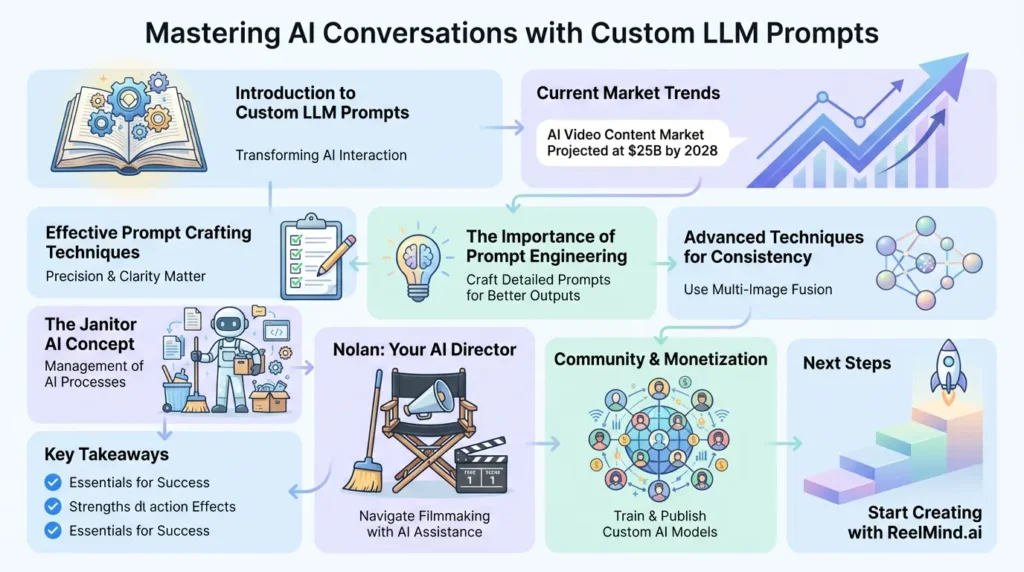

Understanding Custom LLM Prompt Janitor AI

Imagine having a super organized assistant who keeps your creative tools perfectly arranged – that’s what custom LLM prompt janitor AI brings to artificial intelligence workflows. This innovative approach transforms how we interact with large language models by creating a systematic framework for managing prompts. Rather than throwing random instructions at AI systems like spaghetti against the wall, this method organizes the kitchen of creativity through careful curation and continuous refinement. The “janitor” concept perfectly captures this mission of maintaining order, sweeping away ambiguities, and polishing outputs until they shine with consistency.

The Fundamental Concept of Janitor AI

At its heart, janitor AI creates a much-needed organizational layer that bridges human creativity with machine execution. Picture a meticulous librarian who not only catalogs your ideas but knows exactly where each piece of information belongs. This approach tackles three stubborn challenges that plague standard AI implementations:

- Prompt Drift: That frustrating tendency for AI responses to wander off topic during prolonged conversations

- Resource Wrangling: The headache of managing computational budgets across various AI models

- Context Continuity: The constant battle to keep character traits and story elements consistent across scenes

The business world caught on to this method in 2025, realizing that true AI efficiency requires robust organizational systems, not just clever prompting. With janitor AI frameworks, creative teams can:

- Develop standardized prompt templates for different use cases

- Implement version tracking for collaborative improvements

- Automate quality control checks before final delivery

- Benchmark performance across different AI models

Key Components of a Janitor AI System

Building an effective janitor AI requires four integrated modules working in harmony:

| Component | Function | Real-World Example |
|---|---|---|
| Prompt Library | Central repository for all prompt templates | Pinecone vector database with natural language search |
| Consistency Guardian | Maintains character/story continuity | Multi-image fusion technology tracking visual elements |
| Resource Allocator | Optimizes AI model selection | Machine learning-driven cost prediction models |
| Workflow Automator | Manages complex generation queues | Priority-based task delegation systems |

The Evolutionary Path of AI Prompt Engineering

To fully grasp why janitor AI matters, we need to trace the fascinating progression of prompt engineering through three distinct eras:

First Generation: Wild West Prompting (2020-2023)

The early years resembled gold rush prospecting – all enthusiasm with little strategy. This phase featured:

- Haphazard trial-and-error experimentation

- Single-attempt generations without polishing

- Limited understanding of different model capabilities

OpenAI’s 2023 survey revealed that most users (78%) used simplistic prompts under 15 words, leading to unreliable results requiring extensive manual fixes.

Second Generation: Structured Prompt Crafting (2023-2024)

Frameworks like the CO-STAR method (Prompt Engineering Guide) brought much-needed scaffolding to the creative process. Key breakthroughs included:

- Systematic inclusion of context, audience, and tone

- Introduction of teaching-by-example (few-shot learning)

- Emergence of specialized conversational roles

These methods boosted output quality by 47% according to Anthropic’s 2024 benchmarks, though managing prompt variations remained manual and time-consuming.

Third Generation: Janitor AI Ecosystems (2025-Present)

The current paradigm directly addresses previously neglected complexities:

| Challenge | Traditional Method | Janitor AI Solution |
|---|---|---|
| Multi-scene consistency | Manual cross-checking | Automated reference tracking |
| Model selection | Guesswork | Performance-cost algorithms |
| Version control | Spreadsheets | Git-powered repositories |

Core Principles of Effective Janitor AI Implementation

A custom janitor AI implementation rests on a set of foundational guidelines that act as architectural pillars:

Principle 1: Standardized Processes

Create unified procedures that turn chaos into order:

- Develop prompt templates for recurring tasks

- Establish naming conventions for easy retrieval

- Document prompt evolution histories meticulously
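The three practices above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `PromptTemplate` class, the `project.task.variant` naming convention, and the in-memory history list are all assumptions made for the example.

```python
import re
from dataclasses import dataclass, field
from string import Template

# Hypothetical naming convention: project.task.variant, lowercase with underscores.
NAME_PATTERN = re.compile(r"^[a-z0-9_]+\.[a-z0-9_]+\.[a-z0-9_]+$")


@dataclass
class PromptTemplate:
    name: str
    text: str  # uses $placeholders (string.Template syntax)
    history: list[str] = field(default_factory=list)  # earlier versions, oldest first

    def __post_init__(self) -> None:
        # Enforce the naming convention so templates stay easy to retrieve.
        if not NAME_PATTERN.match(self.name):
            raise ValueError(f"name {self.name!r} does not follow project.task.variant")

    def revise(self, new_text: str) -> None:
        """Record the current text in the history, then replace it."""
        self.history.append(self.text)
        self.text = new_text

    @property
    def version(self) -> int:
        # Version 1 is the original text; each revision adds one.
        return len(self.history) + 1

    def render(self, **values: str) -> str:
        # substitute() raises KeyError if a placeholder has no value.
        return Template(self.text).substitute(**values)


tpl = PromptTemplate("noir_short.dialogue.base", "Write a dialogue between $a and $b.")
tpl.revise("Write a tense dialogue between $a and $b in $style style.")
print(tpl.version)
print(tpl.render(a="Character A", b="Character B", style="neo-noir"))
```

In a real deployment the history would more likely live in a Git repository or database than in memory, but the shape of the record stays the same.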

Principle 2: Conscious Resource Management

Balance computational resources smartly through:

- Model selection algorithms weighing cost/quality

- Credit budgeting systems across projects

- Output quality tiering based on importance

Platforms like ReelMind.ai demonstrate this principle beautifully, using PixVerse V4.5 (80 credits) for hero shots while deploying Kling V1.6 Std (30 credits) for background elements.
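A cost/quality selection rule of this kind might look like the sketch below. The credit costs are the ones quoted above; the `quality` values are placeholder assumptions (substitute your own measurements), and `pick_model` is a hypothetical helper, not part of any platform's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    credits: int    # cost per generation, as quoted in the text
    quality: float  # hypothetical 0-1 quality estimate; replace with measured values


OPTIONS = [
    ModelOption("PixVerse V4.5", credits=80, quality=0.9),
    ModelOption("Kling V1.6 Std", credits=30, quality=0.7),
]


def pick_model(options, min_quality, max_credits):
    """Return the cheapest option that meets the quality floor within the credit cap.

    Returns None when no option qualifies.
    """
    eligible = [o for o in options if o.quality >= min_quality and o.credits <= max_credits]
    return min(eligible, key=lambda o: o.credits, default=None)


hero = pick_model(OPTIONS, min_quality=0.85, max_credits=100)
background = pick_model(OPTIONS, min_quality=0.5, max_credits=100)
print(hero.name, background.name)
```

Choosing the cheapest model that clears a quality floor keeps premium credits for shots that need them; a different rule (for example, maximizing quality under a budget) would fit the same structure.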

Principle 3: Iterative Refinement Cycles

The janitor approach champions continuous improvement:

1. Generate initial version

2. Identify improvement areas

3. Adjust prompt parameters

4. Generate enhanced version

5. Repeat until quality benchmarks achieved
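The cycle above maps naturally onto a loop. The sketch below assumes three caller-supplied functions, `generate`, `score`, and `adjust`, which are hypothetical stand-ins for a model call, a quality check, and a prompt edit; it also adds a round limit so the loop cannot run forever.

```python
from typing import Callable


def refine(
    prompt: str,
    generate: Callable[[str], str],
    score: Callable[[str], float],
    adjust: Callable[[str, str], str],
    target: float,
    max_rounds: int = 5,
) -> tuple[str, str, float]:
    """Repeat generate -> score -> adjust until `target` is met or rounds run out.

    Returns the best (prompt, output, score) seen across all rounds.
    """
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    best = None
    for _ in range(max_rounds):
        output = generate(prompt)           # steps 1 and 4: generate a version
        quality = score(output)             # step 2: identify how far off it is
        if best is None or quality > best[2]:
            best = (prompt, output, quality)
        if quality >= target:               # step 5: stop once the benchmark is met
            break
        prompt = adjust(prompt, output)     # step 3: adjust the prompt
    return best


# Toy stand-ins so the loop can be run without any model.
result = refine(
    "hello",
    generate=lambda p: p.upper(),
    score=lambda out: min(1.0, len(out) / 20),
    adjust=lambda p, out: p + " more",
    target=0.9,
)
print(result)
```

Returning the best result rather than the last one guards against a late adjustment that makes the output worse.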

Building Your Custom Janitor AI Workflow

Constructing an effective custom janitor AI system unfolds across several developmental stages:

Stage 1: Needs Analysis

Begin with comprehensive requirement mapping:

| Requirement Category | Discovery Questions |
|---|---|
| Output Quality | What level of consistency is mandatory? |
| Volume Needs | What generation volume do you anticipate? |
| Model Diversity | Will multiple AI models be used together? |

Stage 2: Technology Selection

Choose tools based on functionality, not hype. Vital evaluation criteria include:

- Multi-model compatibility (OpenAI Sora, Runway Gen-4, etc.)

- Comprehensive reference management

- Transparent credit/resource tracking

- API availability for custom tools

ReelMind.ai leads with its library of 101+ AI models and Nolan AI Director for automated scene composition – critical for professional workflows.

Stage 3: Framework Construction

Build your organizational infrastructure:

- Create detailed style guides with approved vocabulary

- Develop central character/profile databases

- Catalogue environment/object libraries

- Implement Git-like version control

Well-designed frameworks reduce prompt engineering time by 63% according to MIT CSAIL’s 2025 research findings.

Advanced Prompt Optimization Strategies

Beyond foundation building, these advanced techniques elevate your janitor AI results:

Multi-Image Fusion Techniques

Maintaining character consistency requires robust reference systems:

Applied example: “Create dialogue between Character A (Ref_IMG_23a) and B (Ref_IMG_24b) in neo-noir style, preserving facial features and costumes from references.”
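Prompts like this are easier to keep consistent when they are assembled from a reference table rather than typed by hand. The helper below is a hypothetical sketch: `build_reference_prompt` and its parameters are invented for illustration, and the reference IDs are simply the ones from the example above.

```python
def build_reference_prompt(characters: dict[str, str], action: str, style: str) -> str:
    """Build a prompt that names each character together with its reference image ID.

    `characters` maps a display name to a reference ID; insertion order is kept.
    """
    if not characters:
        raise ValueError("at least one character reference is required")
    cast = " and ".join(f"{name} ({ref})" for name, ref in characters.items())
    return (
        f"Create {action} between {cast} in {style} style, "
        "preserving facial features and costumes from references."
    )


prompt = build_reference_prompt(
    {"Character A": "Ref_IMG_23a", "B": "Ref_IMG_24b"},
    action="dialogue",
    style="neo-noir",
)
print(prompt)
```

Because the reference IDs come from one dictionary, swapping a reference image means changing one entry instead of hunting through every prompt that mentions the character.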

Credit Allocation Mastery

Intelligently distribute resources using tiered strategies:

| Content Tier | Recommended Model | Budget Allocation |
|---|---|---|
| Hero Content | Runway Gen-4 (150 credits) | 40% of budget |
| Supporting Content | Luma Ray 2 (60 credits) | 30% of budget |
| Experimental Content | Kling V1.6 Std (30 credits) | 15% of budget |
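To see what these shares buy in practice, the sketch below converts a total credit budget into generation counts per tier. The models, costs, and percentages come from the table; the function name and the 10,000-credit budget are assumptions for illustration. Note that the three shares sum to 85%, so the remaining 15% (plus any rounding remainder) is reported as unallocated rather than assigned to a tier.

```python
# Tier table from above: tier -> (model, credits per generation, percent of budget).
TIERS = {
    "hero": ("Runway Gen-4", 150, 40),
    "supporting": ("Luma Ray 2", 60, 30),
    "experimental": ("Kling V1.6 Std", 30, 15),
}


def plan_generations(total_credits: int) -> dict[str, int]:
    """Return how many generations each tier can afford, plus leftover credits."""
    plan = {}
    spent = 0
    for tier, (_model, cost, percent) in TIERS.items():
        tier_budget = total_credits * percent // 100  # integer math avoids float rounding
        count = tier_budget // cost
        plan[tier] = count
        spent += count * cost
    plan["unallocated_credits"] = total_credits - spent
    return plan


print(plan_generations(10_000))
```

With a 10,000-credit budget this yields 26 hero, 50 supporting, and 50 experimental generations, leaving 1,600 credits unallocated.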

The AI Director Revolution

Platforms like ReelMind’s Nolan AI Director represent the cutting edge in janitor AI:

Automated Visual Storytelling

Nolan analyzes scenarios to recommend:

- Emotionally optimized camera angles

- Narrative-enhancing shot sequences

- Mood-specific lighting treatments

Intelligent Debugging

The system proactively identifies common issues:

- Character inconsistencies between scenes

- Environmental discontinuities

- Timeline sequence errors

- Stylistic deviations

Measuring Janitor AI Performance

Evaluate effectiveness through these key metrics:

Consistency Scoring

Quantifies scene-to-scene uniformity across:

- Character recognition matching

- Object persistence

- Environmental continuity
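The text does not specify how the three dimensions are combined, so the following is only one plausible sketch: a weighted average of three sub-scores, each assumed to lie between 0 and 1. The function name, the score range, and the default equal weights are all assumptions.

```python
def consistency_score(
    character: float,
    objects: float,
    environment: float,
    weights: tuple[float, float, float] = (1.0, 1.0, 1.0),
) -> float:
    """Combine three 0-1 sub-scores into one weighted average (assumed scheme)."""
    parts = (character, objects, environment)
    if any(not 0.0 <= p <= 1.0 for p in parts):
        raise ValueError("sub-scores must be between 0 and 1")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(p * w for p, w in zip(parts, weights)) / total_weight


# Equal weights: a plain average of the three dimensions.
print(round(consistency_score(0.9, 0.8, 0.7), 3))
# Character matching counted twice as heavily as the other two.
print(round(consistency_score(0.9, 0.8, 0.7, weights=(2.0, 1.0, 1.0)), 3))
```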

Cost-Per-Quality Unit (CPQU)

A value metric that relates credit cost to quality and output length:

CPQU = (Credit Cost) / (Quality Score × Output Duration)

A lower CPQU means more quality-weighted output per credit, which enables data-driven model selection.
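The CPQU formula translates directly into code. In the sketch below the quality scale (0 to 1) and the duration unit (seconds) are assumptions, since the text does not define them, and the quality values and 5-second duration in the usage example are placeholders rather than measurements.

```python
def cpqu(credit_cost: float, quality_score: float, duration_s: float) -> float:
    """Cost-Per-Quality Unit: credit cost divided by (quality score x output duration)."""
    if quality_score <= 0 or duration_s <= 0:
        raise ValueError("quality_score and duration_s must be positive")
    return credit_cost / (quality_score * duration_s)


def rank_by_cpqu(candidates: dict[str, tuple[float, float, float]]) -> list[str]:
    """Sort model names by CPQU, best value (lowest CPQU) first.

    `candidates` maps a model name to (credit_cost, quality_score, duration_s).
    """
    return sorted(candidates, key=lambda name: cpqu(*candidates[name]))


candidates = {
    "PixVerse V4.5": (80, 0.9, 5),   # quality and duration are placeholder values
    "Kling V1.6 Std": (30, 0.7, 5),
}
for name in rank_by_cpqu(candidates):
    print(name, round(cpqu(*candidates[name]), 2))
```

A lower CPQU is not automatically the right choice: a hero shot may justify a worse ratio in exchange for a higher absolute quality score.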

Troubleshooting Common Challenges

Even robust systems encounter issues requiring solutions:

Reference Image Degradation

Symptoms emerge as gradual deviations from original references and attributes bleeding between characters. Effective countermeasures include implementing strict reference silos and scheduled reinforcement cycles.

Prompt Over-Engineering

Over-engineering shows up as diminishing returns on added complexity and more frequent generation failures. The remedy is to apply simplicity filters and test each prompt component rigorously.

The Horizon of Janitor AI Technology

Emerging innovations are reshaping the landscape:

Self-Improving Prompt Networks

The next generation promises features like:

- Automatic refinement through reinforcement learning

- Cross-model performance benchmarking

- Predictive quality scoring systems

Decentralized Creation Marketplaces

Trends such as ReelMind’s community features point toward:

- User-trained model publishing

- Performance-based revenue models

- Collaborative improvement ecosystems

Frequently Asked Questions (FAQs)

How does janitor AI differ from traditional prompt engineering?

While traditional methods focus on crafting single effective instructions, janitor AI manages the entire prompt lifecycle from creation to retirement. It adds version control, model optimization, consistency preservation, and resource allocation across projects. Unlike standalone prompts, janitor AI builds interconnected systems with shared assets like character profiles and style guides, plus automated quality checks and improvement loops that traditional approaches lack.

What infrastructure supports custom janitor AI systems?

Implementation relies on three foundational layers:

- Storage: Vector databases for prompt/asset management

- Processing: AI orchestration platforms like ReelMind.ai

- Analytics: Performance monitoring systems

Enterprise deployments add:

- Multi-GPU scheduling capabilities

- Granular access controls

- API gateways for external integrations

Can janitor AI work with open-source LLMs?

Absolutely, though implementation strategies vary:

| Model Type | Integration Method |
|---|---|
| Llama 3 | Custom fine-tuning with specialized templates |
| Mixtral | Tailored adapter layers |

The key lies in establishing consistent interfaces across all models.
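One way to get a consistent interface is to define a small protocol that every model adapter must satisfy. The sketch below is illustrative only: `TextModel`, `EchoModel`, and `run_everywhere` are hypothetical names, and `EchoModel` is a stand-in that returns the prompt instead of calling a real model.

```python
from typing import Protocol


class TextModel(Protocol):
    """Minimal interface every adapter is assumed to expose."""

    name: str

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend for testing; a real adapter would call a model here."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


def run_everywhere(models: list[TextModel], prompt: str) -> dict[str, str]:
    """Send the same prompt to every model and collect outputs by model name."""
    return {m.name: m.complete(prompt) for m in models}


outputs = run_everywhere([EchoModel("llama"), EchoModel("mixtral")], "Describe the scene.")
print(outputs)
```

Because the rest of the system depends only on `complete`, adding another open-source model means writing one adapter class rather than touching the prompt library or the quality checks.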

How does credit optimization actually work?

Advanced systems use machine learning to predict:

- Quality curves across credit tiers

- Model specializations by content type

- GPU availability patterns

For example, a system might allocate premium PixVerse V4.5 to hero shots while using budget-friendly Flux Schnell for background elements.

What skills are needed to manage janitor AI systems effectively?

Successful teams blend diverse expertise:

- Technical: API integration and system monitoring

- Creative: Narrative design and stylistic direction

- Analytical: Metric tracking and cost-benefit analysis

Cross-functional teams have shown 42% better outcomes than specialized groups in recent industry studies.
