Human AI Chat Game: Spot the Differences in 2026

Explore the world of human AI chat games where you co‑write stories, solve puzzles, and engage in unique conversational adventures with artificial intelligence.

Introduction

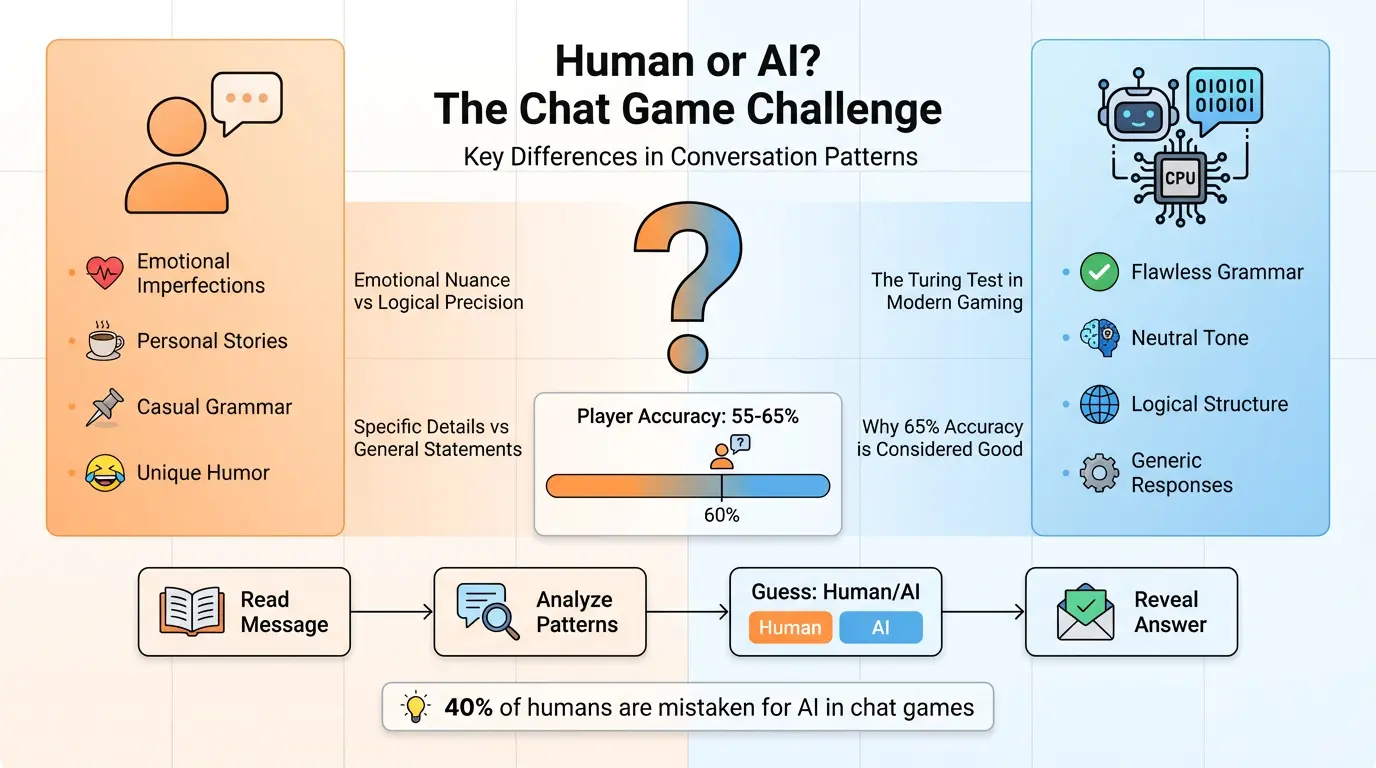

The idea of a game that pits human wit against machine logic feels almost like science fiction at first glance, yet today it is a popular pastime for a large online audience. Each time you read a message and wonder whether a witty remark came from an unseen person or a finely tuned neural network, you tap into a deeper curiosity about identity and authenticity in the digital age. The thrill of guessing offers a fun diversion, but it also reveals subtle cues about how humans and machines communicate.

Despite its lighthearted appearance, the human AI chat game invites players into a world of evolving technology, psychological cues, and cultural shifts that shape our everyday digital interactions. It becomes a microcosm of broader conversations around trust, transparency, and the ways we integrate AI into daily life. Understanding this game therefore provides a window into the mechanics of human language, the implications of artificial intelligence, and how society responds to nearly imperceptible differences.

In this guide, you will discover the mechanics that make these games engaging, the lessons players can glean about human behavior and AI capabilities, and the ways developers can build their own versions. You’ll also explore practical applications ranging from education to business, all while enjoying an immersive and delightful challenge. By the end, you’ll return to your screens knowing how to spot common bot cues, what those cues reveal about contemporary language models, and how to design a game that both entertains and informs.

The human AI chat game is more than a guessing challenge; it is a bridge between technology and human experience. It turns everyday conversation into a playground and gives everyone a chance to test their intuition. We will walk through everything from its origins to its future, coupling theory with real‑world examples along the way. Let’s dive in.

Remember, the bigger question is not just who won, but what we learn about how we communicate and how machines learn to do the same. The game deliberately highlights that learning aspect, providing a context for discussion that goes well beyond any particular score. You’ll find that each round, each hint, and each revelation is a learning opportunity in itself.

Now that you’re primed for the journey, let’s start with the roots of these games and how they evolved from the earliest Turing test experiments to today’s viral social media challenges.

Historical Roots: From Turing to Viral Social Media

The concept of distinguishing machine from human dates back to Alan Turing’s 1950 paper proposing a test of machine intelligence based on conversational indistinguishability. The original Turing Test set the foundation for what we now see in modern human AI chat games – a playful, experimental framework where human participants try to spot the artificial voice behind a chat message. This lineage explains why the game carries such weight: it is a distilled version of a historical debate about the nature of intelligence.

In the 1960s, ELIZA emerged as one of the earliest conversational agents, using pattern matching to mimic a psychotherapist. While simple by today’s standards, ELIZA’s ability to create a semblance of empathy drew attention to the subtle ways humans spot inauthenticity. This early milestone highlighted that even rudimentary AI could game human perception, a premise that fueled later iterations of the human AI chat game.

Fast forward to the 1990s and the advent of chatbots in customer service: companies began using simple rule‑based systems to handle common queries. Though limited in nuance, these bots introduced the public to the idea that a machine could hold a conversation. They were easily distinguishable, but the concept had now migrated from theoretical debate to practical application. The stage for a guessing game was set.

With the rise of sophisticated neural language models in the late 2010s and early 2020s, the gap narrowed dramatically. Models like GPT-3 (released in 2020) could generate surprisingly human-like text across a wide range of topics and tones. This deepened the intrigue: could ordinary users still tell a bot from a human? The question became a viral trend on TikTok, Reddit, and YouTube, giving birth to a new generation of human AI chat games that are accessible to anyone with an internet connection.

When social media amplified these experiences, people began sharing clips of themselves “guessing” whether a response came from a human or an AI. The sheer volume of shared content built community momentum around the game, turning it into a shared cultural practice rather than a niche experiment. The public now participates regularly, probing countless questions about authenticity.

Through this historical journey, we see a pattern: each technological leap broadens the field of what it means to converse with intelligence. The human AI chat game stands at this intersection, offering a tangible way to engage with evolving AI capabilities and to reflect on the broader questions about human uniqueness.

This foundation frames the rest of our exploration: what ingredients make a game feel genuine, how players can sharpen their detection skills, and how developers can replicate or innovate on this classic concept in today’s digital landscape.

Rise of Advanced Language Models

The rapid growth of transformer-based models moved natural language processing from rule-based responses to fluid, context-aware conversation. Modern models can retain a dialogue history spanning dozens of turns, adapting to both topic shifts and emotional tone in real time. This leap directly affects the human AI chat game by blurring the boundary between machine output and authentic human writing.

In practical terms, the introduction of large‑scale pre‑training on internet text provided these models with an encyclopedic grasp of the world and an adaptive sense of style. A human can quickly notice the smoothness of a bot’s sentence structure, the almost perfect punctuation, or how the model effortlessly switches topics. Those are subtle clues that can be exploited to identify a bot. Yet, the very same features make bots feel increasingly human.

The advantage of such models is that they can deliver coherent, informative, and entertaining responses from only a short prompt. The game’s challenge now hinges on whether a user can detect subtle pragmatic slips: missed sarcasm, generic anecdotes, or a lack of personal context. Because the model’s output varies from one exchange to the next, every interaction feels fresh, reinforcing the thrill of the game.

Because developers can now generate responses on the fly, some variations of the game pit two rival participants against each other, one human and one AI, competing in real time. Players watch the human type and the bot generate its reply, and they must decide which is which. The more capable the model, the fewer distinctive “bot fingerprints” remain, raising both the difficulty and the appeal of the challenge.

Observing how models manipulate phrasing offers insight into human cognition. The scarcity of certain linguistic patterns acts as a statistical signature that trained humans can pick up on. The human AI chat game harnesses that information to create a learning opportunity, enabling participants to sharpen their linguistic intuition as a practical skill for everyday AI‑rich interactions.

Consequently, the game serves as informal education on why neural language models behave as they do. It highlights how token embeddings, attention mechanisms, and learned probability distributions all conspire to produce a final sentence. For players, the experience is not just about a single round but a deeper appreciation of the underlying science.
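To make that last point concrete, here is a minimal sketch of the final decoding step, assuming a made-up four-word vocabulary and hand-picked scores (logits). A temperature-scaled softmax turns the scores into probabilities, and a weighted random draw picks the next word. Real models repeat this over vocabularies of tens of thousands of tokens, and many add strategies such as top-k or nucleus sampling; this is an illustration, not any specific model's decoder.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw model scores (logits) into probabilities that sum to 1."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(vocab, logits, temperature=1.0, rng=random):
    """Draw one token at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]


# Hypothetical scores a model might assign to candidate next words.
vocab = ["great", "fine", "okay", "splendiferous"]
logits = [3.0, 2.0, 1.5, -1.0]

rng = random.Random(0)
print([sample_next_token(vocab, logits, temperature=0.7, rng=rng) for _ in range(5)])
```

Lower temperatures concentrate probability on the top-scoring word, which is one reason a heavily tuned bot can read as unusually smooth and predictable.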

In the next section, we’ll articulate what key elements differentiate a successful human AI chat game from a simple entertaining prompt, and how design choices shape players’ experience.

Core Design Principles of a Successful Human AI Chat Game

At its core, a compelling human AI chat game relies on three pillars: convincingly authentic text, transparent scoring, and actionable feedback. The first pillar ensures that the responses read naturally enough to keep players guessing. The second, the scoring system, provides instant gratification and a sense of progress. The third turns outcomes into learning, making every guess a mini-lesson on bias and linguistic patterns.

Authenticity can be achieved by combining real human responses with AI-generated ones. For instance, pairing a casually typed human reply with a polished AI answer lets players test their instincts on “realistic” versus “artificial.” Randomizing which reply appears in which position keeps rounds fair, since the layout itself gives nothing away, and keeps players engaged.
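A minimal sketch of that randomization, assuming each round pairs exactly one human reply with one AI reply. The `Round` type and the `build_round` and `check_guess` helpers are hypothetical names for illustration, not part of any particular game.

```python
import random
from dataclasses import dataclass


@dataclass
class Round:
    prompt: str
    responses: list[str]  # texts shown to the player, in display order
    human_index: int      # answer key: position of the human-written reply


def build_round(prompt, human_reply, ai_reply, rng=random):
    """Shuffle one human and one AI reply so display position reveals nothing."""
    labelled = [("human", human_reply), ("ai", ai_reply)]
    rng.shuffle(labelled)
    texts = [text for _, text in labelled]
    human_index = next(i for i, (source, _) in enumerate(labelled) if source == "human")
    return Round(prompt, texts, human_index)


def check_guess(game_round, guessed_index):
    """True when the player picked the human-written reply."""
    return guessed_index == game_round.human_index


r = build_round(
    "What did you do last weekend?",
    "honestly just fixed my bike lol, chain kept slipping",
    "Last weekend I enjoyed a relaxing hike and caught up on some reading.",
    rng=random.Random(42),
)
for i, text in enumerate(r.responses):
    print(i, text)
```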

Transparent scoring mechanisms help players understand why they got a particular round right or wrong. Visual indicators, such as green for correct and red for wrong, paired with a brief explanation of the cues they caught or missed, tighten the learning loop. For example: “You spotted the perfect grammar but overlooked the lack of emotional punch.” Such feedback encourages players to look for those attributes in later rounds.
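The feedback loop described above might be sketched as follows, assuming the AI reply has already been tagged with cue labels by a moderator or a classifier. The `CUE_HINTS` labels and the `score_guess` helper are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical cue labels attached to the AI reply by a moderator or classifier.
CUE_HINTS = {
    "perfect_grammar": "the perfect grammar",
    "no_emotion": "the lack of emotional punch",
    "generic_anecdote": "the generic anecdote",
}


@dataclass
class Feedback:
    correct: bool
    color: str    # "green" for a correct guess, "red" for a wrong one
    message: str


def score_guess(correct, noticed_cues, ai_cues):
    """Explain which of the AI reply's cues the player caught and which they missed."""
    caught = [CUE_HINTS[c] for c in ai_cues if c in noticed_cues]
    missed = [CUE_HINTS[c] for c in ai_cues if c not in noticed_cues]
    parts = []
    if caught:
        parts.append("you spotted " + " and ".join(caught))
    if missed:
        parts.append("you overlooked " + " and ".join(missed))
    if parts:
        text = ", but ".join(parts) + "."
        text = text[0].upper() + text[1:]
    else:
        text = "No cues were recorded for this round."
    return Feedback(correct, "green" if correct else "red", text)


fb = score_guess(False, {"perfect_grammar"}, ["perfect_grammar", "no_emotion"])
print(fb.color, "-", fb.message)
```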

Actionable feedback transforms the game from amusement into a skill‑building exercise. Players receive a checklist of linguistic tags—like “toxicity,” “paraphrase,” or “personal anecdote”—that can help them refine their intuition. Moreover, these insights can be applied outside the game, such as evaluating news authenticity or detecting bot‑generated spam.

The game can further amplify its social aspect by integrating leaderboards or timed challenges. Players share best scores, sparking friendly competition. The social dimension pushes players to test their skills more often, thus strengthening their detection abilities. It also creates a community backdrop for discussing patterns, shared observations, and emotional responses.

In this scheme, users truly feel guided rather than lectured; they have agency and see tangible progress. The learning curve is natural, stepping from simple observation of grammar to more complex pattern recognition, thanks to incremental difficulty and real‑time adjustments in the opponent’s style.

In designing a human AI chat game, developers must remain mindful of these pillars. They require a balance of entertainment, learning opportunities, and transparency. The result is a product that serves both as a desirable pastime and as an educational tool that cultivates crucial digital literacy.

Learning Objectives for Players

Each round of the human AI chat game equips the player with at least one concrete skill that applies beyond the game itself. The primary learning objective is to sharpen critical thinking: the player must balance intuition against objective evidence. In practice, a systematic approach, carefully noting grammatical quirks, emotional cadence, or response latency, makes detection more reliable.

Second, the game fosters linguistic empathy. Players discuss why certain expressions feel natural or overstated, encouraging reflection on how humans craft messages to convey tone. As they analyze, they actively practice empathy, a skill critical for respectful communication, whether the other party is human or artificial.

Third, players gain an informal sense of AI literacy. By observing patterns that differentiate human and machine, they learn the strengths and blind spots of current language models. This knowledge is vital when encountering AI-generated content online, strengthening their media literacy and helping protect them from misinformation.

Fourth, the game develops observational discipline. Users learn to audit details such as word choice, sentence structure, and pacing without letting emotional bias influence their judgment. This disciplined perspective is broadly applicable, for instance in detecting phishing attempts or satirical click-bait.

Finally, the experience cultivates open curiosity. An uninhibited sense of wonder about how code simulates language encourages players to explore more sophisticated AI projects, perhaps inspiring future advances or a career in AI ethics.

By integrating these objectives into the design, developers ensure that the human AI chat game remains more than a pastime. It becomes a modest but powerful learning platform, systematically raising awareness about the subtleties of digital conversation.

The ripple effect of these learning outcomes can be substantial, feeding into a more discerning society where individuals routinely question and verify the origin of their information. Today’s playful rounds plant seeds of lifelong critical engagement.

Game Mechanics and Variations (See Image Below)

The core mechanic that unites the most popular variations of a human AI chat game is simple: a round consists of a randomized prompt, several responses (some from a human, some from a model), and then a guessing phase. Four to six categories keep the experience dynamic, such as humor, opinion, advice, narrative, and trivia. Players rotate through the categories so they cannot lean on category-specific patterns.
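One way to implement the category rotation is to shuffle the full category list for each pass, so every category appears once per pass and none repeats back to back. This is a sketch under that assumption; the category names come from the text above, and `category_schedule` is a hypothetical helper.

```python
import random

CATEGORIES = ["humor", "opinion", "advice", "narrative", "trivia"]


def category_schedule(n_rounds, categories=CATEGORIES, rng=random):
    """Return n_rounds categories in shuffled passes: each pass covers every
    category once, and no category is played twice in a row."""
    schedule = []
    while len(schedule) < n_rounds:
        batch = list(categories)
        rng.shuffle(batch)
        # Avoid a repeat at the seam between two passes.
        if schedule and len(batch) > 1 and batch[0] == schedule[-1]:
            batch[0], batch[-1] = batch[-1], batch[0]
        schedule.extend(batch)
    return schedule[:n_rounds]


print(category_schedule(8, rng=random.Random(7)))
```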

The mechanic’s beauty lies in its simplicity: prompts are short, responses are easy to read, and the decision space is narrow. That simplicity, however, masks depth once players start noticing subtle differences. Response timestamps add latency cues (humans sometimes pause, while an AI may reply almost instantly), which players can exploit.

Score trackers help maintain motivation. When a correct identification earns points, a small particle animation celebrates the win. Incorrect guesses expose misapplied heuristics, and the system offers a short hint afterward. Hints may point to telltale patterns (“Did you notice the multiple semicolons?”), encouraging players to test specific hypotheses in future rounds.

In advanced versions, the game offers variable difficulty by adjusting the AI’s verbosity or by blending in a persona that deliberately chats about mundane, everyday topics the way a person would. The more casual the AI appears, the harder the identification. Letting players customize these thresholds gives them control over challenge intensity, keeping the experience engaging for all skill levels.
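Difficulty presets could be expressed as plain settings that are turned into instructions for the AI opponent. The preset values and the `persona_instructions` helper below are hypothetical placeholders; real values would come from playtesting, and the resulting text would be passed to whichever model API the game uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Difficulty:
    max_words: int        # verbosity cap for the AI opponent's replies
    casual_persona: bool  # ask the model to chat about mundane topics like a person


# Hypothetical presets; real values would come from playtesting.
PRESETS = {
    "easy": Difficulty(max_words=120, casual_persona=False),
    "medium": Difficulty(max_words=60, casual_persona=True),
    "hard": Difficulty(max_words=25, casual_persona=True),
}


def persona_instructions(level):
    """Build the instruction text sent to the AI opponent for a difficulty level."""
    settings = PRESETS[level]
    parts = [f"Reply in at most {settings.max_words} words."]
    if settings.casual_persona:
        parts.append("Chat casually about everyday things, the way a person texting a friend would.")
    return " ".join(parts)


print(persona_instructions("hard"))
```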

Another variation is the real-time chat mode, where a live person and the AI chat concurrently. The flow mirrors messaging apps, adding a layer of realism. Players feel the adrenaline of real conversation while testing their intuition, blending the feel of social media with the structure of an educational game.

These mechanics highlight a vital iterative pattern: every round delivers a bite-size lesson, and deliberate practice reinforces improvement. Because the mechanics adapt to different contexts, from workplace chat to casual conversations with friends, they remain evergreen.

In short, these mechanics create a Sunday-night challenge where entertainment and learning coexist, forming a self-reinforcing loop that keeps players returning week after week.

Psychological Cues: What Tricks the Brain into Spotting or Overlooking Bots

Human brains evolved to detect subtle social cues: micro-expressions, emotional intonation, and indirect references. In text, only some of these survive, such as tone and indirect references, yet they still help us judge whether a message came from another living mind or a machine. However, because advanced models are trained on billions of sentences, they reproduce many of these cues convincingly, which leads to misjudgment.

One key cue is the presence of “thinking pauses” or self‑interjections—like “uhm” or “so…”— that humans naturally insert when deliberating. AI responses often come in a flurry; the absence of a pause can hint at a bot. Yet, when an AI is instructed to “think” or adds filler words intentionally, the signature becomes less obvious, challenging players to refine their heuristics.

Conversely, AI-generated text frequently displays a high degree of semantic uniformity and is often devoid of personal anecdotes. Humans, even in brief replies, tend to slip in an incidental memory or a playful side comment. Spotting that richness in a reply is a useful marker of human authorship.

Another factor is the treatment of humor. Machine-generated jokes often lean on wordplay or commonplace puns because those patterns are easy to reproduce statistically. Human humor, however, relies on timing, culture, and sarcasm that are harder for a model to capture fully. Watching for nuanced sarcasm or meta-humor can tip the player toward the human side.

Players also rely on meta-cues such as the consistency of subject-verb agreement or signs of overconfidence. AI usually maintains rigorous grammatical accuracy but may adopt a deflective or generic stance on contentious topics. Humans, by contrast, more often hedge with doubtful or conversational qualifiers, creating another detection layer.

Finally, emotional coherence across a conversation provides a strong signal. Bots often handle each turn in isolation—responding to content but not building an emotional arc. If a reply feels disjointed or incorrectly aligned with the preceding context, that can indicate that a bot is reconstructing an answer from prompts that lack real sequential memory.
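Several of these cues can be approximated with simple counts. The sketch below is a crude heuristic, assuming hand-picked word lists for fillers (thinking pauses), hedges (qualifiers), and first-person words (a rough proxy for personal anecdotes). It is not a validated detector, and each count is only a weak signal on its own.

```python
import re

# Hypothetical, hand-picked word lists; a real game would tune them on labelled data.
FILLERS = {"uhm", "um", "uh", "hmm", "so", "well"}
HEDGES = ["maybe", "probably", "i think", "i guess", "not sure", "kind of"]
FIRST_PERSON = {"i", "me", "my", "we", "our"}


def cue_features(text):
    """Count filler words, hedging qualifiers, and first-person words.
    Higher counts lean 'human', but none is reliable on its own."""
    lowered = text.lower()
    words = re.findall(r"[a-z']+", lowered)
    return {
        "fillers": sum(w in FILLERS for w in words),
        "hedges": sum(len(re.findall(rf"\b{re.escape(h)}\b", lowered)) for h in HEDGES),
        "first_person": sum(w in FIRST_PERSON for w in words),
    }


print(cue_features("Hmm, so I think my cat did that once, maybe?"))
print(cue_features("Cats are curious animals that enjoy exploring."))
```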

These cues illustrate the complexity of the detection puzzle. The more players practice identifying them, the more intuitive the feel becomes. Moreover, by training human players to point out these subtle patterns, we build a pool of individuals less susceptible to AI‑generated misinformation.

Cultural Impact and Societal Change

As human AI chat games spread, they entered mainstream conversations, influencing how society perceives AI. The games spurred debates about the authenticity of online communication and prompted greater public scrutiny of AI‑generated content, especially on social platforms where bots sometimes fabricate influence.

The rise of “deepfake” language creates new cultural friction: trust in digital identity becomes harder to establish. These games effectively democratize the ability to spot fabrication, making AI literacy an everyday necessity. Their influence now extends into corporate training, media literacy curricula, and even regulatory policies that mandate transparency about authorship.

Marketing teams capitalize on this dynamic, recognizing that consumers value authenticity. Incorporating human AI chat game elements, such as tests that compare human-written and bot-written content, into brand strategy can build deeper engagement. Brands that transparently disclose AI usage can gain social proof and credibility.

In scientific circles, researchers use variations of the game to study adversarial attacks against AI models. By turning the detection problem into a game, researchers benefit from a larger, crowdsourced data pool for analyzing AI behavior at scale, which opens new research avenues.

The societal impact continues in governance. Policymakers increasingly examine guidelines for labeling AI‑generated content. The human AI chat game underscores the need for clear user interfaces that reflect content origin, encouraging developers to embed “bot flags” or “human flags” in chat logs or posts.

Competitive communities have also adopted the game, organizing esports-style tournaments where players compete in rapid prediction rounds. These events spread the game globally and nurture communities where strategists share advanced detection techniques through forums and workshops.

Through these channels, the human AI chat game does not merely serve entertainment but also informs public understanding of AI’s true capabilities. The ripple effect is evident in increased demand for digital literacy courses, data‑driven awareness, and a more vigilant society that questions not just the content but also its origin.

Educational Applications: From Classrooms to MOOCs

Educators have begun to integrate human AI chat games into lessons on reading comprehension, critical thinking, and media literacy. By comparing human and machine responses, students learn to interrogate the trustworthiness of sources, a skill becoming essential in an era of AI‑generated essays and fake news.

In a typical lesson, the teacher presents a series of prompts, each with a human and an AI answer. Students, working in pairs, annotate differences in tone, structure, and content. They then justify which they believe is human and why. This collaborative analytic process promotes active learning and deepens understanding of textual analysis tools.

Beyond primary education, universities use these games to introduce students to natural language processing. By working with real data generated by large‑scale models, students can experimentally validate theoretical concepts they study in class, reinforcing classroom theory with tangible experience.

Massive Open Online Course (MOOC) providers have adopted the game as an interactive module in AI and ethics courses. Learners can test their own AIs or submit responses and receive automated feedback. This gamified approach can improve completion rates and encourage deeper engagement with complex material such as transformer architectures and bias mitigation.

In higher education, the human AI chat game’s data can feed research on human perception of AI outputs. By collecting millions of predictions, researchers can perform statistical analysis on the success rates of varied demographics, thus building robust models of public trust and detection ability.

By placing the game in educational contexts, institutions give students the tools to evaluate AI’s influence more confidently. The experience equips future professionals, whether journalists, policymakers, or software developers, with an instinct that helps them navigate a world saturated with automated interactions.

Business and Customer Service Integration

Many enterprises deploy chatbots to reduce costs and expand customer support, but customer experience metrics often indicate that users prefer human conversation for complex queries. The human AI chat game offers a way to gauge chatbot effectiveness: after a chat, the system can prompt a quick “Were you talking to a human or a bot?” survey, collecting data on how detectable the bot is.
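The survey results reduce to a simple detectability rate. This sketch assumes a hypothetical answer format of `"human"`, `"bot"`, or `"unsure"` strings collected after chats that the bot actually handled; unsure answers are left out of the rate.

```python
def detectability(answers):
    """Share of definite survey answers that said 'bot' after a bot-handled chat.
    `answers` is a hypothetical list of "human" / "bot" / "unsure" strings."""
    definite = [a for a in answers if a in ("human", "bot")]
    if not definite:
        return None  # no usable answers yet
    return sum(a == "bot" for a in definite) / len(definite)


print(detectability(["bot", "human", "bot", "unsure"]))
```

A falling rate across releases would suggest the bot is becoming harder to tell apart from human agents.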

Analyses of these surveys can reveal correlations between customer satisfaction scores and the bot’s conversational style. Businesses can then refine their conversational flows to emulate the subtle cues that make responses feel more human: varied sentence lengths, occasional personal remarks, or paced replies that mimic typing delays.
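Paced replies can be approximated by delaying each bot message in proportion to its length. The typing speed (about four characters per second) and the random jitter below are assumptions rather than measured values, and `typing_delay` is a hypothetical helper.

```python
import random


def typing_delay(reply, chars_per_second=4.0, jitter=0.3, rng=random):
    """Seconds to wait before showing a bot reply so it arrives at a
    human-like typing pace; never less than half a second."""
    base = len(reply) / chars_per_second
    return max(0.5, base * rng.uniform(1 - jitter, 1 + jitter))


print(round(typing_delay("Sure, I can help with that order.", rng=random.Random(5)), 2))
```

In an async chat server, the reply would be sent after `await asyncio.sleep(typing_delay(reply))`.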

Furthermore, the game is a useful tool for training customer service representatives. New hires play rounds against AI and human responses, learning to spot communication pitfalls. Those lessons can translate into faster, more empathetic real-world interactions, which in turn support conversion and retention.

Marketing teams can also use the game for creative campaigns, co-creating brand-themed prompts and gathering user participation. The engagement metrics from those mini-competitions can outperform conventional static content, suggesting that interaction drives brand loyalty.

In a more advanced setting, a business may self‑host a private version of the human AI chat game for internal testing. Through iterative feedback loops, engineers can push the boundaries of the AI product’s human-like qualities before public rollout. The result is a chatbot that feels more natural and passes through the company’s own informal detection maze.

From a regulatory standpoint, compliance in many jurisdictions increasingly requires clear disclosure when users interact with AI. The game can act as a training ground for the legal department, familiarizing staff with possible gray areas and best practices for transparent labeling, thereby mitigating future liability risks.

Detection Tools and Methodologies

Although the human AI chat game itself invites people to detect bots through intuition, many professionals have turned to statistical tools for detection. Two predominant categories exist: language modeling metrics and behavioral analysis. The former evaluates the likelihood of a sentence under a known language model, while the latter tests for timings, typing patterns, or server latency data.

One such tool uses perplexity scoring. Perplexity measures how surprised a language model is by a text: rare or unexpected phrasing receives lower predicted probability and therefore raises perplexity. Because AI systems tend to produce high-probability word sequences, machine-generated text usually shows low perplexity, whereas human writing tends to score higher on average. Practitioners can therefore set thresholds that flag suspiciously low-perplexity responses for closer review.
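Perplexity is the exponential of the average negative log-probability a model assigns to each token: PPL = exp(-(1/N) Σ log p(w_i)). Real detectors compute it with a pretrained language model; to stay self-contained, the sketch below swaps in a toy add-one-smoothed unigram model trained on a tiny reference corpus. The `looks_machine_like` threshold rule is hypothetical and would need calibration on labelled data.

```python
import math
from collections import Counter


def train_unigram(corpus_tokens):
    """Toy stand-in for a real language model: add-one-smoothed word
    frequencies, with every unseen word treated as one extra 'unknown' entry."""
    counts = Counter(corpus_tokens)
    total = sum(counts.values())
    vocab_size = len(counts) + 1  # +1 for the shared unknown-word entry

    def prob(token):
        return (counts[token] + 1) / (total + vocab_size)

    return prob


def perplexity(tokens, prob):
    """exp of the average negative log-probability per token."""
    if not tokens:
        raise ValueError("need at least one token")
    avg_nll = -sum(math.log(prob(t)) for t in tokens) / len(tokens)
    return math.exp(avg_nll)


def looks_machine_like(ppl, threshold):
    """Hypothetical rule: unusually low perplexity is flagged for review."""
    return ppl < threshold


reference = "the cat sat on the mat the dog sat on the rug".split()
prob = train_unigram(reference)
print(round(perplexity("the cat sat".split(), prob), 2))         # familiar wording
print(round(perplexity("quantum zebra hums".split(), prob), 2))  # surprising wording
```

With a real model, the same `perplexity` formula would be applied to the model's own token probabilities, and the threshold would be tuned so that human replies are rarely flagged.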

Another method leverages n-gram frequency distributions. AI models often overuse certain collocations, creating a language signature distinct from the human distribution. In practice, developers compare “n-gram density” across texts to calculate a Bot-Score, a metric that can automatically label messages with a high probability of AI origin.
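The exact Bot-Score formula varies by tool, so the sketch below is one plausible construction, not a standard definition: it builds bigram count profiles and compares a message against an AI reference text and a human reference text using cosine similarity. The reference snippets and the `bot_score` helper are assumptions for illustration; a usable system would need far larger reference corpora.

```python
import math
from collections import Counter


def bigram_profile(text):
    """Count adjacent word pairs (bigrams) in lower-cased text."""
    words = text.lower().split()
    return Counter(zip(words, words[1:]))


def cosine(a, b):
    """Cosine similarity between two count profiles (0.0 if either is empty)."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def bot_score(text, ai_reference, human_reference):
    """Share of the text's similarity that points to the AI profile, in [0, 1].
    0.5 means equally close to both references, or to neither."""
    profile = bigram_profile(text)
    sim_ai = cosine(profile, bigram_profile(ai_reference))
    sim_human = cosine(profile, bigram_profile(human_reference))
    total = sim_ai + sim_human
    return sim_ai / total if total else 0.5


# Hypothetical reference snippets; real ones would be large corpora.
ai_ref = "it is important to note that it is important to consider all perspectives"
human_ref = "lol my dog ate my homework again honestly cannot even"
print(bot_score("it is important to note this", ai_ref, human_ref))
```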

A complementary approach studies response timing. Human typing varies with thinking pauses or stress, while AI responses tend to arrive in sub-second bursts. By logging user input times and aligning them with message timestamps, tools can estimate the probability of human versus machine origin, giving managers a real-time detection layer.
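A minimal timing check, assuming the client logs a timestamp (in seconds) for each keystroke. It summarizes the gaps between keystrokes and applies a hypothetical rule: uneven gaps or long pauses lean human, while near-uniform tiny gaps suggest pasted or generated text. A production system would feed features like these into a calibrated model to obtain an actual probability.

```python
import statistics


def timing_profile(keystroke_times):
    """Summarize gaps (seconds) between consecutive keystroke timestamps."""
    gaps = [b - a for a, b in zip(keystroke_times, keystroke_times[1:])]
    if len(gaps) < 2:
        return None  # too little data to judge
    mean_gap = statistics.mean(gaps)
    cv = statistics.stdev(gaps) / mean_gap if mean_gap > 0 else 0.0
    return {"mean_gap": mean_gap, "cv": cv, "longest_pause": max(gaps)}


def looks_human(profile, min_cv=0.3, min_pause=1.0):
    """Hypothetical rule: uneven gaps (high coefficient of variation) or a
    noticeable pause lean human; near-uniform tiny gaps lean machine or paste."""
    if profile is None:
        return False
    return profile["cv"] >= min_cv or profile["longest_pause"] >= min_pause


typed = [0.0, 0.2, 0.5, 0.6, 2.1, 2.3]        # irregular, with a thinking pause
burst = [0.0, 0.01, 0.02, 0.03, 0.04, 0.05]   # near-instant, uniform
print(looks_human(timing_profile(typed)), looks_human(timing_profile(burst)))
```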

Tools such as TextEcco and BotDetect reportedly bundle these features behind single APIs. Such libraries are typically configurable, letting developers stack several detection methods into a combined confidence score. Commercial AI vendors offer services that claim up to 92% accuracy when statistical filtering is combined with human-in-the-loop verification.
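Stacking several detectors into one confidence score can be done with a weighted logistic blend, as sketched below. This is a generic technique, not a description of how the tools named above work; the detector names, weights, and bias are placeholders that would normally be fitted (for example, by logistic regression) on labelled human and AI examples.

```python
import math


def combined_confidence(scores, weights, bias=0.0):
    """Blend detector scores (each in [0, 1], higher = more bot-like) into one
    value in (0, 1) with a logistic function. Each score is centred at 0.5 so a
    neutral detector contributes nothing."""
    if scores.keys() != weights.keys():
        raise ValueError("every detector score needs a matching weight")
    z = bias + sum(weights[name] * (scores[name] - 0.5) for name in scores)
    return 1.0 / (1.0 + math.exp(-z))


# Placeholder weights; in practice they would be fitted on labelled examples.
weights = {"perplexity": 3.0, "ngram": 2.0, "timing": 4.0}
scores = {"perplexity": 0.8, "ngram": 0.6, "timing": 0.9}
print(round(combined_confidence(scores, weights), 3))
```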

The suitability of these tools depends on the environment. In high-stakes contexts, such as legal verification or election security, statistical scores should not be treated as proof on their own; stronger assurance comes from pairing them with certified cryptographic signatures that attest to message integrity. In marketing, the same tools can simply filter out clichés or generic content to protect brand authenticity.

Importantly, while detection tools help triage potential shill content, the human AI chat game remains a complementary training method. By practicing detection heuristics alongside algorithmic detection, a company can keep a human-centered approach to content review.

Future Trends: AI Evolution and Game Development

Predicting the exact trajectory of AI and its impact on human AI chat games remains speculative. Yet, a few common themes emerge: increased model context windows, multimodal integration, and user‑generated persona frameworks. The larger the context window, the more coherent an AI conversation becomes, reducing detectable “jumping topics” in the conversation. Consequently, games will transition from short prompts to extended dialogues that mimic real‑world exchanges.

Multimodal models blend text with images, voice, and video. Future versions of the game may offer a “rich media chat,” where players must differentiate between AI‑and‑human‑generated images or audio. The detection challenge shifts to an integrative sensory domain, deepening the complexity.

Additionally, the environmental cost of training large models is a pressing concern. Anticipated advances such as sparsity techniques and knowledge distillation should reduce computing footprints. When game developers adopt lighter models, they may notice a shift in conversational style, such as more concise replies, potentially changing the diagnostic cues players rely on.

From the gaming side, adaptive difficulty algorithms could take real-time feedback from a player’s performance (confidence, latency, error patterns) and apply a reinforcement learning loop to tweak the AI’s personality. In that scenario, players encounter a personal bot that evolves to match their own conversational style more closely, making detection a continuous, AI-driven challenge.
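As a much simpler stand-in for the reinforcement learning loop described above, a feedback rule can keep a player’s recent success rate near a target by nudging a difficulty level up or down. The target rate, window size, and step size in this sketch are assumptions, and the resulting level could drive settings such as the AI’s verbosity or persona.

```python
from collections import deque


class DifficultyController:
    """Nudge a difficulty level (0.0 easiest, 1.0 hardest) so the player's
    recent success rate stays near a target."""

    def __init__(self, target=0.7, window=10, step=0.05, start=0.5):
        self.target = target
        self.step = step
        self.level = start
        self.recent = deque(maxlen=window)  # 1 = correct guess, 0 = wrong

    def record(self, correct):
        """Log one guess and return the updated difficulty level."""
        self.recent.append(1 if correct else 0)
        rate = sum(self.recent) / len(self.recent)
        if rate > self.target:
            self.level = min(1.0, self.level + self.step)  # player is cruising
        elif rate < self.target:
            self.level = max(0.0, self.level - self.step)  # player is struggling
        return self.level


controller = DifficultyController()
for guess in [True, True, True, False, True]:
    print(round(controller.record(guess), 2))
```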

Furthermore, regulation could mandate a “digital watermark” for AI-generated text, such as a signed header identifying the engine used. The human AI chat game could then evolve into a verification tool that checks watermark data, raising the stakes for creators and consumers alike.
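A signed header could work roughly as sketched below, using an HMAC-SHA256 tag from Python’s standard library over the header and the text. This illustrates metadata signing, not statistical watermarks hidden in word choice; it detects tampering only while the header travels with the text, and because HMAC relies on a shared secret, a publicly verifiable scheme would use asymmetric signatures (such as Ed25519) instead. The engine name and key are placeholders.

```python
import hashlib
import hmac
import json


def _payload(header, text):
    """Canonical bytes covered by the tag: sorted-key JSON header, newline, text."""
    return json.dumps(header, sort_keys=True).encode("utf-8") + b"\n" + text.encode("utf-8")


def sign_message(text, engine, key):
    """Attach a header naming the generating engine plus an HMAC-SHA256 tag."""
    header = {"engine": engine}
    tag = hmac.new(key, _payload(header, text), hashlib.sha256).hexdigest()
    return {"header": header, "text": text, "sig": tag}


def verify_message(message, key):
    """True only if neither the header nor the text changed since signing."""
    expected = hmac.new(key, _payload(message["header"], message["text"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["sig"])


key = b"demo-secret-key"  # placeholder; a real key would live in a secrets store
msg = sign_message("Here is a summary of your order.", "example-model-v1", key)
print(verify_message(msg, key))
tampered = dict(msg, text="Here is a summary of your order!")
print(verify_message(tampered, key))
```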

To sum up, the future of the human AI chat game will probably become richer, more sophisticated, and more integrated with broader AI ecosystems. The underlying goal will remain unchanged: to foster deeper human skills for evaluating and interacting with digital content in an AI‑dominated world.

Building Your Own Human AI Chat Game: Platform Essentials

Creating a custom version of the human AI chat game demands a few foundational components: a backend to host the AI model, an interactive front‑end for users, and analytics to guide iteration. The first step involves choosing a conversational AI platform—open‑source models like GPT‑Neo or commercial APIs such as OpenAI’s GPT‑4. Pricing and latency should drive the decision based on expected traffic volume.

The next layer is a middleware service that captures user interactions, enriches them with metadata, and delivers them to the AI. This service handles rate limiting, logs structured context for later analysis, and implements security checks to prevent prompt injection or attempts to game the system. Applying best practices such as content moderation ensures a safe user experience.
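Rate limiting in such a middleware is often done with a token bucket, which allows short bursts while capping the sustained request rate. A minimal per-user sketch follows; the rate and capacity values and the `handle_request` helper are assumptions, and the injectable clock exists only to make the class easy to test.

```python
import time


class TokenBucket:
    """Per-user rate limiter: allows bursts up to `capacity` requests and
    refills at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec, capacity, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock          # injectable so tests can control time
        self.last = clock()

    def allow(self):
        """Spend one token if available; return False when the user must wait."""
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


buckets = {}  # user_id -> TokenBucket


def handle_request(user_id):
    """Hypothetical middleware hook: forward the request or ask the user to wait."""
    if user_id not in buckets:
        buckets[user_id] = TokenBucket(rate_per_sec=1.0, capacity=5)
    return "forwarded to model" if buckets[user_id].allow() else "429: slow down"
```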

On the front‑end, developers build a responsive UI that mimics real chat clients. This interface should display message timestamps, user avatars, and allow users to set preferences for difficulty or self‑testing mode. Incorporating visual cues—like subtle animations when “typing”—enhances immersion and helps players gauge response authenticity.

Analytics play a crucial role, tracking engagement metrics such as correct guess percentage, average round time, and player churn. By clustering player data, developers can identify personas that excel or struggle, guiding UI tweaks or customized tutorials. Integrating a dynamic scoring system motivates repeated play while providing clear feedback on skill improvement.
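The headline metrics can be computed from simple round logs. This sketch assumes each round is recorded as a dictionary with `correct` and `seconds` fields and defines churn as the share of last week’s players who did not return this week; both the record format and the churn definition are assumptions.

```python
from statistics import mean


def summarize(rounds, active_last_week, active_this_week):
    """Headline metrics from round logs.

    rounds: list of dicts with 'correct' (bool) and 'seconds' (float).
    active_*: sets of player IDs seen in each week.
    Churn here = share of last week's players who did not come back this week.
    """
    accuracy = sum(r["correct"] for r in rounds) / len(rounds) if rounds else 0.0
    avg_seconds = mean(r["seconds"] for r in rounds) if rounds else 0.0
    churned = active_last_week - active_this_week
    churn = len(churned) / len(active_last_week) if active_last_week else 0.0
    return {"accuracy": accuracy, "avg_round_seconds": avg_seconds, "churn": churn}


rounds = [
    {"correct": True, "seconds": 12.0},
    {"correct": False, "seconds": 20.0},
    {"correct": True, "seconds": 16.0},
]
print(summarize(rounds, {"ana", "ben", "cy"}, {"ana", "dee"}))
```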

Monetization can come from in-app purchases: rare skins for bots, “expert detection packs” that educate advanced players, or premium leaderboard access. An earn-for-invite program can also keep user acquisition costs low while growing the ecosystem organically.

Finally, security considerations—encryption of data at rest and in transit, distinct API keys for each user, and compliance with data‑protection regulations—are non‑negotiable. These protect both the users and the integrity of the game data, fostering a trustworthy design environment.

With a clear roadmap and a focus on user experience and analytics, anyone can create a human AI chat game that is not only fun but also shapes how people think about machine-generated text. It turns a simple idea into a scalable product with community potential.

Community and Ecosystem: Creating a Sustainable Player Base

Ultimately, any game thrives on a vibrant community. For the human AI chat game, equilibrium between competition, collaboration, and shared learning drives longevity. Integrating social features—such as group challenges, live tournaments, and friend‑match invites—can encourage player retention and word‑of‑mouth promotion.

Community moderators who maintain a safety net encourage constructive competition. Players can post annotated replies with heat-maps of detection cues, while moderators curate high-quality content to keep the ecosystem informative. Peer-reviewed “bot-labeling” challenges motivate participants to experiment with advanced linguistic details.

Gamification can go beyond simple scoring. Offering treasure-chest rewards for consistently correct identification, or unlockable “bot-personality filters,” adds depth. Additionally, community-driven content modes, in which players play through each other’s custom prompts, create a broader pool of varied language that benefits all participants.

From a developer standpoint, exposing the detection algorithm through an open-source API can foster research partnerships, while an SDK for other developers’ games can spread the brand. This strategy pushes the ecosystem toward cross-platform synergies, with multiple games sharing the same core detection engine.

In the final analysis, community building and ongoing engagement are essential. Gamified experiences are brilliant but fleeting; a sustainable community provides long-term relevance, replenishing the game’s value as a learning tool, social connector, and barometer of AI evolution.

Conclusion

The human AI chat game sits at an intersection of entertainment, education, and cultural reflection. By stepping into that arena, players enter a microcosm that showcases what modern language models can and cannot replicate. The game is both a mirror and a lens: it reflects current technological prowess while focusing our attention on the nuances that separate us from our digital counterparts.

Through countless rounds, attentive observation, and iterative practice, players hone critical skills: linguistic empathy, bias detection, and content authenticity assessment. These skills carry over beyond the game, equipping users to navigate a landscape brimming with AI conversations, bots, and deeply human‑oriented interactions.

What exactly is a human AI chat game?

A human AI chat game pits a player’s intuition against a set of responses, some written by a human, others crafted by an advanced language model. Players guess which is which, receiving feedback and a score. The format stresses linguistic observation and contextual awareness while entertaining participants.

Even a single round of the human AI chat game can become a vibrant study session: you analyze, reflect, and correct, thereby making AI more approachable. The moment you win, you also win the knowledge that human creativity and curiosity maintain relevance even when surrounded by machines. Therefore, the game’s ongoing promise lies in equipping generations with a critical lens for navigating an AI‑rich digital universe.
