AI Automation: Build LLM Apps for Enhanced Customer Experience in 2026

This post explains how to build LLM apps with AI automation to enhance customer experience.

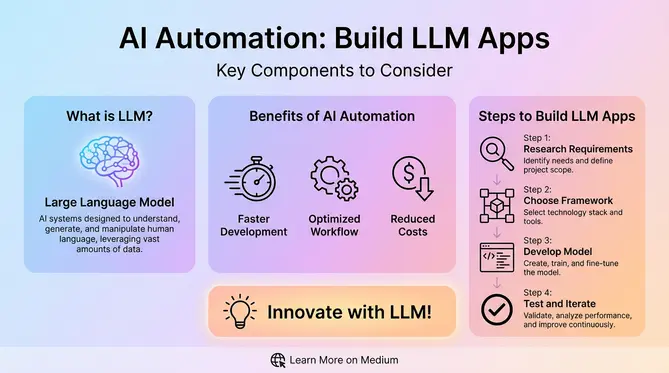

Understanding AI Automation and LLM Applications

Large Language Models (LLMs) have reshaped the field of artificial intelligence. Unlike traditional AI systems, which required extensive rule-based programming, LLMs can understand, generate, and analyze human language with a high degree of sophistication. Combined with automation rules, these models become the engine of intelligent applications that can significantly change business workflows, customer experience, and decision-making.

The Evolution of Language Models in Business Automation

Building LLM apps for AI automation marks a landmark shift in how organizations solve problems. Instead of handling processes manually, they can turn them into intelligent systems that self-optimize and understand natural language.

The first AI systems were based on decision trees and had to be explicitly programmed for every possible scenario. Machine learning made pattern recognition possible, but robust language understanding remained out of reach. The breakthrough came with transformer architectures and models such as GPT-3, in which language comprehension and generation appeared as “emergent capabilities”.

Today’s enterprise-grade LLMs approach human-level comprehension on many tasks and can process technical documentation, legal contracts, customer dialogues, and scientific literature. This makes them strong base models for automated business solutions.

Core Components of LLM-Powered Automation Systems

Including these models in automation frameworks leads to a new generation of applications that can:

- Interpret unstructured data at scale

- Make contextual decisions based on a semantic understanding

- Generate human-quality content and communications

- Automate complex workflows that involve multiple decision points

- Continuously learn from new data and interactions

Simply calling API endpoints is not enough to create enterprise-grade LLM applications. A high-performing system is built from several interconnected components.

| Component | Function |
|---|---|
| Model Layer | Selection of base models (GPT-4, Claude, Llama 2) based on cost, efficiency, and data residency requirements |
| Data Integration | Ingestion and processing of data in open and proprietary formats from diverse sources |
| Orchestration Framework | Frameworks such as LangChain or Semantic Kernel that support complex workflow management |
| Memory Systems | Short-term and long-term memory for context preservation |
| Security Wrapper | Data anonymization, role-based access controls, and audit logging |

Strategic Approaches to Building LLM Applications

AI automation can be a game-changer for organizations that want to build LLM apps. These organizations need an implementation strategy that suits their technical capabilities, budget, and use case. The choice between custom development and low-code platforms hinges on a few crucial differences:

Custom Development vs. Low-Code Platforms

Advantages of Custom Development:

- Unrestricted control over system design and features

- Ability to create highly specialized solutions

- Direct access to model fine-tuning features

- More options for implementing security

Advantages of Low-Code Platforms:

- Much faster deployment (weeks rather than months)

- Lower upfront development cost

- Quick integration with existing systems via pre-built connectors

- Simpler maintenance and updates

Technical Architecture Considerations

For development teams that build custom solutions, a layered architecture supports long-term scalability and maintainability. A contemporary LLM application generally includes the following components:

- Model Layer: Selection of base models (GPT-4, Claude, Llama 2) based on cost, efficiency, and data residency requirements

- Data Integration: Ingestion and processing of data in open and proprietary formats from diverse sources

- Orchestration Framework: Frameworks such as LangChain or Semantic Kernel that support complex workflow management

- Memory Systems: Short-term and long-term memory for context preservation

- Evaluation Infrastructure: Systems for automated testing and monitoring

- Security Wrapper: Data anonymization, role-based access controls, and audit logging
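As a rough illustration of how some of these layers might fit together, the sketch below wires a stubbed model layer, a short-term memory buffer, and a security wrapper with audit logging into one pipeline. All names here (`EchoModel`, `ShortTermMemory`, `SecureAssistant`) are hypothetical, the email pattern is a simplified stand-in for real anonymization, and the model returns a canned string instead of calling a real API.

```python
import re
from collections import deque
from dataclasses import dataclass, field
from typing import Protocol


class ModelLayer(Protocol):
    """Anything that turns a prompt into text (hosted API, local model, stub)."""
    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in model so the sketch runs without network access."""
    def complete(self, prompt: str) -> str:
        last_line = prompt.strip().splitlines()[-1]
        return f"(stub reply to: {last_line})"


@dataclass
class ShortTermMemory:
    """Keeps only the most recent turns for context preservation."""
    max_turns: int = 4
    turns: deque = field(default_factory=deque)

    def add(self, role: str, text: str) -> None:
        self.turns.append(f"{role}: {text}")
        while len(self.turns) > self.max_turns:
            self.turns.popleft()

    def render(self) -> str:
        return "\n".join(self.turns)


# Simplified email pattern, used here only to illustrate anonymization.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


@dataclass
class SecureAssistant:
    """Security wrapper: role check, anonymization, audit log, then model call."""
    model: ModelLayer
    memory: ShortTermMemory
    allowed_roles: frozenset = frozenset({"agent", "admin"})
    audit_log: list = field(default_factory=list)

    def ask(self, user_role: str, text: str) -> str:
        if user_role not in self.allowed_roles:
            self.audit_log.append(("denied", user_role))
            raise PermissionError(f"role {user_role!r} may not query the model")
        clean = EMAIL.sub("[EMAIL]", text)  # anonymize before anything is stored
        self.memory.add("user", clean)
        reply = self.model.complete(self.memory.render())
        self.memory.add("assistant", reply)
        self.audit_log.append(("ok", user_role))
        return reply


assistant = SecureAssistant(EchoModel(), ShortTermMemory())
print(assistant.ask("agent", "Customer jane@example.com asks about her invoice"))
```

Because the model sits behind a small `Protocol`, the stub could later be swapped for a hosted or self-hosted model without touching the memory or security code.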

Building Custom LLM Business Applications

Transformative LLM applications require careful planning and execution. Following a structured methodology, such as the step-by-step process below, improves the odds of project success.

Step-by-Step Development Process

- Problem Identification: Identify use cases with the highest impact and obvious ROI potential

- Data Preparation: Gather, clean, and preprocess the necessary datasets

- Proof of Concept: Use RAG techniques to create a minimum viable prototype

- Evaluation Framework: Establish measurable success metrics

- Production Architecture: Plan out a scalable infrastructure that includes failover options

- Deployment Strategy: Roll out gradually, with monitoring systems in place

- Feedback Loop: Put in place mechanisms for continuous improvement
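The proof-of-concept step can be sketched in a few lines. The example below is a toy version of retrieval-augmented generation (RAG): it ranks a small in-memory document set against the question and pastes the best match into the prompt. The documents, function names, and prompt wording are all made up for illustration, and bag-of-words cosine similarity stands in for the embedding model and vector database a real prototype would likely use. No model is called; the sketch stops at building the grounded prompt.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; a real prototype would load company documents.
DOCS = {
    "refunds": "Refunds are issued within 14 days of a return request.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "Hardware is covered by a 2 year limited warranty.",
}


def vectorize(text: str) -> Counter:
    """Bag-of-words vector; a real system would use an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the ids of the k documents most similar to the question."""
    q = vectorize(question)
    ranked = sorted(DOCS, key=lambda d: cosine(q, vectorize(DOCS[d])), reverse=True)
    return ranked[:k]


def build_prompt(question: str) -> str:
    """Ground the model by pasting retrieved passages above the question."""
    context = "\n".join(f"[{d}] {DOCS[d]}" for d in retrieve(question))
    return (
        "Answer using only the context. Say 'I don't know' if it is not covered.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


print(build_prompt("How long does shipping take?"))
```

Keeping retrieval and prompt assembly as separate functions makes it easier to measure each one on its own once an evaluation framework is in place.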

Enterprise Implementation Challenges

Operating LLM applications at scale is not easy for large enterprises. They often encounter the following hurdles:

| Challenge | Impact | Mitigation Strategies |
|---|---|---|
| Data Silos | Incomplete context leads to inaccurate outputs | Implement unified data layer with fine-grained access controls |
| Hallucinations | Confidence in incorrect information | Combine RAG with cross-verification agents |
| Compliance Risks | Potential regulatory violations | Built-in PII detection and redaction systems |
| Scalability Limits | Performance degradation under load | Distributed architecture with auto-scaling |

Real-World Applications Across Industries

Strategies for building LLM apps with AI automation are driving substantial operational changes across sectors, from healthcare to manufacturing, finance, and beyond.

Customer Experience Enhancement

Modern LLM applications achieve step-change improvements in customer service operations, for example:

- Intelligent ticket routing systems applying semantic analysis

- Real-time customer sentiment detection during voice calls

- Automated resolution of common inquiries through conversational AI

- Personalized product recommendations based on unstructured customer feedback

- Automated summarization of customer interactions

One large telecommunications company that implemented an LLM-powered support system cut average handling time by 40% while raising first-call resolution rates. Integrated with the company's CRM and billing systems, the tool gives agents real-time information so they can grasp the context of a customer conversation faster.

Healthcare Documentation Automation

Medical providers are using LLM applications to automate tedious clinical documentation, a promising trend in healthcare. For example:

- Voice-to-text transcription of doctor-patient conversations

- Automated generation of SOAP notes and medical codes

- Intelligent summarization of patient histories

- Cross-referencing symptoms against the latest medical research

- Automated insurance pre-authorization documentation

One top-tier hospital system integrated an LLM application with its EHR system and cut documentation time per patient by 55%. It maintains HIPAA compliance by deploying the solution on-premises and using sophisticated data anonymization methods.

Scaling and Optimizing LLM Applications

Scaling up from initial prototypes to full enterprise-level deployments of LLM applications requires sophisticated optimization strategies. Some of the advanced techniques for enhancing LLM application efficiency are:

Performance Optimization Strategies

- Prompt Compression: Techniques that reduce token count while preserving the original meaning

- Distributed Inference: Splitting a model or a batch of requests across several GPUs/TPUs so the work runs in parallel

- Model Quantization: Lowering the numerical precision of model weights to speed up computation

- Caching Mechanisms: Storing responses to frequently repeated requests to reduce computational effort

- Adaptive Routing: Dynamically choosing the most suitable model for each user query

Cost Management Approaches

| Cost Factor | Management Strategy |
|---|---|
| API Call Volume | Implement request batching and throttling |
| Token Usage | Optimize prompts and implement context trimming |
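Context trimming and request batching can be sketched as follows. This is a simplified illustration, not a production implementation: token counts are approximated by whitespace-separated words (a real system would use the model's tokenizer), and the function names are hypothetical.

```python
from typing import Iterator


def approx_tokens(text: str) -> int:
    """Crude estimate: one token per whitespace-separated word (an assumption)."""
    return len(text.split())


def trim_context(system: str, history: list[str], budget: int) -> list[str]:
    """Keep the system prompt plus as many of the newest messages as fit."""
    remaining = budget - approx_tokens(system)
    kept: list[str] = []
    for message in reversed(history):  # walk from newest to oldest
        cost = approx_tokens(message)
        if cost > remaining:
            break
        kept.append(message)
        remaining -= cost
    return [system] + kept[::-1]  # restore chronological order


def batched(items: list[str], size: int) -> Iterator[list[str]]:
    """Yield fixed-size groups so several requests can be sent together."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


history = ["first question here", "a long answer with many words in it", "follow up"]
print(trim_context("You are a support bot.", history, budget=16))
print(list(batched(["a", "b", "c", "d", "e"], size=2)))
```

Trimming from the oldest end keeps the most recent turns, which usually matter most for the next reply. Summarizing the dropped turns is a common refinement.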

Future Trends in LLM Application Development

The landscape of LLM app development for AI automation continues to evolve rapidly, with several emerging trends shaping future developments.

Multimodal Agent Systems

Next-generation LLM applications will be able to integrate several sensory inputs and capabilities, such as:

- Vision processing for document analysis and image understanding

- Audio processing for emotion detection and voice interaction

- Multimodal reasoning combining text, images, and data

- Robotic process automation integration for physical actions

- 3D environment interaction through VR/AR interfaces

Self-Improving AI Systems

New developments in LLM application design also aim at fully autonomous performance improvement, such as:

- Automated prompt optimization via reinforcement learning mechanisms

- Dynamic knowledge base updating from verified resources

- Self-debugging using reflection patterns

- Automatic fine-tuning based on user feedback signals

- Performance benchmarking against changing standards

Implementation Roadmap for Enterprises

Migrating from existing systems to LLM-driven automation cannot be rushed and calls for careful planning.

90-Day Adoption Plan

| Phase | Objectives |
|---|---|
| Weeks 1-4: Discovery | Assess current processes, identify use cases |
| Weeks 5-8: Prototyping | Develop initial solutions for top use cases |
| Weeks 9-12: Pilot | Deploy controlled pilot with metrics tracking |

A well-planned scaling strategy keeps enterprise LLM applications sustainable over time. Companies should create a Center of Excellence to share insights, harmonize practices, and speed up adoption across business units. Building enterprise-grade LLM applications also involves several cost components that the organization needs to account for in budget planning.

Frequently Asked Questions (FAQs)

What are the primary cost factors when building custom LLM applications?

Foundation model usage is the main variable cost: API-based models bill per token (for example, $0.03-$0.12 per 1K input/output tokens, though prices vary by model and change frequently). Costs rise quickly at high volume. A customer service chatbot that handles 10,000 conversations a day can incur model costs of $300-500 per day.

Infrastructure is the next largest category and includes vector database hosting (from $200 to $2,000 per month depending on data size), orchestration tooling, and integration platforms.
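The arithmetic behind such an estimate is easy to reproduce. The sketch below assumes 1,000 to 1,500 tokens per conversation and a flat $0.03 per 1K tokens; both figures are illustrative assumptions, and real bills usually price input and output tokens separately.

```python
def daily_model_cost(conversations: int, tokens_per_conversation: int,
                     price_per_1k_tokens: float) -> float:
    """Daily spend in dollars for a model billed per token."""
    return conversations * tokens_per_conversation / 1000 * price_per_1k_tokens


# Assumed workload: 10,000 conversations a day at $0.03 per 1K tokens.
low = daily_model_cost(10_000, 1_000, 0.03)
high = daily_model_cost(10_000, 1_500, 0.03)
print(f"${low:,.0f} to ${high:,.0f} per day")
```

Under these assumptions the estimate comes out at roughly $300 to $450 per day, in line with the range quoted above. Doubling the average conversation length doubles the bill, which is why token usage is worth monitoring from the first pilot.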

How can organizations ensure the security of sensitive data in LLM applications?

Protecting sensitive information in LLM applications is a hard security problem that calls for several layers of defense. At the data ingestion level, set up automated PII (Personally Identifiable Information) detection and redaction tools that scan all incoming data streams.

For organizations with very strict compliance requirements, private cloud deployments with dedicated model instances prevent data commingling. Vendors like AWS and Google Cloud offer infrastructure that can support HIPAA-compliant AI deployments.
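A minimal sketch of ingestion-time redaction might look like the following. The three regular expressions are simplified, US-centric examples chosen for illustration; they will miss many real-world formats, and production systems typically pair rules like these with a trained entity recognizer.

```python
import re

# Illustrative patterns only; not exhaustive and not locale-aware.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a placeholder and report counts per category."""
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


clean, found = redact("Call 555-123-4567 or mail ann@example.org, SSN 123-45-6789.")
print(clean)
print(found)
```

Returning the per-category counts alongside the cleaned text gives the audit log something to record without storing the sensitive values themselves.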

How does fine-tuning compare to prompt engineering for specialized applications?

The choice between fine-tuning and prompt engineering should depend on the application's requirements and the available resources. Prompt engineering can usually be implemented much faster (days rather than weeks) and has lower upfront costs, which makes it better suited to prototyping and to applications that need flexibility across a wide range of tasks. However, it is limited by the context window, and per-query cost rises when prompts are complex.

Techniques such as chain-of-thought prompting and constitutional-AI-style self-critique can improve results significantly, but they require considerable expertise. Fine-tuning adapts the model weights to your application, which gives better results for domain-specific language and for cases where the output format is always the same.

What are the best practices for maintaining and updating LLM applications?

Put detailed monitoring in place for both technical and content quality metrics. Technical metrics include latency and error rates; content quality metrics include hallucination rates and relevance scores. To detect model drift, set up automatic alerting that tracks key performance indicators over time.

Maintain a prompt registry that versions and records every prompt so that a quick rollback is possible when needed. For knowledge-based applications, add automated data freshness checks that flag outdated sources and trigger update workflows.
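One simple way to approximate drift alerting is a rolling average compared against a baseline. The sketch below is an assumption-laden illustration: the `DriftMonitor` class, the window size, and the threshold values are hypothetical, and real deployments often use statistical tests or dedicated monitoring tools instead.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Alert when the recent average of a metric falls below a baseline band."""

    def __init__(self, baseline: float, tolerance: float, window: int = 5):
        self.baseline = baseline
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)  # oldest values drop off automatically

    def record(self, value: float) -> bool:
        """Store one observation; return True if an alert should fire."""
        self.recent.append(value)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough data yet
        return mean(self.recent) < self.baseline - self.tolerance


# Example: relevance scores slipping below a 0.90 baseline.
monitor = DriftMonitor(baseline=0.90, tolerance=0.05, window=3)
for score in [0.91, 0.90, 0.89, 0.70]:
    print(score, monitor.record(score))
```

Averaging over a window keeps a single bad response from paging anyone, while a sustained drop in relevance still triggers an alert.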
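A prompt registry with rollback can start out very small. The in-memory sketch below is a hypothetical design (the `PromptRegistry` class and its method names are not from any particular library); a real one would persist versions to a database or version control and record who changed what.

```python
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Append-only version history per prompt name, with rollback."""
    _versions: dict = field(default_factory=dict)  # name -> list of prompt texts
    _active: dict = field(default_factory=dict)    # name -> active version number

    def register(self, name: str, text: str) -> int:
        """Store a new version, make it active, and return its 1-based number."""
        history = self._versions.setdefault(name, [])
        history.append(text)
        self._active[name] = len(history)
        return len(history)

    def get(self, name: str) -> str:
        """Return the text of the currently active version."""
        return self._versions[name][self._active[name] - 1]

    def rollback(self, name: str, version: int) -> None:
        """Point the name back at an earlier version; history is never deleted."""
        if not 1 <= version <= len(self._versions[name]):
            raise ValueError(f"unknown version {version} for {name!r}")
        self._active[name] = version


registry = PromptRegistry()
registry.register("greeting", "Greet the customer politely.")
registry.register("greeting", "Greet the customer and offer help with billing.")
registry.rollback("greeting", 1)
print(registry.get("greeting"))
```

Because versions are only ever appended, a rollback is just a pointer change, which keeps it fast and leaves a complete history for audits.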
