3D Camera Calibration from Photo AI: Mastering Single-Image Precision in 2026

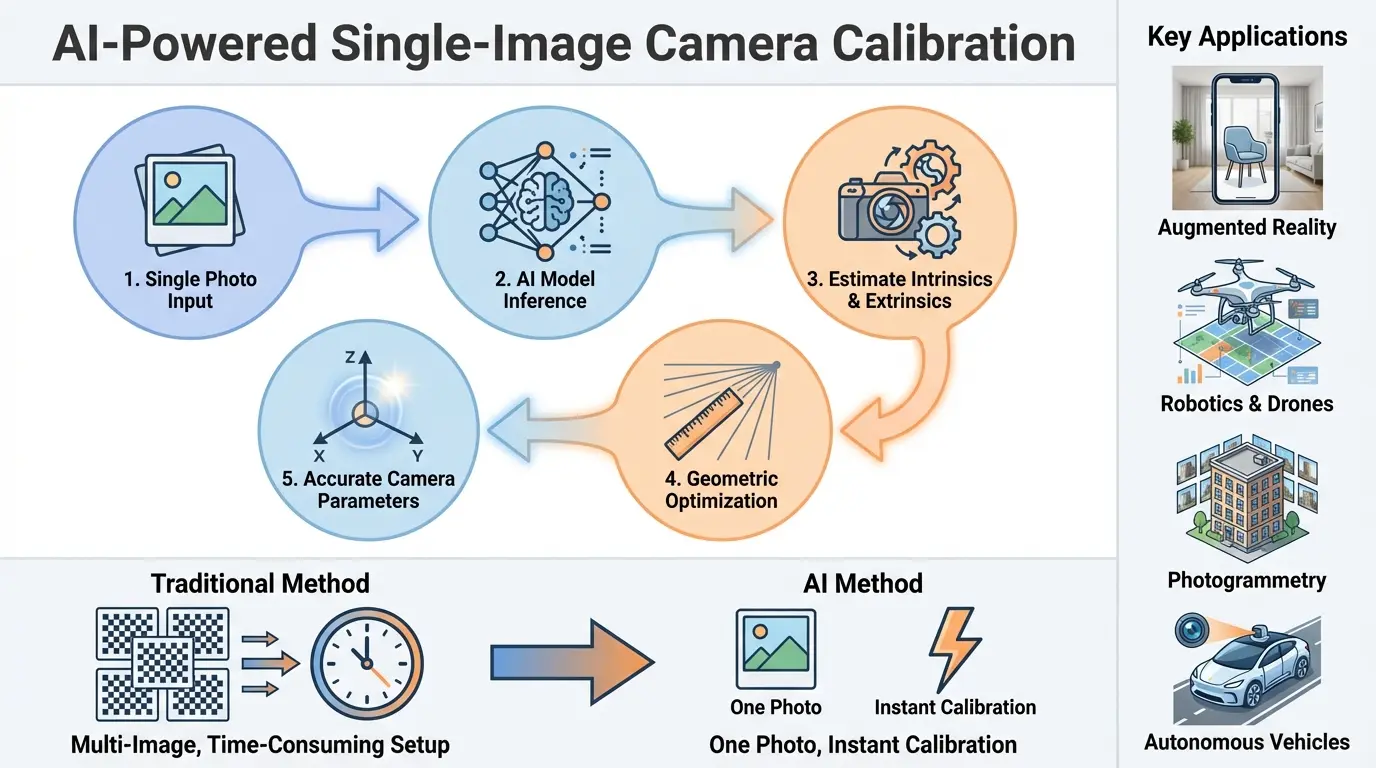

Learn how AI can perform 3D camera calibration from a single photo. This guide explains the process, benefits, and tools for extracting 3D data from 2D images.

The Core Concepts Behind 3D Camera Calibration

Camera calibration determines a camera’s internal characteristics (its intrinsic parameters, such as focal length and lens distortion) and its position and orientation in space (its extrinsic parameters). AI-based calibration estimates these values by analyzing the visual content of photographs, which lets machines recover spatial relationships from flat images through pattern recognition and geometric analysis. For roughly 50 years, technicians needed specialized equipment and controlled environments to perform calibration; contemporary methods remove many of these requirements.

When light passes through a camera lens, it creates distortions that affect how three-dimensional objects appear in two-dimensional images. Previous calibration approaches involved complex mathematical models to compensate for these distortions, requiring hours of manual measurements. Modern techniques use neural networks that automatically identify distortion patterns and calculate spatial relationships, achieving accurate results without physical measurement tools. These systems can analyze architectural elements, natural features, or everyday objects within photographs to establish precise calibration parameters.
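To make the distortion idea concrete, here is a minimal sketch of a two-coefficient radial distortion model applied to normalized image coordinates, together with a simple iterative inverse. The function names and the coefficient values in the usage note are illustrative assumptions, not taken from any particular tool; real lenses may also need tangential or higher-order terms.

```python
def distort(x, y, k1, k2):
    """Apply two-term radial distortion to normalized image coordinates."""
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * scale, y * scale


def undistort(xd, yd, k1, k2, iterations=20):
    """Invert the radial model by fixed-point iteration.

    The polynomial model has no simple closed-form inverse, so we
    repeatedly divide the distorted point by the scale evaluated at
    the current estimate. This converges for mild distortion.
    """
    x, y = xd, yd  # start from the distorted point
    for _ in range(iterations):
        r2 = x * x + y * y
        scale = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / scale, yd / scale
    return x, y
```

With a negative `k1` (for example `distort(0.3, -0.2, -0.2, 0.05)`), points move toward the image center, which is the barrel distortion typical of wide-angle lenses; `undistort` applied to the result should recover the original point to within numerical tolerance.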

The Development of Camera Calibration Methods

From the 1970s onward, professionals measured camera properties with precisely made calibration targets under strictly controlled lighting conditions. These methods required multiple photographs from various angles and complex mathematical computations to determine calibration parameters. Around 2000, methods based on planar patterns such as printed checkerboards simplified the process somewhat, but they still required physical calibration objects and controlled environments.

Between 2010 and 2020, researchers began combining machine learning with traditional photogrammetric principles, creating hybrid systems that could estimate some calibration parameters from single images. The real transformation came when deep learning architectures demonstrated the capability to predict complete calibration parameters from ordinary photographs. Contemporary systems use neural networks trained on millions of calibrated images to detect edges, analyze line straightness, and calculate distortion coefficients automatically, without specialized targets.

| Calibration Era | Time Period | Key Characteristics |
|---|---|---|
| Measurement-Based | 1970s-1980s | Physical rulers, optical benches |
| Multi-Image Analysis | 1980s-2000s | Triangulation, feature matching |
| Pattern Recognition | 2000-2020 | Checkerboards, circle grids |
| Neural Network | 2018-Present | Single-image analysis, prediction models |

Mathematical Foundations in Practice

Calibration establishes relationships between three-dimensional points and their two-dimensional projections through mathematical models. The fundamental calculations involve:

- Focal length calculations determining field of view

- Optical center identification within image frames

- Distortion coefficient measurement for lens correction

- Spatial orientation calculations using vanishing points
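The first two items can be illustrated with the standard pinhole model. The sketch below assumes an ideal, distortion-free camera with focal lengths and optical center given in pixels; the function names and the numeric values in the usage note are hypothetical.

```python
import math


def horizontal_fov_degrees(focal_px, image_width_px):
    """Field of view implied by a focal length expressed in pixels."""
    return math.degrees(2.0 * math.atan(image_width_px / (2.0 * focal_px)))


def project(point_xyz, fx, fy, cx, cy):
    """Project a 3D point in camera coordinates to pixel coordinates.

    fx, fy: focal lengths in pixels; cx, cy: optical center in pixels.
    """
    X, Y, Z = point_xyz
    if Z <= 0:
        raise ValueError("point must be in front of the camera (Z > 0)")
    return fx * X / Z + cx, fy * Y / Z + cy
```

For example, a 1920-pixel-wide image with a 960-pixel focal length has a 90 degree horizontal field of view, and a point on the optical axis, such as `(0, 0, 5)`, projects exactly onto the optical center `(cx, cy)`.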

Advanced implementations employ convolutional layers that detect edges and line segments, spatial transformers that analyze geometric relationships, and regression networks that output calibration parameters. In effect, these systems map visual patterns to numeric camera parameters through successive layers of learned processing.

The AI Transformation in Camera Calibration

The integration of artificial intelligence removes traditional bottlenecks in photogrammetric workflows by enabling calibration during normal operational use. Field technicians often no longer need pre-calibrated equipment, since modern systems adjust parameters automatically during image capture. This advance has changed aerial surveying, architectural documentation, and factory automation, where immediate measurements are critical.

Current approaches combine multiple neural network architectures to address different aspects of calibration. Feature extraction networks first identify structural elements within images, geometric reasoning layers analyze spatial relationships, optimization modules refine parameters, and validation components estimate measurement confidence levels. Together, these components can approach the accuracy of laboratory calibration using ordinary field equipment.

Performance Comparison: Traditional vs AI Systems

| Performance Factor | Conventional Methods | AI-Driven Approach |
|---|---|---|
| Input Requirements | Multiple target images | Single operation photo |
| Equipment Needs | Specialized calibration targets | Standard cameras/devices |
| Setup Duration | 30+ minutes preparation | Instant processing |
| Measurement Consistency | Laboratory-dependent | Field-adaptive results |
| Diverse Condition Handling | Controlled environments only | Variable lighting/weather tolerance |

The diagram below illustrates how neural networks extract geometric information from ordinary scenes:

Technical Implementation of Modern Calibration Tools

Development teams implement multi-stage architectures that progressively enhance calibration accuracy through sequential processing phases. Modular systems first perform global scene analysis, then local feature examination, followed by geometric verification, and final parameter optimization. This approach maintains computational efficiency while ensuring robust performance across diverse imaging conditions.

Commercial solutions incorporate proprietary technologies that automate the entire calibration pipeline:

- Scene assessment for viable geometric features

- Distortion estimation through curve analysis

- Focal length prediction using depth cues

- Continuous parameter optimization loops

- Measurement uncertainty quantification
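One classical way to approach "distortion estimation through curve analysis" is the plumb-line idea: edges that are straight in the scene should be straight in the corrected image. The sketch below is an illustration under stated assumptions, not the method of any commercial product. It assumes a one-parameter division model, normalized coordinates centered on the optical center, and a simple grid search; all function names are hypothetical.

```python
import math


def undistort_division(xd, yd, lam):
    """Division model: undistorted = distorted / (1 + lam * r_d^2)."""
    scale = 1.0 + lam * (xd * xd + yd * yd)
    return xd / scale, yd / scale


def distort_division(xu, yu, lam):
    """Inverse mapping, used here only to synthesize test curves."""
    ru = math.hypot(xu, yu)
    if ru == 0.0 or lam == 0.0:
        return xu, yu
    rd = (1.0 - math.sqrt(1.0 - 4.0 * lam * ru * ru)) / (2.0 * lam * ru)
    return xu * rd / ru, yu * rd / ru


def straightness_residual(points):
    """Smallest/largest eigenvalue ratio of the 2x2 covariance (0 = straight)."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / n
    syy = sum((p[1] - my) ** 2 for p in points) / n
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    half_trace = 0.5 * (sxx + syy)
    root = math.sqrt((0.5 * (sxx - syy)) ** 2 + sxy ** 2)
    return (half_trace - root) / (half_trace + root)


def estimate_lambda(curves, candidates):
    """Pick the candidate that makes all traced curves straightest."""
    def cost(lam):
        return sum(
            straightness_residual([undistort_division(x, y, lam) for x, y in curve])
            for curve in curves
        )
    return min(candidates, key=cost)


# Synthetic check: two straight edges distorted with a known coefficient.
TRUE_LAMBDA = -0.2
edge_h = [distort_division(-0.6 + 0.1 * i, 0.4, TRUE_LAMBDA) for i in range(13)]
edge_v = [distort_division(-0.3, -0.5 + 0.1 * i, TRUE_LAMBDA) for i in range(11)]
candidates = [i / 1000.0 for i in range(-500, 501)]
estimated = estimate_lambda([edge_h, edge_v], candidates)
```

On this noise-free synthetic data the search should land on the true coefficient. With real edge detections, a robust cost and a finer optimizer than a grid search would likely be needed.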

Practical Application Workflow

Field implementation follows these operational steps:

- Image Selection
  - Select photos with clear structural elements and straight lines
  - Avoid images with excessive blur or poor contrast
  - Prioritize scenes with orthogonal angles and perspective depth

- Feature Identification
  - Automated edge detection for line segment analysis
  - Vanishing point calculation through line convergence
  - Natural pattern recognition for texture analysis

- Neural Processing
  - Deep network generates initial calibration estimates
  - Geometric constraints refine distortion parameters
  - Multi-layer optimizations minimize projection errors

- Output Verification
  - Reprojection error analysis
  - Parameter confidence scoring
  - Visualization of calibration effects on source image
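The vanishing point step in this workflow has a compact geometric form. As a minimal sketch, assuming square pixels, zero skew, a known optical center, and two vanishing points that come from perpendicular scene directions, the focal length follows from the relation (v1 - c) · (v2 - c) = -f². The helper names below are illustrative.

```python
import math


def cross(a, b):
    """Cross product of two 3-vectors (used for homogeneous geometry)."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def line_through(p, q):
    """Homogeneous line through two pixel coordinates."""
    return cross((p[0], p[1], 1.0), (q[0], q[1], 1.0))


def vanishing_point(seg_a, seg_b):
    """Intersection of the lines that extend two image segments."""
    x, y, w = cross(line_through(*seg_a), line_through(*seg_b))
    if abs(w) < 1e-12:
        raise ValueError("segments are parallel in the image")
    return x / w, y / w


def focal_from_orthogonal_vps(vp1, vp2, center):
    """Focal length in pixels from two vanishing points of orthogonal directions."""
    cx, cy = center
    dot = (vp1[0] - cx) * (vp2[0] - cx) + (vp1[1] - cy) * (vp2[1] - cy)
    if dot >= 0:
        raise ValueError("vanishing points are inconsistent with orthogonal directions")
    return math.sqrt(-dot)
```

In practice a vanishing point would be estimated from many detected segments with a robust fit rather than from a single pair, and the resulting focal length would serve as an initial estimate for later refinement.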

Deployment Challenges and Solutions

Real-world implementation faces several technical hurdles:

- Hardware Limitations
  - Mobile deployment requires model quantization
  - Edge computing needs efficient neural architectures

- Environmental Variability
  - Dynamic lighting compensation algorithms
  - Weather effect mitigation through synthetic training

- Camera Diversity
  - Multi-sensor calibration databases
  - Lens-specific distortion profiles
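As a rough illustration of what model quantization involves, the sketch below applies symmetric per-tensor 8-bit quantization to a list of weights. This is a simplified assumption about the general technique; mobile frameworks implement their own schemes (per-channel scales, calibration data, and so on), and the function names here are hypothetical.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantized]
```

Each weight is then stored in one byte instead of four, at the cost of a rounding error of at most half the scale per value. Whether that error is acceptable for calibration outputs would need to be checked against the application's tolerance.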

Industry Applications of AI Calibration

From construction sites to medical facilities, automated calibration technologies enable new capabilities across sectors. The capacity to extract precise 3D data from ordinary photographs transforms workflows in numerous fields while reducing equipment costs and technical barriers.

Augmented Reality Implementation

Retail applications use instant calibration to place virtual furniture in customers’ homes through smartphone cameras. Medical AR systems use calibration for precise surgical navigation overlays that adjust to changing viewpoints. By adjusting parameters in real time as the device moves, these systems avoid manual setup; surgical applications in particular target millimeter-level accuracy.

Autonomous Vehicle Navigation

Self-driving systems maintain continuous calibration while operating by analyzing their surroundings. Driver assistance systems such as Tesla’s are reported to compensate for temperature-induced sensor drift and minor impacts by constantly monitoring lane markings and roadside features. This reduces the need for workshop recalibration while improving safety through real-time adjustments.

Industrial Metrology Advancements

Factory robots now perform precision alignment using camera systems that self-calibrate between production runs. Construction drones generate survey-grade maps without pre-flight calibration through continuous aerial image analysis. These applications reduce setup time while increasing measurement frequency for quality control processes.

Future Trajectory of Calibration Technologies

Emerging research directions promise to further reduce calibration complexity while expanding application possibilities. Integration with complementary technologies will create seamless measurement systems requiring minimal human intervention.

Emerging Technical Innovations

Several frontier technologies are converging with calibration systems:

- Generative Models
  - Synthetic training data generation for rare scenarios
  - Distortion correction through image regeneration

- Quantum Computing
  - Optimization of complex calibration parameters
  - Real-time analysis of multi-camera arrays

- Neuromorphic Hardware
  - Low-power continuous calibration chipsets
  - Event-based cameras for dynamic calibration

Societal Implications and Ethics

As calibration technologies proliferate, important considerations emerge:

- Privacy protections against detailed environmental reconstruction

- Regulatory frameworks for measurement-critical applications

- Security protocols preventing calibration-based spoofing attacks

- Algorithmic bias mitigation across global environmental diversity

Industry leaders have established ethical guidelines through consortia like the Imaging Technology Standards Board to ensure responsible development as these powerful technologies evolve.

Implementation Pathways for Developers

Integration options vary from open-source libraries to enterprise-grade solutions depending on application requirements and performance needs. Developers can choose from multiple implementation approaches balancing control, accuracy, and computational demands.

Open-Source Resources

The open-source community provides foundational tools:

- OpenCV’s calibration modules with deep learning extensions

- PyTorch-based geometric vision libraries

- TensorFlow implementations for mobile deployment

These resources enable customization for specific camera types or operational environments through accessible APIs and extensive documentation.

Commercial Platform Capabilities

| Solution | Key Features | Industry Focus |
|---|---|---|
| CalibraPro Enterprise | Cloud API, multi-camera support | Robotics, automation |
| VisionCalib Mobile SDK | Smartphone optimization | Consumer applications |
| GeoCalibrate Suite | Geospatial certification | Surveying, mapping |

Forward-Looking Perspectives

The convergence of artificial intelligence with photogrammetric principles is likely to make manual camera calibration unnecessary for most applications within this decade. Emerging innovations suggest three key development vectors:

- Embedded calibration processors in camera hardware

- Generative AI reconstructing scenes from distorted inputs

- Integrated calibration within NeRF reconstruction pipelines

These advancements will create measurement systems that continuously self-diagnose and adjust while operating in dynamic environments, enabling new applications unimagined with traditional calibration approaches.

Frequently Asked Questions (FAQs)

Can AI calibration replace traditional methods entirely?

For most commercial and industrial applications, AI-driven calibration already delivers sufficient accuracy while offering significant operational advantages. High-precision scientific applications still require traditional methods for traceable certifications, but even these domains are adopting hybrid approaches that boost efficiency. The complete replacement timeline depends on measurement tolerance requirements and the establishment of standardized validation protocols for AI-generated results.

What camera types work with modern calibration tools?

Effective calibration has been demonstrated across:

- Smartphone cameras including recent multi-lens designs

- Industrial inspection cameras with specialized optics

- Aerial mapping cameras on drones and aircraft

- Medical imaging devices with proper adaptation

Specialized systems requiring specific approaches include microscope imaging stacks, underwater housings with dome ports, and panoramic camera arrays with complex overlaps.

How do environmental factors impact calibration accuracy?

Lighting conditions, atmospheric effects, and physical obstructions affect results through:

- Low light reducing feature detection reliability

- Atmospheric haze diminishing contrast at distance

- Partial obstructions disrupting geometric patterns

- Reflective surfaces creating false features

Advanced systems mitigate these factors through multi-spectral analysis, computational photography techniques, and physics-based image processing models trained on diverse environmental data.

What security measures protect calibration systems?

Enterprise implementations incorporate multiple safeguards:

- Secure data transmission with end-to-end encryption

- Authentication protocols for calibration input sources

- Continuous vulnerability assessments

- Access control for parameter modification

Regulatory frameworks now emerging will establish certification requirements for safety-critical applications like autonomous vehicles and medical navigation systems.

How should businesses evaluate calibration solutions?

| Evaluation Criteria | Performance Indicators |
|---|---|
| Accuracy Validation | Mean reprojection error, batch consistency |
| Operational Efficiency | Processing speed, hardware requirements |
| Environmental Robustness | Performance across lighting/weather conditions |
| Scalability | Multi-camera handling, distributed processing |
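Mean reprojection error, the first accuracy indicator in the table, can be checked independently of any vendor tooling. The sketch below assumes reference 3D points already expressed in camera coordinates, an ideal pinhole model without distortion, and hypothetical function names. It measures the average pixel distance between where the calibrated model predicts each point and where it was actually observed.

```python
import math


def project(point_xyz, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame 3D point to pixels."""
    X, Y, Z = point_xyz
    return fx * X / Z + cx, fy * Y / Z + cy


def mean_reprojection_error(points_3d, observed_px, fx, fy, cx, cy):
    """Average Euclidean pixel distance between predicted and observed points."""
    if len(points_3d) != len(observed_px) or not points_3d:
        raise ValueError("need equally sized, non-empty point lists")
    total = 0.0
    for point, (u_obs, v_obs) in zip(points_3d, observed_px):
        u, v = project(point, fx, fy, cx, cy)
        total += math.hypot(u - u_obs, v - v_obs)
    return total / len(points_3d)
```

When comparing solutions, running the same reference points through each candidate's estimated parameters gives a like-for-like number; lower values indicate that the estimated parameters explain the observations better.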
